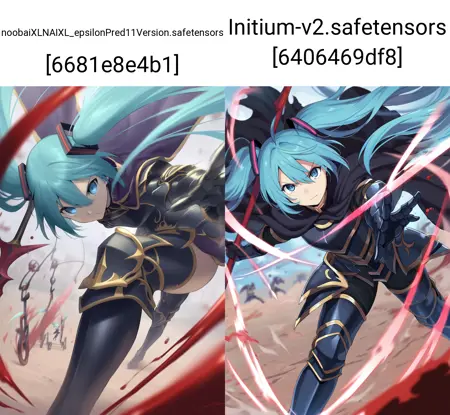

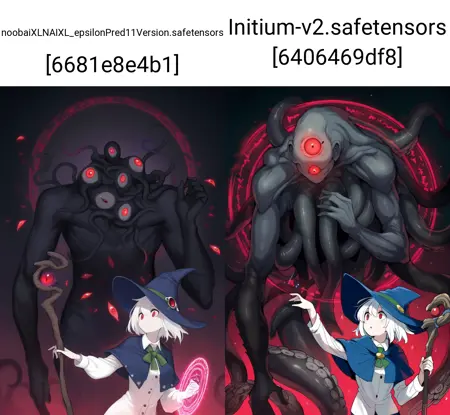

Initium (SDXL, IllustriousXL): What is Initium?

Built on NoobAI v1.1, Initium is designed to help "fix" some of the remaining issues in NoobAI 1.1. It is meant as a model for creating your own merges, and is ideally used as a training base for merges that have it in their shared heritage.

Features:

Custom Trained Text Encoders: Incorporates unique CLIP/TENC training, with the U-Net aligned to them.

Dataset: Trained on a ~220k image dataset (lucereron + Initium v1 dataset + new data). The dataset wasn't solely focused on 1girl, so the model will try to produce different scenery, abstract, and no humans images, and should be a bit less biased towards girls.

This list of artists was trained. Remember to use escape characters, as in sen \(astronomy\). Samples for a lot of artists can be seen here: https://files.catbox.moe/9lglyd.png, https://files.catbox.moe/4earj9.png, https://files.catbox.moe/b5egkz.png, https://files.catbox.moe/timjci.png, https://files.catbox.moe/8jni63.png, https://files.catbox.moe/c0zd98.png, https://files.catbox.moe/gw1v45.png, https://files.catbox.moe/9lyidu.png

A v3 will be made down the line in an effort to remove most of the signatures present.
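The escaping rule for artist tags can be sketched in Python. This is a minimal illustration, not part of the model or any UI: the helper name `escape_tag` is hypothetical, and it assumes your frontend treats unescaped parentheses as emphasis syntax, which is why tags like sen \(astronomy\) need backslashes.

```python
import re


def escape_tag(tag: str) -> str:
    """Backslash-escape parentheses that are not already escaped.

    Hypothetical helper: assumes the prompt parser reads bare ( ) as
    emphasis markers, so literal parentheses in a tag must be escaped.
    """
    # (?<!\\) skips parentheses that already have a backslash before them.
    return re.sub(r"(?<!\\)([()])", r"\\\1", tag)


if __name__ == "__main__":
    print(escape_tag("sen (astronomy)"))
```

Running the helper on a tag that is already escaped leaves it unchanged, so it is safe to apply to a whole artist list.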

LoRA: LoRAs trained on Illustrious 0.1 might not work because of the custom TENCs. Go with LoRAs trained on NoobAI 1.0/1.1, or ones trained directly on this model.

Tag-based Loss Scaling: An imitation of NAI v3's implementation to avoid overfitting. When tags are too present in the dataset, their loss is reduced; conversely, tags that barely have a presence have their loss increased.
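The idea of scaling loss by tag frequency can be sketched as follows. This is only an illustration under stated assumptions, not the formula used for Initium or by NAI v3: the median reference count, the exponent `alpha`, the clamp range, and the function names `tag_weights` and `sample_loss_scale` are all hypothetical choices made for the example.

```python
from collections import Counter
from statistics import median


def tag_weights(captions, alpha=0.5, lo=0.5, hi=2.0):
    """Map each tag to a loss multiplier based on how often it appears.

    Assumed scheme: tags more frequent than the median get a weight
    below 1, rarer tags get a weight above 1, clamped to [lo, hi].
    """
    counts = Counter(tag for tags in captions for tag in set(tags))
    ref = median(counts.values())
    return {t: min(hi, max(lo, (ref / c) ** alpha)) for t, c in counts.items()}


def sample_loss_scale(tags, weights):
    """Average the per-tag weights into one multiplier for a sample's loss."""
    ws = [weights.get(t, 1.0) for t in set(tags)]
    return sum(ws) / len(ws) if ws else 1.0


if __name__ == "__main__":
    captions = [
        ["1girl", "solo"],
        ["1girl", "solo"],
        ["1girl", "scenery"],
        ["1girl"],
    ]
    weights = tag_weights(captions)
    print(weights)
    print(sample_loss_scale(["1girl", "scenery"], weights))
```

In a training loop, the per-sample multiplier would be applied to that sample's loss before averaging over the batch.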

Usage Tips:

Positive Prompt:

masterpiece, best quality

Negative Prompt:

low quality, bad hands, greyscale, bad anatomy

Optional Negative Prompt:

signature, 4koma, multiple views, watermark, patreon logo

Schizo Negative Prompt:

worst quality, low quality, worst aesthetic, old, early, blurry, lowres, signature, artist name, watermark, twitter username, sketch, logo, furry, text, speech bubble, censored

Styling:

Use realistic/3d tags as needed, and adjust negatives to guide the model towards your desired look.

Use artists from the artist list, as these were used as regularization for learning and didn't lose knowledge from the other dataset. The dataset was reduced after 20 epochs for all content, going from 220k original images to 40k cherrypicked ones.

Technical Settings:

Sampler: Euler a, Euler

Steps: 20-28

CFG Scale: 5.0-8.0

Resolution: 1024x1024 or any aspect ratio that falls within ~1,048,576 pixels.
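As a rough sketch of how to pick a width and height for a given aspect ratio within that pixel budget: the function name `sdxl_resolution`, the rounding down to multiples of 64, and staying at or under the budget are assumptions for the example, not requirements stated by the model.

```python
import math


def sdxl_resolution(aspect: float, budget: int = 1024 * 1024, step: int = 64):
    """Return (width, height) close to `aspect` with width * height <= budget.

    Assumption: both sides are rounded down to a multiple of `step`.
    """
    # The ideal width for an exact aspect ratio is sqrt(budget * aspect).
    width = int(math.sqrt(budget * aspect) // step) * step
    # Use whatever height still fits in the remaining pixel budget.
    height = int((budget / width) // step) * step
    return width, height


if __name__ == "__main__":
    for name, ratio in [("1:1", 1.0), ("16:9", 16 / 9), ("2:3", 2 / 3)]:
        print(name, sdxl_resolution(ratio))
```

For 1:1, 16:9 and 2:3 this yields 1024x1024, 1344x768 and 832x1216 respectively.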

Model Recipe:

Developed with bespoke CLIP/TENC settings and U-net Aligning.

Compatibility:

Sync with Noobai v1.1 for optimal results using the attention cut in ComfyUI, guided by PotatCat.

Acknowledgments:

Thanks to the Arc En Ciel discord server, @mfcg, @FallenIncursio, @Anzhc, @richyrich515, and others for their support!

Disclaimer for Fun: By using this model, you agree to donate one of your virtual balls to @novowels.

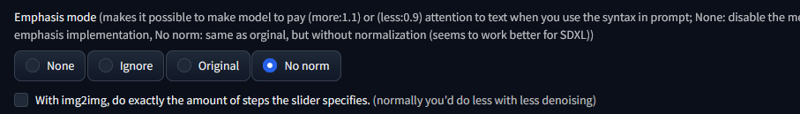

Remember to set these in your reforge for best results.

Emphasis mode No Norm is suggested for SDXL models

If you want to support me, consider liking the model, and/or tipping buzz/donating to my Ko-fi, as this was finetuned on 8xL40S

Relevant training information:

Trained with at least 220k images.

Total of about ~4.5M real steps after batching across 40 epochs.

Effective batch size was 15 x 8 GPUs x 6 Gradient Accumulation (RTX Ada 6000 x8)
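The effective batch size above is the product of the three factors. A small worked calculation, with variable names chosen only for illustration:

```python
def effective_batch_size(per_gpu_batch: int, num_gpus: int, grad_accum_steps: int) -> int:
    """Samples contributing to a single optimizer update."""
    return per_gpu_batch * num_gpus * grad_accum_steps


if __name__ == "__main__":
    # Values taken from the training information above.
    print(effective_batch_size(per_gpu_batch=15, num_gpus=8, grad_accum_steps=6))
```

With the listed values this comes to 720 images per optimizer update.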

Learning rate was 6e-6 with Compass, then 1.5e-5 with AdamW. CLIP L was trained at 5e-7 and CLIP G at 4e-7 with AdaBelief.

Comments (10)

What does "Sync with Noobai v1.1 for optimal results using the attention cut in ComfyUI, guided by PotatCat." mean?

smh

"The dataset wasn't solely focused on '1girl'." Always the same boring '1girl' focus. Seriously, where's the originality? Your models are just drowning in a sea of other equally uninspired ones, like 95% of the other models on this site.

Hey there thank you, but have you tried to actually use what I said?

When checking Initium V2 out of curiosity, I noticed a key is missing in the model (conditioner.embedders.0.transformer.text_model.embeddings.position_ids).

It still seems to work fine but is it "normal" due to the change in the TENC or maybe something got lost in the mix?

@n_Arno it's normal, it happens even in NoobAI, don't worry about it.

@bluvoll Thanks for the answer. I did a test by swapping the TENC into another model to measure the change, and it's significant indeed. Why did you choose to do so?

It creates another fracture in the already messy Illustrious landscape (between the original Illustrious, NoobAI and the various "Noob fixing merges", we don't have a single true ancestor to build upon like with Pony).

@n_Arno It's because it needed extra proper training; basically, Initium is the result of research.

good model! but could you explain what the "discard next-to-last sigma" and "sgm noise multiplier" settings do?
