Aha said I lobotomized this model 💀

Aha said I lobotomized this model 💀

Description

Lobotomized Mix is a highly capable base model built on NoobAI-vpred with extremely high character fidelity and prompt responsiveness. It has a strong predilection for complex lighting effects and functions best with clear well defined prompt tags. Character lora responsiveness is good. Style lora responsiveness is varied, ranging from "well you can clearly see some sort of effect" and "this mimicks the style perfectly". It is generally capable prompting up to three characters interacting with any issue or need for regional conditioning. Prompting four characters is possible but attribute-blend becomes increasingly likely the more characters are prompted. Prompting more than four distinct characters is probably impossible on the SDXL architecture without aid from extensions (though this does not count for clones or twins or otherwise visually distinct characters).

Usage Requirements

Standard NoobAI quality tags apply. Check NoobAI documentation for details. In addition, the very aesthetic positive and displeasing negative tags have some minor effect. The recommended structure according to LAXHAR Labs for ordering prompts is: artist tags, [your prompt here], quality tags

This is a V-pred ZSNR model and will not work with Automatic1111-webui. In order to get images out of vpred models you need to either switch the dev branch on Automatic1111 webui, ComfyUI or reForge. My personal recommendation is to switch to reForge.

In order to properly replicate the example images, you will need to enable the following extensions on reForge, listed in order of importance.

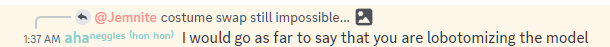

"APG's now your CFG" and Guidance Limiter for reForge

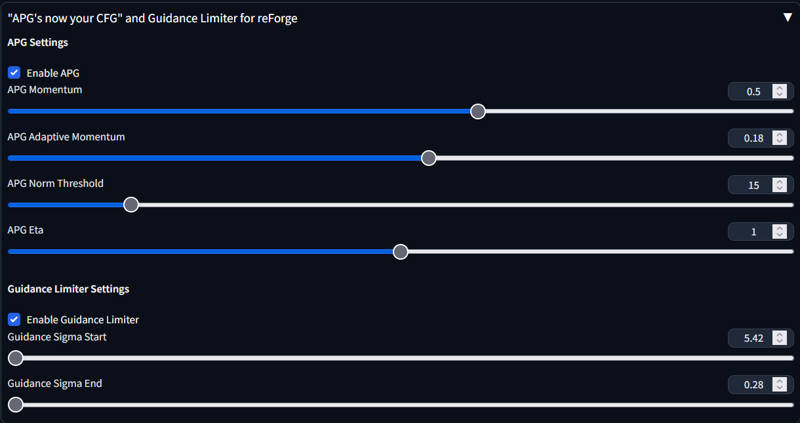

Perturbed-Attention Guidance

Perturbed-Attention Guidance

and Mahiro CFG for reForge

and Mahiro CFG for reForge

Recommended VAE is the one by LAXHAR Lab member heziiiii. You can find it here.

Recommended VAE is the one by LAXHAR Lab member heziiiii. You can find it here.

License

Same as NoobAI.

Q & A

Q: What's the recipe?

A: Version 1 is just a straight lora/lycoris merge. NoobAI-XL (vpred), this LyCORIS, this LoRA, this LoRA, this LyCORIS, this LoRA, and this LoCON. Weights are 0.6, 0.5, 0.5, 0.25, 0.5, and 1. You can take them and make your own mix or whatever, I don't give a shit. Hiding recipes for merges is lame and for gigalosers.

Version 1.5 was originally an attempt to convert MIX-GEM-XLBD1 to vpred, but I ended up using so many weights from LobotomizedMix that it ended up just being another version of lobotomized mix. The sd-mecha code for this comes from @illyaeater (thanks imi). Mostly it just SLERP merges the dissimilarity between LobotomizedMix_v1,MIX-GEM-XLBD1, and the parallel component between the two using SDXL1.0 as a base, at alpha 0.5 which is blurred Gaussian kernel to prevent sharp transitions and introduce artifacts, onto SDXL1.0. (For what a SLERP merge is, see the sd-mecha documentation). After that it's just a simple merge of replacing index 0 of every unet attention layer from the former with the latter. Code is here.Q: Difference between versions?

A: Check the version sidebar. Alternatively, X/Y comparison charts for:Q: Does this model have innate artist tags?

A: Yes, but they're not very strong. You either have to prompt them at high weights to overcome the base style or they're not readily apparent.Q: Tips and or tricks?

A: If you have a composition you like but you don't like the way the final generation comes out, experiment with changing the prompt and sampler during a hires fix.Q: How to get good eyes and fingers?

A: Hires fix. Hires fix always. Models trained with 4CH VAE innately suck at producing clean results off the bat unless you're going for a simple style so you need to run the second denoise pass to clean up noise, even if you don't upscale.Q: Pony?

A: No.Q: Why are all the seeds 114514?

A:

Description

Very minimal differences. Text encoder is the same, many of the weight blocks are also the same, slight adjustment to some blocks regarding lighting. Primarily LobotomizedMix_v10 was multi_domain_alignment merged with MIX-GEM-XLBD1 and some other unet blocks remerged from v1. Compared to v1, the primary effect is that the color blue is a lot less dominant and the color yellow is much dominant. As a result, warm colors now take precedent over cold colors.

Pros:

Shine, luminescence, caustics, and other warm color dependent lighting elements now are slightly improved

Improved backgrounds, large anatomical features like limbs see improvement, fingers are sometimes better sometimes worse

Clothing and object interaction is better on average, may be worse in individual seeds

Default style greatly improved, also better at wide aspect ratios

Cons:

Shadows, darkness, black backgrounds, and other cold color depending lighting elements are worse

Artist keyword effectiveness heavily reduced, default style is harder to move away from

Seed variation reduced, randomizing seed now produces less dramatic effects

FAQ

Comments (15)

will you adress the botox allegations

Hi, what is that "BD1epstopredtxttxt2" model ? (ouch complex name lol)

In the comparison you posted, I think it's often better than Lobotomized v1.0, slightly more background details and better lighting. Lobotomized v1.5 looks better too. It's all very subtle improvements but it's there

It's just Lobotomized Mix v1.5. I injected weights for 'v_pred' and 'ztsnr' directly into the state_dict. This doesn't change anything about inference, it just makes it so that UIs like ComfyUI or reForge with the capability to do Zero SNR out of the box will automatically detect that it's a v_pred model and swap to using ztsnr. That's why the hash is difference but otherwise it performs exactly the same. The state_dict alteration is mostly a convenience thing and also to save my sanity from users constantly complaining that the model doesn't work (they didn't realize that you have to use v_pred sampling with v_pred models).

@Jemnite I have no idea what you're talking about (v_pred, zero snr, state dict) but thank you for the explanation anyway xDDD

oh, here's the holy grail

Hi, can you give some hints on how to write a prompt correctly so that the right text appears on the body\clothes ?

If you're looking for text you probably want to try FLUX instead.

Interesting look. I like this model. Thank you.

Don't use pony score tags, they don't do anything on this and also waste tokens.

Do you plan on updating this? It is really good and I like its style a lot.

also, what do you prefer in general?

SIH or lobotomized?

Personally, I like sih better because it's more flexible and works a lot better with loras. The big issue with updating this model is that because once you get to a certain point in capability you don't really get upgrades, just style rehashes. And when it comes to this model, because it's entirely a style mix, I want to avoid changing the style. Essentially if you improve in one aspect, another aspect gets worse because you're already brushing up against the limits of the architecture. For example, I uploaded a sih version that I've just not published because even though one has better anatomy and posing, it loses a lot of capability when it comes to simple styles. I've just been running constant x/y comparisons with it and the improvement hasn't been consistent across the board.

At this point every SDXL model in the NoobAI lineage is some variant of sidegrade rather than a pure upgrade. There are models which can produce better outputs but that's because they give up style flexibility, character recognition, or are just utilizing the magic of seed randomization and cherry picking outputs. NovelAI V4 is better than any SDXL model, but that's because it runs on a new architecture that uses attention-based Transformers (like FLUX) rather than the CLIP+uNet combination that SDXL models use.

td;lr no plans to update this b/c I don't think there's any clear upgrade path

@Jemnite I too thought that we've reached the limit of sdxl.

looks like the plan is to wait pony v7, though I'm worried that most of us won't be able to use it because auraflow is such a big model.

@Stirya I don't think PonyV7 will be good. I think Auraflow will probably be better at attention detail than anany SDXL based model but it has some shortcoming compared to other DiT based models. The VAE is still only 4channel which means it's going to learn worse and output worse, But mostly I'm skeptical of the purported effectiveness of "super artists" and the actual effectiveness of the dataset. If the super artists don't work, we're going to end up a much less flexible model than even a SDXL model. lodestones is working on a Flux finetune which looks somewhat more promising but the initial dataset is extremely small. Other than that, the space is developing really fast and DiT hasn't really settled well enough to start finetuning. We're just starting to see people experimenting with using different LLMs to act as tencs so I imagine there will be a lot more exexperimenting before we start to see a viable product that isn't NAIv4.

Dropping in to say I enjoy what this produces merged at various proportions with base Noob. Bit rich for my blood at full blast, but stabilizes Noob really nicely.