Contrast Controller

A handcrafted LoRA. (No joke).

Quick description:

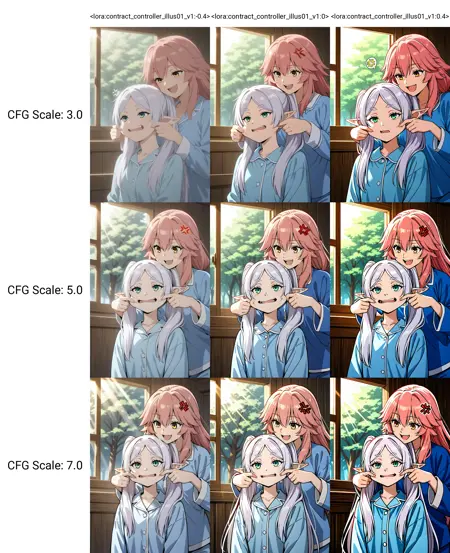

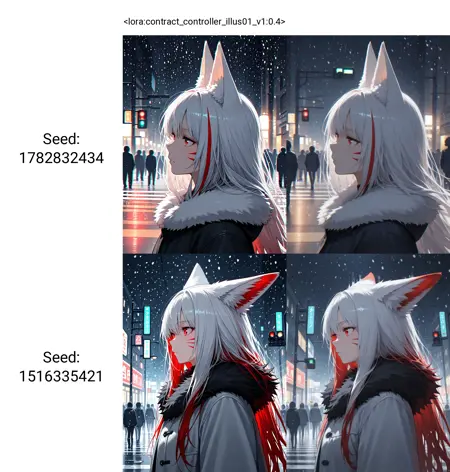

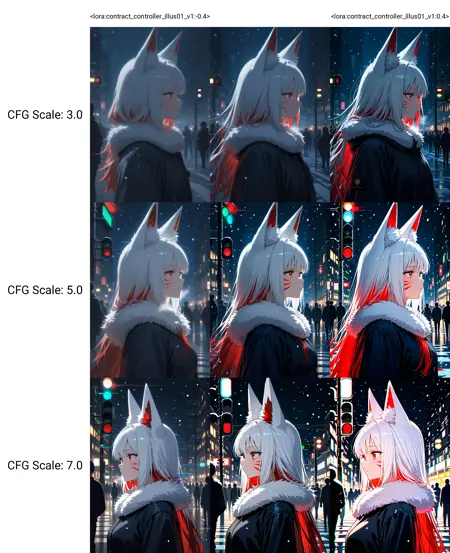

It can amplify (strength >0) or reduce (strength <0) the contrast of your base model.

Useful when you want something really colorful, or when your base model has an oversaturation issue.

Unlike other "trained" LoRAs, this one is "handcrafted". There is no training, so there is zero style shifting/pollution (not an approximation, it is a mathematical zero).

The size of this LoRA is 400KB. It is not a common LoRA, so don't be surprised after downloading it. The file is not broken.

Working strength is around -0.5~0.5. Recommended -0.2~0.2.
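
For readers unfamiliar with how a strength value reaches the model: in the usual LoRA convention, the loader adds strength × (alpha / rank) × (up · down) to a base weight, so a negative strength simply flips the sign of the patch. The sketch below shows this with tiny made-up matrices in plain Python. `matmul`, `apply_lora`, and all the numbers are illustrative assumptions, not the contents of this file.

```python
# Toy illustration of how a LoRA strength scales (and can flip) a weight patch.
# All matrices here are made up; they are NOT taken from the actual LoRA file.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def apply_lora(base, up, down, strength, alpha, rank):
    """Return base + strength * (alpha / rank) * (up @ down)."""
    scale = strength * alpha / rank
    delta = matmul(up, down)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]

base = [[1.0, 0.0], [0.0, 1.0]]   # stand-in for one base-model weight
up = [[1.0], [0.0]]               # rank-1 "up" matrix (2 x 1)
down = [[2.0, 0.0]]               # rank-1 "down" matrix (1 x 2)

# Same patch, opposite signs: one pushes the weight up, the other pulls it down.
boosted = apply_lora(base, up, down, strength=0.2, alpha=1.0, rank=1)
reduced = apply_lora(base, up, down, strength=-0.2, alpha=1.0, rank=1)
print(boosted)
print(reduced)
```

A strength of 0 leaves the base weight untouched, which is why small values such as ±0.2 only nudge the model.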

Technically supports all base models, including NoobAI, unless your base model somehow nuked the U-Net OUT8 block, which is very rare.

Advanced description:

You may have heard of FreeU. It can manipulate the output of U-Net blocks, but it requires software support. What about using a LoRA to do the same thing?

So you can think of this LoRA as FreeU, sort of. It patches the U-Net OUT8 block (the final block) and manipulates its output.

You no longer have to choose between "creativity/low CFG scale" and "high contrast". Now you can have both.

You can increase the U-Net strength so that you can lower the CFG scale and get more creative results and details.

Or vice versa: reduce the U-Net strength so that you can use a higher CFG scale to get a stable and clean result without oversaturation.
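
To see why an output-strength control and the CFG scale are separate knobs, here is a small sketch. It uses the standard classifier-free guidance formula and assumes, purely for illustration, that the patch acts like a uniform gain `k` on the U-Net output. This page does not state that the effect is exactly uniform. `cfg_combine`, `scale`, and the sample numbers are hypothetical.

```python
# Sketch: classifier-free guidance (CFG) combined with a uniform output gain k.
# Assumption: the patch behaves like "multiply the U-Net output by k".

def cfg_combine(uncond, cond, cfg_scale):
    """Standard CFG: uncond + cfg_scale * (cond - uncond), element-wise."""
    return [u + cfg_scale * (c - u) for u, c in zip(uncond, cond)]

def scale(values, k):
    """Apply a uniform gain k to a prediction."""
    return [k * v for v in values]

uncond = [0.5, -1.0, 0.25]   # made-up unconditional noise prediction
cond = [1.0, -0.5, 0.75]     # made-up conditional noise prediction
k = 1.25                     # hypothetical gain from a positive LoRA strength

# Gain applied inside the U-Net (to both predictions) before CFG...
inside = cfg_combine(scale(uncond, k), scale(cond, k), cfg_scale=4.0)
# ...equals the same gain applied to the CFG result, because CFG is linear.
outside = scale(cfg_combine(uncond, cond, cfg_scale=4.0), k)

print(inside)
print(outside)
```

Under this assumption the gain passes through guidance unchanged, so the overall output strength can be adjusted without touching the CFG scale itself.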

Sharing merges that use this LoRA is allowed. However, you must credit the creator and provide a link to this page. Beware that the weight pattern becomes very distinctive once this LoRA is applied. A normally trained model will never have this kind of pattern.

This LoRA is highly experimental. Remember to leave feedback in the comment section. Don't write feedback in the Civitai review system; it is poorly designed, and practically nobody can find and read the reviews.

Have fun.

Comments (28)

"contract_controller.safetensors" 🤣

Since AI people hate it when a tensor breaks the contract

oops, typo in the file name 🤣

fun experiment: SPO lora high, contrast troller negative

Does this work for sdxl?

I love it, it's awesome

I placed it in the lora folder and it doesn't show in the lora tab

Me too. I tried XL and IL, still could not see this lora.

Something is wrong with the download.

I can't use it on reforge, can't find the file on the list

Lora works exactly as described.

Incredibly well.

This is real good for the Illustrious era of AI art where people often end up shiny and eyes are LED.

So glad this exists now. Thank you for making my life in updating my merges easier.

When using with weights exceeding 0.2, strange ripple textures often appear. Are there any ways to mitigate this issue?

strange ripple. You mean like this?

https://civitai.com/models/971952

(image at the start of the description).

sounds like a messed up noise schedule.

@reakaakasky

A typical example of this issue is the emergence of these abnormal textures, which occur with high probability when the LoRA weight exceeds 0.2. While some images can be successfully restored through Hires.fix upscaling, others are irreparable, as shown in this image:

https://civitai.com/posts/23107025

This issue particularly manifests on smooth surfaces with uniform color gradients - most notably on skin, clothing, and walls - where these abnormal textures tend to emerge with higher frequency

@ggg493 I see...

This looks like a slightly mismatched noise schedule. So the image is "unfinished".

This is a base model problem. Most likely it was merged with some vpred models.

This can't be fixed.

Not a fan of Wai; this base model is a lie. I suggest switching to another base model.

@reakaakasky Can you recommend a better base model?

@ggg493 no. But I do recommend a "trained" base model instead of a "merged" one.

Makes the rescaleCFG comfy node almost obsolete.

emmm... this LoRA is sort of a "dirty fix", and highly experimental. Quite handy for a simple setup.

But you should definitely use rescaleCFG and freeU whenever you can, they have more controls and options. 😅

@reakaakasky hehe I'm mainly generating on tensortArt and often too lazy to set up a comfy workflow. So for their easy-to-use creation mode the LoRA works quite okay with some of the usual vpred suspects :D

@reakaakasky Also I was wondering: in Crody's Merge Scripter Guide, if you scroll down, you find the section "4. Fine Tuning". Is your contrast control function similar to what such a merging script offers as fine-tuning params? And could you potentially also make LoRAs for the other fine-tuning scripts it has? Theoretically speaking. https://civitai.com/articles/12245/crodys-model-merge-guide-team-c

@deitychaser Can't find the code, so I can't tell. But based just on the content of the "guide", I think it's nonsense. Especially section "3. Block Merge". That is 200% nonsense plus.

LoRAs for other controls: theoretically no, not like this one. But you can do it by training on a heavily biased dataset. I think there are already such LoRAs on Civitai.

@reakaakasky The Block Merge paragraph is nonsense, meaning the descriptions of the blocks are wrong? Do you happen to have an accurate description for each block I can look at?

@deitychaser no, there is no such description of which block does what.

A fact about AI models that may sound funny but is also so damn true: people don't know what the model has learned.

If such an accurate description existed, it would be worth a Nobel prize.

@reakaakasky Well, at least OUT8 seems to be associated with contrast, given that your LoRA works. Are any other loose (less accurate) labels for other blocks known to you?

@deitychaser the diffusion model does not output the image. There is no such concept as color, brightness, etc. at all. It outputs noise. OUT8 is the final layer and is the easiest place to modify the strength of the output noise. This affects the contrast of the image (how much the pixel values deviate from the average of 127).

Less accurate: deeper layers are more sensitive. Small changes can dramatically change the output image, because the changes are amplified by the outer layers. This gives you the feeling that inner layers are usually more related to high-level concepts.
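
The notion of contrast used in the reply above (how far pixel values deviate from the mid value 127) can be sketched in a few lines. This only illustrates that definition on 8-bit pixel values. `adjust_contrast` is a hypothetical helper, and the LoRA itself acts on the model's noise output, not on finished pixels.

```python
def adjust_contrast(pixels, factor, mid=127):
    """Scale each pixel's deviation from `mid` by `factor`, clamped to 0..255."""
    out = []
    for p in pixels:
        value = mid + factor * (p - mid)
        out.append(max(0, min(255, round(value))))
    return out

row = [27, 127, 227]               # made-up 8-bit pixel values
print(adjust_contrast(row, 0.5))   # lower contrast: values move toward 127
print(adjust_contrast(row, 2.0))   # higher contrast: values move away, then clamp
```

A factor above 1 pushes values toward the 0 and 255 limits, which is one way to picture the oversaturation mentioned in the description.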

Is there any academic paper on the theory behind your hand-crafting technique? If not, I believe you should write one.