A finetune that aligns NoobAI v-pred 1.0 to Anzhc's EQ-VAE-B7, meant for basic usage and for further training with LoRAs or full finetuning. Generations are less noisy thanks to cleaner latents at training time. The usual v-pred shenanigans apply, but the model should be more resilient to colors exploding, and colors should be a bit cleaner overall compared to base v-pred.

Does this behave like a slight aesthetic tuning? Yes, due to limited data. Get me a 5090 and I can do bigger finetunes instead of choking my 4090. This was trained for 370k steps on 1x4090, and I slammed my head hard against the dataset and compute wall. Go donate to Anzhc for his efforts training the VAE.

If you like it and see some future for it, donate to me on Ko-fi: this was trained at my own expense, and it took several days of attempts with single-GPU training on a 4090.

Generation settings? Default v-pred. Just use the bundled VAE, or B7 from Anzhc's repo. Yes, he is banned; yes, I'll claim the NoobAI1.1EQ he made and the VAEs here on Civitai, as he has given me permission to claim those.

Does this still leak random styles with some tokens due to Natural Language? Yes, it fucking does. Fuck Natural Language with CLIP-L and CLIP-G.

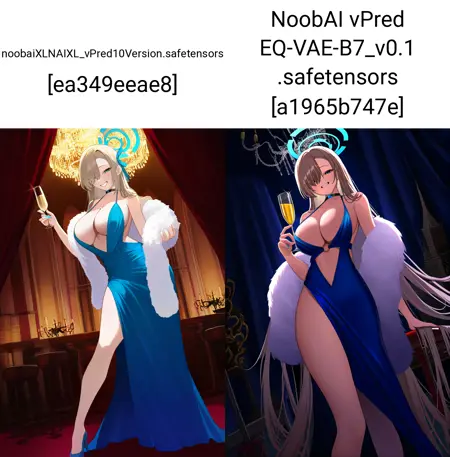

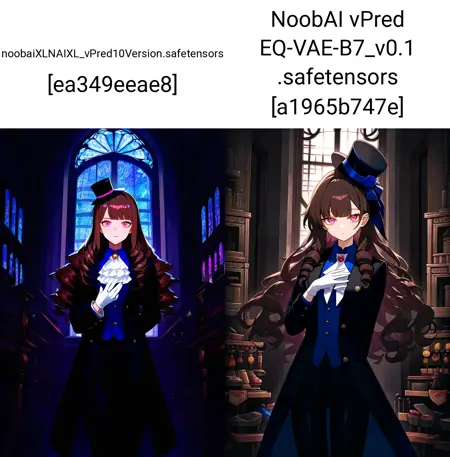

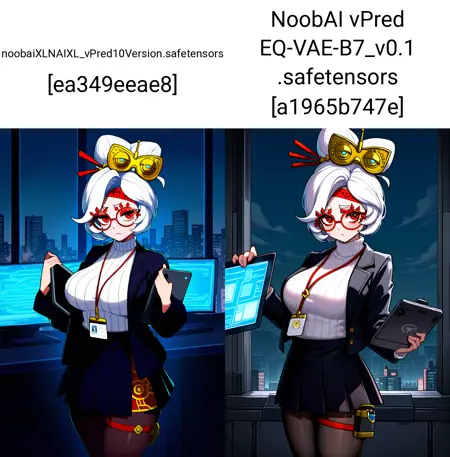

Some shitty comparisons

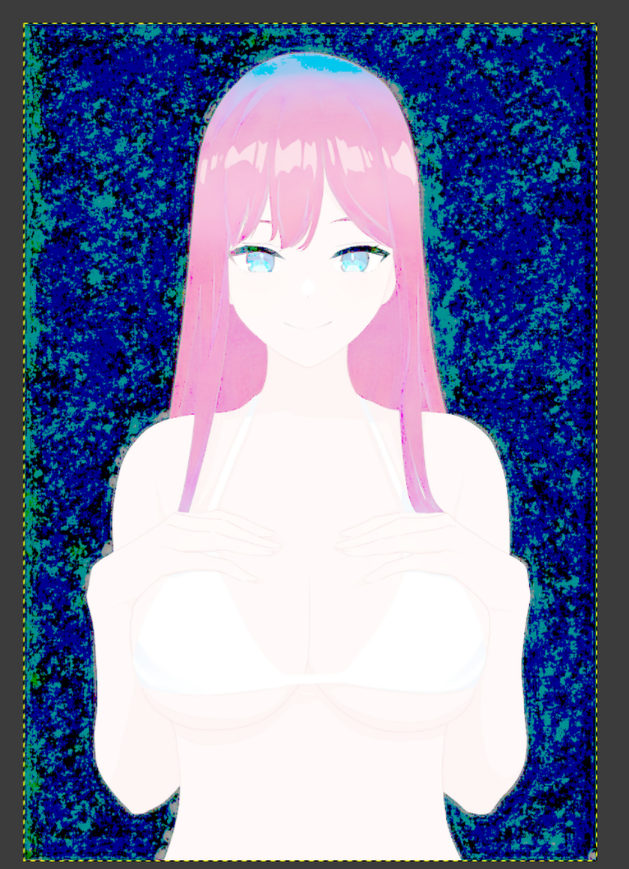

Raw gen

levels changed to expose noise in the background which remains very consistent

Same image in base v-pred

levels changed to expose noise in the background which remains very bad

Description

Trained for ~370k total steps

Batch Size=7

Gradient Accumulation=4

Full_BF16

AdamW8bit Kahan Summation Optimizer
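
For reference, the effective batch size implied by the settings above can be worked out as below. This is only a minimal sketch: it assumes a single GPU (the run is described as 1x4090), and since the page does not say whether the ~370k figure counts micro-batches or optimizer updates, it shows both readings rather than picking one. The function and variable names are made up for illustration.

```python
# Values taken from the list above; names and the two "step" readings are assumptions.
BATCH_SIZE = 7          # samples per forward/backward pass
GRAD_ACCUM = 4          # micro-batches accumulated per optimizer update
NUM_GPUS = 1            # assumed from the "1x4090" description
TOTAL_STEPS = 370_000   # approximate; the unit of a "step" is not specified


def effective_batch(batch_size: int, grad_accum: int, num_gpus: int = 1) -> int:
    """Number of samples that contribute to a single optimizer update."""
    return batch_size * grad_accum * num_gpus


eff = effective_batch(BATCH_SIZE, GRAD_ACCUM, NUM_GPUS)

# Reading 1: each "step" is one micro-batch.
samples_if_micro_steps = TOTAL_STEPS * BATCH_SIZE * NUM_GPUS
# Reading 2: each "step" is one optimizer update.
samples_if_optimizer_steps = TOTAL_STEPS * eff

print(f"effective batch size: {eff}")
print(f"samples seen if steps are micro-batches: {samples_if_micro_steps:,}")
print(f"samples seen if steps are optimizer updates: {samples_if_optimizer_steps:,}")
```

Either way, each weight update averages gradients over 28 samples under these settings.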


Comments (32)

v0.1 usable -> Does this mean CLIP is now aligned too?

@deitychaser This is aligned to Noob's CLIP. CLIP wasn't trained, as that is a can of worms when aligning to a VAE. What this means is that this model can be used for inference and should be decently stable, and you can even train over it.

@bluvoll I see, thank you. Maybe I just confused this model with some other release where the creator stated that he'll see if he can also align CLIP in the future. Thought that was you. Anyways, thanks. I'll try this model out now.

@deitychaser No problem.

goonable 💪

Sadly, the anatomy is still wacky. Generated images have a much stronger AI-generated feel compared to base Noob (even in your examples), and while the colors aren’t exploding even at high CFG, the images are kinda... unpleasantly oversaturated, like in cyberfix/itercomp merges. The images are sharper and cleaner tho, so I’ll probably use this model for img2img upscales. Good job.

@herkerp123759 Yes, it's a 0.1 version after all. Hopefully 0.2 behaves better.

@bluvoll Any plans on incorporating Anzhc's CLIP finetune for a potential 0.2?

Oh I really like this😋

Just ran some LoRA training trials based on the "usable" checkpoint with kohya_ss. All I got was grey noise. I guess it may be caused by the integrated VAE, or this "usable" version is not compatible with the Prodigy optimizer.

@DeepDark_Fantasy514 Make sure to use the same VAE as the one bundled with the ckpt; there is no need to load one from outside. I trained using sd-scripts and it worked without issues.

1 day of 4090 training on 340k images, isn't that like 50 bucks of h100s or better? wouldn't it be worth to try doing this on rented hardware with bigger dataset? also can you please share how to do the training part? I've been trying to merge it but it's a bit tough

@illyaeater I'm not getting donations or anything for this; I'm training purely on my local hardware. As for training, it's the same as always: just load the VAE from the checkpoint instead of using sdxl_vae. Regardless of LoRA or finetuning, it will work.

oh

Would you say using this one as a base for LoRA training is better than the base NoobAI?

@TOF_enjoyer not yet!

I have a 5090 and have tried training some LoRAs using the original Noob-Vpred1.0 and EQB7 VAE. Would using this model give better results?

yes, I've tested this as well. Improved vae should result in better loras

Any reason why you're using adamw8bit with kahan summation vs regular adamw with stochastic rounding? Could only fit things at a batch size of 4 with no gradient accumulation that way on 24gb in my experience (using lion I can fit at least 8), but you'd have more precision on the momentums at least.

@Smegmcsalt Regular AdamW with SR uses more VRAM in my setup than AdamW8 with Kahan; the added precision is just a nice bonus vs AdamW SR. Also, I can easily use AdamW8 with Kahan in my DeepSpeed ZeRO-1 setup with both 4090s for the final run. Hope that explains my point of view.
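
For anyone following this exchange, here is a minimal pure-Python sketch of why a Kahan-style compensation buffer helps when weights are stored in BF16. It is an illustration only, not the code of any actual optimizer: BF16 is simulated by rounding float32 bit patterns, and `to_bf16`, `naive_update`, and `kahan_update` are hypothetical helper names. The update size and step count are arbitrary.

```python
import struct


def to_bf16(x: float) -> float:
    """Round x to the nearest bfloat16 value (round-to-nearest-even), returned as a float."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits += 0x7FFF + ((bits >> 16) & 1)   # rounding bias, ties go to even
    bits &= 0xFFFF0000                    # keep sign, exponent, top 7 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def naive_update(w: float, delta: float, steps: int) -> float:
    """Add delta to a BF16 weight repeatedly with no compensation."""
    for _ in range(steps):
        w = to_bf16(w + to_bf16(delta))
    return w


def kahan_update(w: float, delta: float, steps: int) -> float:
    """Same updates, but with a BF16 compensation buffer that carries the rounding error."""
    comp = 0.0
    for _ in range(steps):
        comp = to_bf16(comp + to_bf16(delta))   # fold the new update into the buffer
        new_w = to_bf16(w + comp)               # try to apply everything pending
        comp = to_bf16(comp - (new_w - w))      # keep whatever the weight did not absorb
        w = new_w
    return w


start, delta, steps = 1.0, 1e-4, 1000   # exact result would be about 1.1
naive = naive_update(start, delta, steps)
kahan = kahan_update(start, delta, steps)
print(f"naive BF16: {naive}")
print(f"Kahan BF16: {kahan}")
```

Near 1.0 the spacing between BF16 values is 2^-7, so each 1e-4 update on its own rounds away and the naive weight never moves, while the compensated version lands close to the exact sum. The cost is one extra buffer per parameter, which is the VRAM trade-off being weighed against stochastic rounding above.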

@bluvoll gotcha. Have you found the reduced precision on the momentums to not really be a problem? I used to train Lion with Kahan, but I found that vram use got a little tight so I switched to SR and haven't observed much difference between the two after a few hundred steps into a training run, though kahan always seemed to have stronger starts. Never did try AdamW with Kahan as I couldn't make it fit.

Also you mention that NL has screwed some styles up. If the goal here is alignment then you could probably just get by with tags and a bit of dropout couldn't you?

@Smegmcsalt In finetuning I did find that Kahan gets to the target 'faster' in a way, but that's about it compared to SR. The thing I noticed with SR is that, due to how Nerogar made the implementation we all use, it might sometimes slow down some updates, but that's how it works, nothing major quite honestly: a little extra time to reach a desired output and, well, a bit more 'random'. In all honesty it reaches the same result in the end, at least in my tests!

As for NL, I do have a copy of NoobAI's dataset, which is why this experiment is also called NoobAI despite my pouring in enough compute to perhaps rename it. I won't use the NL captions from the dataset, just pure tags, for 1 epoch of around 800k images to just align the U-Net to the VAE, so inherent issues with training won't be fixed. I plan to provide a working base with EQ-VAE that more ppl can finetune as if it were base v-pred, just less explosive at high CFGs and with less noise in generation and training. Then, if possible, I plan to convert that to Rectified Flow.

@bluvoll Ah, was under the impression that you would stick to NL for the realignment but I like the sound of your current plan. All this effort and transparency is much appreciated by the way!

As for SR, I've actually been using https://github.com/ethansmith2000/stochastic_round_cuda for a compiled solution. In practice though I don't remember the performance gains being anything too major but may be worth a shot for your setup. Also cautious weight updates might be something to look into for extra stability.

@Smegmcsalt I gotta be transparent since I'm not all knowing, so perhaps I might make mistakes, thank you for asking since it does allow some review of my hyperparameters and training ideas.

The "0.1 Usable" version is quite poor.

Some users have reported that models fine-tuned (either with LoRA or fully fine-tuned) using this version as a base model produce images with low quality, poor proportions, and broken limbs. This was NOT the case with the previous version, "Experimental EQ-VAE."

I believe this model still has significant room for improvement. I think aligning EQ-VAE with the v-prediction Unet is a great achievement. I look forward to even greater improvements in the next version.

Actually, one problem I encountered when trying to train LoRAs was the 'Edge Artifacts' in the image.

@QR0W_ As per Anzhc's model post for the EQ-VAE, inference needs a patch, and you gotta apply it. I think Comfy has a node for it, and reForge did too.

@bluvoll Yes, I applied the 'VAE Reflection' node in ComfyUI. This seems to solve the problem, but not entirely.

@QR0W_ can confirm that it's not entirely solving the issue.

@deitychaser VAE Reflection should be enabled both during generation and during training. If it wasn't enabled during training, then there will be artifacts.
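
As a rough illustration of why padding mode can show up specifically at image edges, here is a toy 1D sketch. It assumes that "VAE Reflection" means swapping zero padding for reflection padding in the VAE's convolutions, which is a reading of the discussion above rather than something confirmed in this thread. The helpers `pad_zero`, `pad_reflect`, and `box_filter` are hypothetical and stand in for a real convolution layer.

```python
def pad_zero(signal, n):
    """Pad n zeros on each side (what a default zero-padded convolution sees)."""
    return [0.0] * n + list(signal) + [0.0] * n


def pad_reflect(signal, n):
    """Mirror around the edge samples without repeating them (needs n < len(signal))."""
    left = [signal[i] for i in range(n, 0, -1)]
    right = [signal[-1 - i] for i in range(1, n + 1)]
    return left + list(signal) + right


def box_filter(signal, pad_fn, radius=1):
    """Average each sample with its neighbours, using pad_fn to handle the borders."""
    padded = pad_fn(signal, radius)
    width = 2 * radius + 1
    return [sum(padded[i:i + width]) / width for i in range(len(signal))]


flat = [1.0] * 6                          # a perfectly flat "image row"
zero_out = box_filter(flat, pad_zero)     # borders are pulled toward 0
reflect_out = box_filter(flat, pad_reflect)  # borders stay flat

print("zero padding:   ", zero_out)
print("reflect padding:", reflect_out)
```

With zero padding the border samples come out darker than the interior even though the input is flat, while reflection keeps them consistent. A network trained under one border behaviour and run under the other sees borders it was never trained on, which fits the advice to keep the setting identical in training and generation.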

Awesome to see the eq vae being used! Thanks for sharing this.

I think the SDXL architecture still has much to give. Ideally, we would have a new full trained model based on danbooru with long clip and eq vae. Just dreaming here...

Which workflow is more suitable for this checkpoint? The images generated by the workflow I'm currently using are all pixelated.