This is a ControlNet model! This model requires ControlNet!

Model Details

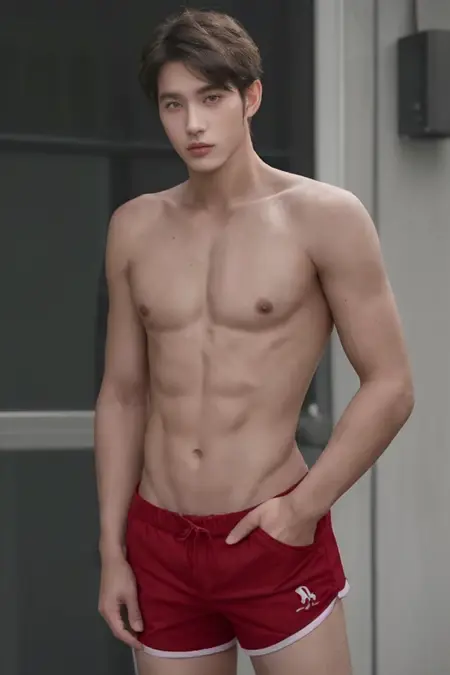

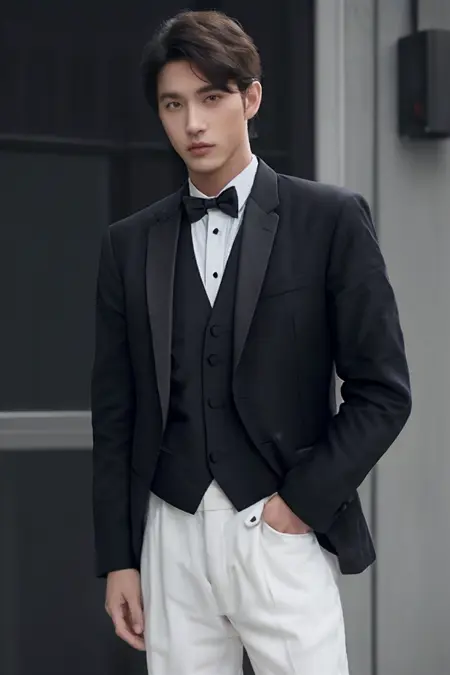

This model aims to allow users to modify what a subject is wearing in a given image while keeping the subject, background and pose consistent.

I've produced good results in txt2img, img2img as well as inpainting.

I've produced good results with images generated with Stable Diffusion as well as with pictures I've taken myself.

Installation

Place the .safetensors file into ControlNet's 'models' directory. To use the model, select the 'outfitToOutfit' model under ControlNet Model with 'none' selected under Preprocessor.
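The copy step above can also be scripted. This is a minimal sketch, not part of the model's own tooling: the function name `install_controlnet_model` is hypothetical, and the location of ControlNet's 'models' directory depends on your installation, so you pass it in yourself.

```python
import shutil
from pathlib import Path


def install_controlnet_model(model_file: Path, models_dir: Path) -> Path:
    """Copy a .safetensors file into an existing ControlNet 'models' directory.

    Both paths are supplied by the caller; nothing here guesses where
    ControlNet is installed.
    """
    if model_file.suffix != ".safetensors":
        raise ValueError(f"expected a .safetensors file, got {model_file.name}")
    if not models_dir.is_dir():
        raise FileNotFoundError(f"models directory not found: {models_dir}")
    target = models_dir / model_file.name
    shutil.copy2(model_file, target)  # copies file contents and metadata
    return target
```

After copying, the ControlNet model list in the UI usually has to be refreshed before the new model appears.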

Tips for use

Images with a clearly defined subject tend to work better.

This model tends to work best at lower resolutions (close to 512px).

If you run into trouble at higher resolutions, run a first pass at a lower resolution, then upscale with img2img (or txt2img with Hires.fix) at a lower denoising strength, while continuing to feed your original input image to this ControlNet model.

I recommend starting with CFG 2 or 3 when using ControlNet weight 1.

Higher CFG values combined with a high ControlNet weight can lead to burnt-looking images.

Experiment with ControlNet Control Weights 0.4, 0.45, 0.5, 0.6, 0.8 and 1.

A lower weight allows more changes; a higher weight keeps the output closer to the input.

Anything below 0.5 seems to rely more on the Stable Diffusion model, whereas anything from 0.5 up seems to weight the ControlNet model more heavily.

When using img2img or inpainting, I recommend starting with a denoising strength of 1.

Experiment with 0.75 denoising strength

When inpainting, I recommend trying "latent nothing" under Masked content

Consider lowering the model's weight when generating higher resolution images

The higher the resolution of the output image, the more difficult it tends to be to alter the content of the image from the input image

If the output isn't changing enough from the input, try increasing the weight of the prompts or decreasing the Control Weight of the ControlNet Unit

This model can work well alongside other ControlNet models, such as OpenPose.
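The starting values from the tips above can be gathered into a small script. This is a sketch under stated assumptions, not the author's workflow: it only builds request dictionaries and sends nothing. The payload field names (`alwayson_scripts`, `input_image`, `module`, `model`, `weight`) are assumed to match the AUTOMATIC1111 web UI API with the ControlNet extension and should be checked against your installed version. The model name string, the helper names, and the choice to scale the longer side to 512px are illustrative assumptions.

```python
import base64

# Control Weights the tips suggest experimenting with.
CONTROL_WEIGHTS = [0.4, 0.45, 0.5, 0.6, 0.8, 1.0]


def first_pass_size(width, height, target=512, multiple=8):
    """Scale a size so its longer side is about `target` pixels.

    Each side is rounded to a multiple of 8, which Stable Diffusion
    image dimensions are normally expected to be.
    """
    scale = target / max(width, height)

    def snap(value):
        return max(multiple, int(round(value * scale / multiple)) * multiple)

    return snap(width), snap(height)


def build_txt2img_payload(prompt, image_bytes, control_weight=1.0,
                          cfg_scale=3.0, width=512, height=512):
    """Build a txt2img request body with one ControlNet unit (assumed schema)."""
    unit = {
        "input_image": base64.b64encode(image_bytes).decode("ascii"),
        "module": "none",           # Preprocessor: 'none', as in the install notes
        "model": "outfitToOutfit",  # assumed name; your UI may append a version or hash
        "weight": control_weight,
    }
    return {
        "prompt": prompt,
        "cfg_scale": cfg_scale,     # tips suggest CFG 2 or 3 at weight 1
        "width": width,
        "height": height,
        "alwayson_scripts": {"controlnet": {"args": [unit]}},
    }


def weight_sweep(prompt, image_bytes, source_size):
    """One payload per suggested Control Weight, sized for a low-resolution first pass."""
    width, height = first_pass_size(*source_size)
    return [
        build_txt2img_payload(prompt, image_bytes, control_weight=w,
                              width=width, height=height)
        for w in CONTROL_WEIGHTS
    ]
```

Comparing the outputs of such a sweep side by side is one way to find the weight at which the outfit changes while the subject and pose stay consistent.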

Description

I trained this model on a completely new dataset that contains more real data.

Main changes:

Overall improvements in character consistency and quality.

Discontinued the use of "the same man" / "the same woman" in prompts

Reduced NSFW generations

Known issues:

Color consistency and clothing generation overall could still be improved. Some of this may just be a matter of curating more, higher-quality data. Other improvements may require tweaking the training script.

Comments (22)

XL

Looks interesting, but it didn't work for me. Maybe it's because I'm using Easy Diffusion. I was asked for a corresponding YAML file to place alongside your model. Is there a YAML file available for Outfit to Outfit? Thanks in advance.

I remember I used to need a YAML file for automatic1111 and was able to use any ControlNet YAML. I can upload the one I'm talking about if you wouldn't mind testing it.

Hello, EmmyJ_. Thanks a lot for the YAML file, Outfit To Outfit works for me now.

I hope it will be possible to take the outfit from an image.

Try using IP-Adapter Plus. The results are okay.

I'd recommend setting the IP-Adapter Control Weight lower than the Outfit to Outfit Control Weight (start with 0.6).

@EmmyJ_ thx

Is there an SDXL version, or is one planned?

It's something I'd like to do eventually, but it requires some additional work for training and I've been spending most of my free time on curating data and running various tests to see which settings work best. I'll take another look soon, but no promises.

How can I avoid JPEG-like noise in the generated image?

Sometimes Hires.fix to a higher resolution with a low denoising strength (0.3 - 0.55) helps. You can also combine this model with inpainting.

Hi, do you have a version in Diffusers format? I haven't been successful converting it for InvokeAI / CoreML usage.

Unfortunately, I did not keep a copy in the original Diffusers format. I will make sure to do that moving forward.

I've thought about using this with X-Adapter so that SDXL can be used. X-Adapter allows 1.5 models, LoRAs, etc. to be used with SDXL.

Can someone, for the love of God, explain how to use this? Whatever I try, it does nothing: it either totally ignores the input image or just draws the input and ignores the prompt, in img2img, inpainting...

This video should help, and you can download the workflow.

@yatbond

That's for ComfyUI. How do I use it in A1111?

How does it compare to IP-Adapter for outfit-to-outfit?

Hi, could you make a detailed video tutorial like the one that exists for ComfyUI, but for Automatic1111? I understand how to use it successfully in txt2img, but not in the img2img or inpainting tabs.

Thank you in advance.

AttributeError: 'dict' object has no attribute 'shape'

Is there something for SDXL?

Good Work!

Do you have a ComfyUI composite?

Details

Files

outfitToOutfit_v20.yaml
