I hope you all appreciate the irony of training a powerful image generating AI to produce an art style from before computers were invented.

Pulp magazines were a popular form of entertainment that my 99 year old grandmother might have remembered from when she was a child, although she might be too young for the peak of their popularity. Magazines like Fantastic Stories and Weird Tales brought large quantities of cheap, wild stories to the public. Pulp magazines started and popularized such literary genres as "Science Fiction", "Sword and Sorcery", and "Weird Fiction". Their contributions to literature simply cannot be overstated.

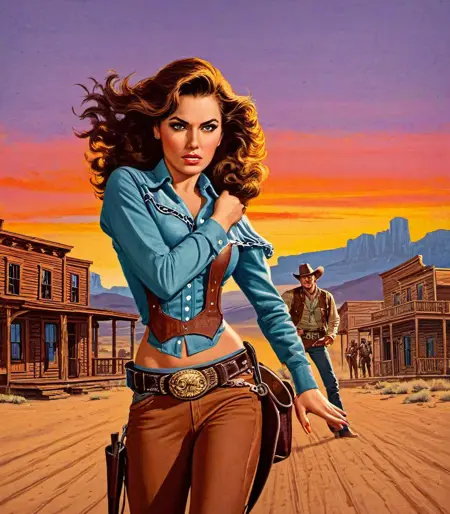

However, there was also a period of time when they featured beautiful, wild, controversial, and scandalous, cover artwork, often featuring scantily clad women in peril (although the stories inside the magazine were rarely as scandalous). These covers generated lots of controversy among the public, but also lots of sales.

This LoRA should produce artwork in the style of 1930-1960 pulp magazine cover artwork.

I know this has been done before, there are other LoRAs that do similar, so how is this one different?

Well, first, this was not trained on any pulp magazine covers, instead it was trained on the original artwork that was used for the magazine covers. That means that the training data does not include magazine titles or story names or price tags or advertisement blurbs or any text, just the artwork (and sometimes the artist signature).

Second, this was trained with rich labels on each image, so it should hopefully be good at producing lots of different elements from pulp cover artwork as well as the style with descriptive prompts. I took care to label the color of things and the specific clothing and objects in each training image.

Will this produce SFW or NSFW content?

The style is what I am interested in. Hopefully it can do either SFW or NSFW. However, this was trained on pulp cover artwork, which has a strong tendency to be a bit risque - sexy, but not explicit. I tried to include some more explicit images, but the percentage was very low. All explicit images in the training dataset were labeled with the tag, "nsfw", so if you want NSFW images, try including the "nsfw" tag, if you don't want it, then perhaps include "nsfw" in your negative prompt.

Update: I have encountered a significant number of instances where I got NSFW results without anything suggesting it in the prompt. So it seems that sometimes it may lean toward NSFW images.

What models and parameters work best with this LoRA?

I actually find that ironically, more realistic type models work best. I have been using Juggernaut v7 and a high alpha (0.85 - 0.95) with good results. If you use a more cartoony or anime focused model, it seems to work better with lower alpha values (0.4 - 0.6), but then you will get something more like half-way between the model's style and the LoRA style, whereas using realistic models with high alpha will give more of the intended pulp cover art style.

Description

FAQ

Comments (11)

A SD 1.5 version would be possible?

It would be possible. I personally don't have any interest in 1.5 anymore, but theoretically, it should be pretty easy to do, so I can look into it.

@prushik Thanks, let me know. Meanwhile i would like to know what advantages you see in SD XL. As for me, I'm very into anime characters, so the unfortunate fact XL is incompatible and I would have to trash all my LORAs is a big problem.

@settima_ai I tried training 1.5 on the same dataset, but the results were quite poor, it produces a lot of really distorted faces and extra limbs. I will continue trying, there are a few other methods I can try and some things I want to get worked out anyway, but it looks like this is a difficult dataset for 1.5.

SDXL is much bigger, much more resource intensive, slower, but it produces much better results. I had a ton of models and LoRAs saved from 1.5, including some that I trained myself and haven't yet been able to replicate on SDXL, but SDXL is still worth it. Plus, there really are a lot of LoRAs available for SDXL now, and I am getting better at training my own, so I don't think I am missing anything.

I made an SD1.5 version which you can find here: https://civitai.com/models/246686

However, if you compare this results from that model and the results from this version, you can see how much better SDXL is at reproducing the pulp cover art style. I used essentially the same dataset, just scaled for SD1.5, and with some of the minor mistakes I made in the SDXL version corrected, and with dataset augmentation.

@prushik If the difference between SD 15 and SD XL was really the same we can see in the two LORAs, there was not doubt everibody would migrate to XL instantanely! Unfortunately this is not true and you can verify in the CivitAI gallery how the actual results of SD 1.5 can still compete with XL and in most cases are better.

Probably SD 1.5 is at the end of his service life but still better thanks its super tuned checkpoints, while XL still has to develope its potential.

Recently a XL user told me he generates images with XL for the better intelligence but then he prefer to render the final result with SD 1.5 for better graphic. Apart this, the demo gallery in your LORA -especially the unacettable low quality of the face - confirm me is not a problem of SD 1.5. Something gone wrong during the training or you are using a bad checkpoint or you lose your hand in using SD 1.5. or... I dont know! Anyway, thanks for trying it. : |

@prushik EDIT: "you lose your hand in using SD 1.5..."

I have a suspect is this one, but I told directly in your LORA place...

@settima_ai Yeah, I didn't really try too hard to get the best results out of the SD1.5 version before posting, I didn't use any upscalers or face enhancers or anything, and I used DSv8, which is probably old by now but it's what I had. My inference script was also thrown together, just a copy of my SDXL script with XL stuff replaced with non-XL versions. But this is kind of my point, SD1.5 does have a lot more limitations, we have just figured out ways to overcome them with additional tools and more complicated workflows and GUIs that manage the complicated workflows. SDXL doesn't solve all the issues, but it handles a lot of the things that SD1.5 can't on its own. The larger latent space and second text encoder make a big difference, not just in the output resolution, but in the complexity it can reproduce. SDXL hasn't reached it's full potential yet for sure, but to me, that seems like good reason to be using it over 1.5.

@prushik Yeah, that was the premise about XL: more resolution and more smart. So, even I dont use it, I always look at what it can do being more smart. Unfortunately, on CivitAI, I still see only sexy "solo" girl's portraits around. Where are the examples where it can handle more complex scene? For example, can it do a man and a woman in the same image, shaking the hand each other and without exhanging hairs, clothes and other detalis specified in the prompt? 99% of images I see, is the same sh*t we are already doing with SD 1.5, only with more resolution and looking "cool". And that's all. These days I'm very depressed about the future of Stable Diffusion, exspecially after testing DALL-E 3, wich is like comparing a rock to a plane, and really really shocked me!

@settima_ai Yes, SDXL can do more complex scenes with multiple characters. It isn't good at it, but I think it is better than SD, and it is better about things like not blending fingers into hair and clothes and surroundings. It still makes those mistakes, but less frequently. I was surprised at how easily XL handles multiple characters compared to SD. Its still not great, but it is better.

DALL-E, on the other hand, is going to be naturally better at those things due to the way that it's latent-space works. DALL-E uses discrete latent tokens, so it has no problem generating multiple objects or people because it is effectively generating them in different images and gluing them together later. This comes at a price of course, and SD and especially XL is going to be better at (diversity of) smaller details. DALL-E can't draw anything it doesn't have a discrete token for, and even if it has a token for something, it can't put two tokens into the same "patch", so it needs to space them apart. DALL-E does look good, but I think the SD-style latent space is the future (or maybe some hybrid of the two).

@prushik Thank for the info. I didnt know this. It explain some facts and suggest the best way is to generate a scene with DALL-E and then render again with SD, to get exactly the 3 things DALL-E cant give: sexyness, specific characters and hires details.

So ,you are using DALL-E too?

If not, do a gift to yourself and subscribe, even if for just 1 month. It worth largely!

Details

Files

Available On (1 platform)

Same model published on other platforms. May have additional downloads or version variants.