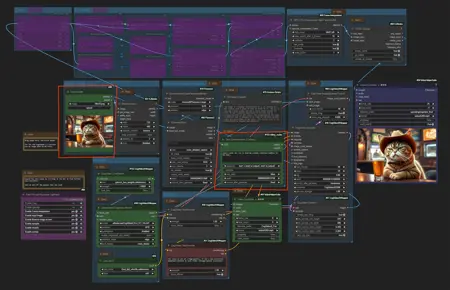

An image-to-video ComfyUI workflow with CogVideoX. Tested with CogVideoX Fun 1.1 and 1.5. Note that the motion LoRA does not work with the Fun 1.5 model, only with 1.1.

The zip file contains the JSON file, the starter image, and the PNG file from creation, which also contains the workflow.
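If you want to pull the workflow out of the PNG without opening ComfyUI, here is a minimal sketch. It assumes the workflow is stored as JSON in a plain `tEXt` chunk with the keyword `workflow`, which is how ComfyUI normally saves its images. The function names are just for illustration, and compressed or international text chunks are not handled.

```python
import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict:
    """Return all tEXt chunks of a PNG as a {keyword: text} dict."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    chunks = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, body, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            # tEXt body is "keyword\0text", both Latin-1 encoded.
            keyword, _, text = body.partition(b"\x00")
            chunks[keyword.decode("latin-1")] = text.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        pos += 8 + length + 4  # header + body + CRC (CRC is not verified here)
    return chunks


def extract_workflow(png_bytes: bytes):
    """Return the embedded workflow as a Python object, or None if absent."""
    text = read_png_text_chunks(png_bytes).get("workflow")  # assumed keyword
    return json.loads(text) if text is not None else None
```

Usage would be something like `extract_workflow(open("workflow.png", "rb").read())`, where the file name is a placeholder for the PNG from the zip file.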

This workflow also contains a CogVideoX motion LoRA for the camera movement. You can also add further instructions in the prompt, since CogVideoX relies on motion information in text form.

It also has a very simple upscaling method implemented. I am still on my journey to figure out a dedicated upscaling workflow, but for some this one might still be useful. It is super fast compared to upsampling with another KSampler.

CogVideo creation size is limited. The old version 1.1 is fixed to a resolution of 720x480, which is a 3:2 format. The new version 1.5 goes up to double the size, but the motion LoRA that I use here does not work with it.

There are some collapsed Note nodes beside the important nodes. Click on them to expand them.

The Note nodes contain further information. For the models, they also contain links to the models and tell you where to put them.

This is my first upload on Civitai, so suggestions and feedback are very welcome.

EDIT:

The example video rendered in 8 minutes plus some overhead for preparation, without the upscaling, with CogVideoX Fun version 1.1 on a 4060 Ti. Version 1.5 renders twice as fast, but the motion LoRA does not work with it. Upscaling takes another 4 minutes, if I remember right.

I was not able to run CogVideoX on my old card with 8 GB. That's too low. 12 GB should work, but I cannot test it. I render on a card with 16 GB at the moment.

Webpage: https://www.tomgoodnoise.de/index.php/ai/workflows/

There is also an explanation video on YouTube:

Description

Initial release

Comments (12)

What are the HW requirements and gen speed?

Hey Cavernust,

The example video rendered in 8 minutes plus some overhead for preparation, without the upscaling, with CogVideoX Fun version 1.1 on a 4060 Ti. Version 1.5 renders twice as fast, but the motion LoRA does not work with it. Upscaling takes another 4 minutes, if I remember right.

I was not able to run CogVideoX on my old card with 8 GB. That's too low. 12 GB should work, but I cannot test it. I render on a card with 16 GB at the moment.

Thanks for the question. I will add this information to the description too :)

Kind regards

Arunderan

@Arunderan thank you!

About 5 min 50 s with an RTX 3090 to generate the 49 frames we're limited to.

@hansolocambo I'm honestly waiting for better versions of ltxv to come out. The basic version takes less time to generate than flux but we're still not at minimax levels which in my opinion should be the base in terms of quality

@Cavernust

Yeah, LTXVideo is also nice, but it currently has a quality problem. I produce 99% blurry videos with it ^^

@Arunderan yeah, its not ready yet.. but hold on. soon we will be able to make entire movie trilogies for free in high quality. we are just getting started. advice to anyone reading this: don't play your best cards yet. be patient

I installed all the missing nodes. It will take my image and extract/generate the prompt for the workflow. It's breaking due to pytorch_lora_weights.safetensors not being recognized. There are a few places where it's available online. I tried all of them. Which did you use?

!!! Exception during processing !!! Can't recognize LoRA D:\temp\ComfyUI_windows_portable\ComfyUI\models\CogVideo\loras\pytorch_lora_weights.safetensors

Hey anmtek312,

Have a look at the collapsed Note nodes. You can expand them. The link to the LoRA can be found there, and I also mention where to place it. You can name it whatever you want. You just have to choose it in the picker afterwards.

You can find it here, in the files and versions tab:

https://huggingface.co/NimVideo/cogvideox1.5-5b-prompt-camera-motion

And you need to place it into ComfyUI\models\CogVideo\LORAS
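To double-check that the file really sits where the picker looks for it, a small sketch like this can list what is in that folder. It assumes the standard layout `models/CogVideo/loras` under your ComfyUI folder, and the function name is made up for this example. It only confirms the location, not whether the LoRA is the right type for the model.

```python
from pathlib import Path


def list_cogvideo_loras(comfy_root):
    """Return sorted names of .safetensors files in <comfy_root>/models/CogVideo/loras."""
    cog_dir = Path(comfy_root) / "models" / "CogVideo"
    if not cog_dir.is_dir():
        return []
    names = []
    for sub in cog_dir.iterdir():
        # Match "loras" or "LORAS" so this also works on case-sensitive systems.
        if sub.is_dir() and sub.name.lower() == "loras":
            names.extend(p.name for p in sub.iterdir()
                         if p.suffix.lower() == ".safetensors")
    return sorted(names)
```

Call it with the path to your own ComfyUI folder, for example `list_cogvideo_loras(r"D:\temp\ComfyUI_windows_portable\ComfyUI")`.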

You can also remove the lora node completely if you really cannot get it to work. Then you miss the camera movement though.

hth Arunderan

Maybe dont collapse that note, confused the hell out of me.

@Kpros Sorry for the confusion, but I do mention it. The nodes are collapsed for good reason. I find the expanded hint nodes to be visual noise when you actually want to work with the workflow. When they are collapsed they are out of the way, and you can expand them when you need them :)

i have used cog video on a different workflow, while impressive and working well, its very slow.

10 min to render 50 frames =_=

can't wait to see this improved

(im on 3090 + ryzen7 + 64gb ddr5)