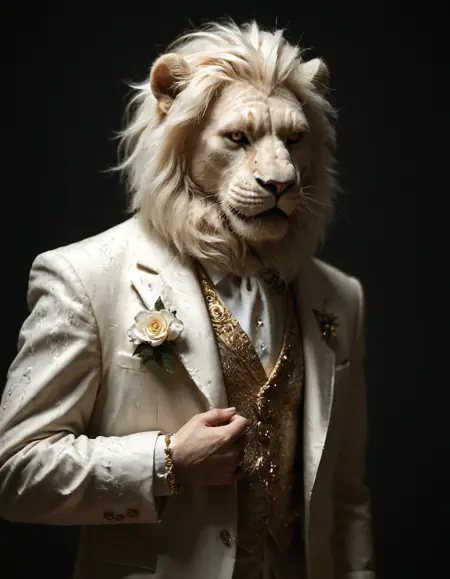

One of my pending tasks was to create a model with a cinema aesthetic. It is a new model because I don't think it corresponds to any of my other creations. It is focused on creating striking images within realism, and one of the approaches was to create dark environments that hark back to cinema and cinematographic ideas. I hope you enjoy it as much as I enjoyed creating it, and I hope to see your images soon.

The VAE is not baked in; use SDXL_vae.

Clip skip: 1

Upscaler: I'm using remacri_original, upscale 1.5, steps 10, denoising 0.45

Width and height: 896*1152

CFG Scale: 5

Steps: 35

The sampling method I use is DPM++2M SDE-Karras, but try others if you want; I also get excellent results with DPM++2M-Karras and DPM++3M-SDEPolyexponential.
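For anyone who wants the settings above in one place, here is a minimal sketch in plain Python. The class name `GenerationSettings`, its field names, and the `upscaled_size` helper are made up for illustration and do not belong to any particular UI or library. Rounding the upscaled size down to a multiple of 8 is also an assumption, not something stated above.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical container for the recommended settings listed above."""
    width: int = 896
    height: int = 1152
    cfg_scale: float = 5.0
    steps: int = 35
    clip_skip: int = 1
    sampler: str = "DPM++2M SDE-Karras"
    vae: str = "SDXL_vae"              # the VAE is not baked into the model
    upscaler: str = "remacri_original"
    upscale_factor: float = 1.5
    upscale_steps: int = 10
    denoising_strength: float = 0.45

    def upscaled_size(self, multiple: int = 8) -> Tuple[int, int]:
        """Size after the upscale pass, rounded down to a multiple of
        `multiple` (assumption: the UI wants sides divisible by 8)."""
        def scale(side: int) -> int:
            return int(side * self.upscale_factor) // multiple * multiple
        return scale(self.width), scale(self.height)


settings = GenerationSettings()
print(settings.upscaled_size())  # (1344, 1728)
```

With the defaults, the 1.5x upscale pass takes a 896*1152 image to 1344*1728, which is worth keeping in mind for VRAM.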

Comments (9)

Nice image gallery. Have you considered transitioning to the cyber fixed version of noob AI?

Why do you recommend using clip skip:1?

I don't have a real reason. I like the results with clip skip 1, but as always you can experiment with both to see which gives better results for you.

Nice, will try this one. Btw, any plans for Illustrious? The satpony models in there could be crazy.

Illustrious is on the way soon....

Looks worth a shot. I'm curious if it can create the same stuff as your 'Reality' model? Also, noticed you use remacri for upscaling. Have you tried 4XRealWebPhoto upscaler?

I used remacri for a long time, but decided to venture off this site to see if there might be a better one. TBH, it's still fairly close by comparison, but realwebphoto can do just a tiny bit better in some cases. I haven't run enough comparisons to be certain, but in the ones I ran it got details just a bit sharper iirc.

Ty for your feedback. The two models have substantial differences, which is why they each have their own space; it's difficult to say which is better. I love trying different upscalers, so I'll definitely try the 4x.

@Sateluco Yea, if you google '4XRealWebPhoto', it should come up. But then, google is kinda trash these days, so you might just get a pile of ads and sponsored content. If that happens, just get ChatGPT to look it up for you and give you the link. Interesting though, for training or fine tuning full models of the same primary style (IE: anime/realistic/semi-real), I'd think ya'd just work iteratively with a single model, adding more to it over time.

Sadly, I only got a 4060ti 16gb, so deff can't train an sdxl model, maybe fine tune.. I haven't tested that though, and training a lora already pushes my card to the limits. ChatGPT suggests that I should be able to merge loras with sdxl models, though idk if direct fine tuning uses more resources than that due to accessing more model weights.

Anywho, I digress. Point is, I'm not 100% sure how fine tuning of sdxl models even works, but given the mass amount of data one can hold, it seems like a waste to treat it in the same sense as training a lora (IE: one style per unit).

How do you train loras with this? All the samples come out lookin like fried photos cuz of not training with a vae. I get that the vae can be used after training, but isn't there a way to train with the vae? Every time I try, Onetrainer gives an error:

```
ValueError: Calling CLIPTokenizer.from_pretrained() with the path to a single file or url is not supported for this tokenizer. Use a model identifier or the path to a directory instead.
```

And I've tried using SDXL_vae, and a handful of other vaes including ones for Pony, but it's all the same thing.