BunnyGunny v2.0

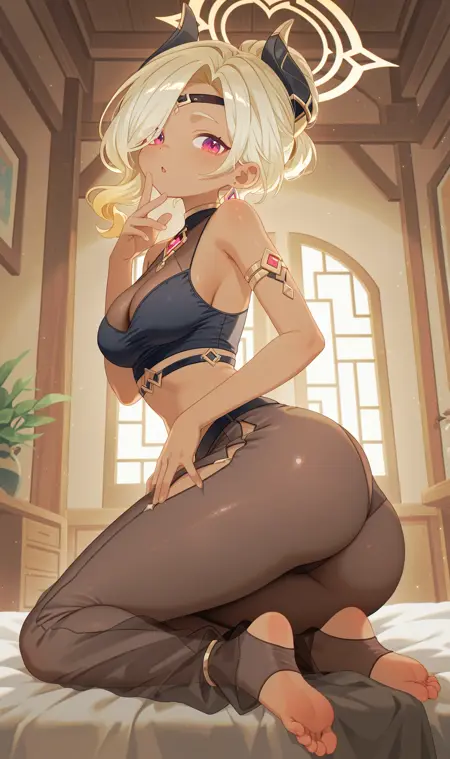

BunnyGunny v2.0 is the next evolution in the Bunny model series.

This iteration represents a significant leap forward, born from a unique merging process that blends the best aspects of NoobAI EPS 1.1 and Pony Diffusion V6 XL.

BunnyGunny v2.0 delivers vibrant and enhanced colors that pop. By combining and further training these powerful models, BunnyGunny v2.0 retains the impressive artist knowledge and compositional strength of NoobAI, while simultaneously excelling in the areas Pony Diffusion is renowned for, creating a truly synergistic model.

Key Improvements over NoobAI and Pony Diffusion V6 XL:

Enhanced and Vibrant Colors: Say goodbye to the desaturated look sometimes associated with the NoobAI epsilon-prediction (EPS) model.

Synergistic Blend of Strengths: Retains NoobAI's strong artist understanding and composition skills, combined with Pony Diffusion's strengths in detailed character rendering and dynamic poses.

Improved Overall Anatomy: Expect more consistent and accurate anatomy in your generated images.

Enhanced Artist Knowledge: Leverages the vast artist knowledge base of both parent models for diverse and stylistically rich outputs.

Improved Backgrounds: Creates more detailed and aesthetically pleasing backgrounds, adding depth and context to your scenes.

Easy to Use: Designed for straightforward prompting and delivers excellent results with standard SDXL workflows.

LoRA Compatibility: LoRAs trained for both NoobAI and Pony Diffusion V6 XL are expected to be compatible with BunnyGunny v2.0, expanding your creative possibilities.

Training Methodology - "Quantum Merge":

BunnyGunny v2.0 was created using a novel merging technique I call "Quantum Merge." This method leverages frequency domain manipulation and hypernetworks to intelligently blend the knowledge of NoobAI EPS 1.1 and Pony Diffusion V6 XL. In essence, it involves:

1. Feature Extraction: Utilizing a custom CLIP encoder modification to analyze and extract key features from both models.

2. Frequency Domain Blending: Applying a Fast Fourier Transform (FFT) based blending process, guided by a hypernetwork conditioned on a descriptive prompt. This allows for nuanced control over the magnitude and phase of the frequency components of the model weights, enabling a more sophisticated form of merging than simple averaging.

3. Selective Decoherence: Introducing a controlled "decoherence" factor to encourage exploration of new weight combinations and prevent simple averaging, promoting emergent properties in the merged model.

4. Refinement Training: The merged model was then refined through a week of dedicated training on an RTX 4090 GPU to solidify the merged knowledge and optimize performance.
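To make the frequency-domain idea in steps 2 and 3 more concrete, here is a minimal sketch of blending two weight tensors by interpolating the magnitude and phase of their Fourier coefficients. It is an illustration only and not the Quantum Merge script: the function name `fft_blend`, the scalar `alpha` (standing in for whatever a hypernetwork would output per frequency), and the Gaussian phase jitter used for `decoherence` are all assumptions. The CLIP-based feature extraction and the hypernetwork are left out entirely.

```python
import numpy as np


def fft_blend(w_a, w_b, alpha=0.5, decoherence=0.0, seed=0):
    """Blend two same-shaped real weight arrays in the frequency domain.

    alpha       -- hypothetical mix factor: 0.0 returns w_a, 1.0 returns w_b.
    decoherence -- hypothetical std-dev (radians) of random phase jitter;
                   0.0 disables it.
    """
    if w_a.shape != w_b.shape:
        raise ValueError("weight arrays must have the same shape")

    # Real FFT of the flattened weights (only non-negative frequencies).
    f_a = np.fft.rfft(w_a.ravel())
    f_b = np.fft.rfft(w_b.ravel())

    # Interpolate magnitudes linearly.
    magnitude = (1.0 - alpha) * np.abs(f_a) + alpha * np.abs(f_b)

    # Interpolate phases along the shortest arc from f_a to f_b.
    delta = np.angle(f_b * np.conj(f_a))  # phase difference in (-pi, pi]
    phase = np.angle(f_a) + alpha * delta

    # Optional random phase perturbation (the "decoherence" stand-in).
    if decoherence > 0.0:
        rng = np.random.default_rng(seed)
        phase = phase + rng.normal(0.0, decoherence, size=phase.shape)

    blended = magnitude * np.exp(1j * phase)

    # Inverse real FFT back to weight space, restoring the original shape.
    merged = np.fft.irfft(blended, n=w_a.size)
    return merged.reshape(w_a.shape).astype(w_a.dtype)


# Toy usage with random stand-ins for one layer from each parent model.
rng = np.random.default_rng(42)
layer_a = rng.normal(size=(8, 16))
layer_b = rng.normal(size=(8, 16))
merged_layer = fft_blend(layer_a, layer_b, alpha=0.5, decoherence=0.05)
```

Unlike a plain weighted average, this treats magnitude and phase separately, so the result at `alpha=0.5` generally differs from `(layer_a + layer_b) / 2`. A real merge would loop over every matching tensor in the two checkpoints.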

Intended Use:

BunnyGunny v2.0 is ideal for generating high-quality anime-inspired illustrations, character art, and scenes with vibrant colors, detailed backgrounds, and strong artistic composition. It is suitable for both personal and commercial projects, provided they adhere to responsible AI usage guidelines.

Now the fun stuff, recommended settings:

For optimal performance and image quality, consider using the following settings:

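reForge: Highly recommended for enhanced sampler performance. Note that reForge is a fork of the Stable Diffusion WebUI rather than an extension, and the settings marked "(Reforge)" below assume it. Get it here: [https://github.com/Panchovix/stable-diffusion-webui-reForge/](https://github.com/Panchovix/stable-diffusion-webui-reForge/)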

Sampler: Euler A

Scheduler (Reforge): Simple

Resolution: 1024x1728 (or any SDXL-compatible resolution ratio)

Steps: 35

CFG Scale: 4.5

Rescale: ON, 0.5

Guidance Limiter (Reforge): ON, Guidance Sigma Start: 25

Sigma Merge (Reforge): Enabled, Mode: Multiply, Strength: 1.2
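For readers wondering what the CFG Scale and Rescale values do together, the sketch below shows classifier-free guidance followed by the commonly used "CFG rescale" step, assuming that is what the Rescale setting refers to. The function name `cfg_with_rescale` and the toy arrays are hypothetical, and the actual reForge implementation may differ in detail.

```python
import numpy as np


def cfg_with_rescale(cond, uncond, cfg_scale=4.5, rescale=0.5):
    """Classifier-free guidance with std-matching rescale (illustrative).

    cond, uncond -- model predictions with and without the prompt,
                    shaped (batch, channels, height, width).
    rescale      -- 0.0 gives plain CFG; 1.0 fully matches the standard
                    deviation of the conditional prediction.
    """
    # Standard classifier-free guidance.
    guided = uncond + cfg_scale * (cond - uncond)

    # Per-sample standard deviation over all non-batch axes.
    axes = tuple(range(1, cond.ndim))
    std_cond = cond.std(axis=axes, keepdims=True)
    std_guided = guided.std(axis=axes, keepdims=True)

    # Shrink the guided prediction back to the conditional prediction's scale,
    # then mix the rescaled and plain results.
    rescaled = guided * (std_cond / std_guided)
    return rescale * rescaled + (1.0 - rescale) * guided


# Toy usage with random stand-ins for the two model predictions.
rng = np.random.default_rng(0)
cond_pred = rng.normal(size=(1, 4, 8, 8))
uncond_pred = rng.normal(size=(1, 4, 8, 8))
out = cfg_with_rescale(cond_pred, uncond_pred, cfg_scale=4.5, rescale=0.5)
```

The rescale step counteracts the over-saturated, high-contrast look that strong guidance can produce, which is why it is usually paired with a moderate value such as 0.5 rather than 1.0.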

Prompting Guide:

Structure your prompts with the following elements for best results:

```

1girl, [Optional: PONY SCORES IF YOU WANT], artists, character(s), backgrounds, meta tags such as ratings (e.g., masterpiece, best quality), style tags (e.g., very detailed, intricate), etc.

```
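If you assemble prompts in a script, the ordering above can be captured in a small helper. This is only a convenience sketch: the function name `build_prompt` and its parameters are made up for illustration, and the score tags shown are the ones commonly used with Pony Diffusion V6 XL, assumed here to be what "PONY SCORES" means.

```python
def build_prompt(subject, artists=(), characters=(), background=(),
                 meta=(), pony_scores=False):
    """Join prompt parts in the order recommended above."""
    parts = [subject]
    if pony_scores:
        # Optional Pony-style score tags (assumed set; adjust to taste).
        parts += ["score_9", "score_8_up", "score_7_up"]
    parts += list(artists) + list(characters) + list(background) + list(meta)
    # Drop empty entries and normalize whitespace around each tag.
    return ", ".join(p.strip() for p in parts if p and p.strip())


prompt = build_prompt(
    "1girl",
    artists=["artist_a"],          # placeholder artist tag
    background=["forest"],
    meta=["masterpiece", "best quality", "very detailed"],
)
print(prompt)
```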

License:

Inherited from NoobAI and Pony Diffusion V6 XL.

Donations & Support:

If you enjoy BunnyGunny v2.0 and want to support future development, please consider subscribing on Patreon:

Patreon: [https://www.patreon.com/c/Lilogummie](https://www.patreon.com/c/Lilogummie)

Connect with me:

Twitter (X): [https://x.com/Juixy88](https://x.com/Juixy88)

Disclaimer:

BunnyGunny v2.0 is a community-created model and is provided as is. Use responsibly and ethically. The developers are not responsible for any misuse of this model. Generated images may be subject to copyright and content restrictions based on your prompts and settings.

Comments (8)

Is there any more information about this "quantum merge" technique? I couldn't find any.

Also can this method be used in Supermerger/Untitled Merger/Model Mixer?

Hi, yes, I am uploading a new model with the details right now, the script is here:

https://github.com/GumGum10/QuantumMerge-sdxl/tree/main

I will try to make a PR to implement this in Supermerger, as I'm somewhat familiar with it, but for now the script can be run in Python.

@LyloGummy , thanks, appreciated! Cuz I tried literally every single possible thing and merge method to combine Pony with Illustrious and I got only bad results - tried all the mergers with all their settings comparing with xyz plots (Supermerger, Untitled Merger, Model Mixer, Block Merge Weight etc.).

@ArtistUndead most welcome! If you are mixing the CLIP too, you'll need to fine-tune for a bit after the merge to stabilize the model, though.

@LyloGummy , so the merge can be done without mixing the clip? I just want to try merging these 3 models:

- https://civitai.com/models/1134436/perfectisimo-illunoob-mix

- https://civitai.com/models/1253598/another-damn-anime-model-adam-xl

- https://civitai.com/models/1192182/pantaloons-mix-pony-xl

Merging all of them with your method (likewise in Supermerger), it means that I don't have to actually merge the clips?

clip_g = r"E:\Apps\...\clip_clip_g_00001_.safetensors" # Path to your CLIP G .safetensors file (raw string so backslashes are kept literally)

clip_l = r"E:\Apps\...\clip_clip_l_00001_.safetensors" # Path to your CLIP L .safetensors file

prompt_to_follow = "1girl, spiderman" # The prompt that will guide the merge

base_model = r"E:\Apps\...\base_model.safetensors" # Path to your first (base) model; without the r prefix, "\b" would be read as a backspace

secondary_model = r"E:\Apps\...\secondary_model.safetensors" # Path to your second model

output_path = r"E:\Apps\...\output.safetensors" # Path to save the merged model

The prompt_to_follow is actually like a test prompt?

For clip_g and clip_l, how do I actually obtain these? I remember you mentioned something about ComfyUI (having an option or somethin' to extract them from the checkpoints). Can this work on A1111 or is there a standalone python script for this extraction?

Thanks for clarifying!

This might be a really stupid question but this model seems to apply a filter that removes the colour on the last step. I must be doing something wrong.

You need an SDXL VAE.

How did you do this merge? Could you give me the merge recipe that you used to merge NoobAI-XL and Pony? Thank you.