All credit for the base Flex model goes to ostris: https://huggingface.co/ostris/Flex.2-preview

Flex.2-preview

Flex.2 preview is an early release to get feedback on the new features and to encourage experimentation and tooling. The most important new features and improvements over Flex.1-alpha are:

Inpainting: Flex.2 has built-in inpainting support trained into the base model.

Universal Control: It has a universal control input that has been trained to accept pose, line, and depth inputs.

For usage instructions and a ComfyUI workflow, please visit https://huggingface.co/ostris/Flex.2-preview. A custom node is required to run this model.

Flex Paint

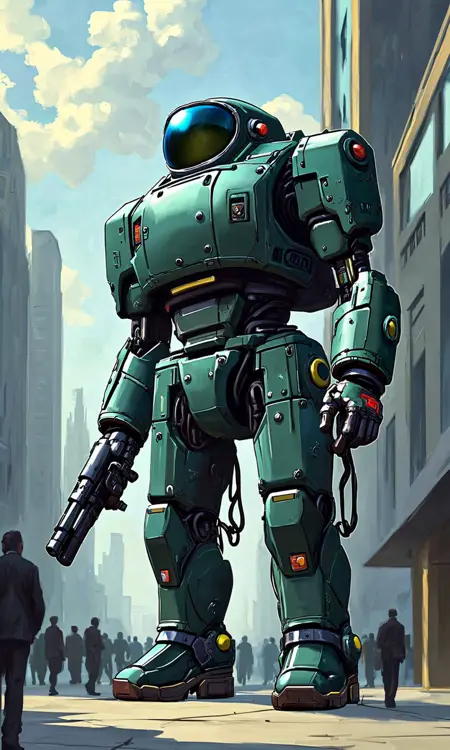

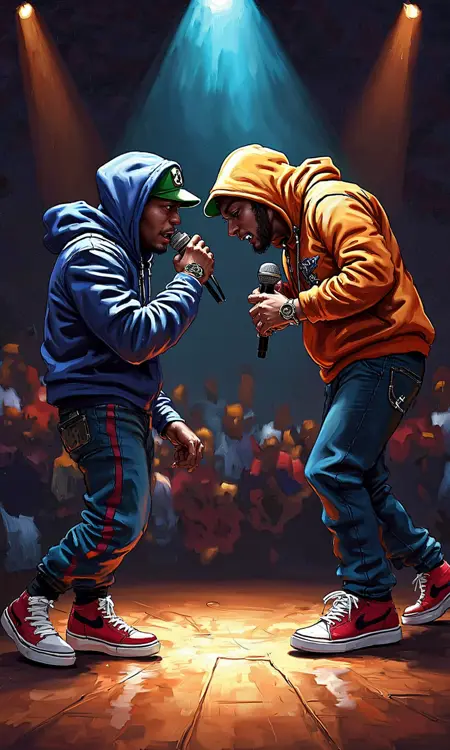

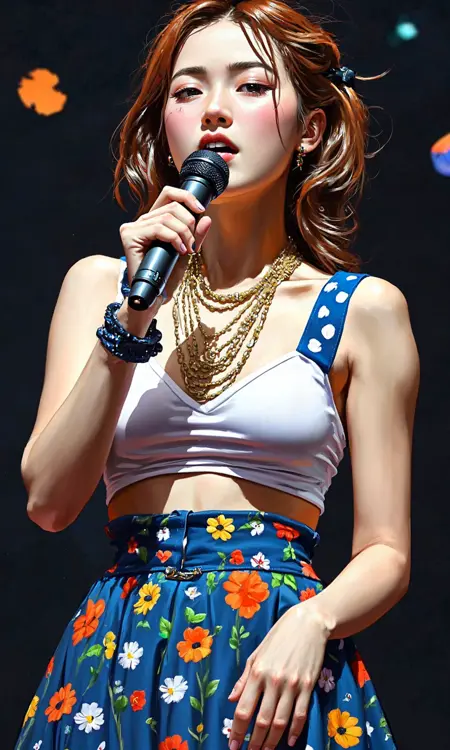

Flex Paint is designed to create a more painterly style of image while still being based on the Flex.1 model. The strength of the painting effect varies with the subject matter of the prompt; if you need a little more, add keywords such as "painterly" or "painting".

*Works with 12 GB VRAM*

The file size is larger but that is due to the unique structure and additions to this model.

A more in-depth explanation can be found on Hugging Face, but the key point is that both Flex Paint and Flex alpha work with 12 GB of VRAM. Flex Paint can use the regular separate VAE and dual CLIP loaders that are normally used, while Flex alpha has the VAE and CLIP included in the file. This model is based on FLUX.1-schnell, which means it uses the more permissive Apache 2.0 license.

Flex.1 alpha

Flex.1 alpha is a pre-trained base 8 billion parameter rectified flow transformer capable of generating images from text descriptions. It has a similar architecture to FLUX.1-dev, but with fewer double transformer blocks (8 vs 19). It began as a finetune of FLUX.1-schnell which allows the model to retain the Apache 2.0 license. A guidance embedder has been trained for it so that it no longer requires CFG to generate images.

Model Specs

8 billion parameters

Guidance embedder

True CFG capable

Fine tunable

OSI compliant license (Apache 2.0)

512 token length input
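
As a rough sanity check on how the 8 billion parameter count relates to the 12 GB VRAM figure mentioned earlier, the weight footprint can be estimated from bytes per parameter. This is only a back-of-the-envelope sketch: the precisions listed are illustrative assumptions, not a statement of how these checkpoints are stored, and the estimate ignores the text encoders, the VAE, and activation memory.

```python
# Rough weight-memory estimate for an 8-billion-parameter model.
# Assumption: only the transformer weights are counted; text encoders,
# VAE, and activations are ignored.

PARAMS = 8_000_000_000  # "8 billion parameters" from the model specs

# Bytes per parameter for some common storage precisions (illustrative).
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "fp8": 1}


def weight_gib(params: int, bytes_per_param: int) -> float:
    """Return the weight footprint in GiB (1 GiB = 2**30 bytes)."""
    return params * bytes_per_param / 2**30


if __name__ == "__main__":
    for name, size in BYTES_PER_PARAM.items():
        print(f"{name:>9}: {weight_gib(PARAMS, size):5.1f} GiB")
```

Under these assumptions, 16-bit weights alone come to more than 12 GiB, while 8-bit weights come to less, so running on a 12 GB card would likely depend on lower-precision weights or offloading in the UI being used.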

Comments (10)

How can I make this work in Forge? The additional VAE/text encoder files I use with flux dev (ae.safetensors, clip_g.safetensors, clip_l.safetensors, t5xxl_fp16.safetensors) don't work with this checkpoint.

If you are using Flex Paint, you should be able to use the Flux ones. If you are using Flex alpha, you can use the ones included in the safetensors file. If that doesn't work, I believe there is another version on Hugging Face.

@Nugus Hm ... I tried to use flex paint (flexFlex1Alpha_flexpaint.safetensors is what got downloaded), but it doesn't work with the flux dev files. The error message is: TypeError: linear(): argument 'weight' (position 2) must be Tensor, not NoneType.

Any idea (anyone) what that could be?

Sorry, I'm not sure. I haven't used Forge; I only use ComfyUI.

@Nugus Ok, thanks anyway.

How can we train that model?

Ostris, who made the base model, also made ai-toolkit, which can train this model and others. It can be found here: https://github.com/ostris/ai-toolkit

Can we use Flux LoRAs normally with this?

I can't be sure it works with all of them but I have been using mine with it and it works.

In Forge I get this error: "TypeError: linear(): argument 'weight' (position 2) must be Tensor, not NoneType"
