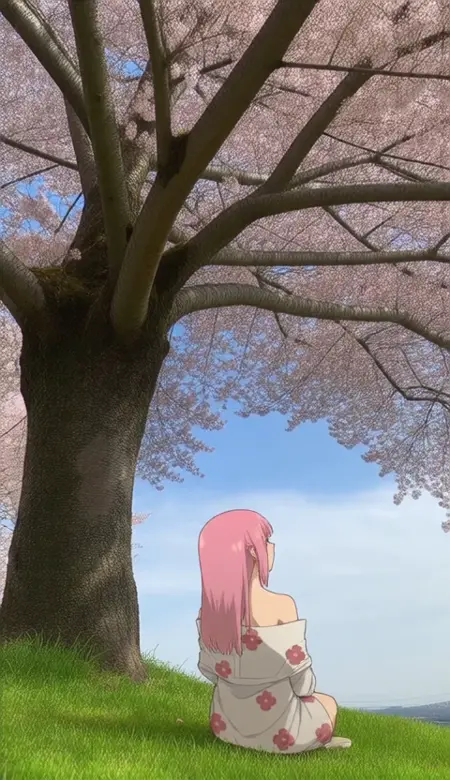

Photo Background - 2d Compositing|写真背景・二次元合成

Trained on 2D illustrations composited onto photo backgrounds.

This is a small LoRA. I made it because I thought it would be interesting to see how models trained on illustrations or on real-world images and video can produce this composite, mixed-reality effect.

ℹ️ LoRAs work best when applied to the base models on which they were trained. Please read About This Version for the appropriate base models and for workflow/training information.

Metadata is included in all uploaded files, so you can drag the generated videos into ComfyUI to use the embedded workflows.


Details

| Field | Value |
| --- | --- |
| Downloads | 595 |
| Platform | CivitAI |
| Platform Status | Available |
| Created | 3/9/2025 |
| Updated | 5/19/2026 |
| Deleted | - |

Trigger Words:

photo background

real world location
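As a rough illustration of how the trigger words above might be used, the sketch below prepends them to a prompt. The page does not say how the phrases should be placed; putting them first and separating them with commas is only an assumed convention, and `build_prompt` is a hypothetical helper, not part of ComfyUI or any other tool.

```python
# Trigger phrases as listed on this page.
TRIGGER_WORDS = ["photo background", "real world location"]


def build_prompt(subject: str, triggers=TRIGGER_WORDS) -> str:
    """Prepend each trigger phrase that is not already present in the prompt.

    Assumption: comma-separated phrases at the start of the prompt.
    The model page does not specify placement or separators.
    """
    lowered = subject.lower()
    missing = [t for t in triggers if t.lower() not in lowered]
    return ", ".join(missing + [subject.strip()])


if __name__ == "__main__":
    print(build_prompt("2d anime girl waving at the camera"))
```

The resulting string can be pasted into the positive prompt field of whichever workflow is in use. Whether both phrases are needed together is something to check by experiment.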
