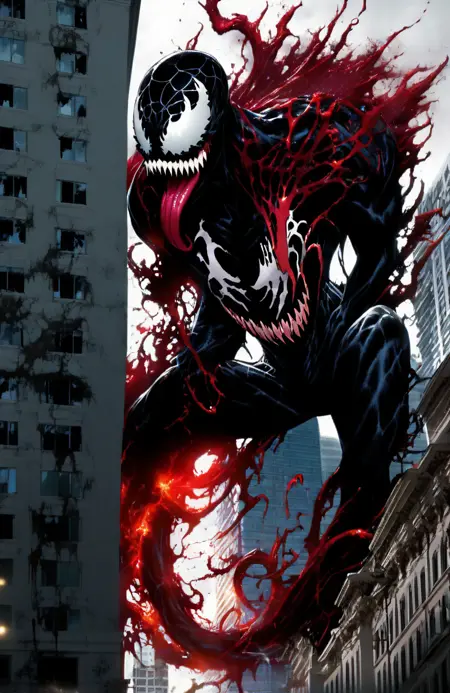

Early version of my planned base model.

Comments (8)

This model is filled with junk data. SDXL models follow an FP16 structure, so making it FP32 just adds a layer of unused data. Next time make the model FP16 only; there is no need for FP32. 👍

I just want to address this and your other comments, as they are factually incorrect. While you can run FP16 fine, FP32 is not "filled with junk data". If you convert an FP16 model to FP32, nothing increases but the file size, because the extra precision cannot be recovered after the fact. However, if you train in FP32 and run in FP32, the extra bits hold data that is actually used. The reason this model is FP32 is that it IS FP32. It can be converted to FP16 with some loss, but it is not an FP16 model. Please avoid posting factually incorrect information, as it propagates misinformation.
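To illustrate the point about conversion direction, here is a minimal sketch using only Python's standard library. It is not taken from the model or any training tool; the helper names `to_fp16` and `to_fp32` and the sample weight value are made up for illustration. It rounds a single number the way a weight would be rounded when stored in each format.

```python
import struct

def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_fp32(x: float) -> float:
    """Round x to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

# Hypothetical weight value, stored the way an FP32 checkpoint would store it.
w32 = to_fp32(0.1234567)

# FP32 -> FP16: the low mantissa bits are rounded away, so the value changes.
w16 = to_fp16(w32)

# FP16 -> FP32: every FP16 value is exactly representable in FP32,
# so the upcast is exact and carries no extra information.
w16_up = to_fp32(w16)

fp32_to_fp16_is_lossy = (w16 != w32)
fp16_to_fp32_is_exact = (w16_up == w16)

print("FP32 value:        ", repr(w32))
print("after FP16 round:  ", repr(w16))
print("upcast back to 32: ", repr(w16_up))
```

The upcast value matches the FP16 value exactly, which is why an FP16 checkpoint saved as FP32 only gets bigger, while a checkpoint trained in FP32 does lose a small amount of information when saved as FP16.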

@GreyModeler This is correct so far as I know to date

The SDXL Base 1.0 model is available in several versions:

FP16 Safetensors,

FP16 Diffusers,

FP32 Diffusers (Original),

FP32 ONNX,

FP32 OpenVINO.

SDXL is not an FP16 model; it is converted to FP16.

Only the Safetensors version lacks the raw FP32 weights.

But I agree about the FP16 version.

An FP16 version should be released alongside the FP32 one, since the FP32 weights will get converted to FP16 anyway.

@Disty0 Thanks for the clarification there -- I only had the original Diffusers release, so I didn't even know there was an FP16 release. That said, my training workflow is all switched to FP16 now, so v0.3 will be trained and released in FP16 exclusively. Also yes, I really should add an FP16 version for v0.2; I've just been preoccupied with training woes.

incorrect statement

@GreyModeler I didn't understand, so do we train in 32-bit or 16-bit?

@MrNoir That gets even more complicated -- While v0.1 and v0.2 are full FP32, v0.3 and onward are different. For the UNet I'm maintaining FP16 weights; for the text encoders (which I am actively training, unlike, to my knowledge, most others training at this time [with good reason, they're a pain]) I'm using FP32 weights (I may still pull this down to FP16 in future). That said, model weight precision and training precision are separate choices, and each has several data types to choose from, all with their own tradeoffs. There's no one right answer, but frankly, from my testing, if you use FP16 or BF16 you're going to be fine: you don't generally need the precision of FP32, and I am not seeing its value versus the overhead in time and VRAM required to use it. BF16, while possibly better, is problematic with some optimizers.
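As a rough illustration of the FP16 versus BF16 tradeoff mentioned above, the sketch below uses only Python's standard library. It is an assumption-laden toy, not how any trainer handles these types: `to_bf16` is a hypothetical helper that simulates BF16 by rounding an FP32 bit pattern to its top 16 bits, and the two sample values are chosen only to show the effect. FP16 has more mantissa bits (finer steps), while BF16 keeps the FP32 exponent range (very small values survive).

```python
import struct

def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_bf16(x: float) -> float:
    """Simulate BF16 for finite values: keep the top 16 bits of the FP32
    pattern, rounding to nearest even on the 16 bits that are dropped."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits += 0x7FFF + ((bits >> 16) & 1)   # round to nearest, ties to even
    bits &= 0xFFFF0000                    # drop the low 16 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]

tiny = 1e-8    # hypothetical very small gradient-like value
near_one = 1.001  # hypothetical value just above 1.0

# Range: FP16 cannot represent values this small and flushes them to zero,
# while BF16 shares the FP32 exponent range and keeps a nonzero value.
tiny_fp16 = to_fp16(tiny)
tiny_bf16 = to_bf16(tiny)

# Precision: FP16 has 10 stored mantissa bits and keeps the small offset,
# while BF16 has 7 and rounds it back to exactly 1.0.
near_one_fp16 = to_fp16(near_one)
near_one_bf16 = to_bf16(near_one)

print("1e-8  -> FP16:", tiny_fp16, " BF16:", tiny_bf16)
print("1.001 -> FP16:", near_one_fp16, " BF16:", near_one_bf16)
```

Neither format wins on both counts, which fits the "no one right answer" point: FP16 is finer near typical weight magnitudes, while BF16 is more forgiving of very small or very large values.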