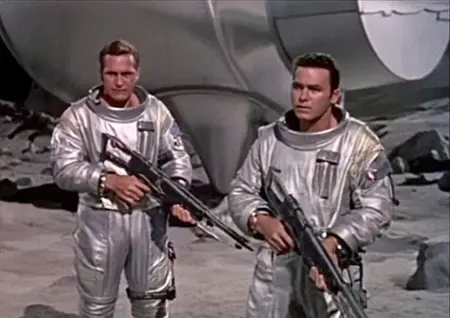

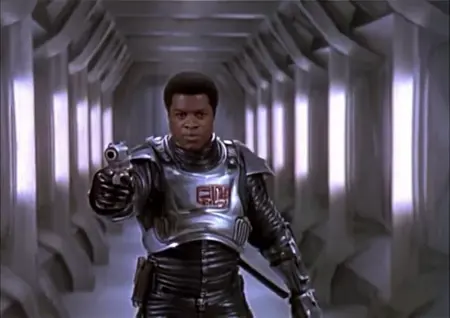

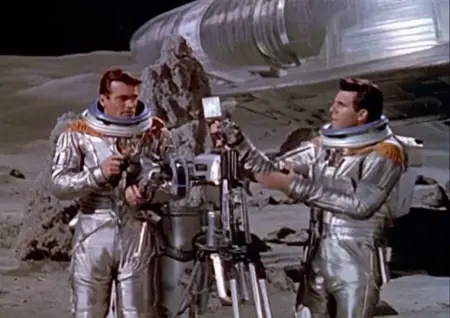

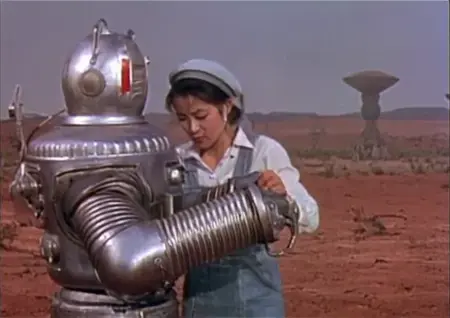

A lora to celebrate the science fiction movies and shows of the 1950s. It's fairly narrowly trained, doing well with space suits, bulky robots and moon-like landscapes.

All images/videos in showcase contain ComfyUI workflows.

Hunyuan 1.5:

First try. All examples done without lightx, 20 steps.

Wan 2.2:

The Low noise lora has by far the most effect on style. High noise still affects the outcome, but to a much lesser degree.

For the Wan showcase, I use 25 steps (15/10), 0.8 strength, and the dpm++_sde scheduler in ComfyUI. I haven't had a lot of time for testing, but it may lose strength as the frame count goes up.
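
As a rough illustration of the 15/10 split, here is a minimal sketch that computes the step ranges for two sampling passes. It assumes 15/10 means 15 High noise steps followed by 10 Low noise steps, which isn't spelled out above. `split_steps` and the dictionary keys are hypothetical names, not part of ComfyUI; the ranges are only meant to resemble the start/end step values you would enter on two advanced sampler nodes.

```python
def split_steps(total_steps, high_noise_steps):
    """Return (start, end) step ranges for a high-noise pass and a low-noise pass.

    Assumption: the high-noise model runs first, the low-noise model finishes.
    """
    if not 0 <= high_noise_steps <= total_steps:
        raise ValueError("high_noise_steps must be between 0 and total_steps")
    return {
        "high_noise": (0, high_noise_steps),           # first pass
        "low_noise": (high_noise_steps, total_steps),  # second pass
    }


LORA_STRENGTH = 0.8  # strength value mentioned for the showcase

ranges = split_steps(total_steps=25, high_noise_steps=15)
print(ranges, LORA_STRENGTH)
```

Under that assumption the High noise pass covers steps 0 to 15 and the Low noise pass covers steps 15 to 25.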

I use Q4.gguf for High noise, and Q6 for Low noise, for no particular reason. No block swapping.

Wan lora trained with diffusion-pipe.

Comments (12)

A little more, and they'd pass as real. Excellent!

Great Lora! Does it work with the official I2V? Does Finetrainers have lora conversion to the ComfyUI format? Thanks.

If it's anything like LTX, the lora works with I2V. It just isn't trained specifically to be conditioned by the initial image. So working, but not optimally, is my guess.

@neph1 Does Finetrainers generate loras in ComfyUI format?

@midiaplaay Sadly, no. I'm using my own fork here: https://github.com/comfyanonymous/ComfyUI/pull/6174

But there is a script that supposedly makes them compatible here: https://github.com/a-r-r-o-w/finetrainers/blob/main/examples/formats/hunyuan_video/convert_to_original_format.py

(I haven't tried it myself)

I finally got the i2v pipeline working and no, the lora sadly does not work well. I did not take into consideration that for ltx it's the same model, whereas this is a (supposedly) retrained model.

This looks amazing! Will you make a WAN version? Thanks!

I probably will if and when I transition to Wan. Should I? Is it better than Hunyuan Video?

@neph1 Yeah, better is a strong word. It is better sometimes. It also has a much more robust I2V model catalog and is generally better at complex motion and at following prompts. It is a much slower model, of course, because it has way more data in it, but generally WAN seems to beat Hunyuan on most occasions! The only negative is that inference takes longer and it outputs 16 FPS instead of the 24 you get with Hunyuan. A simple interpolation after the render usually fixes that nicely, though! :) You can train with the same repo you used here, so it can't hurt to try? :D

@olivetty Of course not. What hurts me is usually setting models up and making them work. And then of course learning how to use them properly..

@olivetty I see 16fps as a positive. While Wan is slower to render each frame, a lower frame rate means we can interpolate to a smooth 32fps without much loss. I think video models should be built around frame interpolation. In fact, I think models should let you draft at a lower frame rate, then fill in the blank frames between the frames with the same video model. Draft, then final. I think standardizing at a higher frame rate is a mistake in this way.

FWIW, it's here.