Check out our website to see what we’ve been working on and explore our latest model!

Check out our website to see what we’ve been working on and explore our latest model!

→ https://www.illustrious-xl.ai/

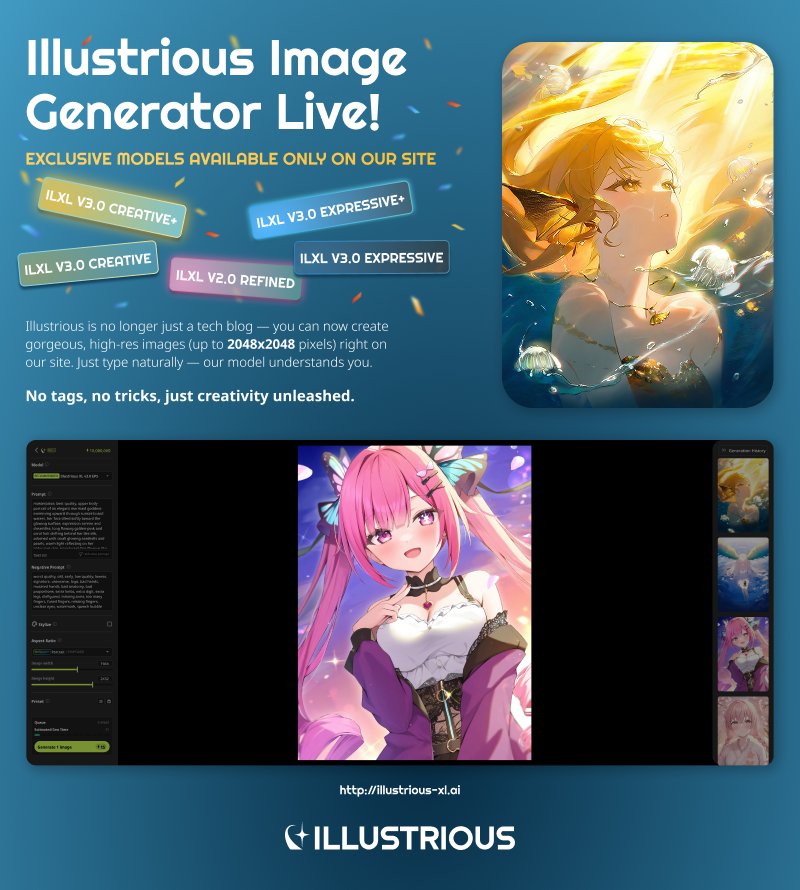

We’re excited to share that you can now generate images directly with our Illustrious XL models on our official site: [illustrious-xl.ai]. We’ve launched a full image generation platform featuring high-res outputs, natural language prompting, and custom presets — plus, several exclusive models you won’t find on any other hub. Explore our updated model tiers and naming here: [Model Series]. Need help getting started? Check out our generation user guide: [ILXL Image Generation User Guide].

Before we dive into the 2.0 model description, we owe you all an apology for the radio silence. We weren’t slacking off - we were cooking up something big. Over the past few weeks, we’ve been not only refining Illustrious but also building our very own site. Now, we have a home where you can get the latest insights on Illustrious, our training techniques, updates, and more.

From now on, all updates will be posted directly on our site!

For the v2.0 model’s technical details, check out: Illustrious XL Tech Blog

Stay in the loop with our latest releases and updates at: illustrious-xl.ai

Oh, and one more thing - we’re gearing up to showcase our models’ high-res capabilities and enhanced prompt applications soon, so keep an eye out in Q2 2025!

Illustrious XL 2.0: Increased robustness for better fine-tuning capabilities

In this version, the dataset has increased drastically, including major focus on animations & natural language focus – with updated dataset, until 2024-08. The model has performed best finetuning stability in our internal tests – with highest TrueSkill rating(among v1-v2) in external demo evaluation.

We want to note that we consider the compatibility & finetuning capability the most important feature of our baseline model. The model itself is not trained with aesthetic set / and we tried our best to debias the potential biases in model, for better overall performance.

Thus, we release ‘untuned’ base version, which should work as better merging / training bases. With release of the models, we also showcase example LoRA adapters, which is widely compatible across versions with recent character knowledges.

Natural Language Handling

The model itself is highly compatible with natural language sentences – it is far more

robust, it is less likely to generate multiple views or nonsense outputs.Generate a highly detailed anime-style illustration of a young man floating serenely

above a sprawling, futuristic cityscape. The boy has dark, messy hair and piercing blue

eyes. He's wearing a long, flowing white coat over dark, streamlined clothing – think a

mix of traditional Japanese garments and futuristic techwear. His expression is calm

and confident, almost detached. He is surrounded by a faint, glowing aura of light,

possibly blue or white.

Below him is a vast sci-fi city, filled with towering skyscrapers, holographic advertisements, and flying vehicles. The city should have a vibrant color palette – neon blues, purples, and pinks contrasting with darker metallic structures. There should be a sense of depth and scale, with buildings receding into the distance. The overall atmosphere should be epic and awe-inspiring, suggesting a powerful and mysterious character overlooking a technologically advanced world. Focus on dynamic lighting and detailed textures to create a visually stunning image. persona 3, masterpiece

High & Low Resolution supports

V2.0 supports 512~1536 resolutions. It is trained max 1:10 (or, 10:1) aspect ratio, with width / height multiples of 32.analogous colors, scenery, forest, masterpiece, black theme, dark, no lighting, night,

black background

Below is the 2x2 grid generation in 512x512 resolution:

1girl, solo, natsuiro matsuri, selfie, saturated, masterpiece, jirai kei, naughty smile,

dark, very dark, black theme, masterpiece, black, high contrast, sketch, monochrome

Negative prompt: worst quality, bad quality

Steps: 28, Sampler: DPM++ 2S Ancestral CFG++, Schedule type: Normal, CFG scale:

2.5, Seed: 1934035447, Size: 1824x1248 (NOTE CFG scale should be 6.5 in recent

implementations)

The models are capable for extreme high resolution generation more stably, with

higher denoising strength, which is important for stable detail generations. Below is an

example with 8MP img2img process:

We are also analyzing how to tweak the sampler schedule for better detail generations

– we will try our best to showcase the results.

Finally, we note the definite future plan for the model releases. We have decided to make model release sequentially, in three stage manner:

A. Exclusive

B. Early access

C. Open source

We will also continue to train & release the future model series, including DiT based

models.

Safety Guidelines

The model can be controlled by optional “rating” levels, described as explicit / questionable / sensitive / general

The alternative tokens, such as “adult content”, “r-18”, “nsfw”, “explicit material”, “sfw”, “safe for work” works for moderation.

Generative models can sometimes result biased, unintended outcomes. Users are required to generate and control the materials in responsible manner.

If you appreciate Illustrious’s evolution and are anticipating upcoming model releases, rlease consider leaving a like or comment. Your feedback is invaluable, and we deeply

appreciate your continued interest and support. Thanks!

Description

FAQ

Comments (40)

Seems like a good base model. I have trouble generating at 1536 x 1536 and getting coherent images though. Also have trouble not getting censor bars, but I imagine a good fine-tune can easily fix that.

I'm sure someone is going to come along and say "What did you expect for $500,000?" so I might as well slide in here now since I happen to be around. 😂

This resolution is way beyond the SDXL standard and it is quite common for it to not work properly. SDXL is still used entirely as a training base, and it was designed to maintain consistency at 1024px² or at resolutions very close to 1024px² (768 + 1280 = 1024px²).

Going a little over 1024px² won't be a problem, since this is the training of the entire SDXL 1.0 architecture, but 1536px² is over 50% of the model's base training, so it is completely expected that things will start to go weird.

For example, SDXL's base training makes it analyze 1024px² looking for items that are in the prompt. So if I type something like "1girl, solo, smile, 2 arms", it will look for these items within a radius of 1024px². Not that this is the limit of SDXL, but the base was trained this way. Now let's suppose that I increase the resolution by 150%, resulting in 1536px². When the image is constructed, the model will continue to look for items within a radius of 1024px² because it was trained this way. Let's suppose that the generated image shows a woman on the left side of the image, so when U-Net tries to cover the attention area, it will read the first 1024px², where there is everything that was requested in the prompt. However, when it continues execution, it will reach the right side of the image, where it will pay attention to just one more area of 1024px², leaving out 512px². Let's suppose that in these 512px² that are outside the attention block, there is the woman's left arm, then the model will understand that 1 arm is missing, and then it will add another arm to her, or it will simply reconstruct the woman a little further to the right so that the attention block can see one with two arms, but without changing the left side of the image where the 512px² that were left aside are. In the next loop, the same thing will happen, but it will only see one arm without a woman, so it will reconstruct her, and then when it goes to the other side, the same thing will happen, until the result is a completely deformed woman or two women with 2 arms.

The only way to solve this problem is to have a base training with high resolution, which happens in the most recent versions of SD 3.5, but is almost unfeasible for SDXL due to the amount of resources that the community has already invested.

Training Illustrious with more high-resolution images will not solve the problem, it will only reduce it a little. The solution would be to abandon SDXL and start training on the newer SD3.5 or Flux models, but the costs are too high for the community and it would take a long time to produce high-resolution images with the same accuracy that SDXL can produce at lower resolutions.

@AishaAI Everything you said it true and well articulated. I made my comment because the model's description says " V2.0 supports 512~1536 resolutions " , which (unless I misunderstood it) seems to suggest that 1536x1536 generation should be possible. In addition to this, the model can sometimes generate above 1024 Ok. I've done some test generations at 1596 x 1248 and they come out just fine.

@Shed_The_Skin I think they actually did some training at 1536px², but like I said, that would only help a little since the base SDXL was made entirely at 1024px²

There are multiple stables? Which one is actually stable? 🤣

Both of these files are the exact same. The huggingface one is also the exact same just with a different name... Please fix this hiccup

which one is better to make lora? 2.0 vs 2.0-stable? what's different?

It is better that you train a lora on the base Illustrious 0.1 model first. They say that further versions are lora compatible from those train on illustrious 0.1

令人悲伤的是,tag污染仍然存在,例如:

onineko-chan污染onineko

kedama污染kedama milk

long hair污染long hair between eyes

(去官网试了一下,就连3.0也没有改善,而且所有训练时用到IL的模型都不同程度中枪了,例如所有noob系模型)

这看起来并不像是一个易于解决的问题,例如“long hair污染long hair between eyes”的例子:我在Danbooru上检索了一下,在现有的帖子中有“long hair between eyes”属性并同时有着非“long hair”属性的单一角色数量(大部分帖子中存在多个角色分离两者)可能不多于10页,在现有的5.8k“long hair between eyes”tag的帖子中,实在有些少得可怜,作为训练数据集应该是不怎么够的。

个人认为在现在的Danbooru标签训练中应该没有训练让模型学习过Danbooru的tagwiki内容,换言之,AI大概是通过其自然语言处理能力来部分地理解tag词语的含义,然后将tag当作一种新的“语言”来进行理解,而非通过理解tag本身的含义来进行学习,要打个比方的话,ai对tag的理解大概相当于所谓的“散装英语”或者类似于日本的“偽中国語”?

如果能完全使用tag作为“语言”来训练模型/让模型学习tagwiki——抛弃AI的自然语言处理能力/进行优质的自然语言概念翻译,应当能解决这一问题——或者建立一个不会混淆的tag系统(类似于伪代码/元语言)用于训练,然后建立tag到tag的准确翻译——看起来无论哪种都需要不低的训练成本。

我就没见过不产生污染现象的模型,各种颜色,打个环境有XX鱼,贝壳,然后跑到人物头饰上。可能用#分隔会有点作用。

OK but it's a bit confusing. Are we supposed to be getting 2.0 and 2.0 stable? Your stardust bar on your site for milestones stated we'd get 2.0, where is it? Or is this 2.0 but labeled stable version?? Honestly the 2.0 vs stable 2.0 comparisons you showcase, 2.0 looks better. more defined lines. The lines are faded in the 'stable' version

so users pay for v2 and we get refined/stable? where is base?

base looks bad, if you to check you can download from here https://www.seaart.ai/models/detail/cvbp2qle878c7387sppg

I felt like 2.0 (the non-"stable" one) performed better.

You should give it a try.

Ah, NOW they have their 2.0 able to be downloadable on civit, finally. On their official 2.0 page.. I don't know if it's the same as on seaart or another stable version. I'll have to see later

@Light7799 its the same, I have checked the hash.

I'm bit confused, I see 2 v2.0-stable available, is there any difference?

They are both the same thing but a simple mistake while uploading during CIvitAi Maintenance, Blackout, Server issue and so on.... its not uncommon. 👍

@Kodokuna Ahhh, I see. Thanks for the explanation ❤️

This one is fine-tuned to improve stability. The other one is not fine-tuned for stability.

So, if you do LoRA training, you need to do it on the other one and run the LoRA on this one, as you will lose the stability fine-tune if you train it on this one. They have a blog about it on their website: https://www.illustrious-xl.ai/blog/7

whats the difference between this and the not stable version you can download?

if i want to train a lora should i train it on this or the not stable version?

I did some tests and this one is better, because it's literally more stable lol (visually)

Well, not just visually, actually

@Chris198 i've read their site and they do too have 2 versions of 2.0 there, this one is better at generating itself and according to them the other one is better at creating loras, making them more compatible with illustrious fine tunes.

Though i don't know for sure why you'd use illustrious 2.0 stable base instead of a fine tune

@YangDenn You're right, I was just trying this model because people were talking a lot about it and I wanted to know if it was because it had better composition or newer insights, but I didn't notice anything better than models like "WAI"

@Chris198 it has better resolution 1536x1536

нихуя не понятно. Чем базовая версия 2.0 отличается от стабильной версии 2.0?

Стилем. В стабильной версии есть относительно выраженный и повторяющийся стиль.

Why it is not available for creation on website?

为什么我现在下载不了了

What is the difference between 2.0 and 2.0-stable?

I think its more stable

Things are far behind compared to NovelAI’s checkpoints. Please, we really need an upgrade and improved checkpoints that can handle words in quotation marks.

can you add the unpruned full size base model for better lora training on civitai?

Details

Files

illustriousXLV20_v20Stable.safetensors

Mirrors

Illustrious-XL-v2.0.safetensors

illustriousXLV20_v20Stable.safetensors

illustriousXLV20_v20Stable.safetensors

TOC.safetensors

Illustrious-XL-v2.0.safetensors

Illustrious-XL-v2.0.safetensors

illustriousXLV20_v20Stable.safetensors

Illustrious-XL-v2.0.safetensors

Illustrious-XL-v2.0.safetensors

Illustrious-XL-v2.0.safetensors

Illustrious-XL-v2.0.safetensors

Illustrious-XL-v2.0.safetensors

Illustrious-XL-v2.0-stable.safetensors