🌀 Abstract Surrealism: A Fusion of FFusion.AI & DALL·E 3 Creativity 🎨

Diving deep into the intricate world of abstract surrealism, we've merged the visionary prowess of FFusion.AI with the distinct style of DALL·E 3. This artistic experimentation is bound to push boundaries and redefine perceptions.

Hardware Setup:

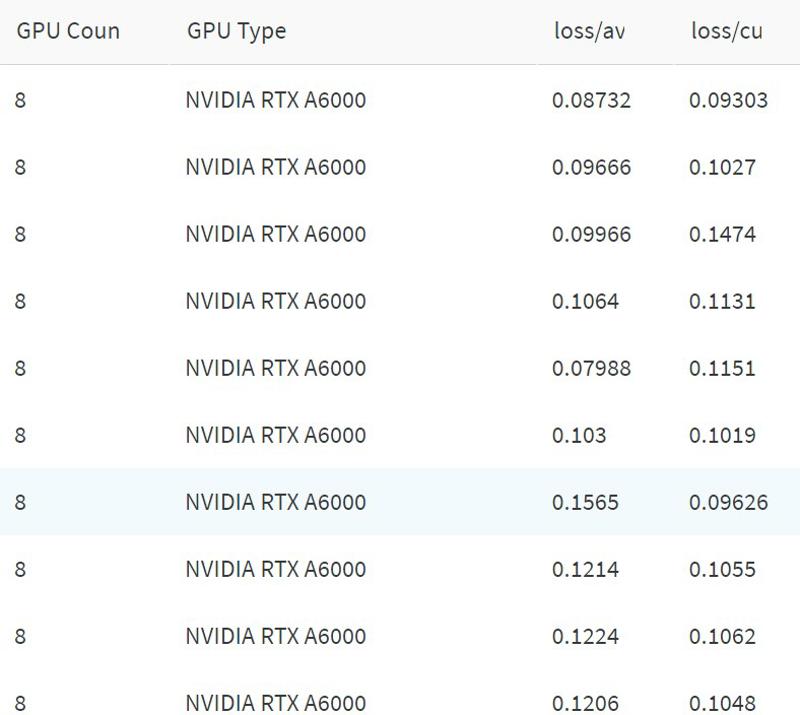

GPU Configuration:

8x A6000 cards

LoRAs:

LoHA Basic:

Alpha: 1.0

Dim: 32

Conv Dim: 32

Conv Alpha: 1

Use CP: False

Algo: loha
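The page does not say which trainer produced these settings. Assuming a kohya-ss sd-scripts style launch with the LyCORIS network module, the LoHA Basic values might be passed roughly as sketched below. The helper `build_network_args` is hypothetical, and mapping "Conv Dim", "Conv Alpha" and "Use CP" to `conv_dim`, `conv_alpha` and `use_cp` is an assumption based on LyCORIS naming, not something the authors confirm.

```python
def build_network_args(dim, alpha, **extra):
    """Build kohya-style CLI arguments for a LyCORIS network.

    Hypothetical helper: the flag layout is an assumption about how
    the settings listed on this page were supplied to the trainer.
    """
    args = [
        "--network_module", "lycoris.kohya",
        "--network_dim", str(dim),
        "--network_alpha", str(alpha),
        "--network_args",
    ]
    # Remaining settings are passed as key=value pairs after --network_args.
    for key, value in extra.items():
        args.append(f"{key}={value}")
    return args


# Values copied from the LoHA Basic entry above.
loha_basic_args = build_network_args(
    dim=32,
    alpha=1.0,
    conv_dim=32,
    conv_alpha=1,
    use_cp=False,
    algo="loha",
)

print(" ".join(loha_basic_args))
```

The same helper could be reused for the other variants by changing only the values, which keeps the differences between runs easy to compare.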

Lite Weight LoRA

Highlight: Elevated network_alpha for sharper insights.

Heavy LoRA

Dim: 128

Lora FA

A classic integration, always evolving.

Locon with Experimental VIT Prompts

Exploring uncharted waters with VIT-based prompts.

Locon - High Alpha Version

An enhanced alpha ensures richer details and contrasts.

LoHA FULL Algorithm:

Alpha: 0.5

Dim: 64

Preset: full

Algo: loha

Dropout: 0.0

Authored by: FFusionAI
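As a rough illustration of how the Alpha and Dim values listed above interact, the sketch below assumes the common LoRA convention in which the learned update is scaled by alpha / dim, and the LoHA layout with two pairs of low-rank factors per linear layer. The 768 x 768 layer size is a made-up example, not a value taken from these models.

```python
def update_scale(alpha: float, dim: int) -> float:
    """Scale applied to the learned update under the alpha / dim convention (assumed)."""
    return alpha / dim


def loha_linear_params(in_features: int, out_features: int, dim: int) -> int:
    """Trainable parameters for one linear layer under LoHA.

    LoHA builds the update as the Hadamard product of two low-rank
    products, so it stores two pairs of factors of rank `dim`.
    """
    return 2 * dim * (in_features + out_features)


# (alpha, dim) pairs copied from the entries on this page.
variants = {"LoHA Basic": (1.0, 32), "LoHA FULL": (0.5, 64)}

# Hypothetical layer size, chosen only for illustration.
IN_FEATURES, OUT_FEATURES = 768, 768

for name, (alpha, dim) in variants.items():
    scale = update_scale(alpha, dim)
    params = loha_linear_params(IN_FEATURES, OUT_FEATURES, dim)
    print(f"{name}: scale={scale}, params per layer={params}")
```

Under these assumptions, doubling Dim doubles the per-layer parameter count, while a lower Alpha at a higher Dim shrinks the effective scale of the update.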

🌐 FFusion.ai Contact Information

Proudly maintained by Source Code Bulgaria Ltd & Black Swan Technologies.

📧 For collaborations, inquiries, or support: [email protected]

🌍 Locations: Sofia | Istanbul | London

Comments (2)

So the dataset consists of DALL·E 3 generated images? I am quite confused by the description. Is the card screenshot your cluster? 10 servers with 8 GPUs each? Can you share the GPU resources used when training this?

We generated our own images with DALL·E 3 and used them to train the UNet. The text encoder was trained on prompts entirely different from the ones used to generate the DALL·E images.

Since the new LyCORIS algorithms arrived, we have been training with preset=full instead of preset=attn-mlp (https://github.com/KohakuBlueleaf/LyCORIS/blob/main/docs/Preset.md) on algo lora / dylora / loha, and also with algo=full, which yields a 6 GB LoRA.

The screenshots are from our wandb runs, like this one: https://wandb.ai/idle-bg/network_train/runs/w6m2uqeu/

We need some time to link the runs for these sets, though, as we have more than 700 of them :)