bigASP 🧪 v2.5

⚠️This is not a normal SDXL model and will not work by default.⚠️

A highly experimental model trained on over 13 MILLION images for 150 million training samples. It is based roughly on the SDXL architecture, but with Flow Matching for improved quality and dynamic range.

⚠️⚠️⚠️ WARNING ⚠️⚠️⚠️

You are entering THE ASP LAB

bigASP v2.5 is purely an experimental model, not meant for general use.

It is inherently difficult to use.

If you wish to persist in your quest to use this model, follow the usage guide below.

Usage

Currently, this model only works in ComfyUI. An example workflow is embedded in the image above, which you should be able to drop into your ComfyUI workspace to load. If that does not work for some reason, you can build a workflow manually:

Start with a basic SDXL workflow, but add a ModelSamplingSD3 node onto the model. i.e.

Load Checkpoint -> ModelSamplingSD3 -> KSampler

Everything else is the usual SDXL workflow: two CLIP Text Encode nodes (one for the positive prompt and one for the negative), an Empty Latent Image, a VAE Decode after the sampler, etc.
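For anyone driving ComfyUI through its API instead of the graph editor, the node chain above can be sketched as an API-format workflow dictionary. This is only a sketch under assumptions, not the workflow shipped with the model: the node class names and input names are assumed to match stock ComfyUI, the checkpoint filename and prompt are placeholders, and build_workflow with its defaults is a hypothetical helper.

```python
import json


def build_workflow(ckpt_name, positive, negative="low quality",
                   width=832, height=1216, shift=1.0, steps=40, cfg=4.5,
                   sampler_name="euler", scheduler="beta", seed=0,
                   pag_scale=None):
    """Build an API-format graph: Load Checkpoint -> ModelSamplingSD3 -> KSampler.

    Hypothetical helper. Links are [source_node_id, output_index].
    """
    wf = {
        # Outputs of the loader: 0 = MODEL, 1 = CLIP, 2 = VAE.
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        # The extra node this model needs compared to plain SDXL.
        "2": {"class_type": "ModelSamplingSD3",
              "inputs": {"model": ["1", 0], "shift": shift}},
        "4": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["1", 1], "text": positive}},
        "5": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["1", 1], "text": negative}},
        "6": {"class_type": "EmptyLatentImage",
              "inputs": {"width": width, "height": height, "batch_size": 1}},
    }
    model_link = ["2", 0]
    if pag_scale is not None:
        # Optional: PAG sits between ModelSamplingSD3 and KSampler.
        wf["3"] = {"class_type": "PerturbedAttentionGuidance",
                   "inputs": {"model": ["2", 0], "scale": pag_scale}}
        model_link = ["3", 0]
    wf["7"] = {"class_type": "KSampler",
               "inputs": {"model": model_link, "seed": seed, "steps": steps,
                          "cfg": cfg, "sampler_name": sampler_name,
                          "scheduler": scheduler, "positive": ["4", 0],
                          "negative": ["5", 0], "latent_image": ["6", 0],
                          "denoise": 1.0}}
    wf["8"] = {"class_type": "VAEDecode",
               "inputs": {"samples": ["7", 0], "vae": ["1", 2]}}
    wf["9"] = {"class_type": "SaveImage",
               "inputs": {"images": ["8", 0], "filename_prefix": "bigasp"}}
    return wf


if __name__ == "__main__":
    # Placeholder checkpoint name and prompt.
    workflow = build_workflow("bigaspv25.safetensors",
                              "A high quality photograph of a lighthouse at dusk")
    print(json.dumps(workflow, indent=2))
```

The printed JSON should be usable as the "prompt" payload for a local ComfyUI server, assuming the checkpoint filename matches a file in your checkpoints folder.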

Resolution

Supported resolutions are listed below, sorted roughly by best to worst supported. Any resolutions below or above these are very unlikely to work.

832x1216

1216x832

832x1152

1152x896

896x1152

1344x768

768x1344

1024x1024

1152x832

1280x768

768x1280

896x1088

1344x704

704x1344

704x1472

960x1088

1088x896

1472x704

960x1024

1088x960

1536x640

1024x960

704x1408

1408x704

1600x640

1728x576

1664x576

640x1536

640x1600

576x1664

576x1728

Sampler Config

First, unlike normal SDXL generation, you now have another parameter: shift.

Shift is a parameter on the ModelSamplingSD3 node, and it bends the noise schedule. Set to 1.0, it does nothing. When set higher than 1.0 it makes the sampler spend more time in the high noise part of the schedule. That makes the sampler spend more effort on the structure of the image and less on the details.
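To see what "bending" means in numbers, here is a small sketch. It assumes the time-shift formula commonly used for SD3-style flow models, sigma' = shift * sigma / (1 + (shift - 1) * sigma), and a plain linear schedule from 1 down to 0. Both are assumptions about what the node does internally, and the function names are made up for illustration.

```python
def shift_sigma(sigma: float, shift: float) -> float:
    """Apply the assumed SD3-style time shift to one noise level in [0, 1]."""
    return shift * sigma / (1.0 + (shift - 1.0) * sigma)


def shifted_schedule(steps: int, shift: float) -> list:
    """Linear schedule from 1.0 (pure noise) to 0.0 (clean), then shifted."""
    return [shift_sigma(1.0 - i / steps, shift) for i in range(steps + 1)]


def high_noise_points(steps: int, shift: float, threshold: float = 0.5) -> int:
    """Count schedule points that sit above the given noise level."""
    return sum(1 for s in shifted_schedule(steps, shift) if s > threshold)


if __name__ == "__main__":
    for shift in (1.0, 3.0, 6.0):
        sigmas = shifted_schedule(10, shift)
        print(shift, [round(s, 2) for s in sigmas],
              "points above 0.5:", high_noise_points(10, shift))
```

With shift at 1.0 the schedule is unchanged. As shift grows, more of the schedule points sit above the halfway noise level, which matches the description of the sampler spending more of its steps on structure.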

This model is very sensitive to sampler and scheduler, and it benefits greatly from spending at least a little extra time on the high noise part of the schedule. This is unlike normal SDXL, where most schedules are designed to spend less time there.

I have, so far, found the following setups to work best for me. You should tweak and experiment on your own, but expect many failures.

Shift=1, Sampler=Euler, Schedule=Beta

Shift=6, Sampler=Euler, Schedule=Normal

Shift=3, Sampler=Euler, Schedule=Normal

I have not had much success with samplers other than Euler. UniPC does work, but generally did not perform as well. Most of the others fail or were worse. But my testing is very limited so far. It's possible the other samplers could work, but are misinterpreting this model (since it's a freak).

Beta schedule is the best general purpose option and doesn't need the shift parameter tweaked. Beta schedule forms an "S" in the noise schedule, with the first half spending more time than usual in high noise, and the latter half spending more time in low noise. This provides a good balance between the quality of image structure and the quality of details.

Normal schedule generally requires shift to be greater than 1, with values between 3 and 6 working best. I found no benefit from going higher than 6. This setup results in the sampler spending most of its time on the image structure, which means image details can suffer.

Which setup you use can vary depending on your preferences and the specific generation you're going for. When I say "image structure quality" I mean things like ensuring the overall shape of objects is correct, and placement of objects is correct. When image structure is not properly formed, you'll tend to see lots of mangled messes, extra limbs, etc. If you're doing close-ups, structure is less important and you should be able to tweak your setup so it spends more time on details. If you're medium shot or further out, structure becomes increasingly important.

CFG and PAG

In my very limited testing I've found values for CFG between 3.0 and 6.0 to work best. As always, CFG is trading off between quality and diversity, so lower CFGs produce a greater variety of images but lower quality, and vice versa. Though the quality at 2.0 and below tends to be so low as to be unusable.

I highly recommend using a PerturbedAttentionGuidance node, which should be placed after ModelSamplingSD3 and before KSampler. This has a scale parameter which you can adjust. I tend to keep it hovering around 2.0. When using PAG, you'll usually want to decrease CFG. When I have PAG enabled I'll keep my CFG between 2.0 and 5.0.

The exact values for CFG and PAG can vary depending on personal preference and what you're trying to generate. If you're not overly familiar with them, set them to the middle of the recommended ranges and then adjust up and down to get a feel for how they behave in your setup.

PAG can help considerably with image quality and reliability. However, it can also tend to make images punchier and higher in contrast, which you may not want depending on what you're going for. Like many things it's a balancing act, and it can be disabled if it's overcooking your gens.
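The "start in the middle of the recommended ranges" advice above works out as follows. This is just arithmetic on the ranges quoted in this section; starting_guidance is a hypothetical helper, not part of any tool.

```python
# Ranges quoted in this section.
CFG_RANGE_NO_PAG = (3.0, 6.0)
CFG_RANGE_WITH_PAG = (2.0, 5.0)
PAG_SCALE = 2.0  # "around 2.0" per the text above


def midpoint(value_range):
    """Middle of a (low, high) range."""
    low, high = value_range
    return (low + high) / 2.0


def starting_guidance(use_pag: bool) -> dict:
    """Suggested starting values; adjust up and down from here."""
    if use_pag:
        return {"cfg": midpoint(CFG_RANGE_WITH_PAG), "pag_scale": PAG_SCALE}
    return {"cfg": midpoint(CFG_RANGE_NO_PAG), "pag_scale": None}


if __name__ == "__main__":
    print(starting_guidance(use_pag=False))
    print(starting_guidance(use_pag=True))
```

So a reasonable first try is CFG 4.5 without PAG, or CFG 3.5 with PAG at 2.0.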

Steps

I dunno, 28 - 50? I usually hover around 40, but I'm a weirdo.

Negative Prompt

So far the best negative I've found is simply "low quality". A blank negative works as well, as do more complicated negatives. But "low quality" alone provides a significant boost in generation quality, and other things like "deformed", "lowres", etc. didn't seem to help much for me.

Positive Prompt

I do not have many recommendations here, since I have not played with the model enough to know for sure how best to prompt it. At the very least you should know that the model was trained with the following quality keywords:

worst quality

low quality

normal quality

high quality

best quality

masterpiece quality

These were injected into the tag strings and captions during training. The model generally shouldn't care where in the prompt you put the quality keyword, but closer to the beginning will have the greatest effect. You do not need to include multiple quality keywords, just the one instance should be fine. I also haven't found the need to weight the keyword.

You do not have to include a quality keyword in your prompt, it is totally optional.

I do not recommend using "masterpiece quality" as it causes the model to tend toward producing illustrations/drawings instead of photos. I've found "high quality" to be sufficient for most uses, and I just start most of my prompts with "A high quality photograph of" blah blah.
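As a small illustration of the prompt pattern described above, here is a sketch that puts a single quality keyword at the start of a natural language prompt. The keyword list is the one given in this section; build_prompt and its arguments are hypothetical.

```python
from typing import Optional

# Quality keywords the model was trained with, per the list above.
QUALITY_KEYWORDS = (
    "worst quality", "low quality", "normal quality",
    "high quality", "best quality", "masterpiece quality",
)


def build_prompt(subject: str, quality: Optional[str] = "high quality",
                 medium: str = "photograph") -> str:
    """Compose 'A <quality> <medium> of <subject>' with one keyword up front.

    Pass quality=None to omit the keyword, since it is optional.
    """
    if quality is None:
        return f"A {medium} of {subject}"
    if quality not in QUALITY_KEYWORDS:
        raise ValueError(f"unknown quality keyword: {quality!r}")
    return f"A {quality} {medium} of {subject}"


if __name__ == "__main__":
    print(build_prompt("a lighthouse at dusk, waves breaking on the rocks"))
```

The keyword appears once and near the beginning, where it should have the greatest effect.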

The model was trained with a variety of captioning styles, thanks to JoyCaption Beta One, along with tag strings. This should, in theory, enable you to use any prompting style you like. However in my limited testing so far I've found natural language captions to perform best overall, occasionally with tag string puke thrown at the end to tweak things. Your favorite chatbot can help write these, or you can use my custom prompt enhancer/writer (https://huggingface.co/spaces/fancyfeast/llama-bigasp-prompt-enhancer).

If you're prompting for mature subjects, I'd advise trying the kind of neutral wording that chatbots like to use for various body parts and activities. The model should understand slang, but so far in my attempts it makes the gens a little worse.

The model was trained on a wide variety of images, so concept coverage should be fairly good, but not yet on the level of internet-wide models like Flux.

The Lab (What's Different About v2.5)

This model was trained as a side experiment to prepare myself for v3. It included a grab bag of weird things I wanted to try and learn from.

Compared to v2:

Captioning - The dataset was captioned using JoyCaption Beta One, instead of the earlier releases of JoyCaption.

More Data - From 6M images in v2 to 13M images.

Anime - A large chunk of anime/furry/etc type images were included in the dataset.

Flow Matching Objective - I took a crowbar to SDXL and then duct taped on flow matching.

More Training - From 40M training samples to 150M.

Frozen Text Encoders - Both text encoders were kept completely frozen.

So ... why?

The captioning was just because I had finished Beta One by the time I was doing data prep, so I figured I should swap over. My hope is that the increased performance and variety of Beta One would imbue this model with more flexibility in prompting. However, since the text encoders were frozen I'm not sure there will be any meaningful impact here.

More data is more gooder. Most importantly, I dumped a good chunk of images in that had pre-existing alt text, and were carefully balanced to have as wide a variety of concepts as possible. This was meant to help expand the variety of images and captioning styles the model sees during training.

The inclusion of anime was done for two reasons. One is that it'd be nice to have a unified model, rather than an ecosystem split between photoreal and non-photoreal. The big gorilla models (like GPT 4o) can certainly do both modalities equally, so it's at least possible. The second reason is that I want the photoreal half to inherit concepts from the anime/furry side. The latter tends to have a much larger range of content and concepts. Photoreal datasets are more limited by comparison, and that makes it difficult for models trained on them to be creative.

Flow Matching is the objective used by most modern models, like Flux. It comes with the benefit of higher quality generations, but also a fixed noise schedule. SDXL's noise schedule is broken, which results in a variety of issues but most pronounced is its worse structure generation. That's the main source of SDXL based models tending towards duplicated limbs, melted objects, and "little buddies." It also prevents SDXL from generating images with good dynamic range, or dark images, or light images. Switching to Flow Matching fixes all of that.
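To make the "fixed noise schedule" point concrete, here is a toy sketch of a rectified-flow style objective, the formulation used by SD3-family models. The exact variant used to train v2.5 is not stated here, so treat the formulas as an assumption; the function names are invented and plain lists stand in for latents.

```python
def flow_matching_pair(x0, noise, t):
    """Training pair at time t in [0, 1] (assumed rectified-flow form).

    The noisy input is a straight-line blend of data and noise, and the
    regression target is the constant velocity pointing from data to noise.
    """
    x_t = [(1.0 - t) * a + t * n for a, n in zip(x0, noise)]
    target_v = [n - a for a, n in zip(x0, noise)]
    return x_t, target_v


def euler_sample(velocity_fn, noise, sigmas):
    """Plain Euler integration from pure noise (sigma=1) down to sigma=0."""
    x = list(noise)
    for s, s_next in zip(sigmas, sigmas[1:]):
        v = velocity_fn(x, s)
        x = [xi + (s_next - s) * vi for xi, vi in zip(x, v)]
    return x


if __name__ == "__main__":
    x0 = [0.2, -0.5, 1.0]      # stand-in for a clean latent
    noise = [1.5, 0.3, -0.7]   # stand-in for Gaussian noise
    # At t=1 the input is exactly the noise, with no trace of the image.
    print(flow_matching_pair(x0, noise, 1.0)[0])
    # With a perfect velocity prediction, Euler steps recover the clean latent.
    oracle = lambda x, s: [n - a for a, n in zip(x0, noise)]
    sigmas = [1.0 - i / 10 for i in range(11)]
    print(euler_sample(oracle, noise, sigmas))
```

The useful property is the endpoint: at t=1 the model input is pure noise, so nothing about the image's overall brightness leaks through during training, which is consistent with the dynamic range improvements described above.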

More training is more bester. One of the biggest issues with v2 (and v1) is how often it produces failed gens. After much experimenting, I determined that this was down to two main things: SDXL's broken noise schedule and, more importantly, not enough training. Models like PonyXL were trained for much longer than v2. By increasing the training time from 40M samples to 150M samples, v2.5 is now in the ballpark of PonyXL's training.

As for the frozen text encoders, I didn't actually intend that to be a feature of this model. It was basically a result of me trying to deal with training instabilities.

What Worked and What Didn't

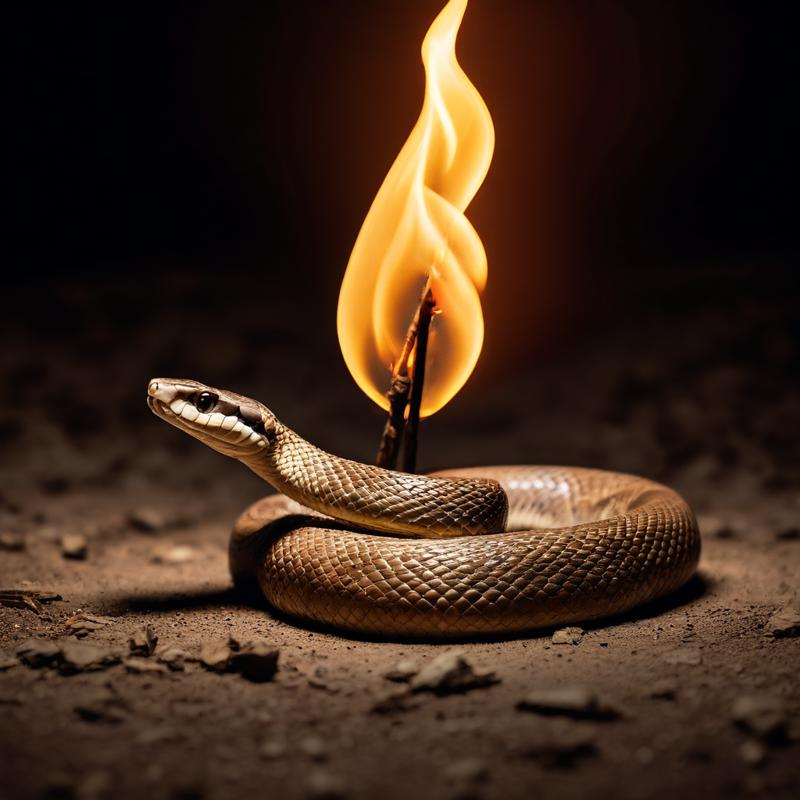

The biggest change was Flow Matching. People have transitioned SDXL over to other objectives before, like v-pred, but I don't think anyone has tried switching it to Flow Matching. Well ... it works. And I think this was a success. It's hard to know if Flow Matching helps SDXL's output quality, since results are conflated with more training, but it definitely helped the dynamic range of images, which I'm greatly enjoying. And, as noted above, the fixed noise schedule is likely a big part of why v2.5 has fewer mangled generations compared to v2.

More training almost certainly helped the model as well. Combined with Flow Matching, it has drastically lowered v2.5's failed gen rate.

More data and adding anime/etc data does seem to have expanded the model's concepts and creativity. I'm able to generate a much wider array of artsy and creative, yet still photorealistic, images.

However, v2.5 did not gain any real ability to actually generate non-photoreal content. My attempts to use it for anime-style gens have been wholly unsuccessful. Quite odd.

Freezing the text encoders is both a blessing and curse. By keeping the text encoders frozen, they retain all of their knowledge and robustness gained from the scale of their original training. That's quite useful and beneficial, especially for experiments like mine that do not have that kind of scale.

The downside is that without tuning them, prompt adherence suffers greatly. v2.5 suffers from lots of confusion like seeing "beads of sweat" and then generating a string of beads instead of sweat.

So it's a trade off. And with the text encoders frozen, v2.5 probably doesn't benefit much from JoyCaption Beta One's improvements.

Training LORAs and Merging

Frankly, I have no clue if this model can be merged. It was trained for a different objective than stock SDXL, so merging would be weird. Though apparently someone merged this model with DMD2 and it worked? So who knows.

As for using existing LORAs, the situation is going to be the same: probably not going to work but who knows for sure.

Training LORAs is similarly not going to work, due to the different training objective of this model.

I certainly don't like this, but this model was meant to be just an experiment so support for loras and such was not a priority. v3 will either be based off a model with existing tooling, or I'll release it with tooling if I somehow get forced into going down the custom architecture route again.

Support

If you want to help support dumb experiments like this, JoyCaption, and (hopefully) v3: https://ko-fi.com/fpgaminer

Comments (216)

:o

It must be my birthday today :-)

Will check it out the next days, thank you!

Works great, thank you!

Can you recommend some lighting like Taken with a DSLR Canon or something else.

I want to create natural images.

Never mind...created some with DeepSeek :-)

This is currently the best model for NSFW! My new No 1!

Can confirm DMD2 works. Using LCM sampler and Beta scheduler. 6 steps. 1.0 CFG. Pictures are coming out nice and clear. Thank you for your continued efforts in open source AI.

Agree that your settings work great. The only thing I would add is that I seem to get the best results by changing the PAG scale and model sampling shift to 0.5. Otherwise the images come out burnt for me.

GBRX I've been getting decent results with shift 1.5 and scale .15 as well. The combination of the prompt adherence in asp and the realism in gonzalomo is next level stuff. Thank you for your workflows too GBRX!

mattimus Oh interesting, I'll have to try those values. Thanks for the tip and thanks for using my workflow!

how do you import the model

Kitten123 It gets loaded just like any other SDXL checkpoint.

whats your dmd2 strength and shift

Kitten123 1.0 for both

If it's robust enough, it can be solidified using my encoder sampler, but if it's not it'll shatter under the weight.

Flow models are more often very robust than they are not, so this may be a very interesting outcome.

brabo demais (very good). A great model with great potential. Prompt adherence and style versatility are simply great in this one. Still a little wild, but nothing that can't be fixed with a few tries and a little persistence.

Your models are gold, thanks for sharing with us.

This is seriously impressive when using the correct settings, Bravo. I am generating images easily as good as, if not better than Flux quality. I will enjoy continued experimentation with it.

Super innovative as usual 💜

quick feedback from some various testing -

I see the logic for including non-photography in the dataset. There are concepts that work better there.

HOWEVER!

It has had an effect where I find it contaminates my generations and shifts them into cartoony hentai bullshit when I don't want it. Every so often I'd get one of those semi-real pony-esque renders with exaggerated proportions and terrible skin shading.

bigASP has been the leader in photoreal quality and I'd hate for it to be tainted with ai-slop Pony aesthetic garbage.

Prompting 'low quality' does help to push it back into photo territory but then obviously you're limited to more amateur content.

I'll keep testing. but I wanted to flag this issue early.

the more I test the more I encounter it. Yes it can do perfect photography but I keep seeing pony fakeness creep in. sometimes it's subtle with just the shitty skin shading and other times it's a full on linework cartoon.

I wonder if segregating the training photos by specific means would stop this? Would starting all captions with #anime# or #real# or something similar stop the concepts from getting muddied, similar to score tags that made a big difference in the previous asps and pony?

Thank you for the feedback. I agree, I noticed that as well. I'm thinking of doing a second pass finetune on top to train the text encoder, which might help.

For now I've found a few things that can help nudge it back:

- Use the word "photograph" instead of "photo"

- Add emphasis to the word photograph. For example: "A high quality (photograph:1.4) of"...

- Add "illustration, drawing" or something like that to the negatives.

- Adding camera related stuff like "dslr", the focal range, a camera model, etc. Old school SD1.5 type prompting ;)

But yes, just to be clear my goal is to keep pushing photoreal forward.

nutbutter my problem could just exist between keyboard and chair. A learning curve is fine as long as it's stable. I've found a couple of those nudge tricks already and they help for sure.

Interested to see where it eventually goes. Always love your full breakdowns.

I'd agree with this. BigASP V2 at its best was the most realistic SDXL model by a large margin, in my observations so far 2.5 isn't quite capable of the same things in its current state, there's a lot of obvious bleed-in of the non-photo data.

Have you ever found anything more to cut out the anime stuff?

Just WOW! I have never expected SDXL to produce such gorgeous results. It can generate absolutely natural-looking photos and cinematic shots with great compositions and wide color range. Well done, so far it looks like absolute SOTA among SDXL realistic models.

Btw, have you seen that someone adapted Gemma to his Illustrious-based model (WIP)? Maybe something similar could be done for v3?

Thank you. I hadn't seen that specific model, but I'll say that I'm not sure how much value adding these huge LLMs as text encoders is providing. Like HiDream1 with four encoders, one of which is 16GB. I think it's likely they add only marginal value. e.g. an extra 16GB model for 1% better prompt adherence. Not really worth it at the hobbyist scale. Probably. Thankfully it looks like Chroma is being trained without T5 so we'll see how its adherence turns out with only CLIP.

nutbutter here is that model: Rouwei-Gemma - g3-1b_27k | Other Checkpoint | Civitai

It is the 1B Gemma, so it is not that large. I don't think there are many improvements yet, though. Some also suggest picking t5gemma or qwen vl instead of gemma 3 1b.

Very Flux-like compositional and lighting capabilities when you increase the model shift above 1.5. Probably only 20% of the images are actually usable/refineable but the quality of the 20% is so good that it's absolutely worth doing the extra generations. Paired with an SDXL refiner, this model (experimental as it is) is an invaluable tool for me and does some things no other model really can.

"ai generated" in the negative can improve generation sometimes, making it less "slop". Maybe it is better to remove AI generated images from the dataset for next versions if possible? And it is not just about the style, they may contain significant artifacts reducing the model quality

very very useful tip , improves images vastly. Paired with DMD2 lora ,the generations are amazing

I know you said lora training prob wont work but if someone figures out a way lmk....

I just meant that no existing tooling would likely work, since none of it is expecting a flow matching SDXL :P It's certainly possible and honestly I'll probably whip together a quick script to do it soon.

The prompting is super sensitive and it feels like one wrong word and the image explodes into gibberish, I have never used anything like it, but it can be fun and artistic.

EDIT: Oh I think I had my SD3 model guidance values set too low (0.5), it is a bit more normal now.

So far my go-to settings are: shift=1.0, PAG=2.0, CFG=3.0, schedule=beta, sampler=euler. Should be very stable there.

This seemed to help realism without too much negative prompt impeding output. Please anyone else post suggestions.

Positive: a (real photograph:1.4) of a...

Negative: low quality, illustration, drawing, AI generated

You can also try adding a photo website to the positives like unsplash or pexels. Or throwing camera settings at it, e.g. "28 mm wide‑angle, Sony α7R IV, f/1.8, ISO 6400, 1/30 s"

The model is just awesome! I’ve been familiar with bigASP since its first version, and for me, it was a model that really shone with photorealistic images. I tried version 2.5 now, and I can confidently say it’s one of the best SDXL models out there. The way it follows prompts deserves a special shoutout. At some point, I forgot it was an SDXL model and started giving it FLUX-level prompts, and it handled them surprisingly well. Not without issues, of course. When it comes to anime or illustrations, the model’s quality takes a big hit and often generates mutants with the drawing quality of a beginner artist. I think I read on HF that this is a known issue, and the creator is still working on it. If that’s the case, I’d suggest adding something like artist styles or general styles (anime screenshot, bold lines, flat colors, etc.) to avoid the random drawing style. For photorealistic content, I have no complaints about the model. It’s a clear leap forward compared to previous versions, and I’m getting such good quality that I don’t even need to upscale. I recommend everyone at least give it a try, but don’t forget it’s still an experimental model.

Thank you for the kind words :) Yeah I'm not sure what's up with the anime half. It should have picked up general styles from the captioning on them. But to be fair I put approximately 0 effort into that half of the dataset since I was trying to just throw everything together quickly. I'll give it more love next time.

Mods will fix it, (or not). Blablalabla model working with comfy only.

Mergers, finetuners step forward.

Very interesting model. The advantages of flow matching are quite clear when it comes to the improved dynamic color range. However, there's something of a tradeoff in terms of e.g. skin quality, just as a result of overall sampling quality between, say, Euler Beta and something like DPM++ 3M SDE SGM Uniform (which is what I typically used for BigASP V2). I suspect Clownshark Batwing's flow match compatible SDE samplers from his RES4LYF node pack can likely help with this, though. Gonna try those later today.

I'm also finding so far that overall prompt adherence is really quite a lot noticeably worse than that of BigASP V2, likely due to the frozen text encoders. I think doing another small training pass to tune those up a bit as you've suggested you might would be highly beneficial.

Nice model, how to train LORAS ?

Another thing to note: ComfyUI has a built-in "hack" that makes Ancestral samplers work on all Flow matching models by default (whereas they otherwise wouldn't). In my testing so far Euler Ancestral Beta at ~CFG 5.5 gives much better results with BigASP 2.5 than regular Euler Beta at the same CFG.

DPM++ 2S Ancestral SGM Uniform @ CFG 5.5 or so also works quite well

Also, PAG node set to around 1.5 seems to be a good middle ground for efficacy without causing noticeable oversaturation

Regarding merging, traindiff seems to work very well. I haven't tried add diff but I imagine it would also work. DARE-TIES might work, but I've never used it so I really have no idea yet. Weighted sum unsurprisingly destroys the model.

what vae is being used in this model

The standard SDXL one

I've been using it for a few days and I LOVE IT, the results are very experimental, I find it quite artistic. It's not actually difficult to use, but you gotta know how to write a SDXL prompt using natural language description.

So since I use Draw Things, I imported the model and changed the model objective to flow matching, same as Flux. Are there any encoders that are Flux ones instead of standard SDXL?

No, it's otherwise the same architecture as SDXL, so the text encoders, UNet, and VAE are all the same. Only the objective is flow matching, like SD3/Flux. The other two big things are getting the sampler and schedule right, and Perturbed Attention Guidance. I haven't used Draw Things personally, so I can't give specific advice. Generally, if it lets you use the Euler sampler with the Beta schedule, then you're good to go there. PAG is a nice-to-have that helps the image generation a lot. A quick Google didn't show Draw Things supporting it, though.

nutbutter what is the condition scale set to? In Draw Things, models like Flux and Stable Diffusion 3 are set to condition scale 1000.

Kitten123 were you able to make it work with drawthings ? I really would like to try this model

benshapiro679 not yet

benshapiro679 i got it to work

have to edit custom.json

{
  "prefix" : "",
  "file" : "bigaspv25_20250716_f16.ckpt",
  "text_encoder" : "bigaspv25_20250716_open_clip_vit_bigg14_f16.ckpt",
  "default_scale" : 16,
  "clip_encoder" : "bigaspv25_20250716_clip_vit_l14_f16.ckpt",
  "objective" : {
    "u" : {
      "condition_scale" : 1000
    }
  },
  "modifier" : "none",
  "noise_discretization" : {
    "rf" : {
      "_0" : {
        "condition_scale" : 1000,
        "sigma_max" : 1,
        "sigma_min" : 0
      }
    }
  },
  "version" : "sdxl_base_v0.9",
  "upcast_attention" : false,
  "autoencoder" : "sdxl_vae_v1.0_f16.ckpt",
  "name" : "bigaspv25-20250716"
}

benshapiro679 First import it as a normal SDXL model, then go to custom.json, find the bigaspv25 entry, put in the objective block, and change the noise discretization.

Kitten123 I cant make it work. Maybe i misunderstood some step.

1. I imported the bigaspv25-20250716.safetensors model as a normal SDXL model without changing options in the import dialog box.

2. I created a new local script called custom.js where I pasted your commands after all the commented sentences.

Now should i just click the "play" button right next to custom.js in local scripts section ? If i do that , nothing happens. Maybe I missed something.

benshapiro679 It's not a script. You have to open custom.json and edit the entry with bigasp2.5 in it. The file is in the Models folder in the Draw Things files, which you'll find under Files on your Mac or iPhone, whichever you're using. When you download the Draw Things app, custom.json is already there. It contains the import settings of every model you've used in Draw Things.

Kitten123 It works now. Thanks.

benshapiro679 what setting did you use for this, shift, sampler, cfg.Did you use dmd2 and hyper lora.

benshapiro679 i got it figured out thanks

Kitten123 I was away for couple of days. In any case I used

DMD2 Lora ( https://civitai.com/models/1608870/dmd2-speed-lora-sdxl-pony-illustrious )

Euler A AYS

Following sampler also "worked"

Euler A Trailing

Euler Ancestral

DPM++ SDE AYS

DPM++ 2M AYS

DDIM Trailing

DPM++ SDE Trailing

DPM++ 2M Trailing

DDIM

All others gave garbage images or failed renders

I have no words... This model is something special for the SDXL architecture. It has a VERY good adherence to the prompts for the SDXL architecture. This is the first SDXL based model that passes the wine glasses test prompt perfectly every time. I've tried it about ten times. Usually only Flux, Lumina and other newer models with newer text encoders manage to do it. But you managed to do it on the SDXL architecture! And you didn't even train the text encoder? Bravo! I'm very curious how well this model merges with other models

The prompt "Three glasses of wine on a table. The green glass is on the left, the red glass is in the middle, the blue glass is on the right. Sunny day, outdoors, house, river, forest, trees, anime style. very detailed high quality art, masterpiece quality".

Thank you :) People don't give the CLIP models enough credit. They're over four years old yet still going strong.

Just want to say it is cool that somebody is still trying to improve SDXL instead of just moving on to whatever the latest shiny model is. Everything after SDXL seems too big/slow to run locally on peasant hardware, and too shackled/inflexible to generate the types of content that it can.

DMD2 Lora works fully with this model reducing the number of steps to 8. A note:

1. ModelShift has to be = 1 for the DMD2 LoRA, at least on Draw Things on a MacBook

2. Sampler has to be LCM

For how to make the model work on drawthings look at @Kitten123 comment

i got it to work finally. The import i got it figured out but the setting to run it you got it figured out thanks.

lcm with what ?

shift 1 or high ?

perturbed 2 or nothing ?

amazingbeauty I am using Draw Things, so no perturbed attention. I use LCM with no shift, but with CFG. Somehow in Draw Things, CFG > 1 with DMD2/LCM works and doesn't fry the image like in Comfy. I play with CFG from 1 to 2, and then some HiresFix.

I just figured out how to merge this model with other SDXL models in the Draw Things app. I used sdxxl v3.0 and the base SDXL model to merge with it, doing an add difference merge: (1.0) bigasp2.5 + (0.6)(sdxxl - base SDXL). But you have to change the objective and noise discretization of the result to match bigaspv25.

This thing is addictive AF with the correct prompting and settings. Sure, not all of the gens are good, but those that are good are by far the best of any SDXL checkpoint I've ever tried, and the speed over Chroma makes it worthwhile to fish for that "H01y Sh1t" generation. It's not as consistent as Chroma (I know it's flux-based), but the gens that are good are seemingly better than Chroma... I feel like there's something I'm missing in the way of schedulers or other tomfoolery that would make this thing pure magic.

@nutbutter Do you have any tips on how to avoid the mangled faces that sometimes appear when the subject is beyond a certain distance from the "camera?" Also, did any of the experiments for 2.5 yield any good results? I saw there were some pickletensor files under an experimental branch and was wondering. Any chance of an n-gram list like the other asps?

Anyway, this thing's knowledge and flexibility are impressive. Absolutely stunning work! What a way to render all other SDXL photoreals irrelevant. I'm sure if you choose to work on Chroma next, yours will again be THE model for trainers/mixers to use.

hiresfix ( img2img denoise by around 0.3) by blowing up the original image to large size and using tiled diffusion and tiled vae , clears out the distant faces

regarding "correct prompting" chatgpt induced prompts really helps. try it . give your phrases , high level ideas about mood , lighting etc. ask it to construct a prompt. If it gives a too verbose flux like prompt tell it the model uses clip and tone it down. After 1 or 2 iterations of coaxing it it understands how much detail it has to put , and then the resulting prompts get amazing for use.

I agree with benshapiro679's suggestions. Though I'm still having trouble with distant faces, personally.

I'll see if I can drop the n-grams at some point.

nutbutter Sweet, thanks!

nutbutter this is amazing thanks ! I'm thinking the faces and eyes issues could be linked to the parameter used by the beta schedule as playing with those is helping a bit, but i'm far from offering a solution unfortunately.

is there a tag lists for this model.

No tag list, but here's the n-gram list: https://gitlab.com/-/snippets/4879090

After a bit more experimentation, I just wanted to note that this thing can really do A LOT more by default than ASP 2.0. Really fun model. I still feel that it could probably use a wee touch of unfrozen clip training (as it sort of just does not pick up on certain things especially in the negative prompt, sometimes), but overall yeah, really fun.

Thanks :) I agree with you; I'm planning a small training run on top of 2.5 with the text encoders partially unfrozen as well as a few other tweaks to make a v2.6.

OMG I just stumbled over this by chance after a long time of playing only with WAN and such. Bigaspv2 was/is my fav SDXL model, and since it was not one of the most liked in the list of models posted here (not as easy to get results as with e.g. Lustify, I guess), and not much seemed to be happening there anymore, I did not expect anything new AT ALL. Turns out you were just gone that long because you have MASSIVE updates coming. Also thanks for writing out your thoughts and explaining a bit. My limited English does not allow me to tell you how excited I am for this. Especially 3.0. But I got a few free days later this month and I cannot wait to play around with 2.5.

Thank you so much. You have no idea how much time I've spent with v2. Most likely not as much as you, but oh well. ^_^ Thanks again.

So this is a base model?

It's so far removed from SDXL that it might as well be a new base model.

I have no idea how this would be done or how much work it would be, so I'll just ask: will there be a version that works in Forge UI, or are there no such plans?

What's the point of using that GUI?

2P2 It's just what I'm used to working with. I understand Comfy is superior, but I am new to it and the Comfy noodles confuse me a lot. So if a Forge version were an easy thing to do, why not? I am sure there are more people like me. If it is a lot of work to make it compatible, I understand it won't be done, though.

I'm going to try to get it working in forge and such, yeah.

nutbutter Vladmandic already implemented it in SD.Next FYI:

https://github.com/vladmandic/sdnext/wiki/CHANGELOG

People who still want to use an A1111 variant should probably consider just using that since it's actually maintained actively.

ZootAllures9111 Yeah poked around a bit on A1111 and Forge and neither seems to be maintained lately? (Forge seems to have some, but the important model detection repo isn't...)

Unfortunately, those UIs get slow to no updates. Best to invest the time in learning ComfyUI; it's not as bad as it looks with "comfy manager."

The 10k-dollar model that didn't fix anatomy errors.

Dislikers: try prompting a woman from the side or back.

There are also other anatomy errors that SDXL is riddled with, like a virus. I'll keep them to myself until I'm able to fix them; they're not worth describing while 99% of people can't even notice them, but it's all a mess (the whole of SDXL except itercomp).

Why do all the fucking standing full-body renders look like they're wearing invisible heels (real people aren't fucking ballerinas)? SDXL is good at rendering standing aliens, not humans.

Great!!

Please oh please tag and n-gram list?

nutbutter Thanks a million!

Would it be possible to train a lora for this in OneTrainer with the right settings? I gave it a shot just to see, but all I got was messed up noise pretty much.

There's at least one fork of OneTrainer that supposedly implements support for bigASP 2.5 (https://github.com/Koratahiu/OneTrainer/tree/SDXL-Flowmatching-train-(bigASP-V2.5)). I can't vouch for it because I haven't used it, nor do I know where it came from. I don't see anything dangerous in the diff GitHub shows, but caveat emptor.

I have my own WIP lora training script (https://github.com/fpgaminer/bigasp-training/tree/main/v25lora-training-scripts), but it's very much WIP.

@wowlad Any tips for prompting asp 2.5 or info on other good models? Saw the username and can't resist the ask.

The aspect ratio and HxW of the images generated has an ENORMOUS impact on the realism of the output. Only use 1024x1024 if you want semireal or anime. Use a more standard photo resolution for max realism (see the description).

Thank you for mentioning that. I'm not too surprised. I should also mention that SDXL has a hidden feature I think most people don't use. During training the model is told the original resolution of the image (before cropping and resizing) as part of its conditioning. By default generator UIs like Comfy set that conditioning to be equal to the resolution you are generating. But in ComfyUI you can use SDXL specific nodes like CLIPTextEncodeSDXL to control that. I've experimented with setting that conditioning to 4x the resolution I'm generating at and it seems to provide a small boost in quality. (I only tweak the positive conditioning; leaving negative at default seems to help push the model further towards the desired result).
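A rough sketch of the size-conditioning trick described above, for anyone wiring it up by hand. The field names mirror the inputs on ComfyUI's CLIPTextEncodeSDXL node as I understand them (width/height as the "original size", target_width/target_height as the generation size); the helper function itself is hypothetical and only computes the numbers to type in.

```python
def sdxl_size_conditioning(gen_width, gen_height, scale=4):
    """Return values for a CLIPTextEncodeSDXL-style node (hypothetical helper).

    width/height   -> "original image size" conditioning, scaled up
    target_*       -> the resolution actually being generated
    crop_w/crop_h  -> left at 0 (no crop offset)
    """
    return {
        "width": gen_width * scale,
        "height": gen_height * scale,
        "crop_w": 0,
        "crop_h": 0,
        "target_width": gen_width,
        "target_height": gen_height,
    }


# Positive prompt: claim the source image was 4x larger than the output.
positive = sdxl_size_conditioning(832, 1216, scale=4)
# Negative prompt: leave at the default (same as the generation size).
negative = sdxl_size_conditioning(832, 1216, scale=1)
print(positive)
print(negative)
```

For an 832x1216 generation this gives 3328x4864 on the positive side, with the negative left at 832x1216.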

FYI: I just ran the numbers on the dataset and 832x1216 and 1216x832 are the most common buckets on the photo half, by far (~2.5M photos each at those aspect ratios).
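If you are starting from an arbitrary image size, one way to pick a resolution is to snap to the supported bucket with the closest aspect ratio. This is only a sketch: the list below is the top part of the resolution list from the description, and the function name and the log-ratio distance are my own choices, not anything from the training code.

```python
import math

# First entries of the supported-resolution list in the description,
# in the same (roughly best to worst) order.
SUPPORTED = [
    (832, 1216), (1216, 832), (832, 1152), (1152, 896), (896, 1152),
    (1344, 768), (768, 1344), (1024, 1024), (1152, 832), (1280, 768),
    (768, 1280),
]


def closest_bucket(width, height, buckets=SUPPORTED):
    """Pick the bucket whose aspect ratio is nearest (in log space).

    Ties go to the earlier, better-supported entry because min() keeps
    the first minimum it sees.
    """
    target = math.log(width / height)
    return min(buckets, key=lambda wh: abs(math.log(wh[0] / wh[1]) - target))


print(closest_bucket(1920, 1080))
print(closest_bucket(2000, 3000))
```

A 16:9 source lands on 1344x768 and a 2:3 portrait lands on 832x1216, which is one of the two most common photo buckets mentioned above.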

You are right, man. I changed it to 832x1216 and it makes a huge impact.

nutbutter Thanks! I will try this weekend. Any other tips/tricks? Were you still planning on maybe a 2.6? I saw your github was updated with some 2.6 quality training stuff and was excited.

Yeah I'm prepping for a 2.6 to hopefully sand off some of the rough edges.

@nutbutter (Rubs hands) Hope it's going well with 2.6 training! Can't wait to see what kind of goodies it can make.

This model is definitely a step forward for photorealistic SDXL and a ton of fun!

People waiting for the next Pony, and we get a huge wild horse! Groundbreaking. Although I'm not sure how this would be used without tools like RES4LYF.

@titanin Any tips on settings with res4lyf?

Here's a very simple workflow with a few settings that have worked for me in the couple of days I've been trying it. I really don't know what I'm doing (especially with this model), so take it with a grain of salt.

https://drive.google.com/file/d/1vT-pmb4segf4RQNYhoZRSI1w9ACOws4u/view?usp=drive_link

For 20-30 step generations. Schedulers: linear_quadratic, bong_tangent, normal, Ays30+ and Beta. The latter three gave me better results, and Beta is the most tweakable. Linear_quadratic could be the best for composition but the worst for details; you can see why with the sigmasPreview graph turned on. The idea is to keep the generation in the composition stage, in the low frequencies, for the most part, and then ramp it up for enough steps in the high frequencies for details at the end. Setting the model shift to 6 helps bump that graph into a better shape most of the time. As for samplers, as you know, there are a lot, but again, for quick 20-30 step runs, safe bets are the multistep res2m, res3m or res2s, and obviously euler for anime stuff. The noise types, sigma scaling and detail boost options are also worth playing with, since they add so much in composition or detail.
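To get a feel for what the shift does to that graph without opening ComfyUI, here is a small sketch. It uses the time-shift formula commonly used for SD3-style flow models, which I'm assuming is what ModelSamplingSD3 applies; the evenly spaced base schedule is just for illustration and is not what any particular scheduler produces.

```python
def shift_sigma(sigma, shift):
    """Bend a sigma in [0, 1]; shift=1.0 leaves it unchanged."""
    return shift * sigma / (1.0 + (shift - 1.0) * sigma)


def shifted_schedule(steps, shift):
    """Evenly spaced sigmas from 1.0 down to 0.0, then shifted.

    The linear base schedule is an assumption for illustration only.
    """
    base = [1.0 - i / steps for i in range(steps + 1)]
    return [shift_sigma(s, shift) for s in base]


for shift in (0.5, 1.0, 6.0):
    sigmas = shifted_schedule(8, shift)
    print(shift, [round(s, 3) for s in sigmas])
```

With shift above 1 the sigmas stay high for more of the steps (more time on composition); with shift below 1 they drop quickly (more time on details). The endpoints stay at 1.0 and 0.0 either way.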

titanin God damn, beta thing has so many parameters to tweak in Res4lyf. I see you are changing scheduler in this workflow, is this the best workflow you could find for this model ?

Aethnos that's a basic, stripped-down version of the workflow I've been building up with this model. The reason I have those schedulers apart from the sampler is that I like to plug them into the graph to get a better view of how it's going to denoise, and maybe start a little detail refining at the best-suited steps. But since this is trial and error for the most part, you can get by with the settings on the clownshark ksampler and get rid of the scheduler fat to simplify the workflow. You can use the beta 57 out of the box (which is also the suggestion of the developer of Res4lyf). That's alpha:0.50 and beta:0.70 in the beta scheduler. I've been consistently getting the best results with those settings, but you can also play around with 0.60/0.60 (the default), 0.55/0.65, etc. If you have a very complex or dynamic composition, more characters, and so on, and details don't matter much, probably nothing will beat linear_quadratic. But most of the time, you can feel safe with beta 57 out of the box with this model.

titanin thanks !

I did not know the difference between beta and beta57 in Comfy, so that's useful to know :)

@titanin Did you ever find best settings or workflow for bigasp 2.5?

Sorry for the late response. I wouldn't say I found better settings for this model; it's more like learning ways to communicate with it, dedicating a little more effort to the prompt. For me, the best thing about this model is that with SDXL quickness and power needs, it can take anything and will throw back something arguably undoable for an SDXL model with just a prompt. From that point, you can play around with all the possibilities the model can handle. The only thing I could add, based exclusively on my experience, is that with 40+ (multi)steps the model will adhere much better to a more complex prompt.

Amazing model! I hope it gets noticed despite the popularity of Chroma and others. You're really proposing original solutions to keep using SDXL, and I'm having tons of fun. I'm having difficulty choosing between beta with shift at 1 and normal with shift 5-6. Any advice?

Doesn't it work with ForgeUI?

It only works in ComfyUI because it needs the "ModelSamplingSD3" node.

You would need to modify ForgeUI so that it understands this is a flow model, not an iterative model. Probably not hard to do with today's AI tools.

Personally I think BigAsp v2 doesn't really need anything but more training. Specialty models never really take off, as their use becomes limited, and so they stay obscure. I don't even think BigAsp v2 is great on its own, but with DMD2 it shines, although building in DMD2 might not be great because you then lose the ability to adjust the DMD2 strength, which can help sometimes. But building in DMD2 can also benefit in other ways, like being able to use higher steps without overbaking an image. I would say BigAsp v2 might not even necessarily need a new training set, but rather, to probably prune the training set to get rid of the stuff that might cause poor results. Of course that can be hard when we're talking millions of images, so I would say criteria could be developed, like removing images of a certain file size/quality to get the weakest data out, along with some other criteria.

I'm surprised more people aren't using this thing. It's fantastic.

Pretty awesome. Nutbutter mentioned using CLIPTextEncodeSDXL to set the hxw to multiples of the image size, and it seems to help quite a bit. ddpm3whatever (I think) and sgmuniform seem to do well with sd3 sampler at 1, cfg of 3, and 0 for PAG. Adding "junk" or even the alphabet in the negative prompt can improve outputs (but can harm some outputs), but I haven't yet found the magic bullet for all uses. Setting 832x1216 or 1216x832 (or other nonsquare resolutions) is huge for realism. 1024x1024 kills realism.

I sure wish I knew how to unlock the full potential of the model. I saw nutbutter made some text encoder training trial runs, but never stripped the pickles off of them or posted them, and had mentioned working on a 2.6 version. I'm kind of wondering how all that is going.

Either way, this is my goto with chroma for my uses. I just wish I could figure out what kind of magic I need to get it to be the model I know it can be (so close sometimes, and once in a while it's perfect).

Maybe one day we can access the full magic of the asp as it was intended to be.

Maybe someone who knows how can pull the pickles off a few versions of the text encoder training runs to try out while we hope for a 2.6?

this shit is fucking incredible. keep cooking.

Anyone know how to remove pickles so we can try the text encoder trained versions on hugging face?

BigAsp is the GOAT of NSFW models. No other model has this much flexibility.

I find sometimes when generating images with a completely blank prompt that it will spit out 100% perfect photoreal images like no other model, but it's hard to prompt and get that same quality and extreme detail.

Also, depending on what you are trying to prompt for, you may get a case of animeface/sameface/ponyface which only shows up with certain concepts (putting anime and cartoon in the negative prompt destroys the model's ability to make said concepts at all). The asp models otherwise have the best face flexibility of all the models I've found, it's just with certain things that it has issues (limited dataset maybe or I just don't have the right tokens).

Very flexible model, the best so far. Any tips on how to maximize quality/flexibility is greatly appreciated. Any special syntax or settings. I feel like all I'm missing is some kind of "score_7_up" and the model would transform into a life-altering time hog. (actually already is)

Certain concepts seem to be subject to large amounts of jpeg artifacts and people appear moderately pixelated in them (I loled when I got a chick with an entire arm that was pixelated against the wall). This is when using 832x1216 for all settings including the clip settings. Any tips to get around this would be awesome. Very happy to have what we have been given, but I beg for more...

I check in daily at least once to see if nutbutter has a new model out. I hope everything is going well and whatever you are working on is even more flexible and delicious than those you released before.

Imagine when this level of finetuning is applied to a 20 billion parameter model like Qwen in the future...

I'm super excited for whatever we might get. If wielded properly, Asp2.5 is genuinely incredible and brilliant. I'm sure whatever gets released will be amazing. I'm curious if nutbutter is still planning a 2.6 or if they are moving on the other models.

I think he said Qwen is too big for him. Was choosing between Chroma or Wan 2.2 5B, IIRC. Either would probably work well, the SDXL text encoder is really the only thing that holds back BigASP currently.

@ZootAllures9111 Wan 2.2 5B could be amazing. On paper, it has the specs to become a true SDXL successor.

The model is better than you give it credit for. Training on varied data instead of only on adult is def the way to go. Looking forward to your future work!

@nutbutter Any way to grab some pre-release versions of 2.5 or 2.6? :P UWU

Unfortunately, with certain prompts (especially in the negative), I keep getting images overwhelmingly dominated by a single color, and if I dial the prompt power to 2, it will almost always spit out a solid, featureless shade of pink, red, or orange with those prompts (except maybe with a frame around it here or there). I would be immensely grateful for any advice on how to bypass this issue and produce as intended!

When will bigASP 2.6 be released?

@Kitten123 Whenever (or if) we get it, I just hope we get it as-produced, and not intentionally hamstrung. Some authors will damage their photoreal-capable models intentionally, especially ones that are trained on booru data. Unfortunately, it leads to all kinds of unusual and unexpected issues with even remotely adjacent concepts and often degrades the model's output in bizarre and unpredictable ways.

Almost there. 2.5 had a slight drop in fine detail quality compared to 2.0. I want to tighten that back up for 2.6 so I know that everything is ready for 3.0. And to do that I needed a better scoring model (the model that tags images as "high quality", "low quality", etc). The old one only worked at 224x224, so it couldn't reliably tell a 4k RAW from a thumbnail 🤣 The new one is getting a lot of love and a huge resolution bump, so I think that will help 2.6 (and thus 3.0) do better at fine details. Everything else is ready for 2.6's training run, so hopefully soon?

@nutbutter comfy only again?

@raymirjamani227 i hope not 😑

@nutbutter What model were you thinking of basing 3.0 off of? As an aside, for some reason no matter what negatives I enter into 2.5, I always randomly get the best 20 out of 10 "there's no way anyone would know that's not real" gens with no negatives at all. Unfortunately, I get tons of heavily pixelated images that appear to be composed of 6x6 grids of pixels (can't figure out how to harvest the good gens without the pixelated ones). I think your source content is awesome and you are doing amazing work. Hoping maybe we can get the checkpoint 2.5 was intended to be with 2.6 :D

@nutbutter really glad to hear that !

@null Please do not ------ the model. Give it to us raw and wriggling.

@raymirjamani227 It's not really his problem that other UIs don't have the ability to support flow-matching SDXL models. Well, other than SD.Next. Tell the maintainers of other A1111-based UIs to be more like Vladmandic in terms of model support lol.

@nutbutter Please info on 2.6? Hopefully it will use the same datasets (or more)?

Impressive model! But how can we train it properly?

Could you please release the fine-tuning code with some recommended tips and settings?

Okay, self-answered: I made a fork of sd_scripts that uses flow matching in the vector field for SDXL. The training works correctly.

@deGENERATIVE_SQUAD It sounds like you don't need them anymore, but the official training scripts are available here:

https://github.com/fpgaminer/bigasp-training

@deGENERATIVE_SQUAD Where might one find an eventual finetune of asp2.5? Would it end up on civit?

@deGENERATIVE_SQUAD Yea, so LoRA is no problem at all.

@liftweights I’ve seen that LoRA training script, but it’s somewhat limited in functionality and doesn’t fit my needs. I use the PISSA SVD method to simulate full-model training in the form of a LoRA - essentially, I extract the weights into the desired dimension and continue training those weights with LyCORIS, specifying a null diff model as the pretrained one.

@null

>Where might one find an eventual finetune of asp2.5?

As far as I know, version 2.5 is the latest one at the moment.

>Would it end up on civit?

Don't know.

@deGENERATIVE_SQUAD Very interested in any offshoots of 2.5 or 2.6 that might become available...

@null My model Snakebite 2.2 is finetuned on bigASP 2.5: Snakebite 2 - v2.2 Turbo | Stable Diffusion XL Checkpoint | Civitai

@deGENERATIVE_SQUAD I hadn't heard of PISSA SVD - sounds intriguing. I'll have to read more about it soon. Have you made your Flow Matching fork of sd_scripts available? It would make for a good pull request.

@liftweights

PISSA SVD is indeed a very efficient method for fine-tuning a model into virtually any direction.

The core approach is described here: https://github.com/GraphPKU/PiSSA, but the actual SDXL preparation process is still quite rough due to the lack of proper SDXL-compatible tools - it requires several manual steps to extract and prepare the weights.

I could write a guide on how to adapt any SDXL model for PISSA-style training if you’re interested.

My fork is specifically designed for LoRA training using LyCORIS (especially PISSA-based ones) and is available here: https://github.com/rozmary1/sd-scripts

It basically uses three arguments with sdxl_train_network.py:

--flow_matching # enables flow matching

--flow_matching_objective="vector_field" # selects the objective type

--flow_matching_shift=2.5 # sets the flow shift

I’ve also added two fixes that aren’t included in this fork:

Safe averaging regardless of tensor shape (for FFT loss using torch-frft) and a corrected loss formula to make it work properly with flow. If you’re not using FFT, you don’t need it.

IMAGE_TRANSFORMS fix, since the original fork (https://github.com/67372a/sd-scripts) - which includes a lot of new features and working EDM2 - had a transform implementation incompatible with my environment.

There’s also a fork from the author of this NoobAI flow model, but it’s mostly aimed at re-training a full epsilon checkpoint: https://github.com/bluvoll/sd-scripts
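For readers wondering what a "vector_field" objective means in practice, here is a minimal sketch of the usual rectified-flow training target. This is an assumption about what the flag selects, not a copy of the fork's code: it uses the convention that t=0 is the clean latent and t=1 is pure noise, and sign conventions differ between implementations. Plain Python lists stand in for tensors.

```python
def flow_matching_pair(x0, noise, t):
    """Build the noisy input and the velocity target for one sample.

    x_t    = (1 - t) * x0 + t * noise   (straight line from data to noise)
    target = noise - x0                 (constant velocity along that line)
    Assumed convention: t=0 is clean data, t=1 is pure noise.
    """
    x_t = [(1.0 - t) * a + t * n for a, n in zip(x0, noise)]
    target = [n - a for a, n in zip(x0, noise)]
    return x_t, target


def mse(pred, target):
    """Mean squared error between the model output and the target."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(target)


x_t, target = flow_matching_pair([1.0, 2.0], [0.0, 4.0], t=0.25)
print(x_t, target)
# A model that predicted all zeros would get this loss:
print(mse([0.0, 0.0], target))
```

In a real trainer the model sees x_t and t and is trained to output the target; the flow shift then changes how t is sampled, spending more of the training on high-noise timesteps when it is above 1.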

@deGENERATIVE_SQUAD Excellent, thank you for sharing your fork! I would be interested in hearing more about your PiSSA preparation process. I'm sure others would too. 🙂 After looking at the research, I figured it might be possible to extract a LoRA from a checkpoint to supply to kohya via --network_weights... I tried doing this with the Peft library, but have been running into issues related to incompatible keys.

There's also a draft pull request for PiSSA here, but it only supports Flux at the time of writing: https://github.com/kohya-ss/sd-scripts/pull/2001

@deGENERATIVE_SQUAD PISSA sounds very interesting. I think many people would be really happy if you took the time to explain the preparation! 🙂

@deGENERATIVE_SQUAD Have you found any good negative prompt groups for asp2.5 that are good for photorealism?

@liftweights @Ivanivan47172

Okay guys, I did it [⚡WIP DRAFT⚡] [PISSA] [SVD] Fast full finetune simulation at home on any GPU | Civitai

@null I don’t like complex walls of negative prompts, I usually rely on various guidance methods or DMD instead.

@deGENERATIVE_SQUAD AMAZING, thank you a lot!

@deGENERATIVE_SQUAD Is there any way you could release the fix for 2.5?

@null As I answered in the guide:

The Bigasp example is more of a proof of concept - a demonstration that you can quickly fine-tune a model’s generative capabilities to the desired state without much hassle. The goal wasn’t to “fix” the model itself.

The guide is generally about fast and efficient training of any SDXL model for personal needs.

So there isn’t really a final model I could share - the current one is in a disassembled state, along with a bunch of different epochs from various test runs.

At the moment, I’m testing a fast conversion method for turning any EPS checkpoint into a FLOW one, which is already showing good results with minimal time investment.

@deGENERATIVE_SQUAD Darn. I was hoping there was a fix for the blurriness present in some concepts. I figured it was due to intentionally inflicted damage to make the model more suitable for release, and was hoping someone had found a way to repair it, but it was way beyond my skill unfortunately. Any way you could make the useless components available so they can be tinkered with?

What's going on with the hf page? Looks like the diffusers file for 2.5 was updated and asp1 has some (I guess previously private) files showing with a bunch of samples deleted or something. Is something up?

Can we have the original 2.5 as it was intended since you seem to be opening up all of your model stash?

HF has limited private storage, so I just flipped my old repos to public. Mostly just old training junk.

@nutbutter I see. Worried you were hacked or something. Did 2.6 take to training well, or did it cause issues like 2.5?

Recent discovery of mine: the stock "CFGNorm" node at the default setting of 1.0 placed right before ModelSamplingSD3 helps WAY more with preventing burn-in / reddening for bigASP 2.5 than any equivalent node seems to. That and then PAG at 2.0 - 3.0 after ModelSamplingSD3 plus DPM++ 2S Ancestral / Bong Tangent with 5.5 - 6.5 CFG give great results.

@nutbutter Have you seen this? It looks like an incredible candidate for BA 3.0. They're claiming SOTA photorealism with 6B parameters, text encoder is only 4B, and it converges in 8 steps...

Weights dropping any second now

I'll keep an eye on it, thank you.

@nutbutter Any way we could get some in-dev versions of 2.6 to play with? @liftweights Does z-image seem to have a problem with being overly predictable and producing little variation on a prompt, or is that just an issue with settings?

Looks like 2.6 is almost done from the uploads to HF?

I guess not.

Does anyone have a functioning IPadapter workflow that works with this model? I need to use an image as a negative, but only get static when using SDXL stuff.

Amazing model. Just got to test it for the first time, and I'm honestly baffled why this isn't much more popular.

Very sensitive to settings. What settings do you find best?

@darkmodi338 Did you find any good settings or does it need more training?

@rootingforba26 It’s rough, to say the least. You need to prompt for a lot of details of what you want, using the tags and sentences from joycaption. Euler at 30-40 steps is good at around 4-4.5 CFG using either the normal or beta scheduler. Also, using the res_2s sampler at shift=3 can help prompt adherence, but might overcook the image more easily with CFG. I usually avoid PAG because it gives too much guidance for my taste (loss of small imperfections, everything becomes too clearly defined, giving an unnatural look). Generally, a good positive + negative, both detailed and verbose, are really useful here and more likely to help gens.

@Aethnos It can indeed be rough, depending on what you are trying to prompt for. Have you tried any of the training checkpoints on the fancyfeast Hugging Face page? Are those any good? I haven't been able to try them since they aren't in safetensors format.

@rootingforba26 Nope, I’m waiting for 2.6 like everyone else :-). I took a break from AI for a while but still look forward to 2.6

@Aethnos Did you find any good negative prompt combos that clean up the output?

@rootingforba26 Depending on what I want, I twist it a lot. When I go for nonprofessional photorealism, it usually looks like this:

cgi, 3d render, unreal engine, octane render, illustration, anime, cartoon, ai-generated look, uncanny, watermark, logo, text, magazine editorial, fashion shoot, advertisement, model face, perfect symmetry, beauty retouch, skin smoothing, airbrushed, flawless skin, waxy skin, plastic skin, poreless skin, glossy skin, HDR, overprocessed, oversharpened, crispy skin texture, studio lighting, dramatic chiaroscuro, perfect framing, centered composition, stiff mannequin pose, intense eye contact, staring into the camera, fake bokeh, seamless backdrop, pure black background, deformed hands, extra fingers, bad anatomy, standard definition, low definition, 480p, 720p, blurry, undetailed

The lack of any text encoder training makes it sort of harder to use than BigASP 2.0 was

@nutbutter Very eagerly anticipating next model for months. At this point I'm pretty sure you could charge to download a full 2.6 and some people would be ok with it.

How to convert the training checkpoints to safetensors for use?

I sincerely hope it will still be released to the open source community for free, without paywalls but with a support option, but I’d understand a decision like that as well.

@Aethnos I might have spooked nutbutter with my incessant poking around and question asking. Hopefully not. I just have nothing better to do during my free time than burn out my GPU on the asps. Just an addict trying to get my fix, and asp is my drug of choice.

nah let him cook

@ZootAllures9111 I struggle to contain my anticipation. Hopefully the next release is not hindered in any way.

Just, holy shit. Amazing.

@olddillpickle Any usage or training tips for this model?

@rootingforba26 I'm using this with it and going through different models to try for different results. Pretty damn baller. ASP is my new hero.

https://civitai.com/models/1513601?modelVersionId=2030454

@MasterShakeZulaTheMicRula Sweet, I will check it out. There is a workflow for littleasp, but I haven't seen such a model. Is there anywhere to get it?

@MasterShakeZulaTheMicRula Is there no way to clean up the output natively (only ba2.5)? I love the flexibility, but it seems to refuse to want to do certain concepts without jumping through hoops.

One of the best SDXL models ever IMO. I love it. I can't wait for whatever you're cooking up next.

@ObsidianDreams Any preferred workflow/settings/prompting/negatives? I'm always seeking better quality way to produce unique content. Sometimes a simple negative can make or break things.

@ObsidianDreams Please any help or model recommendations?

What's the progress on bigASP 2.6?

Sooo I did the training on it; that finished quite a while ago. But it turned out quite bad. I've been tinkering with post-training approaches instead to see if I can turn it into something worthwhile. Also been on vacation for a bit. The raw training checkpoints are up on huggingface, but I don't think they're worth playing with.

@nutbutter okay, maybe if the bigasp 2.5 was more focused on photo images like bigasp 2.0 maybe it would have worked out better.

@nutbutter Any way you can drop a safetensors of the best of the run from before the post-training? I'd like to play with it regardless of quality. I see it as a challenge to get good results out of a tricky checkpoint. Everyone needs a hobby.

@Kitten123 Bruh. Thank goodness for the diverse anime and manga content is all I'm going to say, I think it gave it vastly greater range and the right negatives and settings can really clear up the weirdness/smearing/etc.

@Kitten123 Maybe. I do think the anime side helps a lot with broadening concepts, but yeah it might be overloading SDXL's capacity. I figure one fix is to do a LORA on top for photos specifically, which might help while preserving the concept broadening? It's on my list of post training experiments to try.

@nutbutter I think another fix is to convert 2.6 to a diffusion model first, then merge it with 2.0, and convert the result to a flow-matching model if the situation requires it.

Also, is it possible to subtract sdxl from 2.0 to extract a lora for the purpose of merging?

@foobar545 If you manage to fix 2.5 or 2.6, can you please post the fixed one to civit or hf for the less experienced at training? I'd try it myself but have had no luck with kohya, and installing it is giving nothing but errors.

@foobar545 Is there any way to fix bigasp 2.5? It seems to have a text encoder or clip issue maybe?

should we use discrete flow shift when training loras for this?

@ichp9 Someone made a guide: [⚡WIP DRAFT⚡] [PISSA] [SVD] Fast full finetune simulation at home on any GPU (part 1) | Civitai Also, do you know how to clear up the issues with this model? I seem to be stuck with obnoxious blurring and artifact shapes.

@rootingforba26 Thanks, this is a really interesting method! As for the artifacts, I believe you just have to make sure to set the ModelSamplingSD3 shift >= 1 with this model.

@rootingforba26 Nevermind I see what you meant. It really needs a good negative prompt sometimes, but it's hard to get rid of all the errors

@ichp9 Yeah, it's hypersensitive to negatives and settings. Feels like it's using a wrong component somewhere, I just don't know which part or how to fix it.

@ichp9 I'm getting better results at 0.5 shift, actually.

@rootingforba26 thanks, I was using 1.0 but this is working even better. I'll have to test more with it. This negative prompt seems to work the best: "(worst quality:1.2), (low quality:1.2), lowres, bad anatomy, bad hands" along with dpm++2m sde / bong_tangent

@mr_zoo09 I've been using shift 0.5, cfg 3.4, 40 steps, Euler (also works very well -maybe better- with dpm2/3etc), Simple schedule. Seems to offer good results some of the time, but still working through really weird issues.

Are you all using the clip that comes as part of the model, or a different clip/text encoder etc?

2.7 in training? Or maybe 3.0?

Good news! I'm told 3.0 is in training using Klein.

In the meantime, anyone got tips on schedule/sampler/etc for 2.5?