Donations: Training LoRAs for heavy new models like Qwen-Image and WAN2.2 is very expensive for me, and I don't earn any money from these models, so any donation is appreciated to keep my models free and to fund training new ones: https://ko-fi.com/aicharacters

Trigger (must be included in the prompt, otherwise the LoRA's likeness will be very weak):

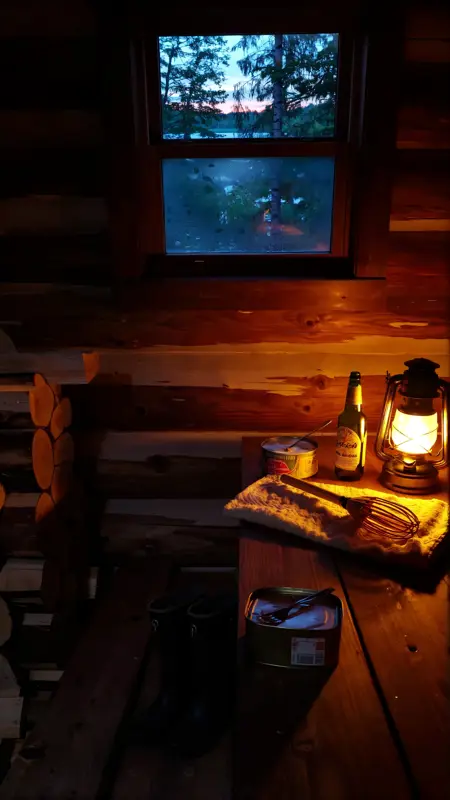

amateur photo
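Because the trigger phrase has to appear in every prompt, it can help to enforce it in a script that builds prompts. The sketch below is a minimal helper under one assumption: prepending the phrase to the start of the prompt is acceptable. The page only says it must be included, not where. The function name `with_trigger` is hypothetical.

```python
TRIGGER = "amateur photo"  # trigger phrase listed on this model page

def with_trigger(prompt: str, trigger: str = TRIGGER) -> str:
    """Return the prompt with the trigger phrase prepended if it is missing.

    Hypothetical helper: placing the trigger at the start is an assumption.
    """
    # Case-insensitive check so "Amateur photo of ..." also counts as present.
    if trigger.lower() in prompt.lower():
        return prompt
    stripped = prompt.strip()
    # An empty prompt becomes just the trigger phrase.
    return f"{trigger}, {stripped}" if stripped else trigger
```

For example, `with_trigger("a woman at a bus stop")` returns `"amateur photo, a woman at a bus stop"`, while a prompt that already contains the phrase is returned unchanged.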

Important notice: This model was designed for text-to-image generation with WAN2.2. I do not know how well it works for video generation. It will probably work okay with WAN2.1 (use the low-noise version in that case), but it works better with WAN2.2.

Please note that because this model was designed for WAN2.2, it comes in both a high-noise and a low-noise version. I recommend using both together!
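Using both versions together means running two sampling passes over the same latent: the high-noise LoRA (loaded on the high-noise WAN2.2 model) handles the early, noisiest steps, and the low-noise LoRA (loaded on the low-noise model) finishes the remaining steps without adding fresh noise. The sketch below only plans that step split. The `SamplerPass` structure, the `split_passes` function, and the 50/50 default split are hypothetical illustrations, not values taken from the recommended workflow.

```python
from dataclasses import dataclass

@dataclass
class SamplerPass:
    """One pass of a hypothetical two-stage WAN2.2 sampling plan."""
    lora: str         # which LoRA version this pass uses ("high-noise" or "low-noise")
    start_step: int   # first step this pass denoises (inclusive)
    end_step: int     # step at which this pass hands off (exclusive)
    add_noise: bool   # only the first pass starts from fresh noise

def split_passes(total_steps: int, high_fraction: float = 0.5) -> list[SamplerPass]:
    """Give the early steps to the high-noise LoRA and the rest to the low-noise LoRA.

    high_fraction is an assumed default, not the author's setting.
    """
    if total_steps < 2:
        raise ValueError("need at least 2 steps to split between two passes")
    if not 0.0 < high_fraction < 1.0:
        raise ValueError("high_fraction must be strictly between 0 and 1")
    # Clamp the switch point so each pass gets at least one step.
    switch = min(max(1, round(total_steps * high_fraction)), total_steps - 1)
    return [
        # First pass: starts from noise, stops partway with leftover noise.
        SamplerPass("high-noise", 0, switch, add_noise=True),
        # Second pass: continues the same latent to the final step.
        SamplerPass("low-noise", switch, total_steps, add_noise=False),
    ]
```

With 20 total steps and the default split, the high-noise pass covers steps 0 to 10 and the low-noise pass covers steps 10 to 20.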

Please also note that the online generator will NOT give results as good as running the model locally in ComfyUI with my recommended inference workflow:

Latest version notes and model page updates:

- Completely retrained; this should now be the best of all my WAN2.1 and WAN2.2 models.
- Completely reworked the recommended inference workflow.

LoRA description:

This LoRA was designed to generate images that look like casual snapshot photos taken with a smartphone.

Disclaimer:

I do not claim to be the current owner or original creator of, or a person (or the legal representative of a person) depicted in, any of the concepts that my models, including this one, aim to emulate, nor of the training data used to train this model. I do not claim that any of my models have been endorsed by those respective individuals. I also do not claim that the emulation attempted by my models is a 100% accurate depiction of the original concept in question, nor that the quality of my emulation matches the quality of the original concept. All credit goes to the respective current owners, original creators, or people depicted, and I encourage you to support them in any way you can.

If you are the current owner or original creator of, or a person (or the legal representative of a person) depicted in, any of the concepts that any of my models aims to emulate, and you would like that specific model removed, please send me a private message here or on Reddit with proof of your identity and of your claim, and I will remove it.

Additionally, I do not endorse the use of my models to break the law or to commit acts that are legal but immoral, such as spreading misinformation with deepfakes.

Comments (16)

Are you supposed to load both the high-noise and low-noise versions as LoRAs? Or how does this work?

Bro, I literally linked the recommended workflow in the model description...

Really neat workflow that seems to capture the essence of what the developers may have had in mind with the two noise models, although I have no idea lol

Also works with T2V using only the high-noise file, very good!

Yep, I can confirm, amazingly sharp results!

Hello, I'd like to ask: since both of your sampler seeds are fixed, does that mean you think it's better to fix the high-noise seed too? Should I use your seeds, or can the high-noise seed be random? I know the low-noise seed is always fixed, but I saw that the high-noise seed was also fixed in your workflow, which is why I'm wondering. Or was that just for testing, and in practice I can use a random seed?

I've been using the same seed for high and low noise, but randomized, and it's worked pretty well so far.

https://huggingface.co/Kijai/WanVideo_comfy/tree/main/Wan22-Lightning

I tried various combinations of Kijai’s 4-step dual-noise acceleration LoRA with your smartphone LoRA, but the results were unsatisfactory. I hope you can provide an 8-step workflow optimized for acceleration.

I just uploaded an entirely new model version and inference workflow version. Feel free to try out both.

For some reason I get grey output. I use all the same settings as you except WAN2.2 fp8 scaled.

Hello, while using it I found that the men all have nipples showing through their clothes, and the same problem also occurs for some women.

The V2 high-noise version is still way better, at least in my case. I use it in both the high-noise and low-noise paths.

I just uploaded an entirely new model version and inference workflow version. Feel free to try out both.

@AI_Characters kool will give it a spin

Nice

Files

WAN2.2-HighNoise_SmartphoneSnapshotPhotoReality_v3_by-AI_Characters.safetensors
