Sampler: dpmpp_2m

Scheduler: Beta

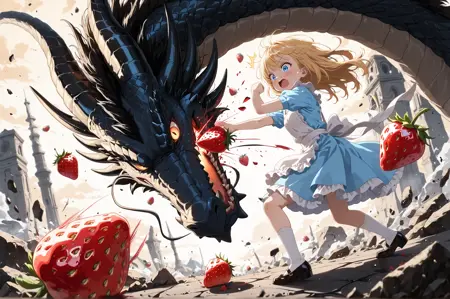

23-28 steps. No LoRA has been used in any image.

Mikogen.com (Create comics and/or generate images)

(Try it for free with daily free credits).

To achieve quality results, a base resolution of 1024×1536 is recommended (before any kind of hi-res fix; the shorter side should be 1024 px or higher).

v1.0 Balance Mix

This version is a middle ground between the “super-detailed” and the “Extreme Mix” versions.

It is not a merge of the two models, but a new model with improvements taken from both plus new ones (mainly for better hands and feet).

The model is still very good for backgrounds and lighting, yet the most impressive results in terms of realism combined with anime faces remain somewhat exclusive to the “Extreme Mix” model.

Instead, this one is much more “friendly” to anime styles (anime screencap, flat colors, anime illustration, no lineart, etc.).

Usually, placing any of those tags at the beginning is more than enough and the outputs look less overloaded.

Backgrounds stay highly detailed, but sometimes, especially when using the “intricate” tag, they can become too abstract.

This model is easier to prompt than the Extreme version.

Overall, it is probably a much more versatile model that is easier to prompt for a wider range of styles and environments.

v1.0 Extreme Mix

This “Extreme Mix” version combines every improvement from the previous two models with a much more intense boost in detail level, background quality, and overall fidelity.

Because of that, the model can be a bit harder to prompt, especially for flat anime styles, but it's perfectly doable.

For a flat anime style with this model, the key is to include these tags:

(anime screencap:1.25), (flat colors:1.4)

both at the beginning and at the end of the prompt. (anime coloring) is also an option.

(These are ComfyUI weights; other systems may need much lower values, and the right value also depends on the length of the prompt. If the prompt is short, the weights should be reduced to avoid oversaturation.)
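As a rough illustration of the tag placement described above, the sketch below formats the two style tags with their weights and wraps a prompt body with them at both ends. The helper names and the example prompt body are hypothetical, not part of the model or of ComfyUI; the weights are ordinary parameters so they can be lowered for short prompts or other systems.

```python
def weighted(tag: str, weight: float) -> str:
    """Format a tag with parenthesized emphasis, e.g. (flat colors:1.4)."""
    return f"({tag}:{weight:g})"


def flat_anime_prompt(body: str, screencap_w: float = 1.25, flat_w: float = 1.4) -> str:
    """Place the flat-anime style tags at both the start and the end of the prompt."""
    style = f"{weighted('anime screencap', screencap_w)}, {weighted('flat colors', flat_w)}"
    return f"{style}, {body}, {style}"


# Hypothetical prompt body, used only for illustration.
print(flat_anime_prompt("1girl, city street, night"))
```

Passing lower values for `screencap_w` and `flat_w` is one way to follow the advice about reducing weights for short prompts.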

By default the output may lean quite “2.5D” or look more adult.

To make characters appear younger you can add the tag “(young:1.35)” (or others like “big eyes”, “big head” that the model links to younger characters without distorting proportions).

For shiny materials, reflections, particles, dark scenes and high detail, the results can be straight-up sensational with the right prompting.

v2.0 Superdetailed

This version is much more refined and generates more consistent and stylized results as standard.

It supports all types of styles with the appropriate prompting while maintaining high quality and detail.

Version 2.0 performs better in dark scenes.

The style is slightly more “moe” than version 1.0.

The maximum possible detail in backgrounds may still be slightly higher in version 1.0.

==================

v2.0 Anime

This version improves the basic detail even with heavy weights for anime such as “anime screen” or “anime style.”

This version attempts to approximate the “Nijijourney” style.

Apart from general improvements, the style tends slightly toward a more moe or adorable style (but remains just as versatile for all types of styles, from neutral to anime or semi-realistic).

==================

v1.0 Anime and v1.0 Superdetailed: Initial release.

About:

A merge centered on anime style but highly adaptable, especially to dark lighting, detailed backgrounds, or even semi realistic styles.

All sample images are generated as follows:

Steps: 23

CFG: 7.5

Sampler: dpmpp_2m

Scheduler: beta

Base resolution:

1024x1536

Latent upscale x1.29

Second KSampler (for hiresfix) with the same settings as the first, using a denoise value of 0.45-0.52
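To get a feel for the output size of the hires-fix pass, the sketch below works out what a x1.29 latent upscale of 1024x1536 comes to in pixels. It assumes the latent grid is 1/8 of the pixel size and that the scaled latent size is rounded to the nearest whole cell; both are assumptions about the pipeline rather than something stated on this page, and the function name is hypothetical.

```python
def hires_size(width: int, height: int, scale: float = 1.29, latent_factor: int = 8) -> tuple:
    """Return the pixel size after scaling the latent grid by `scale`.

    Assumes one latent cell covers latent_factor x latent_factor pixels.
    """
    latent_w = round(width / latent_factor * scale)
    latent_h = round(height / latent_factor * scale)
    return latent_w * latent_factor, latent_h * latent_factor


print(hires_size(1024, 1536))  # (1320, 1984)
```

Under these assumptions the second KSampler works on roughly a 1320x1984 image.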

Prompting is very important.

Use style reinforcement tags (anime, flat colors, anime screencap, etc.) at the beginning OR end of the prompt.

(Repeating the tag at the end is only necessary if the prompt is very long. With this model, doing so causes no degradation and no “burn-in.”)

For backgrounds, it's best to add various background quality tags at the end of the prompt.

Description

This version adds massive detail, especially to the backgrounds.

It is just as flexible, but you will need to increase the weights for more anime or moe styles.

Sampler: dpmpp_2m

Scheduler: Beta

28 steps. No LoRA has been used in any image.

All sample images are generated as follows:

Steps: 28

CFG: 7.5 or 8

Sampler: dpmpp_2m

Scheduler: beta

Base resolution:

1024x1536

Latent upscale x1.29

Second KSampler (for hiresfix) with the same settings as the first, using a denoise value of 0.52 (Fixed hires-fix seed in the examples: 566302606917507)

Prompting is very important.

Use style reinforcement tags (anime, flat colors, anime screenshot, etc.) at the beginning of the prompt.

If a prompt is too long, it will lose stylistic influence, but this can be fixed by adding one of the style tags again at the end.

For backgrounds, it's best to add various background quality tags at the end of the prompt.
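The ordering advice above can be sketched as a small prompt builder: style tags first, subject tags next, background quality tags last, with one style tag repeated at the end when the prompt gets long. The cutoff for a "long" prompt (`repeat_style_after`) and the example background tag are hypothetical; the page does not give a number or name specific background tags.

```python
def build_prompt(style_tags, subject_tags, background_tags=(), repeat_style_after=40):
    """Join tags in the recommended order.

    repeat_style_after is a hypothetical cutoff (in tags): beyond it,
    the first style tag is appended again at the end of the prompt.
    """
    parts = list(style_tags) + list(subject_tags) + list(background_tags)
    if style_tags and len(parts) > repeat_style_after:
        parts.append(style_tags[0])  # reinforce the style at the end
    return ", ".join(parts)


# Tiny cutoff so the repeat is visible in a short example.
print(build_prompt(
    ["anime screencap", "flat colors"],
    ["1girl", "rainy street"],
    ["detailed background"],  # hypothetical background quality tag
    repeat_style_after=3,
))
```

With the default cutoff, a short prompt like this one is returned without the repeated style tag.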

Comments (14)

Very good model according to the example pics, really amazing, but there's one question: this model tends to generate very round eyes. Any method to fix that? Prompt, LoRA or something?

and add prompt for other example pics plz

In those examples, they are more rounded because I used "big eyes" in all of them.

The character's age greatly affects their roundness.

But of course, you can adjust it using prompts.

Here you can see variations of eyes:

Riwer OK, thanks.

prompts of the sample pics?

I tried using the sample prompt and settings, but the output images did not match the samples.

It seems that some important elements, such as negatives, custom nodes, or Lora settings, may be missing.

If possible, could you please upload the entire workflow?

All images use hires fix (a second KSampler with the same seed, 12 steps and 0.52 denoise, after increasing the latent space by x1.5. The base resolution is 1024x1536).

All of this is the most decisive factor for generation.

Additionally, the scheduler for both the base and the hires fix is "beta".

I don't use LoRAs or extras.

Let me know which specific image you're interested in, and I'll check the negative prompt I used.

Riwer https://civitai.com/images/94326726

This is my workflow.

Please let me know if you notice anything unusual.

takeo5433 You're right on both counts.

The negative prompt you're using is too simple.

It will always remove too much quality, especially with particularly "dense" models.

Additionally, my "giant spaghetti workflow" led me to give you incorrect settings, ones that weren't even being read as I thought, so I apologize for that. (Although the main issue is still the negative prompt).

The upscale factor is x1.29, not x1.5, and I had fixed seed enabled in the hires-fix KSampler, but not set to the same seed as the base.

Anyway, I'll send you the workflow that produces the EXACT result shown in the demo image, so you can better understand how to influence these models and experiment with them. (Although, essentially, what will boost the quality of your generations is using a more comprehensive negative prompt.)

https://civitai.com/articles/18229

Hi, I've been using your checkpoint for a while, and I love the images that come out. But I often get hands with 6 fingers, for example when holding something. Do you have any advice to counter that? I'm pretty sure it comes from the checkpoint (maybe I'm wrong).

The base model is Illustrious, and this is something that may or may not happen depending on the generation. However, you can avoid it in several ways.

Mainly by improving your negative prompt or using LoRAs/embeddings for hands.

Alternatively, keep generating variations with different seeds until the results are more accurate.

If you particularly like a generation from a specific seed, you can use "tricks" such as increasing or decreasing the step count by 1, or slightly adjusting the CFG value.

In my tests, there are drastic changes in the output for the same parameters between steps 23 and 24 (and beyond a certain point, more steps aren't always better).

As a last resort, if you can't fix a hand in an image you really like, you can always use inpainting, either with the same model or a different one.
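The step and CFG "trick" mentioned above amounts to trying a few settings close to the original while keeping the seed fixed. A minimal sketch of listing those nearby settings follows; the function name and the CFG offset of 0.5 are hypothetical, since the reply only says to adjust the CFG "slightly".

```python
from itertools import product


def nearby_settings(steps: int, cfg: float, cfg_delta: float = 0.5):
    """Yield (steps, cfg) pairs around a base setting, skipping the base itself."""
    step_options = (steps - 1, steps, steps + 1)
    cfg_options = (cfg - cfg_delta, cfg, cfg + cfg_delta)
    for s, c in product(step_options, cfg_options):
        if (s, c) != (steps, cfg):
            yield s, c


# Eight candidate settings to re-run with the same seed.
for s, c in nearby_settings(23, 7.5):
    print(f"steps={s}, cfg={c}")
```

Each pair would be run with the seed of the generation you liked, and the outputs compared by eye.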

@Riwer mmh ok ok, I will test, thanks for your answer!
