EasyWan22 FastMix (Wan 2.2 I2V-A14B)

Wan 2.2 I2V-A14B, 6 steps per generation. License: Apache-2.0.

I'm using Q4_K_M with a GeForce RTX 3060 12GB.

For different quantizations, see HuggingFace.

2025/08/25 FirstStepCfgHack

Boost1stStep sample: A-ON, A-OFF | B-ON, B-OFF

Wan22-I2V-FastMix_v10-H

HighNoise

Sampler

scheduler: lcm

shift: 5

cfg: 1

sigmas: 1, 0.94, 0.85, 0.73, 0.55, 0.28, 0

steps: 6, start_step: 0, end_step: 3

Enhance-A-Video

weight: 1

Fresca

use_fresca: true

NAG

Kijai default

Additional Negative Prompt:

mouth moving, 嘴在动, talking, 说话, speaking, 讲话,

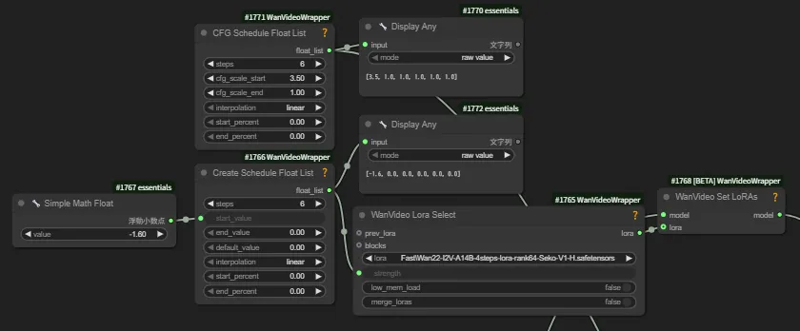

EnhanceMotion: <lora:Wan2.2-Lightning/Wan2.2-I2V-A14B-4steps-lora-rank64-Seko-V1/high_noise_model.safetensors: -1 ~ 1>

Wan22-I2V-FastMix_v10-L

lightx2v_14B_T2V_cfg_step_distill_lora_adaptive_rank_quantile_0.15_bf16.safetensors

lightx2v_I2V_14B_480p_cfg_step_distill_rank256_bf16.safetensors

LowNoise

Generation

Sampler

scheduler: lcm

shift: 5

cfg: 1

sigmas: 1, 0.94, 0.85, 0.73, 0.55, 0.28, 0

steps: 6, start_step: 3

Enhance-A-Video

weight: 1

NAG

Kijai default

Additional Negative Prompt:

mouth moving, 嘴在动, talking, 说话, speaking, 讲话,
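The HighNoise and LowNoise sampler settings above share one 6-step sigma list and differ only in start_step and end_step. The following is a minimal sketch of how that split could be read, assuming step i denoises from sigmas[i] to sigmas[i+1] and that end_step is exclusive. The helper name `stage_transitions` is hypothetical and is not part of any sampler node.

```python
from typing import List, Optional, Tuple

# Sigma list copied from the HighNoise / LowNoise sampler settings (6 steps -> 7 values).
SIGMAS_6: List[float] = [1.0, 0.94, 0.85, 0.73, 0.55, 0.28, 0.0]


def stage_transitions(
    sigmas: List[float], start_step: int = 0, end_step: Optional[int] = None
) -> List[Tuple[float, float]]:
    """Return the (from_sigma, to_sigma) pairs one stage would run.

    Assumption: step i goes from sigmas[i] to sigmas[i + 1], and end_step
    is exclusive. An omitted end_step means "run to the last step".
    """
    total_steps = len(sigmas) - 1
    if end_step is None:
        end_step = total_steps
    if not 0 <= start_step <= end_step <= total_steps:
        raise ValueError("start_step/end_step out of range for this sigma list")
    return [(sigmas[i], sigmas[i + 1]) for i in range(start_step, end_step)]


# HighNoise: start_step 0, end_step 3. LowNoise: start_step 3, no end_step.
high = stage_transitions(SIGMAS_6, start_step=0, end_step=3)
low = stage_transitions(SIGMAS_6, start_step=3)

print("HighNoise:", high)
print("LowNoise: ", low)
```

Under these assumptions the HighNoise model handles sigma 1.0 down to 0.73 and the LowNoise model continues from 0.73 down to 0, so each model runs three of the six steps.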

Refiner

SeedGacha SSR Video > Upscale x1.5 & Encode samples

Sampler

scheduler: lcm

shift: 5

cfg: 1

sigmas: 1.0, 0.97, 0.94, 0.90, 0.85, 0.795, 0.73, 0.65, 0.55, 0.42, 0.28, 0.14, 0.0

steps: 12, start_step: 10

add_noise_to_samples: true

Enhance-A-Video

weight: 1

NAG

Kijai default

Additional Negative Prompt:

mouth moving, 嘴在动, talking, 说话, speaking, 讲话,
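The Refiner's 12-step sigma list can be compared with the 6-step list used for generation. The sketch below only inspects the numbers listed on this page. It assumes that start_step: 10 means the sampler begins at sigmas[10], and the variable names are illustrative.

```python
# Sigma lists copied from the generation and Refiner sampler settings.
SIGMAS_6 = [1.0, 0.94, 0.85, 0.73, 0.55, 0.28, 0.0]
SIGMAS_12 = [1.0, 0.97, 0.94, 0.90, 0.85, 0.795, 0.73,
             0.65, 0.55, 0.42, 0.28, 0.14, 0.0]
REFINER_START_STEP = 10  # from "steps: 12, start_step: 10"

# Every second value of the 12-step list is a value of the 6-step list,
# so the Refiner list is the generation list with one midpoint added per step.
every_other = SIGMAS_12[::2]

# Assumption: start_step indexes into the sigma list, so the Refiner only
# walks the tail of the schedule.
refiner_sigmas = SIGMAS_12[REFINER_START_STEP:]
refiner_steps_run = len(refiner_sigmas) - 1

print("matches 6-step list:", every_other == SIGMAS_6)
print("refiner sigmas:", refiner_sigmas)
print("refiner steps actually run:", refiner_steps_run)
```

Read this way, the Refiner runs only the final two steps, from sigma 0.28 through 0.14 to 0, which is the range covered by the last generation step. With add_noise_to_samples set to true, the upscaled samples are presumably re-noised to that starting level before those two steps run.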

Comments (21)

is this fastmix better in time/quality than your 6-step lightning quantized checkpoints in your huggingface repository?

For now I'm using FastMix.

so how many cfg and steps should we use in this one?

can u add workflow plz

@Zuntan This is fucking hell

@Zuntan dude, can you give me a video link (or whatever you can send) that explains how it works? I just use a simple workflow right now, yours is fucking huge.

Both of your merged models are impressive (Wan2.2 Lightning LoRA merged and FastMix). They work in as few as four steps and 6GB vram. I will post the results here soon.

By the way, any chance to make the Q4KM Wan2.2 T2V 14B Lightning version?

Wow, I was wondering if you have a simpler workflow, based on the initial 2.2 Lightning workflow, that allows extra LoRAs, including high/low noise compatible LoRAs?

Which one is the full version in your Hugging Face repo?

On further experimentation it turns out the appearance alteration was entirely my fault.

Pretty cool checkpoint. It seems to adhere to my prompt more strongly than others I've used. My only complaint is that it definitely alters the look of the source image a fair amount. But that's not really the end of the world. I'm just happy my long ass prompts are actually useful lol

Gonna try some of the others.

New workflow doesn't work?

https://github.com/Zuntan03/EasyWan22/blob/main/EasyWan22/Workflow/00-I2v_ImageToVideo.json

Hey, there's a newer version of the I2V lightx2v LoRA recently released for Wan2.2. Can you merge Wan2.2 I2V Q4KM with this one please?

https://huggingface.co/lightx2v/Wan2.2-I2V-A14B-Moe-Distill-Lightx2v/tree/main/loras

Which VAE do I have to use?

When I use the standard WAN2.2_vae I get a mismatch error.

what kind of file type is ".gguf"? I can't use this in comfyui. Why isn't it a ".safetensors" file?

GGUF files work once they are saved into the unet folder. They are usually optimized for lower-VRAM systems.

And the workflow? I tried using your video workflow, it's too confusing.

This work is very good! It runs easily for me on an RTX 3060 12GB. GGUF requires plugin support; for reference, see https://github.com/city96/ComfyUI-GGUF.git

You can use the built-in video workflow in ComfyUI, just replace the UNet loader with the "UNet loader (GGUF)" node.

I'm back to this model after a few months and honestly... how is this so damn good? I've been trying all of the checkpoints for months now and this is the only one I can get to truly follow my prompts. It's so full of vibrant motion too.

I'm a rookie and I have never used GGUF models. Can somebody please give me a JSON or a workflow?