Depth Reference Fusion LoRA

📝 Short description

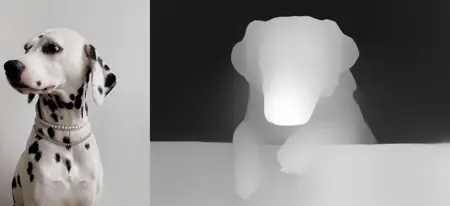

A LoRA for Flux Kontext Dev that fuses a reference image (left) with a depth map (right).

It preserves identity and style from the reference while following the pose and structure from the depth map.

Trigger word: redepthkontext

Example 2

Example 3

📖 Extended description

This LoRA was primarily trained on humans, but it also works with objects.

Its main purpose is to preserve identity — facial features, clothing, or object characteristics — from the reference image, while adapting them to the pose and composition defined by the depth map.

⚙️ How to use

Concatenate two images side by side:

Left: reference image (person or object)

Right: depth map (grayscale or silhouette)

Add the trigger word

redepthkontextin your prompt.

✅ Example prompt

redepthkontext change depth map to photo

🎯 What it does

Preserves character or object identity across generations.

Embeds the subject into the new pose/scene defined by the depth map.

Works best when the depth map has similar proportions and sizes to the reference.

⚡ Tips

Works better if the depth map is not drastically different in object scale.

Can be combined with text prompts for additional background/environment control.

📌 Use cases

Human portraits in different poses.

Consistent character design across multiple scenes.

Object transformations (cars, furniture, props) with depth-guided placement.

Storyboarding, comics, or animation frame generation.

Description

FAQ

Comments (39)

It works great! Thank you!

Glad to hear, thank you! 🙌

The only catch is that when I load two 1024x1024 images, it stitches them and resizes 2048x1024 to 1456x720, so in the end we get a modified 720x720 image, not the 1024x1024 image.

Yeah, this is limitation of flux, and hard to fix it :(

Cropping reference object to vertical aspect ratio, can help a bit, if it is possible for your case.

这个问题很好解决,使用没有经过FluxKontextImageScale节点的2048*1024的图输入到latent接口上即可!正面条件是经过FluxKontextImageScale和ReferenceLatent的就行了。

This problem is very easy to solve. Just input the 2048*1024 graph that has not passed through the FluxKontextImageScale node into the latent interface! The positive condition only needs to be verified through FluxKontextImageScale and Reference client.

Does the LoRA not work by just chaining together two ReferenceLatent nodes, without stitching?

kaptainkory 估计不行,它里面是含有latent的,合并后信息就乱了。

I guess it won't work. It contains latent information. After merging, the information will be in a mess.

zml_w

謝謝你的評論!很好的建議,你說得對,確實可以,雖然效果可能不如推薦的解析度。但值得一試!

thank you for your comment! Good advice, you right it can work, maybe not that good as with recomended resolution. But it worth to try!

kaptainkory Lora trained that way that it require to have both images (reference and depth) in the latent that feed into sampler. So probably won't.

Let say i would like to create 1280x832 image using the lora

My flow to this lora is:

1) Manually prepare subject image 768x768 (for example: standing girl and bicycle)

2) Manually prepare action image 768x768 (for example: girl riding a bike)

-- The rest is in Comfy workflow

3) Extract depth map from action image

4) Stitch subject image and depth image

5) Run Kontext

6) split output to separated images, take right half

7) prepare black image 1280x832

8) resize splited output to minor destination size (Resize to shortest) 768x768 -> 832x832

9) blend splited output on black image in the center

10) once again run kontext but without lora with prompt: fill black matching to the image in the center (you can improve the prompt)

-- End kontext flow, but...

Because Kontext is pretty shitty itself I copy (clipspace) my kontext image as input to QWEN img2img with strength denoise 0.3 res_multisetp sampler.

-- End flow. I've got pretty image with subjects and action i want.

zml_w I'm not sure I got the last sentence right, but I tried inputting a stitched image 1.5x larger than recommended. The result wasn't good.

kaptainkory I checked more, right now it don't work this way, but i defenetly should retrain it to support this usage. Thank you for the idea!

Hello, it is really nice! May I ask you how do you train such a model? How does one entry in the dataset looks like? And how big was the dataset? Thank you!

hey.

Sorry, I am planning to add some more LoRAs with a similar pipeline, so I’m not sharing the full dataset preparation details right now. Once I finish this series of LoRAs, I may release the pipeline publicly so that others can reproduce and adapt it

thedeoxen are you thinking to make similar for lineart controlnet too?,that would be great.Thank you ❤️❤️❤️

LastDelivery4801226 yep, line art, poses, canny edges planned :)

@thedeoxen my hero

@LastDelivery4801226

@LastDelivery4801226 Hey, just released lineart 🙌 Hope it will be helpfull for you

https://civitai.com/models/1902256?modelVersionId=2153190

I seem to be the only person in the world that can't get this to work. Where is this depth extract node? I can't find it online or in the comfy manager. I also getting an error on the workflow. Anybody can help me would be great.

hi, I am sory that workflow didn't work for you

I grouped midas model loader and midas depth approximation from this nodes

https://github.com/WASasquatch/was-node-suite-comfyui

but actually it can be any of depth extraction

use depthcrafter or depth anything

a workflow would be great, since the aspect ratio issues are not too clear ... would like a single image 1:1 with the depth map

I just share an example workflow . You may try it. p.s The ratio don't need to be 1:1

https://civitai.com/models/1887029/depth-reference-fusion-lora-example-workflow?modelVersionId=2135933

It seems to work decently well with anime/digital art style with the few tests I've done - not nearly as good as with realistic images though. Could something like this be doable with lineart/canny too?

Hey, thank you. Next week i am going to publish lineart and canny. Maybe also poses.

I'll ping you when it will be available :)

@Kenchaila

Hey, just released lineart 🙌 Hope it will be helpfull for you

https://civitai.com/models/1902256?modelVersionId=2153190

@thedeoxen Thanks for the heads up! Gonna test it out soon :)

Error:

GroundingDinoSAM2Segment (segment anything2)

Failed to get source for <function Enum._generate_next_value_ at 0x0000021869CE0AE0> using inspect.getsource

Really like it so far, but i cant get to the same quality of the referance images, are u using like an absurdly big resolution or something urrently trying with around 1568 width wich makes the final output 784x672 which is not a great resolution and the model seems to do bad on smaller details.

Was anybody able to make it work on ForgeUI?

ATTENTION Comfy UI users

There is an easier, faster and better looking way to use this LoRA.

Instead of using the Stitch node that is terrible wasteful in resources and most of the time the final result is less than ideal.

You should use the Reference Latent nodes, just daisy chain two of this bad boys then connect in the first one the depth image and in the second one the ref image.

Finally the latent connector for the ksampler, or similar, use a Empty Latent node to define the dimensions.

Maybe i will post later a workflow that uses this technique.

Hey, that works great for me. Surprised how accurate the results. Any tips for training? I want to train something similar for my self

Hey, sorry for the delay. I opensourced datasets that i prepared for training.

https://huggingface.co/datasets/thedeoxen/refcontrol-flux-kontext-dataset

Some details about training you can find here

https://huggingface.co/thedeoxen/refcontrol-flux-kontext-reference-pose-lora/discussions/1#68addfb59ae343ad624e1ca7

@thedeoxen Oh, thanks man

It's crazy to me that with the advent of Qwen Edit and Flux Klein, this is still the most powerful method to edit an image via depth map. Those models already have some fundamental concept when passing a depth map as input but the results are no where near as good as Kontext + your LoRA.

Do you have any plans to retrain on any of the newer architectures?

Thank you, I am on it, there is some difficulties with them, but hope that release it soon.

@thedeoxen Amazing news! I'll be on the lookout

Details

Files

Available On (1 platform)

Same model published on other platforms. May have additional downloads or version variants.