Real-Qwen-Image v2.0

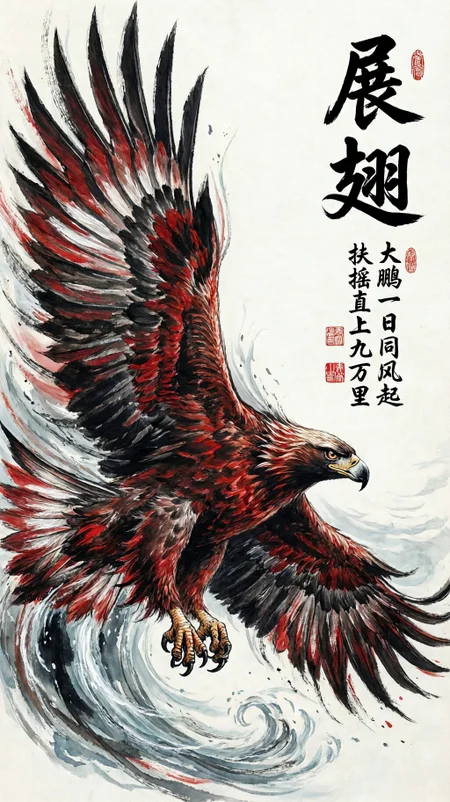

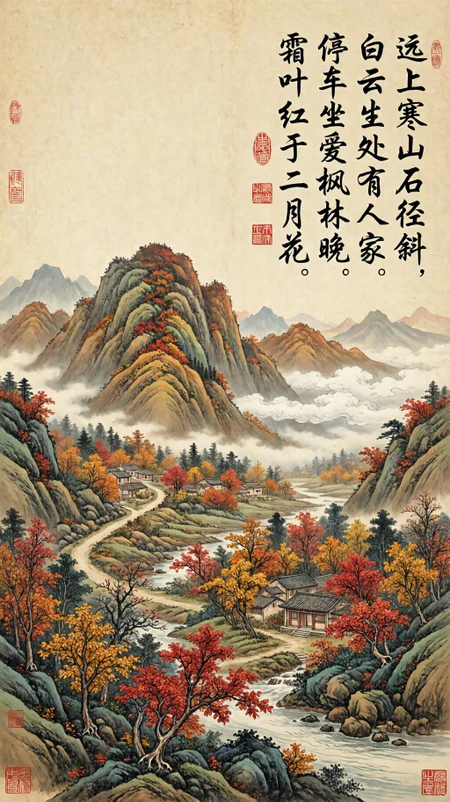

This model is a fine-tune of Qwen-Image-2512. Compared with the official model it offers better sharpness and realism, and it has been optimized for the aesthetics of Asian faces. See the sample images for specific results. The model is easy to use, adapts well to a range of parameters, is compatible with LoRAs, and produces high-quality text and images, making it a truly open-source productivity tool.

Usage:

For the basic workflow, please refer to: Image

model shift: 1.0 - 8.0;

inference cfg: 1.0 - 4.0;

inference steps: 10 - 50;

sampler / scheduler: euler / simple, or any other.
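As a rough illustration, the ranges above can be written down as a small lookup table and checked before a run. This is only a sketch: the names `RECOMMENDED_RANGES` and `out_of_range` are hypothetical and not part of ComfyUI or this model, and the example values are arbitrary picks from inside the listed ranges.

```python
# Ranges copied from the settings listed above; the key names are hypothetical.
RECOMMENDED_RANGES = {
    "shift": (1.0, 8.0),
    "cfg": (1.0, 4.0),
    "steps": (10, 50),
}


def out_of_range(settings):
    """Return the names of settings that fall outside the recommended ranges."""
    problems = []
    for name, (low, high) in RECOMMENDED_RANGES.items():
        value = settings[name]
        if not low <= value <= high:
            problems.append(name)
    return problems


if __name__ == "__main__":
    # Arbitrary illustrative values, not a recommendation from the author.
    example = {"shift": 3.0, "cfg": 1.0, "steps": 20}
    print(out_of_range(example))
```

An empty list means every value sits inside its listed range. Values outside the ranges may still work; the ranges are the author's suggestions, not hard limits.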

Also on: Huggingface.co, Modelscope

Acknowledgements:

Many thanks to kanttouchthis for providing the quantization script and the sdnq_uint4_r128 quantized model. Its image quality is above Q6 and close to Q8. Everyone is welcome to try it; it requires the ComfyUI-SDNQ plugin to be installed.
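According to the author's reply in the comments, the ComfyUI-SDNQ loader imports from the hqqsvd library, so that package has to be present in the Python environment ComfyUI runs in. A minimal sketch for checking this, assuming it is run with the same interpreter as ComfyUI (the helper name `is_installed` is hypothetical):

```python
import importlib.util


def is_installed(module_name):
    """Return True if a top-level module can be found in the current environment."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    if is_installed("hqqsvd"):
        print("hqqsvd is available")
    else:
        print("hqqsvd not found; install it before loading SDNQ models")
```

This only confirms that the package can be located. It does not verify that its version matches what the plugin expects.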

===============================================

Real-Qwen-Image v1.0:

This model is a fine-tune of Qwen_Image that mainly improves the clarity and realism of generated images. See the example images for specific results; the images also embed the ComfyUI workflow. The model is very easy to use, generates images quickly, and has good LoRA compatibility.

The bf16 / GGUF versions can be downloaded from: https://www.modelscope.cn/models/wikeeyang/Real-Qwen-Image

Model usage:

Basic combination: euler + simple, cfg 1.0, steps 20 - 30. You can also try other combinations.

Example workflow: please refer to https://civarchive.com/images/97293023

Text encoder model files: https://huggingface.co/Comfy-Org/Qwen-Image_ComfyUI/tree/main/split_files/text_encoders (the bf16, fp16, fp8, fp8_scaled, and GGUF versions all work).

Also on Huggingface.co

Description

Real-Qwen-Image-V2, based on Qwen-Image-2512.

Comments (7)

I was confused about this comfyui-SDNQ Loader thing.

On the GitHub page the file listing is almost empty.

The only working file is loader.py, so I opened that and found this description.

The function:

1. Detects and handles different model formats (regular, diffusers, mmdit)

2. Configures model dtype based on parameters and device capabilities

3. Handles weight conversion and device placement

4. Manages model optimization settings

5. Loads weights and returns a device-managed model instance

Loader.py also installs this:

https://github.com/kanttouchthis/hqqsvd/tree/main/hqqsvd

The main file list here gives me a better idea of what this custom node does. But I'm guessing it isn't needed to run your Qwen refiner model.

Thank you for the detailed information and for reading the source code carefully. The author clearly states that before installing the "ComfyUI-SDNQ" node you need to run "pip install git+https://github.com/kanttouchthis/hqqsvd", because parsing SDNQ quantized models requires the hqqsvd library. The top of the "loader.py" script imports it: "from hqqsvd.linear import HQQSVDLinear, ComfyLora".

Great model, but Q8 seems broken.

I was having trouble getting the same result from a workflow. Mine was washed out, lower contrast, less detail.

Realized that the only difference in the workflows was that he was using FP8 and I was using Q8.

I switched to FP8 and generated the EXACT same image as the other guy. I also saw that the time is now cut in HALF with everything else the same. I know GGUFs are slower, but they should not be 2x slower.

So... EXCELLENT MODEL. But, the GGUF at least listed here is bad.

The image I used to prove this out: https://civitai.com/images/119810290

After converting to GGUF I tested it and it seemed fine. I will test your workflow to check what happened...

Please refer to: https://civitai.com/posts/26458345. I tested fp8 / Q8_0 / Q4_K_M / SDNQ-uint4 again with all parameters the same, and the images look normal, with only minor differences. These come from differences in quantization precision across layers.
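To illustrate why lower-precision quantization leaves small differences, here is a toy sketch that rounds a handful of made-up values to 4-bit and 8-bit uniform grids and compares the round-trip error. It is an assumption-laden simplification: real GGUF and SDNQ formats use block-wise and more elaborate schemes, and the `weights` list is invented for the example.

```python
def quantize_roundtrip(values, bits):
    """Uniformly quantize values to 2**bits levels over their range, then dequantize."""
    low, high = min(values), max(values)
    if high == low:
        return list(values)
    scale = (high - low) / (2 ** bits - 1)
    return [low + round((v - low) / scale) * scale for v in values]


def mean_abs_error(original, restored):
    """Average absolute difference between two equal-length sequences."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# Made-up values standing in for one layer's weights.
weights = [-0.82, -0.31, -0.05, 0.0, 0.07, 0.26, 0.44, 0.91]

err4 = mean_abs_error(weights, quantize_roundtrip(weights, 4))
err8 = mean_abs_error(weights, quantize_roundtrip(weights, 8))

print(f"4-bit mean abs error: {err4:.5f}")
print(f"8-bit mean abs error: {err8:.5f}")
```

The 8-bit grid is much finer, so its error is smaller, but neither is zero. Small per-layer errors like these are one plausible source of the minor image differences described above.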

@wikeeyang I hear you. But I've checked it out. With that workflow I posted from, it's way off. To the point that the Q8 was unusable.

@fohowop124538fdfdfdfds Possible causes: 1. your GGUF version needs updating, 2. your ComfyUI core version needs updating, 3. something else in your environment; I don't know.