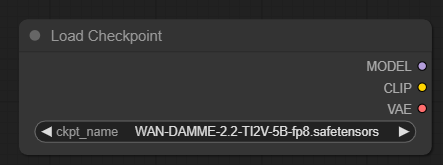

WAN 2.2 5B Turbo checkpoint for T2V/I2V. Everything is included: U-Net, CLIP and VAE. Just load it like any other checkpoint model and have fun.

4 steps are enough. Use CFG 1; the sampler can be Euler, SA_Solver or Uni_PC, and the scheduler simple, normal or beta. Multiplying the latent by 0.8 helps with oversaturation.
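As a rough illustration of the settings above, here is a minimal Python sketch. It only collects the recommended values in one place and shows the arithmetic of the 0.8 latent multiplier on a plain nested list. The dictionary keys, the lowercase sampler and scheduler spellings, and the `scale_latent` helper are assumptions made for this example; they are not part of the attached workflow, and the description does not say at which point in the graph the multiplier is applied.

```python
# Recommended settings from the description, collected in one place.
# Key names and lowercase spellings are illustrative assumptions.
RECOMMENDED = {
    "steps": 4,
    "cfg": 1.0,
    "samplers": ("euler", "sa_solver", "uni_pc"),
    "schedulers": ("simple", "normal", "beta"),
    "latent_multiplier": 0.8,
}


def scale_latent(latent, multiplier=RECOMMENDED["latent_multiplier"]):
    """Multiply every value of a (possibly nested) list by `multiplier`.

    A real latent is a tensor; a nested list stands in for it here so the
    example runs without any third-party library.
    """
    if isinstance(latent, list):
        return [scale_latent(value, multiplier) for value in latent]
    return latent * multiplier


# Hypothetical toy "latent" with two rows of two values each.
toy_latent = [[1.0, -2.0], [0.5, 0.0]]
scaled = scale_latent(toy_latent)
```

Scaling every value by the same factor below 1 shrinks the latent's overall magnitude, which is presumably why it tames oversaturated output.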

Preferred sizes are 1280x704 and 704x1280.
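For image-to-video, one simple way to choose between the two sizes is to match the orientation of the source image. The sketch below assumes exactly that rule; the `pick_size` helper is hypothetical, and sending square images to the landscape size is an arbitrary choice rather than a recommendation from the model author.

```python
# The two preferred output sizes as (width, height).
PREFERRED_SIZES = {"landscape": (1280, 704), "portrait": (704, 1280)}


def pick_size(src_width, src_height):
    """Return the preferred (width, height) matching the source orientation.

    Square inputs fall back to landscape (an arbitrary choice for this sketch).
    """
    key = "landscape" if src_width >= src_height else "portrait"
    return PREFERRED_SIZES[key]


# Hypothetical source images.
landscape_size = pick_size(1920, 1080)
portrait_size = pick_size(1080, 1920)
```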

LoRAs compatible with this model on Civitai.

A sample workflow is attached here as "training data".

Tech info:

base model is Wan2_2-TI2V-5B_fp8_e4m3fn_scaled_KJ

1. fp8 Fast is merged with Wan2_2_5B_FastWanFullAttn_lora_rank_128_bf16

2. fp8 Turbo is merged with Wan22_TI2V_5B_Turbo_lora_rank_64_fp16
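For readers unfamiliar with what "merged with a LoRA" means, the sketch below shows the usual idea on one tiny weight matrix: the low-rank product of the LoRA's two matrices is added to the base weight. It is a generic illustration in plain Python, not the procedure used for this checkpoint; the merge strength, the alpha scaling, and the tool the author used are not stated, so `strength`, `alpha`, and the `merge_lora` helper are assumptions.

```python
def merge_lora(weight, lora_down, lora_up, strength=1.0, alpha=None):
    """Return weight + scale * (lora_up @ lora_down) for list-of-lists matrices.

    weight:    out_features x in_features
    lora_down: rank x in_features
    lora_up:   out_features x rank
    scale is strength * alpha / rank when alpha is given, else just strength.
    """
    rank = len(lora_down)
    scale = strength * (alpha / rank if alpha is not None else 1.0)
    merged = []
    for i, weight_row in enumerate(weight):
        merged_row = []
        for j, base_value in enumerate(weight_row):
            # Entry (i, j) of the low-rank update lora_up @ lora_down.
            delta = sum(lora_up[i][r] * lora_down[r][j] for r in range(rank))
            merged_row.append(base_value + scale * delta)
        merged.append(merged_row)
    return merged


# Hypothetical 2x2 base weight with a rank-1 LoRA.
base = [[1.0, 0.0], [0.0, 1.0]]
down = [[1.0, 2.0]]      # rank 1, in_features 2
up = [[1.0], [0.5]]      # out_features 2, rank 1
merged = merge_lora(base, down, up)
```

Once the update is baked into the weights like this, no separate LoRA has to be loaded at inference time, which fits the "just load it like any other checkpoint" usage.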

Comments (34)

This model is unbelievable! The next big step: so fast, yet good quality! (I do have an RTX 5090.) Forget your worries about the resolution: other sizes work as well... Just brilliant! Thank you!

Look, I tried to generate full HD, and what did I get? White sparkles all over the video.

https://civitai.com/posts/22890941

Okay, you were right, even full HD works well. White dots were some bug of ComfyUI/CUDA.

@trolljump How did you get rid of the white sparkles, if I may ask? I can't seem to get rid of them. (I'm using SwarmUI)

@Eekcapone2144 Try the recommended resolution, and try multiplying the latent by 0.8.

@trolljump DOH! Didn't see the sentence about the 0.8 multiplier in the description. Much better now, thank you!

Suggested workflow for this one please? Ignore that-

This one is really fast.

Too late, I've uploaded a standard ComfyUI workflow for you as "Training data" 😁

Hello, is decoding fast?

Decoding is the heaviest part of the 5B model; it always takes a long time.

With 12 GB VRAM and 61 video frames, I see ~1 min for Full HD 8-step sampling and ~2 min for VAE decoding.

@mistporyvaev yeah generation is 5 times faster than decoding lol

KSampler takes 45 sec, but VAE decoding takes 15 min.

@kumarkishank959811 seems like we need a totally new thing: distilled lightning VAE ⚡️⚡️⚡️

36 minutes turned into 4-6...me and my RTX 3060 thank you

I simply combined together what real experts in their field have done. But thanks anyway. ✌️

I'm using an RTX 3060 too, and I generate videos in only 30 seconds at 540p resolution; together with VAE decoding it takes about 70-100 seconds. That's with the FastWan LoRA, or with the Turbo LoRA for image-to-video, at 4 steps.

@AndyZocker I have just 6 GB VRAM 😞 I can't even make videos with the high and low models at the same time without getting a CUDA error.

Workflow?

Attached as "training data" 😃

Thanks. It's really fast, but the video is not sharp at all, even using the scale you recommended. It looks like a VHS video from the eighties. I don't know what I'm doing wrong, because I use the workflow with the settings you recommended...

@dehyilo397 I have a lot of questions. Did you use text-only generation or text and an image? What did the generation request look like? If there was a source image, what size was it? Did you use my workflow?

Great model, thanks for the work.

Do you know if any LoRAs work with the model and, if so, what types? (e.g., Wan2.2, Wan2.1, other)

There are two types of WAN 2.2 models: 5B (small) and 14B (full). This model is based on 5B. So any LoRAs for 5B should work.

LoRAs for WAN 2.2 5B on Civitai

Thanks for sharing. Quick question, why 12 fps considering 5B can do 24?

I like slow-motion videos 😍

WTF ? I'm usually very careful when civitai models claim this kind of speed but... IT WORKS !

I could not get this kind of speed with a gguf+turbo lora, but somehow with this model I finally get the speed. THANK YOU

Glad to see your positive review ✌️

Works on my 8 GB VRAM GPU.

nice

Impressive! Works well for fast, less complex clips.

EDIT: Thanks a lot!!

Thank you! What is the best input size? I have seen blurred and distorted eyes.
