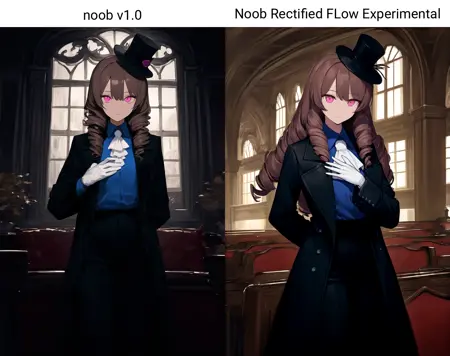

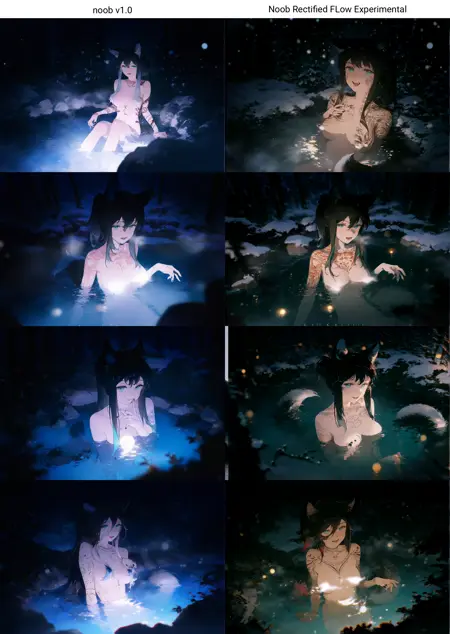

A finetune that uses NoobAI v-pred 1.0 as its base and converts it to Rectified Flow; by default it uses Anzhc's EQ-VAE-B7. This experiment struggles with dark scenes because the data is still out of distribution, but it shows that Rectified Flow is indeed the next step in SDXL's improvement efforts (beyond just EQ-VAE). Somehow, this model recovers from v-pred's innate blue shift and blue bias, but it still lacks improvements in the uncond and needs fixing for Zero Out negatives; both can be fixed in a proper finetune.

Usage Tips:

YOU'LL NEED TO USE COMFYUI/SWARMUI, or any webui that can apply ModelSamplingSD3 with a shift of 2.5.

Positive Prompt:

masterpiece, best quality

Negative Prompt:

worst quality, low quality, worst aesthetic, old, early, blurry, lowres, signature, artist name, watermark, twitter username, sketch, logo, furry, text, speech bubble, censored, ai-generated, censorship, censor, mosaic censor

(or similar tags, as needed)

Technical Settings:

Sampler: Euler, Euler CFG++. DO NOT USE ANCESTRAL SAMPLERS!

Steps: 20-28

Scheduler: Simple, Normal

CFG Scale: 4.5-6.0

CFG++ Scale: 1.0-2.0

Resolution: 1024x1024, or any aspect ratio with roughly 1,048,576 pixels (about 1 MP).

ModelSamplingSD3: 2.5
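For anyone wondering what ModelSamplingSD3 at 2.5 and the ~1 MP resolution rule actually do, here is a small Python sketch. `snr_shift` uses the time-shift formula SD3-style flow models use (as far as I know, the same formula as ComfyUI's `time_snr_shift`): sigma = shift·t / (1 + (shift - 1)·t), so a shift above 1 spends more of the steps at high noise. `simple_sigmas` and `dims_for_aspect` are hypothetical helpers for illustration, not part of any webui. The real Simple scheduler picks from a discrete timestep table, so its values will differ slightly, and rounding to multiples of 64 is an assumed SDXL bucket convention.

```python
import math


def snr_shift(t: float, shift: float = 2.5) -> float:
    """SD3-style time shift: maps t in [0, 1] to a shifted sigma in [0, 1].

    With shift > 1, evenly spaced t values land at higher noise levels.
    """
    return shift * t / (1.0 + (shift - 1.0) * t)


def simple_sigmas(steps: int, shift: float = 2.5) -> list[float]:
    """Hypothetical helper: evenly spaced t from 1 down to 0, each passed through the shift.

    Only an approximation of a "Simple" schedule; real schedulers sample a discrete table.
    """
    return [snr_shift(1.0 - i / steps, shift) for i in range(steps + 1)]


def dims_for_aspect(aspect: float, target_pixels: int = 1024 * 1024,
                    multiple: int = 64) -> tuple[int, int]:
    """Hypothetical helper: (width, height) near target_pixels for aspect = width / height.

    Both sides are rounded to a multiple of 64 (assumed bucket convention).
    """
    height = math.sqrt(target_pixels / aspect)
    width = height * aspect
    return (round(width / multiple) * multiple, round(height / multiple) * multiple)
```

For example, `dims_for_aspect(16/9)` gives 1344x768, and with shift 2.5 the midpoint t = 0.5 maps to sigma ≈ 0.71, so half of an evenly spaced schedule sits above roughly 71% noise.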

Is this another of your shitty models, Bluvoll? In a way, yes, but it is meant to show that Rectified Flow SDXL is completely possible: NoobAI adapts to it easily if we can hit it with enough data. How much data? The full NoobAI dataset, which I possess but can't use due to limited money. So if you believe this has a future, I humbly ask for donations so I can make it a reality. How much does it need? 2 epochs over the full dataset, or 20 million steps: some 1,600 to 1,900 USD, accounting for the issues that will inevitably appear.

"Why should I trust you, Bluvoll?" You shouldn't; we have a stain in the community called Unstable Diffusion, and another called Resonance Cascade. So if someone is willing to donate compute, that would be perfect. If you want to donate money instead, I have a Ko-fi, or you can find me in NoobAI's server or Arc en Ciel's. Please consider supporting this if you want a usable Rectified Flow model, not like a certain slop model that has a 7 in its version.

Training information:

Used shift 2.5 for training.

Used Cosine Optimal Transport.

Used Anzhc's EQ-VAE-B7.
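The training notes are terse, so here is a rough sketch of how one Rectified Flow training batch could be built with shift 2.5 and a cosine optimal-transport pairing. This is not the fork's actual code, and it rests on several assumptions: the SD3-style convention x_t = (1 - t)·x0 + t·noise with velocity target noise - x0; base timesteps drawn uniformly before shifting; and "Cosine Optimal Transport" read as minibatch OT that pairs each latent with the noise sample minimizing total cosine distance across the batch. The brute-force assignment is exact but only viable for tiny batches; a real trainer would use a Hungarian solver such as `scipy.optimize.linear_sum_assignment`. The model and loss are left out: training would minimize the MSE between the model's prediction at (x_t, t) and `v_target`.

```python
import itertools

import numpy as np


def shift_t(u: np.ndarray, shift: float = 2.5) -> np.ndarray:
    # SD3-style time shift; shift > 1 biases training timesteps toward high noise.
    return shift * u / (1.0 + (shift - 1.0) * u)


def cosine_ot_pairing(x0: np.ndarray, noise: np.ndarray) -> np.ndarray:
    """Assumed reading of "cosine OT": reorder `noise` so the total cosine distance
    between each latent and its paired noise sample is minimal (exact, brute force)."""
    a = x0.reshape(len(x0), -1)
    b = noise.reshape(len(noise), -1)
    a = a / np.linalg.norm(a, axis=1, keepdims=True)
    b = b / np.linalg.norm(b, axis=1, keepdims=True)
    cost = 1.0 - a @ b.T  # cost[i, j] = cosine distance between latent i and noise j
    n = len(x0)
    # Try every permutation; O(n!) so only usable for tiny illustrative batches.
    best = min(itertools.permutations(range(n)),
               key=lambda p: sum(cost[i, p[i]] for i in range(n)))
    return noise[list(best)]


def rf_training_pair(x0: np.ndarray, rng: np.random.Generator,
                     shift: float = 2.5, use_ot: bool = True):
    """Build (x_t, t, v_target) for one batch of latents under the assumed RF convention."""
    noise = rng.standard_normal(x0.shape)
    if use_ot:
        noise = cosine_ot_pairing(x0, noise)
    t = shift_t(rng.uniform(size=len(x0)), shift)  # uniform base timesteps, then shifted
    t_b = t.reshape(-1, *([1] * (x0.ndim - 1)))     # broadcast t over latent dims
    x_t = (1.0 - t_b) * x0 + t_b * noise            # straight-line interpolation
    v_target = noise - x0                           # velocity the model learns to predict
    return x_t, t, v_target
```

Because the target only depends on the clean latent and the noise, this objective does not care whether the base model was trained with epsilon or v-pred, which fits the fork being parameterization-agnostic.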

Future Improvements that can be done:

Better VAE using more data.

Finetuned CLIP text encoders, to recover CLIP-L and further improve the performance of CLIP-bigG.

Improved training with contrastive flow matching and other newer practices.
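Contrastive flow matching is only listed as a future improvement, so this is purely an illustration of the idea, assuming the common RF convention where the velocity target is noise - x0. Besides pulling each prediction toward its own target, it pushes the prediction away from the target of a different sample in the batch, weighted by a small λ. Building the negatives by rolling the batch by one position and using λ = 0.05 are assumptions for the sketch, and the batch needs at least 2 samples.

```python
import numpy as np


def contrastive_fm_loss(v_pred: np.ndarray, x0: np.ndarray, noise: np.ndarray,
                        lam: float = 0.05) -> float:
    """Sketch of a contrastive flow-matching loss under an assumed RF convention.

    Positive term: squared error to this sample's own velocity target (noise - x0).
    Negative term: squared error to another sample's target, subtracted with weight lam.
    """
    v_pos = noise - x0
    v_neg = np.roll(v_pos, shift=1, axis=0)  # target belonging to a different sample
    axes = tuple(range(1, v_pred.ndim))      # average over all non-batch dims
    pos = np.mean((v_pred - v_pos) ** 2, axis=axes)
    neg = np.mean((v_pred - v_neg) ** 2, axis=axes)
    return float(np.mean(pos - lam * neg))
```

With lam = 0 this reduces to plain flow matching; a larger lam pushes the conditional predictions further apart, which is the mechanism such methods rely on.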


Comments (23)

Rectal Flowo :DoroRun:

@DraconicDragon you mean erectified flow? :dororun:

Why do we need EQ-VAE? The dataset has been leaked, and you can check it and see: low-quality, blurry photos of fishermen and fish, 256 pixels max! And it seems Anzhc didn't get it at all while making his finetune. Compare them all: those EQ-VAEs have higher log-variances than SDXL VAE 0.9's, which is bad. At least they need to learn the basics. Stop wasting your time with the VAE.

@2P2 Did Anzhc release the source images that even I don't have? Color me curious; please do share the leak.

@2P2 Stop wasting your time in the comments/PRs trying to appear smart; you're doing exactly the opposite, especially considering that you yourself don't really do anything substantial.

@slvc532 it's weird you don't follow your own advice, no offence

redditors in civit comments nahhhhhhhh

The idea of RFLOW with the SDXL architecture and EQ-VAE as the latent-space encoder-decoder is amazing! I'm going to wait for the next version and for the source code release!

Thank you for your work.

@VAX325 I'll share my training code for Rectified Flow; sadly, the EQ-VAE is Anzhc's, and I don't even have the dataset he used to train it, or the code.

@VAX325 if you want to try training https://github.com/bluvoll/sd-scripts

Hi! Great to see that SDXL-to-flow conversion is getting more attention - I’ve been experimenting with that myself too.

How long did it take you to achieve a consistent flow result with NoobAI?

Also, I’m curious - why did you go with Anzhc’s EQ-VAE-B7, which has known padding issues requiring manual fixes, instead of KBlueLeaf’s EQ-SDXL-VAE?

I’ve seen your fork of sd_scripts; I made a simpler version myself for fine-tuning bigasp2.5 (basically just switching the objective for SDXL).

If I understand correctly, your scripts are more focused on converting an epsilon model into a flow one, right?

@deGENERATIVE_SQUAD Kohaku's is worse, both in metrics alone and in the results it provided. My fork can convert either epsilon or v-pred models; it's agnostic. I converted from v-pred, and the steps needed to see consistent Rectified Flow were around 450k unique, unbatched steps.

Let me tell you, this NoobAI model... it's fantastic. Absolutely fantastic. The best. Nobody's got a model this good, with the Rectified Flow and the EQ-VAE, believe me. Look at the results! The quality, the colors—it's tremendous. Others, they try, they fail. They're losers. But we, we get the job done. Two hundred thirty likes, that's huge! It's going to be the biggest, most beautiful model on the whole internet. We're winning again, folks, with this model. Incredible.

"Thank you for your attention on this matter - Donald J Trump"

Any update on this one?

I think this is hands down the best SDXL model we'll have until NoobAI-Flux2VAE-RectifiedFlow becomes usable