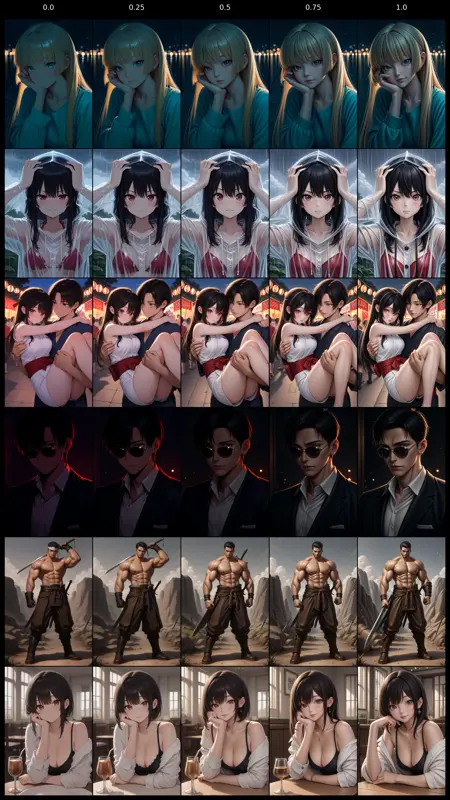

LoRAs trained with DRaFT-like RL post-training using SigLIP/DINO.

Don't expect sensible results on any model other than indexed v2.

These are just experiments. I often won't try to avoid over-training and will skip stronger regularization, since I am mainly looking for which effects are most prominent across different data regimes.

Description

Trained using the DRaFT-LV setup.

A pairwise head on joyquality, fitted on a dataset of base-model generations at varying CFG scales.

The preference is CFG 3.0 < 5.0 > 7.0: generations at CFG 5.0 are preferred over those at 3.0 and at 7.0.
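To make the preference concrete, here is a minimal sketch of how pairs could be built from such a dataset and scored with a Bradley-Terry style pairwise loss. This is an assumption for illustration, not the actual training code: the linear scorer stands in for the pairwise head, the feature vectors stand in for joyquality embeddings, and `build_pairs`, `bradley_terry_loss` and `sgd_step` are hypothetical names. Since no ordering between CFG 3.0 and 7.0 is stated, that pair is left out.

```python
import math

# Stated preference: CFG 5.0 beats both 3.0 and 7.0.
# No ordering is given between 3.0 and 7.0, so those are never paired.
PREFERRED_CFG = 5.0
REJECTED_CFGS = (3.0, 7.0)


def build_pairs(generations):
    """generations maps a CFG scale to a list of feature vectors
    (assumed: embeddings of images generated from the same prompt).
    Returns (winner_features, loser_features) tuples."""
    pairs = []
    for winner in generations.get(PREFERRED_CFG, []):
        for cfg in REJECTED_CFGS:
            for loser in generations.get(cfg, []):
                pairs.append((winner, loser))
    return pairs


def score(weights, features):
    # Hypothetical linear head: one scalar score per image.
    return sum(w * x for w, x in zip(weights, features))


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def bradley_terry_loss(weights, pairs):
    # Mean of -log(sigmoid(score_winner - score_loser)).
    total = 0.0
    for winner, loser in pairs:
        margin = score(weights, winner) - score(weights, loser)
        total += max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
    return total / len(pairs)


def sgd_step(weights, pairs, lr=0.1):
    # One gradient descent step on the mean pairwise loss.
    grad = [0.0] * len(weights)
    for winner, loser in pairs:
        margin = score(weights, winner) - score(weights, loser)
        coeff = -sigmoid(-margin)  # derivative of the loss w.r.t. margin
        for i in range(len(weights)):
            grad[i] += coeff * (winner[i] - loser[i])
    return [w - lr * g / len(pairs) for w, g in zip(weights, grad)]


# Toy data: one 2-d feature vector per CFG scale (made-up values).
toy = {3.0: [[0.0, 1.0]], 5.0: [[1.0, 0.0]], 7.0: [[0.0, -1.0]]}
toy_pairs = build_pairs(toy)
w0 = [0.0, 0.0]
w1 = sgd_step(w0, toy_pairs)
```

With all-zero weights every margin is zero, so the loss starts at log 2 and one step should lower it. A head trained this way would score CFG 5.0 style outputs highest, which is presumably the signal the LoRA is then pushed toward.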