✨One-click Pod available on:✨

🟣 Runpod ComfyUI 0.19.0 CUDA 12.8 for 5090

🟣 Runpod ComfyUI 0.19.0 CUDA 12.4 for 4090

🟡 VastAI ComfyUI 0.19.0 CUDA 13.0 for 5090

🟡 VastAI ComfyUI 0.19.0 CUDA 13.0 for 4090

Just click on the link, choose a Video Card and the Template will install all you need + all my workflows.

Wan 2.2 Models are not included, you can install it using Civicomfy or ComfyUI-HuggingFace directly inside ComfyUI

OneClick-I2V-Story on Runpod Basic Tutorial

https://limewire.com/d/2TAty#No5eoN7WhU

☕️ buymeacoffee

☕️ buymeacoffee

IMPORTANT:

If you install RES4LYF node it will broke the MoEKSampler, to use it you have to use the KSampler included in that node.

🔥 02/28/26 UPDATE 🔥

✨ NEW NODES ✨

EASY MODEL DOWNLOAD FROM HUGGINGFACE

cd ComfyUI/custom_nodes

git clone https://github.com/huchukato/ComfyUI-HuggingFace.gitRIFE INTERPOLATION WITH TENSORRT

with Auto Install (CUDA 12/CUDA 13) and Auto Model Download

cd ComfyUI/custom_nodes

git clone https://github.com/huchukato/ComfyUI-RIFE-TensorRT-Auto.gitUPSCALER WITH TENSORRT

with Auto Install (CUDA 12/CUDA 13) and Auto Model Download

cd ComfyUI/custom_nodes

git clone https://github.com/huchukato/ComfyUI-Upscaler-TensorRT-Auto.git✨MY QWEN3-VL NODE IS FINALLY IN THE MANAGER✨

Just search for "QwenVL-Mod" to install it

or dowload it from GitHub: QwenVL-Mod: Enhanced Vision-Language

ComfyUI-QwenVL-Mod - Enhanced Vision-Language with WAN 2.2

Version 2.2.4 (2026/03/13) - 🎬 Critical I2V Timeline Fixes & NSFW Presets Optimization

🌟 What is ComfyUI-QwenVL-Mod?

A powerful enhanced vision-language node for ComfyUI that combines Qwen3-VL models with professional WAN 2.2 video generation workflows. Features multilingual support, visual style detection, and NSFW capabilities for professional AI content creation.

Think: "Your all-in-one solution for intelligent prompt enhancement and video generation with cutting-edge AI models!"

🎬 Key Features

🚀 WAN 2.2 Video Generation

Text-to-Video (T2V): Professional 5-second video generation

Image-to-Video (I2V): Advanced image animation with style detection

Story Generation: 20-second continuous videos with 4 narrative segments

Storyboard Workflows: Seamless storyboard-to-storyboard generation

Cinematic Video: Professional cinematography specifications

🌐 Enhanced Capabilities

Multilingual Support: Process prompts from any language (Italian, English, etc.)

Visual Style Detection: 12+ artistic styles (anime, 3D, pixel art, puppet animation, etc.)

Smart Prompt Caching: Performance optimization with Fixed Seed Mode

GGUF Backend: Efficient local model inference with quantization support

NSFW Support: Comprehensive content generation without restrictions

🧠 Intelligent Features

Auto-Prompt Enhancement: Automatically enhance user prompts for optimal generation

Professional Cinematography: Built-in specifications for lighting, camera angles, shot types

Timeline Structure: Precise 5-second timeline with frame-by-frame descriptions

Keep Last Prompt: Generate once, preserve results while changing inputs

🎯 What's New in v2.2.4 - CRITICAL I2V TIMELINE FIXES

🚨 Major I2V Timeline (20s) Fixes

✅ Style Coherence: Fixed AI changing anime→realism mid-sequence

✅ Character Stability: Fixed characters disappearing/appearing incorrectly

✅ Natural Lighting: Fixed AI adding artificial lights not in image

✅ Timeline Structure: Fixed continuous numbering (6,7,8...) instead of 0-5 restart

✅ Format Consistency: Fixed missing parentheses and unwanted labels

✅ Output Format: Each prompt starts directly with timeline markers

🔧 NSFW Presets Optimization

✅ Complete Specifications: All 8 NSFW presets now include full NSFW descriptions

✅ Emoji Display: Restored proper emoji rendering (🍿🎥🎬📖)

✅ Clear Instructions: Removed confusing recommendations from presets

✅ User Guide: Token settings guide created for workflow optimization

📋 Technical Improvements

✅ Timeline Markers: Correct

(At X seconds: ...)format for all 4 prompts✅ Character Continuity: Natural progression without forced artificial presence

✅ Lighting Rules: Logical progression instead of absolute prohibitions

✅ Style Detection: Consistent style application across all timeline segments

🎯 Model Recommendations

Qwen3-VL-8B: Recommended for I2V Timeline (20s) complex sequences

Qwen3-VL-4B: Sufficient for I2V Scene (5s) single prompts

Token Settings: 2048+ for 20s timeline, 1024+ for 5s prompts

🎯 What's New in v2.2.3

CUDA 13 Compatibility: Fixed crashes caused by conflicting unload operations

Parameter Cleanup: Removed redundant unload_after_run from all nodes

Bug Fixes: Resolved "missing required positional argument" errors

Memory Management: Streamlined VRAM cleanup with VRAM Cleanup node

Documentation: Updated all README files with new memory features

Credits: Added community credits for feedback and testing

🎯 What's New in v2.2.2

🚀 Critical T2V/I2V Workflow Fixes

Batch Processing: Fixed critical T2V → GGUF issue with batch images

Frame Detection: Added automatic batch detection and individual frame processing

Video Support: Enhanced video frame processing with proper shape handling

Debug Enhanced: Comprehensive logging for batch processing troubleshooting

🔄 Same Model Reuse Fix

Conflict Resolution: Fixed crash when using same model between T2V and I2V nodes

Memory Management: Enhanced cleanup with CUDA synchronization and timing

Signature Mismatch: Resolved different signature patterns between nodes

Aggressive Cleanup: Forced complete VRAM cleanup before model reload

🔧 keep_model_loaded Enhancement

Missing Parameter: Added keep_model_loaded to PromptEnhancer node

Consistent Behavior: Both GGUF and PromptEnhancer now have identical memory management

Conditional Cleanup: Proper cleanup based on keep_model_loaded setting

User Control: Full control over memory usage vs performance

🚨 CRITICAL BUG FIXES - v2.2.4

🎬 I2V Timeline (20s) - COMPLETELY FIXED

Before v2.2.4:

❌ Anime style changed to realism mid-sequence

❌ Characters disappeared/appeared randomly

❌ AI added artificial lights not in image

❌ Timeline numbering: 6,7,8... instead of 0-5 restart

❌ Missing parentheses and unwanted "Prompt 1:" labels

After v2.2.4:

✅ Perfect Style Coherence: Anime stays anime, realism stays realism

✅ Character Stability: Same characters throughout all 4 prompts

✅ Natural Lighting: Only lights visible in image, logical progression

✅ Correct Timeline: Each prompt uses 0-5 seconds format

✅ Clean Output: Proper

(At X seconds: ...)format, no labels

🔥 NSFW Presets - ENHANCED & FIXED

✅ Complete Specifications: All 8 presets with full explicit descriptions

✅ Emoji Display: Proper 🍿🎥🎬📖 icons (no more unicode codes)

✅ User-Friendly: Removed confusing technical recommendations

✅ Token Guide: Workflow note for optimal settings

🎯 Result: Perfect I2V Timeline generation every time!

🎬 WAN 2.2 Story Workflow - Revolutionary AI Storytelling

📖 AI Story Generation

4-Segment Videos: Automatic 20-second videos (4 × 5-second segments)

Narrative Continuity: Perfect story flow between segments

NSFW Support: Enhanced adult content generation

Timeline-Free: Natural storytelling without time markers

🔄 Smart Auto-Split

Story Split Node: Intelligent prompt separation technology

Auto-Detection: Handles any separator format automatically

4-Output Guarantee: Always produces exactly 4 prompts

Debug Mode: Built-in troubleshooting information

📦 Installation

Requirements

ComfyUI: v0.13.0+

GPU: 8GB+ VRAM (16GB+ recommended)

System: Windows/Linux/Mac

Python: 3.10+ (or use provided Docker environment)

Docker/Cloud Ready

RunPod: Pre-configured templates available

VastAI: Optimized instances ready

Local: Docker support included

Quick Install

Download: ComfyUI-QwenVL-Mod (latest version)

Extract to

ComfyUI/custom_nodes/ComfyUI-QwenVL-ModRestart ComfyUI

Load included workflows

🎮 Usage Examples

Basic Image-to-Video

Load WAN2.2-I2V-AutoPrompt.json

Upload your image

Select model (HF or GGUF)

Generate enhanced video

Basic Text-to-Video

Load WAN2.2-T2V-AutoPrompt.json

Input your text prompt

Select model (HF or GGUF)

Generate enhanced video

Image-to-Video with Style

Load WAN2.2-I2V-AutoPrompt.json

Upload your image

Enable style detection

Generate animated video

AI Story Generation

Load WAN2.2-I2V-AutoPrompt-Story.json

Input your story idea

Auto-split into 4 segments

Generate 20-second story video

🔧 Technical Specifications

⚡ Performance

Context: 65,536 tokens (8B models)

Memory: Optimized VRAM usage

Stability: Crash-free operation

Speed: Fast generation times

🎨 Model Support

Qwen3-VL 4B: 7 GGUF variants (2.38GB-4.28GB)

Qwen3-VL 8B: 7 GGUF variants (4.8GB-8.71GB)

HF Models: Josiefed and official variants

Quantization: Q4_K_S, Q5_K_S for VRAM efficiency

🌐 Multilingual Capabilities

Input Languages: Any language supported

Auto-Translation: Automatic translation to optimized English

Style Detection: Works with multilingual prompts

Cultural Adaptation: Context-aware prompt enhancement

🎯 Included Workflows

🍿 WAN 2.2 Presets

🍿 Wan 2.2 I2V Timeline: Image-to-video with timeline structure

🎥 Wan 2.2 I2V Scene: Image-to-video with professional specs

🍿 Wan 2.2 T2V Timeline: Text-to-video with timeline structure

🎥 Wan 2.2 T2V Scene: Text-to-video with professional specs

🎬 Wan 2.2 I2V Timeline: 20-second multi-segment continuity

📖 Wan 2.2 I2V Scene: 20-second single scene with cinematography

🔥 Advanced Features

NSFW Enhancement: Uncensored content generation

Professional Lighting: 8 light types + 9 qualities

Camera Control: 6 shot types + 5 compositions

Color Grading: 4 tone options

🎨 Visual Style Detection

Automatically detects and enhances:

Photorealistic style - Realistic lighting, natural textures, lifelike details

Anime style - Japanese animation aesthetics with vibrant colors

Cartoon style - Bold outlines, flat colors, exaggerated expressions

3D animation style - Computer-generated 3D rendering characteristics

Fantasy style - Magical elements, ethereal lighting, imaginative atmosphere

Artistic portrait style - Painterly qualities, artistic brushwork, stylized composition

🔥 NSFW Content Support

Enhanced Generation

Explicit Content: Uncensored adult descriptions

Detailed Scenes: 8-12 sentences per segment

Natural Progression: Smooth story flow

Style Adaptation: Automatic visual style matching

Quality: Consistent characters & scenes

Professional Applications

Adult Content: Industry-standard generation

Artistic Nudity: Classical art styles

Educational: Anatomy and artistic study

Creative: Artistic expression

🚀 Why Choose ComfyUI-QwenVL-Mod?

🎬 For Content Creators

Storytelling: Create compelling narratives

Efficiency: One prompt → complete video

Quality: Professional video output

Flexibility: Any genre, any style

🔥 For NSFW Content

Explicit: Uncensored generation

Detailed: Rich scene descriptions

Continuous: Smooth story flow

Natural: Realistic progression

⚡ For Power Users

Customizable: Easy to modify

Extendable: Add more segments

Integrable: Works with existing setups

Optimized: Maximum performance

🌟 What Makes This Special?

First: Complete AI story system with vision enhancement

Smart: Intelligent prompt splitting and enhancement

Complete: End-to-end solution from text to video

Optimized: Performance-tuned for professional use

Ready: Works out-of-the-box with included workflows

🎬 Create Amazing AI Videos Today!

Transform your ideas into stunning videos with the power of Qwen3-VL vision enhancement and WAN 2.2 video generation.

Perfect for creators, artists, and professionals looking for the ultimate AI video enhancement tool! 🌟

Built with ❤️ for the ComfyUI community

🔶 All the images used to create the videos in the gallery are generated with PimpMyPony 🔶

The new version is out today, with a brand new anime style ✨

WORKFLOWS TESTED ON:

ComfyUI 0.17.1

Python 3.12.12

Pytorch 2.9.1 + CUDA 13.0

🟣 Credits

This workflows are intended to be used with the models by taek75799 as they follow the structure of Dynamic Prompts you can find under these models:

WAN 2.2 Enhanced NSFW | SVI | camera prompt adherence (Lightning Edition) I2V and T2V fp8 GGUF

🟣 Other tested models:

Smooth Mix Wan 2.2 14B (I2V/T2V) by DigitalPastel | GGUF versions by Santodan

Wan2.2-Remix (T2V&I2V) by FX_FeiHou | GGUF versions by Santodan

Thanks to all the users who are commenting and helping me improve the workflows ❤️

🔖 T2V-I2V AUTOPROMPT

🛑 Experimental WF 🛑

Start with a T2V prompt and extend the generated video with I2V

This workflow requires both T2V and I2V Wan 2.2 Models

🔖 SVI I2V AUTOPROMPT

Thanks to taek75799 for his models ❤️

SVI LORAS:

LIGHTX2V LoRaS are not included in the model

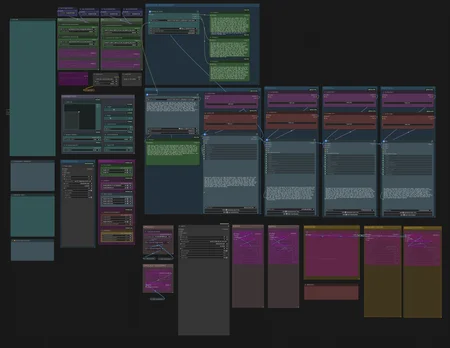

🔖 FULL I2V AUTOPROMPT

Complete workflow that includes:

Long Video Generation [from 5 to 20 seconds]

Auto Prompting [Qwen3-VL]

Upscale [2xLexicaRRDBNet and Tensorrt]

Frame Interpolation [30fps and 60fps for img2vid | 24fps and 50fps for MMAudio]

MMAudio [NSFW Unlocked]

📝 AUTO PROMPT

Prompt Description Box [Multilanguage]: Just write your idea and the LLM will do the rest, formatting the prompt in the dynamic prompt format used in the Wan 2.2 models

Final Prompt Preview: Shows up the final prompt

🔶 QWEN3-VL NODE FOR GGUF MODELS 🔶

🔖 To use the Qwen3-VL GGUF Quantized models you have to install llama-cpp-python

If you are not comfortable in manual install the llama, just go with the normal version inside Full-I2V-LongVideo

🛑 STOP COMFYUI

📂 Activate the ComfyUI Virtual Enviroment

In your ComfyUI root installation folder type:

on Windows:

Command Prompt:

\venv\Scripts\activate.bator Power Shell:

\venv\Scripts\Activate.ps1on Linux:

. /venv/bin/activateIf you use ComfyUI Desktop:

Click on Console and then on Terminal

⬇️ Install llama-cpp-python

pip install --upgrade --force-reinstall --no-cache-dir "llama-cpp-python @ git+https://github.com/JamePeng/llama-cpp-python.git"🔄 Restart ComfyUI and enjoy

📝 SWITCH CLIP

By default the WF uses the GGUF node to load quantized Clip, if you wanna switch to the NSFW Clip model, you have to bypass the GGUF node and connect the other Clip loader to the "Set_CLIP"

ACCELERATION:

Triton is disabled by default, you can enable it by opening the first Subgraph

Inside the workflow you will find all the links to download the models you will need

That's all, hope you enjoy ^^