🧪 F1D | Ultimate Flux GGUF LoRA Tester

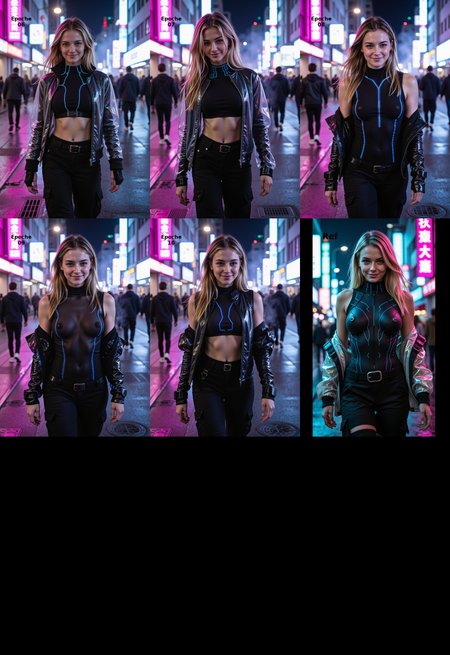

Stop guessing which Epoch was the best! This workflow turns the chaos of LoRA training into a clean, professional comparison matrix. Designed for Flux (GGUF) users who need efficiency, structure, and visual clarity.

🖼️ WHAT THIS WORKFLOW DOES:

It generates a side-by-side comparison grid of the LoRA files saved at different points in training (Epochs).

Visual Documentation: Automatically stamps the Epoch Name and Project Name directly onto the image pixels (bottom bar). No more wondering, "Is this Epoch 6 or 7?"

Smart Filenaming: Automatically saves files as Project_Epoch_Strength.

Wireless Architecture: No cable spaghetti! Uses "Set/Get" nodes to transmit data cleanly.
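A minimal sketch of how a Project_Epoch_Strength name can be composed, assuming Python and a hypothetical helper `build_filename_prefix` (the workflow itself builds the name with nodes, not with this code). Replacing underscores and other unsafe characters inside each part keeps `_` usable as an unambiguous separator, and fixing the strength to two decimals keeps 0.8 and 0.80 from producing different names.

```python
import re


def build_filename_prefix(project: str, epoch: str, strength: float) -> str:
    """Compose a Project_Epoch_Strength prefix (hypothetical helper, not a node in this workflow)."""

    def clean(part: str) -> str:
        # Replace spaces, underscores and other unsafe characters with "-",
        # so "_" only ever appears as the separator between the three parts.
        return re.sub(r"[^A-Za-z0-9.-]+", "-", part.strip()).strip("-")

    # Two decimals so 0.8 and 0.80 map to the same file name.
    return "_".join([clean(project), clean(epoch), f"{strength:.2f}"])


print(build_filename_prefix("My Character", "epoch 06", 0.8))  # prints My-Character_epoch-06_0.80
```

Because each part is sanitized, a name like this can later be split on `_` to recover project, epoch and strength when sorting test shots.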

🎛️ THE "CONTROL ROOM" (Top Left)

Everything is controlled from one central hub on the left side:

Load Checkpoint: Select your Flux GGUF model (Q8/Q4 etc.).

Load Assets: Select your LoRA files for the test columns.

Set Text: Enter your Project Name & Epoch Labels once; they sync to all overlays!

⚙️ HOW TO USE:

Download & Drag: Download the Header Image (PNG) of this post and drag it into ComfyUI. The workflow is embedded in the image's metadata!

Install Missing Nodes: Use ComfyUI Manager to install the required packs (see below).

Set Up the Control Room: Load your GGUF Checkpoint and the LoRA versions you want to test in the "Control Room" (Top Left).

Set Strength: Adjust the sliders directly above each image column to test specific strengths (e.g., 0.8 vs. 1.0).

Queue Prompt: Watch the grid fill up with labeled, organized test shots.
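If dragging the PNG into ComfyUI loads nothing, the downloaded file may have lost its metadata, since some hosts and editors strip it. ComfyUI stores the graph as PNG text chunks: a `workflow` entry holding the UI graph and a `prompt` entry in API format. The sketch below uses only the Python standard library to check whether a file still carries the `workflow` entry. It is a sketch under stated assumptions: it reads uncompressed `tEXt` chunks only (compressed `zTXt`/`iTXt` chunks are ignored), and the helper names `read_text_chunks` and `embedded_workflow` are hypothetical.

```python
from __future__ import annotations

import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_text_chunks(data: bytes) -> dict[str, str]:
    """Collect uncompressed tEXt chunks (keyword -> text) from raw PNG bytes."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    chunks: dict[str, str] = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, payload, 4-byte CRC.
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        payload = data[pos + 8:pos + 8 + length]
        if ctype == b"tEXt":
            # tEXt payload: Latin-1 keyword, NUL separator, Latin-1 text.
            key, _, text = payload.partition(b"\x00")
            chunks[key.decode("latin-1")] = text.decode("latin-1")
        if ctype == b"IEND":
            break
        pos += 12 + length  # skip length, type, payload and CRC
    return chunks


def embedded_workflow(path: str) -> dict | None:
    """Return the UI workflow stored under the "workflow" key, or None if it is missing."""
    with open(path, "rb") as f:
        text = read_text_chunks(f.read()).get("workflow")
    return json.loads(text) if text is not None else None
```

For example, `embedded_workflow("header.png")` (file name hypothetical) returning `None` suggests the metadata was stripped and the original PNG should be downloaded again. Pillow users can get the same text entries from `Image.open(path).info`.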

📦 REQUIRED CUSTOM NODES:

Please install these via ComfyUI Manager > "Install Missing Custom Nodes":

ComfyUI-GGUF (City96) - Essential for Flux GGUF

ComfyUI-KJNodes (Kijai) - For Set/Get Wireless logic

ComfyUI Book Tools - For the Smart Text Overlays

WAS Node Suite - For IO & Saving

ComfyUI-Easy-Use - For efficient LoRA loading

rgthree-comfy (rgthree) - For Optimization & Mute/Bypass

💡 PRO TIP: Because this workflow uses quantized GGUF models, it needs far less VRAM than the full-precision Flux model. You can test multiple LoRA versions without running out of memory (OOM) on 12GB/16GB cards (depending on the quantization level).
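The VRAM claim can be sanity-checked with back-of-the-envelope arithmetic. This is a rough sketch, assuming the Flux.1 transformer's roughly 12 billion parameters and the llama.cpp block layouts for each quant type (for example, Q8_0 stores 32 int8 weights plus one fp16 scale, or 8.5 bits per weight). It estimates the transformer weights only: the text encoders, VAE, LoRA and activations come on top, and real .gguf files typically differ a little because some tensors are kept at higher precision.

```python
# Rough weight-size estimate for a Flux transformer at different GGUF quant levels.
# Assumption: ~12e9 parameters; bits per weight follow llama.cpp block layouts.
FLUX_PARAMS = 12e9

BITS_PER_WEIGHT = {
    "BF16": 16.0,  # unquantized reference
    "Q8_0": 8.5,   # 32 x 8-bit weights + 16-bit scale per block = 34 bytes / 32 weights
    "Q5_0": 5.5,   # 32 x 5-bit weights + 16-bit scale per block = 22 bytes / 32 weights
    "Q4_0": 4.5,   # 32 x 4-bit weights + 16-bit scale per block = 18 bytes / 32 weights
}


def weight_gb(quant: str, params: float = FLUX_PARAMS) -> float:
    """Approximate size of the model weights alone, in gigabytes (1e9 bytes)."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in BITS_PER_WEIGHT:
    print(f"{quant}: ~{weight_gb(quant):.1f} GB")
```

By this estimate the weights shrink from about 24 GB (BF16) to about 12.75 GB (Q8_0) and 6.75 GB (Q4_0), which suggests Q4-class files leave the most headroom on a 12GB card.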