Arknights: Endfield | Operators Collection

Intended to generate non-commercial fan works of Operators from the Arknights: Endfield video game.

ℹ️ LoRAs work best when applied to the base model they were trained on. Please read the About This Version section of the relevant version for base model and workflow/training information.

Trained on a large dataset of mixed natural-language (NL) and tag captions with a 30% tag dropout rate, at mixed [1024, 1280] resolutions. Previews are mostly generated at 1024x1536.
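One common reading of a 30% tag dropout rate is that, for tag-style captions, each tag is independently left out of a given training sample with probability 0.3, so the model cannot rely on any single tag always being present. The sketch below is illustrative only: the comma-separated format, the hypothetical `drop_tags` helper, and the choice to always keep the leading trigger tag are assumptions, not the trainer's documented behavior.

```python
import random
from typing import Optional


def drop_tags(caption: str, drop_rate: float = 0.3, keep: int = 1,
              rng: Optional[random.Random] = None) -> str:
    """Randomly drop comma-separated tags, always keeping the first `keep` tags.

    Hypothetical helper: assumes the leading tag (e.g. the character trigger)
    should never be dropped; every other tag is dropped with probability drop_rate.
    """
    rng = rng or random.Random()
    tags = [t.strip() for t in caption.split(",") if t.strip()]
    head, tail = tags[:keep], tags[keep:]
    kept = [t for t in tail if rng.random() >= drop_rate]
    return ", ".join(head + kept)


if __name__ == "__main__":
    caption = r"yvonne \(arknights\), 1girl, long hair, pink hair, twintails, pointy ears, horns, tail"
    rng = random.Random(0)
    for _ in range(3):
        # Each call yields a different subset of tags; the trigger tag is always first.
        print(drop_tags(caption, rng=rng))
```

Applying the dropout at load time rather than once during captioning means each epoch sees a different subset of tags for the same image.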

Operators (game version 1.0):

female endministrator \(arknights\)

male endministrator \(arknights\)

perlica \(arknights\)

chen qianyu \(arknights\)

akekuri \(arknights\)

alesh \(arknights\)

antal \(arknights\)

arclight \(arknights\)

ardelia \(arknights\)

avywenna \(arknights\)

catcher \(arknights\)

da pan \(arknights\)

estella \(arknights\)

fluorite \(arknights\)

gilberta \(arknights\)

laevatain \(arknights\)

last rite \(arknights\)

lifeng \(arknights\)

snowshine \(arknights\)

wulfgard \(arknights\)

xaihi \(arknights\)

yvonne \(arknights\)

Operators (game version 1.1):

mi fu \(arknights\)

rossi \(arknights\)

tangtang \(arknights\)

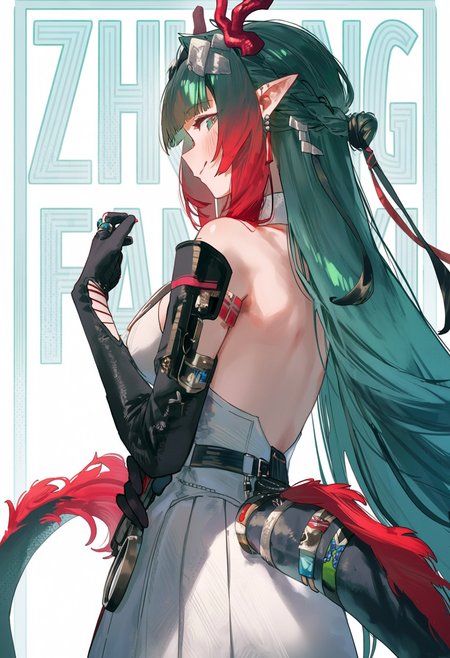

zhuang fangyi \(arknights\)

Antagonists:

ardashir \(arknights\)

nefarith \(arknights\)

Works best with natural language (NL): name the character, then describe their basic appearance. For example:

A vibrant and dynamic illustration of Yvonne from Arknights: Endfield, featuring her with long pink hair styled in twintails, pointy ears, and small horns, along with a playful tail...
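The trigger names listed above escape their parentheses with backslashes because prompt UIs such as AUTOMATIC1111 and ComfyUI use parentheses for emphasis weighting; the backslash makes `(arknights)` literal text. A minimal sketch that converts a plain name into that form and prefixes it to an NL description. The helper names and the "trigger tag, then description" layout are hypothetical, one possible way to combine the two.

```python
import re


def escape_tag(tag: str) -> str:
    """Backslash-escape parentheses so prompt UIs treat them as literal text."""
    # The lookbehind skips parentheses that are already escaped (no double-escaping).
    return re.sub(r"(?<!\\)([()])", r"\\\1", tag)


def build_prompt(character: str, description: str) -> str:
    """Hypothetical layout: escaped trigger tag first, then the NL description."""
    return f"{escape_tag(character)}, {description}"


if __name__ == "__main__":
    print(build_prompt(
        "yvonne (arknights)",
        "a vibrant illustration of Yvonne with long pink hair in twintails, "
        "pointy ears and small horns",
    ))
```

Names copied straight from the lists above are already escaped and pass through `escape_tag` unchanged.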

To be fixed:

Pogranichnik had a labeling mistake

Ember's outfit and features do not seem to have been learned

Description

Trained on Anima Preview 3 Base

Dataset uses a mix of tags and natural language captions

Less prominent characters were not learned as well in this version

Training config:

```toml
# trained using diffusion-pipe commit 6e95020cad0b3cd7dcb93ce42b358669051bf6d2
output_dir = '/mnt/d/anima/training_output/arknights3'
dataset = 'dataset-anima.toml'

# training settings
epochs = 1000
# Per-resolution batch sizes
micro_batch_size_per_gpu = [[512, 64], [1024, 32], [1536, 16]]
pipeline_stages = 1
gradient_accumulation_steps = 1
gradient_clipping = 1
warmup_steps = 100

# misc settings
save_every_n_epochs = 1
#save_every_n_steps = 1000
#save_every_n_examples = 4096000
#checkpoint_every_n_epochs = 1
#checkpoint_every_n_minutes = 120
activation_checkpointing = true
#reentrant_activation_checkpointing = true
partition_method = 'parameters'
# partition_method = 'manual'
# partition_split = [10]
save_dtype = 'bfloat16'
caching_batch_size = 1
map_num_proc = 8
steps_per_print = 1
compile = true

[model]
type = 'anima'
transformer_path = '/mnt/c/workspace/ComfyUI_windows_portable_nvidia/ComfyUI_windows_portable/ComfyUI/models/diffusion_models/anima-preview3-base.safetensors'
vae_path = '/mnt/c/workspace/ComfyUI_windows_portable_nvidia/ComfyUI_windows_portable/ComfyUI/models/vae/qwen_image_vae.safetensors'
llm_path = '/mnt/c/workspace/ComfyUI_windows_portable_nvidia/ComfyUI_windows_portable/ComfyUI/models/text_encoders/qwen_3_06b_base.safetensors'
dtype = 'bfloat16'
#cache_text_embeddings = false
llm_adapter_lr = 2e-6
#timestep_sample_method = 'uniform'
#flux_shift = true
#multiscale_loss_weight = 0.5
sigmoid_scale = 1.3

[adapter]
type = 'lora'
rank = 32
dtype = 'bfloat16'

[optimizer]
type = 'adamw_optimi'
lr = 4e-5
betas = [0.9, 0.99]
weight_decay = 0.01
eps = 1e-8
```

Dataset config:

```toml
resolutions = [512, 1024, 1536]
enable_ar_bucket = true
min_ar = 0.5
max_ar = 2.0
num_ar_buckets = 9

[[directory]]
path = '/mnt/d/training_data/images_arknights_captions'
repeats = 4
```
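The dataset config sorts images into aspect-ratio buckets, and the training config pairs each base resolution with its own micro-batch size. A rough sketch of what 9 buckets between 0.5 and 2.0 could look like at each of the three resolutions. It assumes geometric (log-uniform) spacing of the ratios, a pixel area of roughly res x res per bucket, sides rounded to a multiple of 16, and that a bucket uses the batch size listed for its base resolution; these are assumptions about diffusion-pipe's internals, not taken from its source, and the function names are hypothetical.

```python
import math

# Values copied from the dataset config and training config above.
RESOLUTIONS = [512, 1024, 1536]
MIN_AR, MAX_AR, NUM_AR_BUCKETS = 0.5, 2.0, 9
BATCH_SIZES = dict([[512, 64], [1024, 32], [1536, 16]])  # micro_batch_size_per_gpu


def ar_buckets(min_ar: float, max_ar: float, n: int) -> list[float]:
    """Aspect ratios (width / height) spaced geometrically from min_ar to max_ar (assumed)."""
    ratio = max_ar / min_ar
    return [min_ar * ratio ** (i / (n - 1)) for i in range(n)]


def round_to_multiple(x: float, multiple: int = 16) -> int:
    """Round to the nearest multiple (assumed granularity), never below one multiple."""
    return max(multiple, round(x / multiple) * multiple)


def bucket_size(res: int, ar: float) -> tuple[int, int]:
    """(width, height) keeping roughly res * res pixels at the given aspect ratio."""
    width = math.sqrt(res * res * ar)
    height = width / ar
    return round_to_multiple(width), round_to_multiple(height)


if __name__ == "__main__":
    ars = ar_buckets(MIN_AR, MAX_AR, NUM_AR_BUCKETS)
    for res in RESOLUTIONS:
        sizes = [bucket_size(res, ar) for ar in ars]
        print(f"{res}px buckets (micro-batch {BATCH_SIZES[res]}): {sizes}")
```

With 9 geometric steps from 0.5 to 2.0 the middle bucket lands exactly on 1.0 (square), and portrait and landscape buckets mirror each other, e.g. 720x1456 and 1456x720 at the 1024 resolution.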
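The training config sets `sigmoid_scale = 1.3` in its `[model]` table while leaving `timestep_sample_method` commented out. Assuming the trainer then uses logit-normal sampling, where a standard normal draw is multiplied by `sigmoid_scale` and passed through a sigmoid (an assumption about diffusion-pipe, not stated on this page), a scale above 1 spreads training timesteps away from 0.5 toward both ends of the noise schedule. A minimal sketch comparing scales 1.0 and 1.3; the function names are hypothetical.

```python
import math
import random


def sample_timesteps(n: int, sigmoid_scale: float = 1.3, seed: int = 0) -> list[float]:
    """Assumed logit-normal sampling: t = sigmoid(sigmoid_scale * z) with z ~ N(0, 1)."""
    rng = random.Random(seed)
    return [1.0 / (1.0 + math.exp(-sigmoid_scale * rng.gauss(0.0, 1.0))) for _ in range(n)]


def fraction_outside(ts: list[float], lo: float = 0.2, hi: float = 0.8) -> float:
    """Share of timesteps that fall outside the middle band [lo, hi]."""
    return sum(t < lo or t > hi for t in ts) / len(ts)


if __name__ == "__main__":
    for scale in (1.0, 1.3):
        ts = sample_timesteps(100_000, scale)
        mean = sum(ts) / len(ts)
        print(f"scale={scale}: mean={mean:.3f}, outside [0.2, 0.8]={fraction_outside(ts):.3f}")
```

Because both runs share a seed, every draw that falls outside the middle band at scale 1.0 also falls outside it at scale 1.3, so the second fraction is always at least as large; the mean stays near 0.5 either way since the distribution is symmetric.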