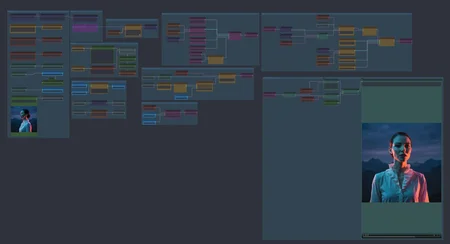

This workflow is meticulously designed to demystify the LTX 2.3 generation process. Instead of providing a "black box" solution, this setup prioritizes architectural clarity, making it the perfect tool for creators who want to understand, learn, and eventually replicate high-end AI video generation.

Key Features & Educational Value

Logical Modular Structure: Every stage of the process—from model loading to final video rendering—is laid out in a linear, easy-to-follow path. It separates the Video and Audio VAE processes, allowing you to see exactly how the multimodal generation is constructed.

Sage Attention Integration: Features the `PathchSageAttentionKJ` node, demonstrating how to optimize memory and performance within the LTX architecture.

Advanced Conditioning: Gain full control over the output using the dedicated `LTXVConditioning` and `LTX2_NAG` (Normalized Attention Guidance) nodes. This section shows how to balance CFG and guidance scales to achieve professional results.

Two-Stage Upscaling: Learn how to use the `spatial-upscaler` model effectively. The workflow includes a clear path from the initial latent generation to the spatial upsampling stage, ensuring high-fidelity 5-second clips.

Native Audio Management: Includes a dedicated audio latent path, showing you how to generate synchronized ambient sound or dialogue without external tools.

Precise Temporal Control: The workflow uses custom calculators to manage FPS and clip duration (e.g., 5s @ 24fps), teaching you the math that links frame rate, duration, and total frame count.
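The arithmetic behind such a calculator can be sketched in a few lines of Python. This is only an illustration, not the workflow's actual calculator nodes: the helper names `frame_count` and `latent_frames` are hypothetical, and the "multiple of 8, plus 1" frame rule is an assumption carried over from earlier LTX-Video releases that may not match LTX 2.3 exactly.

```python
def frame_count(duration_s: float, fps: int, stride: int = 8) -> int:
    """Return the frame count of the form stride * n + 1 nearest to duration_s * fps.

    ASSUMPTION: the model expects frame counts of the form 8n + 1, as earlier
    LTX-Video releases did. Adjust `stride` if LTX 2.3 differs.
    """
    if duration_s <= 0 or fps <= 0 or stride <= 0:
        raise ValueError("duration_s, fps and stride must all be positive")
    raw_frames = duration_s * fps               # e.g. 5 s * 24 fps = 120
    n = max(1, round(raw_frames / stride))      # nearest whole multiple of stride
    return stride * n + 1                       # e.g. 8 * 15 + 1 = 121


def latent_frames(frames: int, stride: int = 8) -> int:
    """Number of temporal latent steps, ASSUMING 8x temporal compression
    with the first frame encoded on its own."""
    return (frames - 1) // stride + 1
```

Under these assumptions, a 5-second clip at 24 fps comes out as `frame_count(5, 24) == 121` frames, which corresponds to `latent_frames(121) == 16` temporal latent steps and an actual duration of 121 / 24 ≈ 5.04 seconds.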

Why use this Workflow?

Most workflows prioritize speed over understanding. This one is different. It is built as a blueprint: a clean, "read-only" style layout that teaches you the importance of each connection. Whether you are a beginner looking for a solid starting point or an expert seeking a clean template for LTX 2.3, this workflow provides the precision and transparency needed for professional-grade AI cinematography.