V120_hybrid is gonna be the last model of this series. There will be no more hybrid, anime or realistic models; I will only update the vpred models. Thank you for liking my model and supporting me!!!

I AM A LAZY DOG XD so I am not gonna go deep into model tests like I used to, and I will not write very detailed instructions for each version. Plz understand: read 'about this version', try the models yourself, then pick whichever you prefer. (I mix models for fun, you use them for fun xd)

V90 artist styles here: https://mega.nz/folder/NxJBWKpS#LZFVyOyvgFIAj2307-GDkQ

V105 artist styles here: https://mega.nz/folder/FoQR1RgA#gDLJyJe9-JK2_FDaosgSzg

If you like my work, please consider buying me a coffee <3 (completely voluntary)

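Statement: I have only published my models on Civitai, Hugging Face and (because I was forced to) SeaArt AI. This model is fully open source and free of charge; any fees charged by third-party sites have nothing to do with me. (Tips are welcome though, completely voluntary, no pressure QAQ)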

Indigo Furry mix (seaart.ai): they have returned the rights to my model to me, and the model is now on seaart.ai (forced xd). Using this model is completely free, but you may need to pay for the third-party generation service.

The account on SeaArt AI is mine now.

As for XL based models, I think I’ll wait for SDXL to become more stable (waiting for fluffyrock xd), just wait a little longer.

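Don't take this personally, I'm just venting: (gossip corner)

(Some people are pretty shameless. So I have no skills and just mix models at random, right? Nobody is forcing you to use them. But before saying that, can you confirm that you didn't steal someone else's model and then push your luck? This was open-source sharing to begin with; isn't it tiring to keep this up day after day?) Continued: hhh this is killing me. A certain 'pytouch' master ran all over 'CDSN' and GitHub and asked GPT hundreds of times just to write seventy or eighty lines of code; can you at least spell the words right? Even if you really did take part in writing stable tuner (I don't believe it), did you write all of the code yourself? And do you actually understand what an open-source license is? You pee into a river and then shout "this river is my private toilet, none of you may use it"; don't you find that funny? Besides, I never used your training script. This model is a merge, there isn't even a training process; the principle is CLIP/UNet merging. All in all, I have no comment. As for who is more shameless, the innocent need no defense. (Oh right, my models are right here, out in the open, free for anyone to use, unlike certain people who somehow have nothing to show and don't dare post GitHub, Civitai or Hugging Face links; he said himself that uploading models was TOO SLOW! and then gave up hhhh) Seems like an elementary or middle schooler, truly one of a kind in the AI furry scene (I suggest everyone respect, bless, and stay away)

And from now on I won't respond to you anymore, it feels like a waste of time. Go gloat with your 'purified' fans, and good luck with your Ultraman Buddha model (hhh I can't keep a straight face, how can a name be that outrageous)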

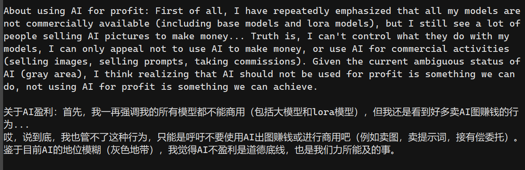

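(I did write in the description, and in the license, that this model can't be used commercially, right? But I know some people ignore this and are still privately selling generated images, raw outputs at that, with the errors not even fixed.)

(Also, I personally think AI is a useful tool. AI can do more than generate images; you could call it an all-purpose image-processing program. Isn't it nice to just use it as a tool? But I know some people see AI as a monster or a robot uprising, don't want to learn new things, and like to judge others from a distance while claiming the moral high ground and slapping the label of 'superiority complex' on people. I truly feel it isn't worth it for them, and not worth it for me either.)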

------------------------------------------------------------------------------------------------------

YOU WILL SEE THESE IN THIS PAGE:

Male focus, NSFW.

Bara/yaoi/gay content, male dragons/furries content.

(All models can do females, it's just that I didn't specially tweak these models for females)

------------------------------------------------------------------------------------------------------

Anime: v115&v100 / Realistic: v110 v95&v80 / Hybrid: v120 v90&v75 (Recommended) / Extra: SE01_vpred

These three variations are very different. Please read the description below and also ‘about this version’; a higher version number does not mean a better model.

Hybrid: (The most versatile model, Best for NSFW) (also SE01 is similar to hybrid models)

V120/V105/V90/V75/V60/V45 can replace V30, don't use V30, V16 is still usable.

Anime: (For a fancy anime look)

V115 is unique, V100 and V85 are basically the same, V70 and V55 are relatively similar. There are 2 versions of v55, you can use one or both of them. v40 is not good enough imo, V25 is usable.

Realistic: (For realistic or photorealistic/3D)

V110/V95/V80&V50 can replace V20, V65/V35 are usable.

(There are three main categories of models here, and each has a different focus. Please read 'about this version' for each one, and scroll down to the "For xx" version descriptions. Versions are not simple iterations of one another; they are completely different models, their usage differs slightly, and settings do not carry over between them, so take note!)

(If this is your first time with AI art, I suggest you use v16.)

(Use whichever model you like.)

------------------------------------------------------------------------------------------------------

AND please add an AI tag if you post images on media!

(The images generated by AI are currently in a gray area. Since the training dataset has not been authorized, the dataset is not so clean. These generated images are for personal use only. Please do not use them for commercial purposes, and that includes merges using this model, thank you!)

------------------------------------------------------------------------------------------------------

Since version v20, the models have been renamed to indigo_Furrymix. (This model used to be named indigo_maledoragoon_mix, but they are the same.)

Choose fp16 or fp32 versions by your preferences.

All models are baked with VAE, but you can use your own VAE.

Hugging Face repository, if you need it: uhralk/Indigo_Furry_mix at main (huggingface.co)

If you have any questions, please comment in the discussion (in English or Chinese).

v16 was my personal favorite during testing, so I compare many models with v16, please understand xd.

------------------------------------------------------------------------------------------------------

UPDATE:

For v115:

This is an anime model with a little Niji6 style (but it may be a failure, depending on how you look at it). It's not very Niji6 and has a weird style compared with the other anime models.

This model has crazy color and contrast (but sometimes it's too yellow xd). It's not as crisp and clear as v100, and its tag accuracy/responsiveness is worse than v100's (for example, it may struggle to do white wolves, or tend to generate characters with clothes).

Clip skip = 1.

------------------------------------------------------------------------------------------------------

For v110:

This time, v110 pays more attention to versatility than to photorealistic style. Compared with v80/v95 it is less photorealistic (though in some cases it can still do something very photorealistic), and it will react to artist tags (but it may not be able to completely replicate the artist styles). It's like a realistic version of the hybrid models.

May not be as stable as the hybrid models, and it's not as versatile either.

Clip skip = 1.

------------------------------------------------------------------------------------------------------

For SE01:

This is SE01_vpred, a test v-prediction model. It’s similar to the hybrid models but with higher color saturation and contrast; it can do almost pure black images xd. It is also more stable and does better tails than the hybrid models (but not always xd). It seems to be more versatile in styles, too.

Personally I think using this model feels very weird compared to the hybrid models (I'm still not used to vpred models xd), and sometimes it has fewer details than the hybrid models. Some people may also not like its crazy contrast and heavy color saturation xd.

Remember to download the .yaml config file, place it alongside the model file, and rename it so that it has the same name as the model (same base name, .yaml extension).
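The config placement above can be sketched as a small Python helper. This is only an illustration: the function name `install_vpred_config` and the example paths are hypothetical, and it assumes a local UI that picks up a .yaml file sitting next to the checkpoint with the same base name.

```python
import shutil
from pathlib import Path


def install_vpred_config(model_path, config_path):
    """Copy a downloaded .yaml config next to a checkpoint, renamed to match it."""
    model_path = Path(model_path)
    config_path = Path(config_path)
    # Same folder and same base name as the model, but with a .yaml extension.
    target = model_path.with_suffix(".yaml")
    if config_path.resolve() != target.resolve():
        shutil.copyfile(config_path, target)
    return target


if __name__ == "__main__":
    # Hypothetical paths; adjust them to wherever your files actually are.
    model = Path("models/Stable-diffusion/SE01_vpred.safetensors")
    config = Path("downloads/config.yaml")
    if model.is_file() and config.is_file():
        print("Config installed at", install_vpred_config(model, config))
```

After running it, the folder should contain e.g. 'SE01_vpred.safetensors' and 'SE01_vpred.yaml' side by side.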

Try not to use boring_e621_fluffyrock_v4 with this model plz bc it may blur the image outputs.

Use the CFG rescale extension plz, with a value of 0-0.5 (but I think it’s ok to not use it xd)
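For context, here is a minimal sketch of what a CFG rescale value does, assuming the extension follows the rescaling described in the paper "Common Diffusion Noise Schedules and Sample Steps Are Flawed" (that is an assumption; the extension's actual code may differ). The function name `cfg_rescale` and the flat-list inputs are hypothetical and only for illustration; real implementations work on tensors.

```python
from statistics import pstdev


def cfg_rescale(cond, uncond, guidance_scale=7.0, rescale=0.5):
    """Classifier-free guidance followed by std-based rescaling, on flat lists."""
    # Plain classifier-free guidance.
    cfg = [u + guidance_scale * (c - u) for c, u in zip(cond, uncond)]
    std_cfg = pstdev(cfg)
    if std_cfg == 0:
        return cfg  # nothing to rescale
    # Shrink the guided prediction so its spread matches the conditional one.
    factor = pstdev(cond) / std_cfg
    rescaled = [x * factor for x in cfg]
    # Blend: rescale=0 is plain CFG, rescale=1 is the fully rescaled prediction.
    return [rescale * r + (1.0 - rescale) * x for r, x in zip(rescaled, cfg)]
```

With a value of 0 the result is ordinary CFG, which fits the note that the extension is optional; values up to 0.5 pull the output partway back toward the spread of the conditional prediction, which is what tames over-saturated, high-contrast results.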

Clip skip = 1.

------------------------------------------------------------------------------------------------------

For v105:

This is a tweaked model similar to v90. Compared with v90, its colors are more vivid, it has more details, and its realistic style is better, but it may not be as good as v90 for certain images xd

Hybrid models are basically Fluffyrock models with better details and (often) weaker NSFW abilities compared with original rock models. They are the most versatile models in this series of models, can do most content and styles.

Use e621 tags, use fewer danbooru tags plz.

When using hybrid models, add artists to prompts plz.

Use embeddings as negative prompt plz, but you don’t need to use a lot of them xd

Clip skip = 1 (try not to use 2 plz).

------------------------------------------------------------------------------------------------------

For v100:

This is v100_anime, a tweaked version very similar to v85: basically v85 with flatter colors. It is as stable/unstable as v85, and the hands are still bad :(

This is another average model xd

Clip skip = 1 or 2.

------------------------------------------------------------------------------------------------------

For v95:

This is a tweaked version of v80 with a little difference. It is similar to v80, with even more fur, brighter colors and lower contrast (so it doesn't look as dark-fantasy as v80 xd).

But this version has fewer details and feels less stable than v80, and there may be so much fur that sometimes dragons/aquatics end up with fur xd.

Clip skip = 1.

------------------------------------------------------------------------------------------------------

For v90:

Based on v75_extra, v80, v85, and yiffymix34.

This is a test model with traindiff, should be better than v75?

Nothing much to say about hybrid models, they are basically Fluffyrock models with better details and (often) weaker NSFW abilities compared with rock models.

(Recommended) add artists to prompts.

(Optional) you can use WD-KL-F8-Anime2 vae to get more colorful images.

Clip skip = 1.

------------------------------------------------------------------------------------------------------

For v85:

Based on v70, v75 and indigokemonomix beta.

This is an alternative version of v70, something like a v70_nsfw: it is better at NSFW than v70, but it loses some anime style and may not be as crisp and clear as v70.

Could be a little unstable, images may be dim and not very colorful.

Clip skip = 1 or 2.

------------------------------------------------------------------------------------------------------

For v80:

Based on v65, v50, and bm lora.

An improved version of v65. It might be better than v65 imo (70% of outputs are better xd), but it may lose some photorealistic style.

This version probably solved the problem of the character's body not being completely covered with fur (maybe solved, maybe not xd), and also solved the missing tail issue.

------------------------------------------------------------------------------------------------------

For v75:

Based on v65, v70, v45_extra.

This model is probably a combination of v45 and v60.

Note that hybrid models are general-purpose models that can do many different styles through artist names, so make sure to add artists to your prompts.

------------------------------------------------------------------------------------------------------

For v70:

This model is probably a combination of v55_SFW and v55_NSFW, maybe more SFW.

Trained with Nijijourney images, could probably do a lot of NJ anime styles.

Lighting is more natural according to a friend xd. Could handle very dark images. Unstable NSFW, this model is more about looking good xd.

Note that doing NSFW is unstable, can only do humanoid penises, sometimes the shape of characters’ penises will be weird xd.

------------------------------------------------------------------------------------------------------

For v65:

A model similar to, but different from, v35. It has a strong Midjourney photorealistic style and HDR.

Trained with Midjourney images, could probably do a lot of MJ realistic styles.

Note that this model doesn’t like tails, tends to do ferals, also the character’s body may not be fully covered by fur (or become human xd)

------------------------------------------------------------------------------------------------------

For v60:

Similar model to v45, with different rock version.

------------------------------------------------------------------------------------------------------

For v55:

2 versions, see 'about this version'.

------------------------------------------------------------------------------------------------------

For v50:

A more common model than v35 with weaker style and better compatibility.

No (or less) Midjourney style this time (couldn’t find a dataset to make LoRAs, and it’s damn tiring to merge LoRAs or MBW models, I don’t wanna do it again xd).

------------------------------------------------------------------------------------------------------

For v45 hybrid:

This is basically a mix of all my previous models with fluffyrock. It balances style and stability and should be usable as a general model.

It can do both anime and realistic content, but I think it's more realistic.

Note that in some scenarios, generated images have less detail than those from the specialized anime/realistic models.

Should be ok with all LoRAs.

------------------------------------------------------------------------------------------------------

For v40:

Extremely high LoRA weight, fine with most LoRAs?

Merging v40 was hard for me; it's not easy to balance style and NSFW compatibility during merging. I may have gone too far in style for this one, and I tried to replicate niji style but kinda failed again :( It's still an 'anime' model though, so I guess not so niji is ok? ahh whatever, here it is:

This model is more 'anime' than 'niji', it's very flat colored without oily glistening most of the time, and its niji style is still way worse than nijijourney (so sad), but i think it's better than v25 xd

Works fine with or without TI embeddings (negative textual inversions), note that some embeddings will affect style significantly, so try not to use too many of them.

The style of v40 is somewhat different from v25, and doing better NSFW than v25.

Artist tags will affect the output in a different way, they will not replicate artist styles.

The contrast of the generated image may be too high sometimes. And personally I think this version went too far toward anime style in some circumstances.

Sometimes this model generates bad hands.

Still has trouble doing non-humanoid penises.

It tends to generate blue furries idk why xd.

LoRA compatibility still remains a mystery (?). In my tests, some LoRAs are good, some are not.

This version was made in a hurry. If you find other problems please let me know :(

------------------------------------------------------------------------------------------------------

For v35:

Extremely high LoRA weight, worst LoRA compatibility of all.

This is v35 realistic & midjourney, it’s an improved version of v20, and it’s another attempt to replicate the images generated by Midjourney v4. This is just an attempt (it’s like v25, using a lot of LoRAs to finetune base models, I’m still learning), I can’t tell if this model is good or bad, I'll leave it to you guys to judge xd.

The example images are generated with the fp32 model.

Could probably do anything that v20 can do.

Dark themed, high contrast, HDR.

Limited sci-fi content.

Like v25, it generates detailed backgrounds that sometimes need to be stopped.

Better than v20, worse than Midjourney. (I think this model is a lower ranked Midjourney v4 not to mention v5 xd)

Use ‘photorealistic’ to do photorealistic. (Whatever, just pile up a bunch of quality tags xd)

Doing SFW or NSFW equally bad/good in my test.

Unfortunately, this model sometimes looks pale and is not colorful in style, just like the opposite of v20 xd.

------------------------------------------------------------------------------------------------------

For v30_hybrid:

Good compatibility for rock based LoRAs, ok for NAI based LoRAs.

This is v30_hybrid, an alternate version of v16. It’s a highly fluffyrock based model (credit to the author). I was told that v16 was too anime, so I tried to keep the goods of rock without affecting the generation of kemono content, I kinda messed up both sides, but whatever, here it is.

SO:

You need to know how to use fluffyrock.

Thanks to rock, this model can do almost any content.

Could probably do a lot of kinky stuff.

Doesn't need too many TI embeddings (negative textual inversions). Don't just pile a lot of embeddings in negative prompt, this model doesn't seem to like them together sometimes :( Also they will affect the generation of certain poses.

In my tests, it generalizes better than v16, but is less stable than v16.

There is a higher threshold, not as easy to use as v16.

The outputs of kemono content are sometimes better than v16, sometimes worse than v16.

Mostly use e621 tags; danbooru tag support is minimal.

The quality of output images can vary, so you need to roll for good results. If you are not that familiar with prompting, I suggest you use v16.

------------------------------------------------------------------------------------------------------

For v25_anime_niji:

High LoRA weight, ok for LoRAs.

This is an improved version of v12, a heavily LoRA-weighted model that tries to replicate the images generated by Midjourney Niji 5 and generates kemono anthro furries in an anime style, SFW and NSFW.

This version can generate a style similar to niji 5, not exactly niji 5 style, it also generates anime style. (and you need to write good prompts and roll for a few times xd)

Generates very detailed backgrounds. (almost too detailed sometimes, you need to prevent it from doing that by prompting xd) (Yeah you can do landscapes with this version xd)

Better at doing SFW than NSFW, in my opinion. It’s more unstable to do NSFW in this version, sometimes there are good outputs indeed. If you like the style you can use v25, but if you prefer NSFW or kinks without niji style, just use v16, it’s amazing <3

More annoying to use, better style, more unstable (hands are stable tho). Prompting is more difficult than v16. Accuracy is worse than v16. It needs many quality tags to do niji 5 style, and you need to choose when to use danbooru tags or e621 tags for different content (or use a short sentence or paragraph). Less sensitive to tags, less responsive to artist styles. (Just use v16 it’s amazing <3)

Doing worse sex/duo scenes, good for solo.

Needs higher resolution to get better results.

Some tags seem to be a little overfitting: ‘anthro’, ‘dragon’, ‘wolf’ and ‘muscular’.

Seems to be more stable for females.

Can do ferals but very unstable.

This version is not perfect. Your experience with this version may vary <3

------------------------------------------------------------------------------------------------------

For v20_realistic:

A quick mix, its color may be over-saturated, focuses on ferals and fur, ok for LoRAs.

This is just an improved version of v4.1. If you don't like the style of v20, you can use other versions.

This version is intended to generate very detailed fur textures and ferals in a realistic style.

Usage is similar to v16. (it's like v16 without kemono style)

Doing better ferals than v16. (maybe xd)

The anthro dragons generated in this version will have a bit of feral features.

Like v16, this version can do females.

Can do aquatic furries.

More unstable than v16, and doing worse hands.

------------------------------------------------------------------------------------------------------

For v16_hybrid:

High anime style, good compatibility for NAI based LoRAs, ok for rock based LoRAs.

This is the first hybrid model, use 'kemono/anime' in prompt to generate anime content, 'chibi/cute' to generate cutie/cub kemono and chibi kemono (with danbooru tags), and use 'realistic' to generate realistic content (anime-ish), these are situations without artists tags. This model is best at generating bara/yaoi kemono and NSFW content.

This model is very sensitive to the prompts and artist tags you write. Adding artists to the prompt can significantly affect the outputs.

Can do proper ferals now. (May not be perfect xd)

More non-dragon male furries support. It's a furry mix now xd, so it could probably do any content.

More sex/duo content support. (Which had none before xd)

The style is mostly determined by the prompts you wrote.

OK for both danbooru tags and e621 tags.

Can do females.

Still can't do aquatic furries.

Still has no eastern dragons support. (Unable to solve, may have to use lora)

------------------------------------------------------------------------------------------------------

Old versions (not recommended):

Description below is for v4.1 and v12

v8 is trash.

Used to be named indigo male_doragoon_mix v12/4.1

For v12_anime/v4.1_realistic:

Hello everyone! These two are merge models of a number of other furry/non furry models, they also have mixed in a lot of my own LoRAs. And they're all about male anthro dragons.

These models are heavily bara oriented, the training dataset is mostly male NSFW, so it's easier for them to generate male nudity, no clothing and NSFW content.

They mainly use e621 tags, but also support danbooru tags, and already baked in vae so you don't have to use any.

Although they are 'doragoon' mix, they can generate other male furries.

They are not specially trained for anthro females. I didn’t try a lot, but I think these models can do females, and they are okay for females.

There are two types of them that are mixed with different models to generate different outputs.

The anime one:

It's used to generate anime style dragons or kemono dragons. It's also good at generating other furry male anthros. Recommended sampler: DPM++ 2M Karras and Euler a.

The realistic one:

It's used to generate realistic or photorealistic style anthro dragon males and other furry males. Recommended sampler: DPM++ SDE Karras and also Euler a.

Example images are slightly curated and they are direct outputs without inpainting.

Known issues:

For both:

Struggling with wings and tails, because they are anatomically complex. May need a little inpainting.

Inpainting is always good.

Without Hires Fix, both of them tend to generate blurry faces and eyes, so I recommend using Hires Fix all the time.

Upscalers: Latent, ESRGAN_4x or Anime6B.

Can not do aquatic anthros.

Getting no better results when using male dragon related LoRAs.

No eastern dragons support yet.

For the anime one:

It has a noise offset issue: it generates very bright characters in dark scenes, so use ContrastFix to fix it: https://civarchive.com/models/8765?modelVersionId=10638 (select the SD1.5 version). And I don't think it's 'anime' enough for now. Its style is somewhat fixed, and it's a little overfitted. Can be unstable.

Also anime one is worse at hands.

Can not do ferals.

(Can do a weird type of ferals: anthro feral xd)

For the realistic one:

Generated characters' eyes will be blurrier in full body portraits.

Can do just a little bit of ferals.

(English is not my native language, please forgive me if I make mistakes. )

Description

This is SE02_vpred, a v-prediction model similar to SE01 with high color saturation and contrast. It seems to have more details than SE01, but the generated backgrounds seem to be more random.

Remember to download the .yaml config file, place it alongside the model file, and rename it so that it has the same name as the model (same base name, .yaml extension).
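If the outputs look like abstract color noise, the config file is usually not being picked up. A quick way to check a local install is to scan the model folder for vpred checkpoints without a same-named .yaml beside them. This is a rough sketch: the function name `find_models_missing_config` is hypothetical, and it assumes vpred checkpoints can be recognised by "vpred" in their filename, which holds for the files on this page but is only a naming convention.

```python
from pathlib import Path


def find_models_missing_config(models_dir, keyword="vpred"):
    """List checkpoints whose name contains `keyword` but lack a same-named .yaml."""
    missing = []
    for model in sorted(Path(models_dir).rglob("*.safetensors")):
        if keyword not in model.name.lower():
            continue  # assumed not to be a v-prediction checkpoint, so skipped
        if not model.with_suffix(".yaml").is_file():
            missing.append(model)
    return missing


if __name__ == "__main__":
    # Hypothetical location of an A1111 model folder; change it to your own.
    for path in find_models_missing_config("models/Stable-diffusion"):
        print("No config next to:", path)
```

An empty result means every vpred checkpoint found has its config in the same folder under the same name, for example 'SE02_vpred.safetensors' and 'SE02_vpred.yaml'.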

Using different textual inversions (embeddings) may significantly affect the outputs.

Use the CFG rescale extension plz, with a value of 0-0.5 (but I think it’s ok to not use it xd)

Clip skip = 1.

Comments (119)

How do you rename the yaml config file for vpred 02 if it's already renamed to the model name? I use Rundiffusion for my image generation, and I don't know what to do to make the image an actual image instead of a weird abstract painting of color. I have no idea what to do.

Is it because of the sampler I used? Or something else?

sounds like the config file isn't working, just make sure the model file and config file have the same filename and are in the same folder if you're using onsite cloud A1111, for example: 'SE02_vpred.safetensors' and 'SE02_vpred.yaml'

@indigowing Okay, thank you, I believe I’ve figured it out.

Thanks for updating

nice. but isn't this like the hybrid model though? not that i'm complaining. looks good though. also i still can't use it, it wont let me even if i did the monthly payment. sorry but i think i'll be using -BB95 furry mix- more until it has been sorted out

if you're saying you can't use the online services on this site, then it's all civitAI's fault :(

@indigowing i can use it. i just cannot use that particular model

Any chance of enabling v 105 hybrid here? It made for some adorable characters, but the site it was on "Happy Accidents" is shutting down.

i've turned on the online services option but civitAI doesn't let us use all versions :(

is there a full list of artist styles that se02 can make?

i haven't had time to run artist grids lately, but v105's styles should be good reference

@indigowing thanks for the info

Nice, nice.

Is it possible for you to make this (v120) have onsite generation on civit?

i've turned it on, but civitAI won't let us use the specified versions :(

how do I get the vpred models to work on comfyui?

I can't seem to find any information on this anywhere

just as I say it I find it in the ModelSamplingDiscrete node 😆

I always wondered why vpred never seemed to work for me in Comfy, even when I put the config file in the checkpoint folder. Is MSD node required to activate the config?

All you need to do is create a new folder in the “models” directory and put both the model (.safetensors) and the config file (.yaml) inside.

So I've done some research and it turns out there's a separate config folder in the models directory. In older versions of Comfy, this is where checkpoint config files would go, and the file can be run alongside the checkpoint with a "Load Checkpoint With Config" node. This method is currently being phased out in favor of the newer MSD method being described here. MSD comes with its own config for V-Pred, which means config files are no longer necessary.

Could you possibly provide a real world example? When I create a new folder in "\webui\models\" it no longer shows up in the list

I am getting yellow-ish image results... any idea what I could possibly be doing wrong?

if you are using vpred models, check to see if the config file is working, usually this problem is caused by the config file not working properly.

@indigowing i have no idea how to check that oO

@Koljaman vpred models require an additional file to be put next to a model file. The config file should have same name as the model file, but different extension (.yaml). The config file for vpred models can be found at the right bar under the "Files" section.

@mdhyena i have put that config file from the beginning and i still got yellow-ish results :(

@Koljaman With SE02, some LoRAs or certain prompts (like garnetto, donkeyramen) will turn images yellow. You may want to check your prompts and eliminate them step by step.

All you need to do is create a new folder in the “models” directory and put both the model (.safetensors) and the config file (.yaml) inside.

question, how do you use vpred? because mostly it generates blue dots

OK, so I tried everything and it should be correct, but still dots. I would need clear instructions on how to use vpred. ty, I'm using SD

Create a new folder in the models\Stable-diffusion directory, and then put the model and config file together. Just like

models\Stable-diffusion\indigoFurryMix_se02Vpred\indigoFurryMix_se02Vpred.safetensors

models\Stable-diffusion\indigoFurryMix_se02Vpred\indigoFurryMix_se02Vpred.yaml

Then it can be used in your SD.

@renziwei I've been wondering about VPRED models too. - So how do we then select it, in Automatic1111? It doesn't show up in the list, or in the CHeckpoint browser, this way.

Also, won't show up in ComfyUI (with the model pointer to Automatic1111's dir)

Everytime there's an SD update, it's a mystery how stuff works..

@FoxDude Maybe you'd try this SD UI. It's what I'm using.

https://pan.baidu.com/s/1MjO3CpsIvTQIDXplhE0-OA

password:aaki

Download the file

sd-webui-aki-v4.8.7z

Then unzip it, you'll get the SD UI I use.

@renziwei Thanks man. I'm a little apprehensive, cause it's all in Chinese and I'm not familiar with it.

ok, I reckon it did not work because I was missing a VAE, which I never used because I didn't need it before lol

@renziwei okay quick question, I do felt interested to download ur version to see but it requires for me to register their app and doesnt let me download it which idk. not accessible to download

@Deekajdwc Maybe you can find it on github.

Each vpred model needs a config file to work properly. Depending on which generative UI you are using, the solution may differ. For A1111, you just need to make sure that your WebUI version is up to date, and remember to download both the model and the config file (rename them so they share the same name if they differ), and you're good to go <33

@renziwei this is a one-click installer for A1111 webui by an uploader (Aki) from China, very easy to use xd

@Deekajdwc there should be other one-click installers you can find on Google if you aren't familiar with Chinese, but please be aware that what you are downloading may contain viruses. Keep your property safe, don't let those online scammers get the best of you!

Anyone else can't publish images? Mine are just stuck forever analyzing.

It does seem to be a little slower going, and previews only show every 10 iterations. No images at all - even at lower resolutions?

typical civitAI, just be patient plz

Any plans on moving to Pony?

on it.

BRUH WHAT IS THE TOJOKAI NO ROKUDAIME DOING HERE

THIS IS HOW YOU MAKE VPRED MODELS WORK IN AUTOMATIC1111:

In your "\models\Stable-diffusion" (automatic1111) folder, make another folder (the name is irrelevant, I call mine "VPRED") and put your vpred models + config files there.

Like this:

Automatic1111\webui\models\Stable-diffusion\VPRED\indigoFurryMix_se02Vpred.safetensors

I've got the Automatic1111 model dir linked in ComfyUI. VPRED model shows up, but I can't make it work there atm.

That's stupid. As long as you have the model and its config file in the same directory, it will work in Auto1111. You don't need to make a new subfolder for them to work, and quite frankly they've been working just fine for everyone else without having to do this.

@DeviantRava But I did it this way. Its config file didn't work properly until I did so.

@DeviantRava Not having it in a separate folder creates over-saturated dots and silhouettes for me, even if you think it's "stupid". Whether that's an AMD/Intel thing, a GPU-driver thing, or whatever it might be that creates these different results.

@renziwei Same for me. I created this post, because new Stable-diffusion things are rarely well explained.

ComfyUI needs a [ModelSamplingDiscrete] node set to v_prediction, placed before the KSampler.
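For reference, the node order described in the comment above can be sketched as a ComfyUI API-format graph built in Python. Treat it as an illustration under assumptions: the helper name `build_vpred_workflow`, the example prompt, the resolution and the sampler settings are made up for this sketch, and the node input names reflect stock ComfyUI nodes as I understand them, so they may differ between ComfyUI versions.

```python
import json


def build_vpred_workflow(ckpt_name, positive, negative=""):
    """Build a small ComfyUI API-format graph with v_prediction sampling enabled."""
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        # Patch the loaded model so the sampler treats its output as v-prediction.
        "2": {"class_type": "ModelSamplingDiscrete",
              "inputs": {"model": ["1", 0], "sampling": "v_prediction", "zsnr": False}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["1", 1], "text": positive}},
        "4": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["1", 1], "text": negative}},
        "5": {"class_type": "EmptyLatentImage",
              "inputs": {"width": 512, "height": 768, "batch_size": 1}},
        # The KSampler takes the patched model from node 2, not the raw loader output.
        "6": {"class_type": "KSampler",
              "inputs": {"model": ["2", 0], "positive": ["3", 0], "negative": ["4", 0],
                         "latent_image": ["5", 0], "seed": 0, "steps": 25, "cfg": 7.0,
                         "sampler_name": "euler", "scheduler": "normal", "denoise": 1.0}},
        "7": {"class_type": "VAEDecode",
              "inputs": {"samples": ["6", 0], "vae": ["1", 2]}},
        "8": {"class_type": "SaveImage",
              "inputs": {"images": ["7", 0], "filename_prefix": "vpred_test"}},
    }


if __name__ == "__main__":
    graph = build_vpred_workflow("indigoFurryMix_se02Vpred.safetensors", "anthro dragon, solo")
    print(json.dumps(graph, indent=2))
```

The point of the sketch is the wiring: the checkpoint loader feeds ModelSamplingDiscrete, and only its output goes into the KSampler.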

@FoxDude Huh, interesting. I've never had this issue with folders as long as the config file is in the same folder as the checkpoint with the same name. But if I ever run into this issue I'll try it as a solution.

@ice_bear Thanks! I'll try it out

@RaaazzleDazzle Strange. I didn't forget the cfg file, so I'm not sure what that's about.

@ice_bear hubby, I love you ❤

Can you please add the v105hybrid model to the on-site generator?

i think it's civitAI that doesn't let us use all model versions, let me know if I'm wrong <33

👍👍👍

pretty much the most convoluted and confusing versioning and model release i've seen lol why not delete all the ones you think are 'trash' or redundant and clean up the wall of text? i mean no offense ;0

good idea, im just being lazy XD

Nah, you just don't know about us lazy guys at all. Besides, everything could have its own meaning isn't it?

Very bad idea, all the different versions can yield different unique results. The latest release never was the "best" one, because that will depend on the result you're after.

I'm getting images that are blacked out. Am I missing a step?

It might be an issue with your UI settings. Are you using a local UI or are you using a UI over the web?

Cannot get the "indigoFurryMix_se02Vpred" version to work.

The v120 Hybrid works perfectly, but the se02Vpred only produces nonsense grainy images, overly saturated, and completely wrong, as in the attached link: https://drive.google.com/file/d/1EgjtGdf6EOqgmnR3mBaglLF9PwXBfHkM/view?usp=drive_link.

Is there some step I'm missing? I'm using exactly the same settings as I do with v120 Hybrid.

Do you have the .yaml config file downloaded? It's in the extra files dropdown under the model details. It should go in the same folder as the model file.
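If you want to double-check the "same folder, same name" part, a small script along these lines can list checkpoints that have no matching .yaml beside them. This is only a sketch: the function name is made up, and checkpoints that are not vpred will show up in the list too, since they simply don't need a config.

```python
from pathlib import Path


def checkpoints_missing_config(model_dir):
    """Return checkpoint files that have no same-named .yaml next to them."""
    missing = []
    for ckpt in sorted(Path(model_dir).rglob("*.safetensors")):
        # Expected layout: <name>.safetensors and <name>.yaml side by side.
        if not ckpt.with_suffix(".yaml").exists():
            missing.append(ckpt)
    return missing
```

Call it on your own models folder, for example `checkpoints_missing_config("models/Stable-diffusion")` (the path is just an example), and look for the vpred checkpoint in the result.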

@bilboswaggins84210 Thanks that worked - it'd be good if it was mentioned in the description as not everybody is aware of this, and might downvote the model because of it :'(

Serious downgrade in quality. Version 120 is still the best

It depends on what you put in the prompt, but yeah, this version is kinda... sorta inferior to 120. Not to mention the config file being finicky to install properly. I've had more success making good images with 120 than this one.

just use whichever version you like plz, the latest version is not always the best <333

Outstanding. Best SD1.5 furry model available. Can even outperform Pony models with careful prompting and proper workflow.

Any updates on new models, Indigowing?

im doing XL models, plz be patient <33

XL_v1.0 out now: Indigo Furry Mix XL - v1.0 | Stable Diffusion Checkpoint | Civitai

@indigowing YEEE!! I’m getting it right now!! THX!

I've switched to the VPRED model, but all I get is grainy and Oversaturated outputs.

I have the .YAML file placed right next to the .Safetensor, and both are named similarly too. My sampling steps are 20-30, and the CFG scale is 7.

Anything else I should try?

If you have auto1111 try using the CFG rescale script. But even using that: They come out grainy in my end. You might have better luck though (who knows?).
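For context, the rescale idea is roughly this: after normal CFG, scale the guided prediction so its standard deviation matches the conditional prediction again, then blend that with the plain CFG result. The sketch below works on flat lists of numbers and uses made-up function and parameter names; it is not the script's actual code, and real implementations compute the statistics per image on tensors.

```python
from statistics import pstdev


def cfg_with_rescale(cond, uncond, cfg_scale=7.0, rescale=0.7):
    """Classifier-free guidance followed by a std-matching rescale (illustrative only)."""
    # Plain CFG: push the unconditional prediction towards the conditional one.
    guided = [u + cfg_scale * (c - u) for c, u in zip(cond, uncond)]
    std_cond = pstdev(cond)
    std_guided = pstdev(guided)
    if std_guided == 0:
        return guided  # nothing to rescale
    # Bring the guided prediction back to the spread of the conditional one.
    factor = std_cond / std_guided
    rescaled = [g * factor for g in guided]
    # rescale=0 keeps plain CFG, rescale=1 uses the fully rescaled result.
    return [rescale * r + (1.0 - rescale) * g for r, g in zip(rescaled, guided)]
```

High CFG inflates the spread of the prediction, which is one plausible source of the oversaturated look; the blend factor lets you pull it back without lowering CFG itself.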

I personally recommend not bothering with the model. It seems to have an inferior quality compared to the 120 hybrid (not that you can't have success at all, it just seems more difficult to produce a good image to me), and the instructions to install the extra setup file are vague, at best.

Not to mention that for those people who use an external website to produce their images, (like me, with RunDiffusion), all downloaded files are erased after some time, which means that they have to reinstall everything, and that also cuts into their creation time.

There is a list of the artists you can use with this model, but is there one for characters?

I tried to do Mordecai from Regular Show and it goes well, but Margaret doesn't

so I guess there are characters that can be done and some that not

I actually have a character grid project I'm working on, but it's not finished yet xd, it's basically any characters with over 400 images and have simple appearance/features on e621 that can be done.

@indigowing oh, thanks, also, as I've been using it, I've seen that there are characters that can't be made with the styles of x artist but can with others

maybe a whole page or system to filter it xD

There are so many characters and so many artists that the joke doesn't sound so crazy 🤔

all im getting for results are extremely oversaturated pixels, is this supposed to be for stable diffusion? what settings am i supposed to be using?

Did you download the config.yaml file and place it in the same /models directory as the model itself?

So I didn't know why I couldn't run version 120 or the newest one. Is it because I have to download files? Can someone explain to me what I have to do? XP

You may need to provide more information to resolve the issue :o

@indigowing I mean (because I am really new to all of this) I have always just picked the model I wanted to create the images, but the latest ones didn't appear for me, and I read that I have to download some files from the model page to make it work (sorry it took so long to reply)

hey lovely model but could you make it available for use in civitAI?

I've turned on this option, but it has no effect :(

Please bring a SDXL model, which works just as great as the V120! ❤️ I found your tagging prompt system very pleasing to use. Leaving that aside, please add more artists and tags, I noticed several are missing compared to yiffy, especially macro-related ones, like crushing, building_sex, flattened, etc. but also (Dark)wargreymon, like seriously?... Example here of a full E621 list!

Like yiffy the series of models are merges using the fluffyrock models, theoretically they should all be the same, maybe the cause of missing tag problem is the lora finetuning process in merging?

Are there any plans to support flux?

nope, too much effort to train xd

Very cool

Indigo 110 Realistic is still my favorite model. I know you said you'd only be doing VPRED from now on.. But I still hope for another realistic version :)

I think I will continue to do realistic models on XL, but lately I've been a little busy at work and all I want to do after work is playing games xddd, so plz be patient ;)

@indigowing That's fair man. I would say prioritize the important things in your life and do another if you feel it's fun at some point. Regarding XL models, I can't really run them on my GTX1080, but I'll get a new card sooner or later. Just waiting for Nvidia to play fair, so it could be a while.

Well, I'm gonna attempt to take my AI generation offline tomorrow. I looked into that .Yaml file, which triggered some red flags, and while I was looking into that, I noticed that all these .Json files that SDN downloaded to add thumbnails to my models and loras, also have restrictive parameters on them for the models/Loras.

Personally, I'm getting sick to death of corporations trying to own/control every damned thing, right down to what I do on my own computer in my own place with the money I worked for.

And they're getting so damned sneaky about it with the double speak and doing things on our systems behind our backs.. This is a big part of why I've decided to swap to Linux. Like, sssuuuurree... They're just trying to "Improve the user experience", right..

FYI, the two biggest red flags in the .Yaml were;

cond_stage_config: target: ldm.modules.encoders.modules.FrozenCLIPEmbedder

unet_config: target: ldm.modules.diffusionmodules.openaimodel.UNetModel

Both imply sending your data to Open AI. And even if you think I'm just ranting or w.e, well, if you like generating AI art for free, maybe reconsider your stance. Cuz Open AI and google are actively working to own the largest market share in AI software and research while leaving crumbs for the rest of us.

How that will play out, right now, not much surface level effect at all, sides the few odd things I've noticed that could be toggled, worked around, ignored etc. But over the course of the next 5-10 years, they will progressively buy up more and more of the AI market, and work on innovating things that no one else has, and copyrighting those things so no one else Can have them.

Basically, they will eventually make it so we have to buy from them (at an excessive premium) or be stuck with second rate options.

For those that didn't want to read all that, simple answer, don't. for those that did, and agree, leave a thumbs up, and comment if you like. Toodles!

I think the .yaml file is just a config file, which the vpred model needs to generate images correctly. 'ldm.modules' is part of the code structure of SD models ('ldm' is the Latent Diffusion codebase that Stable Diffusion is built on); the 'openaimodel' part is only the name of the file that defines the unet structure, named that way because the code was adapted from code OpenAI open-sourced. It just describes the model's structure and runs on your own machine, it won't send any data to any corps <33

But I agree with what you said that corporations taking everything, This is a sad era where you can do or get away with anything if you have money :((

@indigowing To clarify, my paranoia began when I noticed errors in SDN about not being able to connect to a logging server of some sort, and before that error, my full positive and negative prompt was in the konsole window. I found a file in an SDN subfolder called Text.cpp, and in there it seems it was set to take a log after every 10 iterations (image creations or w.e). So, I edited that to take a log after every 999999999999999999 image creations, and haven't been bothered by that since.

But also, when I tried to load a flux model for the first time, I get an error about how it wants me to log in to huggingface and get a key.

Cannot access gated repo for url https://huggingface.co/black-forest-labs/FLUX.1-dev/resolve/0ef5fff789c832c5c7f4e127f94c8b54bbcced44/model_index.json.

Access to model black-forest-labs/FLUX.1-dev is restricted. You must have access to it and be authenticated to access it. Please log in.

basically, that. Also, some of the paranoia due to an oops on my part. I had it in my head that black forest labs was owned by OpenAI. So, my first logical assumption was that BFL was just trying to soak people for money or stealing their data (Given how stingy OpenAI is with their models).

Also with the config on your model, OpenAI unet? I'm still trying to wrap my head around how unet works. But I don't love the idea of corporations having anything to do with my image creation process, hence why I'm using an offline webui instead of an online process (which would be much simpler given that they're already optimised, and I've only got an 8gb Vram laptop).

There's also these lines in your config;

"minor": false, "poi": false, "nsfw": false, "nsfwLevel": 19,

I know most people will say that I shouldn't care about the first of those 4 things, but sometimes I do generate younger characters, it's not a sex thing (not everything is, despite what people would think seeing most of the images of women on this site..). But it is a tad irritating finding these restrictions in my config files.

Also, after sending the original message yesterday, I noticed that all of my models now have similar config files (though the rest are Json as opposed to Yaml, and only yours has the reference to OpenAI). I'm guessing they were all downloaded to handle the model thumbnails in SDN. But, they also have the model restrictions in them at the top. I know the models don't need these files by default cuz I never seen them when I was using EasyDiffusion, or A1111.

those keys point to python modules that define the model's architecture, which is uploaded by the model author. the json file is completely optional and is only something you have if your program talks to civitai through an extension created by civitai. can you elaborate on what you mean by restrictive parameters?
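To make that concrete: a `target:` value is a dotted Python import path, and loaders typically turn it into a class with `importlib`, using code that is already installed on your machine. Below is a minimal sketch of that lookup. The function name is mine, and the config uses a standard-library class as a stand-in so it runs without any SD code installed.

```python
import importlib


def resolve_target(target):
    """Turn a dotted 'package.module.ClassName' string into the class object."""
    module_name, class_name = target.rsplit(".", 1)
    # import_module only loads Python code that is already installed locally.
    module = importlib.import_module(module_name)
    return getattr(module, class_name)


# Stand-in config: a real SD config would name classes from the ldm package instead.
config = {"target": "collections.OrderedDict", "params": {}}
cls = resolve_target(config["target"])
instance = cls(**config["params"])
print(type(instance).__name__)  # prints "OrderedDict"
```

Nothing in this lookup opens a network connection; the string only says which local class to build.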

@scringle Restrictive parameters = minors false, nsfw false, poi false.

To add some much needed clarification to the first two, contrary to popular belief, they are not one and the same. That is to say, it is possible to create an image with minors that is not inappropriate, so to restrict them altogether due to societal paranoia is just pure insanity. As for nsfw, if you're an adult, who cares if you make nsfw. And POI, as I found out, is short for point of interest. In other words, most models won't recreate real world locations. Which is just dumb. Why restrict artistic freedom.. I'd love to make images of crazy things happening on the streets of Toronto.

I think I get what you mean about the Json things though. Like, maybe they only affect model use on this site? Still, I haven't seen too many real world location models around, so someone's putting in their due diligence to put the Kaibosh on those.

That said, my paranoia regarding restrictions, I don't think it was completely unfounded. I could be wrong, cuz I'm still fairly new to ComfyUI, but I think there may be some built-in restrictions on the other webui's like a1111/SDN. Seems to be much easier to get younger male character in comfy. Of course, it'll still try to put boobs, long hair, and makeup on my boy wizard if I don't put 5-20 female related words and phrases into the negatives, but that's just a problem of 90% of AI art community being perverted straight dudes, or lesbos.

Oh, and for offline programs, they all, even comfyui, seem to use a suspicious amount of data. Why is something that's supposed to be offline, triggering my upload and download on my network whenever I generate an image? I moved to Linux to get away from the grabby hands of corporations.. If they want my data to sell it, they can pay me for it..

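Thanks @indigowing, the problem is now solved in ComfyUI. Strangely, Forge and ComfyUI don't load the config file automatically the way web UI does. In ComfyUI you can specify the config file with CheckpointLoader, but not with CheckpointLoaderSimple. However, CheckpointLoader is hidden and can only be found through search.

My network was acting up earlier and civitai kept swallowing my comments, so if you see several duplicate replies, please ignore them.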
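In ComfyUI API-format terms, the difference between the two loaders might look like the sketch below. The helper name is made up, and as far as I know CheckpointLoader picks the .yaml from ComfyUI's configs folder, so treat that location as an assumption.

```python
def make_loader_node(ckpt_name, config_name=None):
    """Build a loader node dict; use CheckpointLoader only when a config is given."""
    if config_name is None:
        # No way to name a config here; ComfyUI guesses the model type itself.
        return {"class_type": "CheckpointLoaderSimple", "inputs": {"ckpt_name": ckpt_name}}
    return {
        "class_type": "CheckpointLoader",
        "inputs": {"config_name": config_name, "ckpt_name": ckpt_name},
    }


vpred_loader = make_loader_node(
    "indigoFurryMix_se02Vpred.safetensors",
    config_name="indigoFurryMix_se02Vpred.yaml",
)
```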

indigo Did you delete the xl file?

no, it's not here in this model page, there it is:https://civitai.com/models/579632?modelVersionId=874436

I have noticed only two models can be used here. Is that normal? I can't access the other ones; only v115 anime and v110 realistic can be used. I also noticed that some loras I try to edit with used older models like v90 hybrid. Just trying to understand this in my own confusion, that's all XD

This is an old problem, allegedly civitAI has a model limitation per user thing, if you need to keep a new version of a model available on site you need to delete all the old versions of models and keep the number of model versions low, which I'm not too happy about.(They don't even make the option to choose which version we can use on site for online generation available despite the limitation thing):(

For Loras, as far as I know all my sd1.5 characters loras should work on any version of sd1.5 indigo models <333

@indigowing ty for the answer, and yeah I agree this limit is kinda annoying, but it's good to know some versions still work, and they should add that option to choose anyway. No matter, good work on your loras

Goated model. This is too good for 1.5, I literally can't find an XL tune that would be as easy and aesthetic as this (skill issue?). The vpred versions highly recommended!

Yep, also still using this SD 1.5 model. Kinda sad, since I really want better resolutions and less glitches, but this is the only model really working nice for E621 based workflows!

what do I merge Se02_vpred checkpoint with?

Also: What folder do I put the files v90artist styles, and V105 artist styles?

It's better to use vpred models for mixing with SE02. If you want to use normal models for mixing, you need to use traindiff method.

Artist style grids are for reference only, you need tag completion extension with a csv file to fill tags automatically.

Edit : I use the v120Hybrid now and its working since it doesn't need config file

Old :

Hey, I need help pls :')

So, I was using it on automatic1111 and it was working perfectly ! (That's an amazing model, I love it !) but for performance reasons I wanted to try SD.Next (with zluda (AMD GPU)), and the output seems to be very noisy, like we can't see a thing... Other models work fine, but not this one, maybe because the config file doesn't work with SD.Next ? Idk, that's the only difference from the other models... Do you have any idea ?

When generating online on Civitai, I get rather chaotic, meaningless images

It ain’t available. Maybe put it back up for auction?

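Why can I only generate chaotic blobs of color when I use this model? Can anyone tell me?

Which model are you using? If it's the VPRED model, it needs the config file to work properly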

A good prompt!

I wish there was something, anything, in NAI, Illu, Pony, or any XL model that could do what this OG model could do.
