FLUX COMPACT

I am excited to present a collection of compact Flux models, with the Clips (text encoders) and VAE included in a single model file, so that they fit into a variety of existing workflows. The compact versions cover several combinations of Clips and models, and they can be loaded directly with nodes such as 'CheckpointLoaderSimple', with no additional configuration.

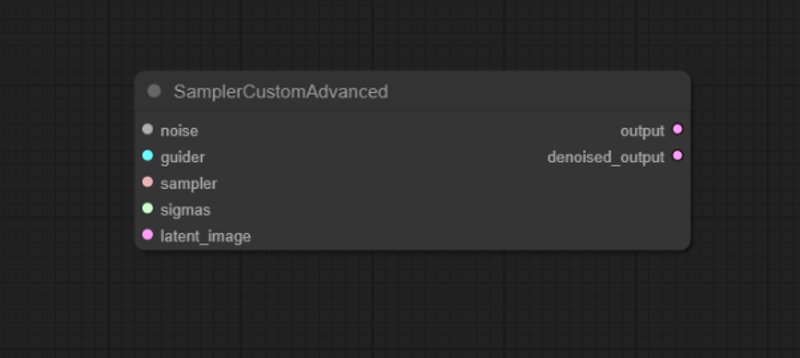

As the sampler node, you can use the ones already available for SDXL with CFG set to 1, or you can use 'SamplerCustomAdvanced', which is dedicated to FLUX.

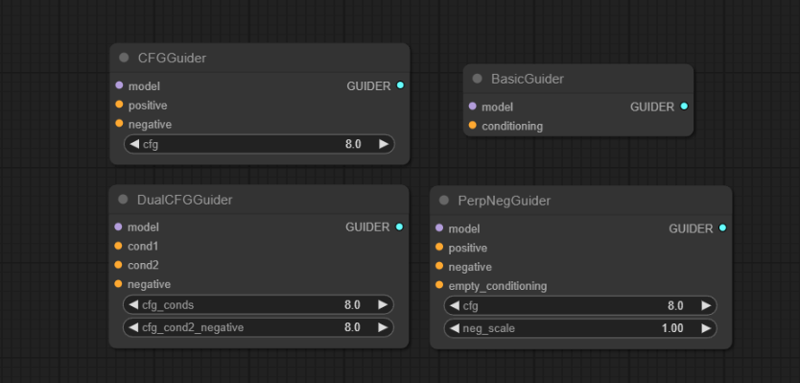

If you use the dedicated FLUX node above, you can use one of the 'Guiders' nodes to manage the guidance instead of the sampler's CFG.

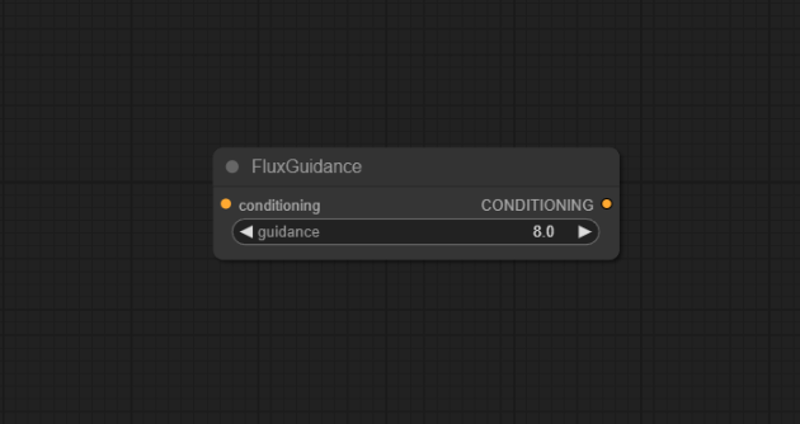

If not, you can place the 'FluxGuidance' node between the prompt and the sampler to manage the guidance with the other samplers already available for SDXL: keep the sampler's CFG at 1 and change the guidance value on the new node instead.
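The wiring described above can be sketched as a ComfyUI API-format graph built in Python. This is a minimal sketch, not the author's workflow: the checkpoint file name, the prompt, the image size, and the guidance value of 3.5 are all illustrative assumptions, and the input names follow the stock ComfyUI nodes as I understand them.

```python
import json


def build_flux_compact_workflow(ckpt_name, prompt, steps=20, guidance=3.5,
                                seed=0, width=1024, height=1024):
    """Return a ComfyUI API-format graph for an all-in-one Flux checkpoint.

    Links are written as [source_node_id, output_index].
    CheckpointLoaderSimple outputs MODEL (0), CLIP (1) and VAE (2).
    """
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        # Positive prompt, encoded with the CLIP bundled in the checkpoint.
        "2": {"class_type": "CLIPTextEncode",
              "inputs": {"text": prompt, "clip": ["1", 1]}},
        # Empty negative prompt; with CFG at 1 it has no practical effect.
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"text": "", "clip": ["1", 1]}},
        # FluxGuidance sits between the prompt and the sampler.
        "4": {"class_type": "FluxGuidance",
              "inputs": {"conditioning": ["2", 0], "guidance": guidance}},
        "5": {"class_type": "EmptyLatentImage",
              "inputs": {"width": width, "height": height, "batch_size": 1}},
        # Regular sampler: CFG stays at 1, guidance comes from node 4.
        "6": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["4", 0],
                         "negative": ["3", 0], "latent_image": ["5", 0],
                         "seed": seed, "steps": steps, "cfg": 1.0,
                         "sampler_name": "euler", "scheduler": "simple",
                         "denoise": 1.0}},
        "7": {"class_type": "VAEDecode",
              "inputs": {"samples": ["6", 0], "vae": ["1", 2]}},
        "8": {"class_type": "SaveImage",
              "inputs": {"images": ["7", 0], "filename_prefix": "flux_compact"}},
    }


# Hypothetical file name and prompt, used only to show the call.
workflow_json = json.dumps(
    build_flux_compact_workflow("flux1-dev-compact.safetensors",
                                "a lighthouse at dusk"),
    indent=2,
)
```

The point of the sketch is the single loader node: model, text encoders, and VAE all come from node "1", so no separate Clip or VAE loaders are needed.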

The final size is about 30% smaller, which makes these models usable on less powerful machines as well, although I am not sure exactly why this works. In my tests, I have not observed any differences in the generated outputs compared to the full versions.
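One plausible contributor to the smaller files is the weight dtype: every version listed on this page stores the Unet with an fp8 `weight_dtype`, which takes one byte per weight instead of the two bytes of a 16-bit format. The sketch below only illustrates that arithmetic. The 12-billion parameter count is the approximate figure published for FLUX.1 and is treated here as an assumption, and the overall saving for a bundled file also depends on the text encoders and VAE, which this sketch does not model.

```python
# Bytes used to store one weight in each dtype.
BYTES_PER_WEIGHT = {"fp16": 2, "bf16": 2, "fp8_e4m3fn": 1, "fp8_e5m2": 1}


def size_gb(params_billions, dtype):
    """Approximate storage in GB (10^9 bytes) for the given parameter count."""
    return params_billions * BYTES_PER_WEIGHT[dtype]


FLUX_PARAMS_B = 12.0  # assumption: approximate Unet/transformer parameter count

full_gb = size_gb(FLUX_PARAMS_B, "bf16")            # 16-bit weights
compact_gb = size_gb(FLUX_PARAMS_B, "fp8_e4m3fn")   # 8-bit weights
unet_saving = 1 - compact_gb / full_gb              # fraction saved on the Unet alone
```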

Let me know what you find in your own experiments.

I claim no credit for the original creation and publication of these models; all rights are reserved to 'Black Forest Labs' and their talented team. My goal is to make their excellent work more accessible to the community.

I hope these compact Flux models will be useful to you and contribute to your creative and technical endeavors. Your feedback is greatly appreciated as we continue to explore and refine these tools together.

Flux.1-Dev Compact

MAIN SAMPLER: Euler + Simple

NORMAL CFG: 1

FLUX GUIDANCE: 2+

BETTER STEPS: 20+

Flux.1-Schnell Compact

MAIN SAMPLER: Euler + Simple

NORMAL CFG: 1

FLUX GUIDANCE: 2+

BETTER STEPS: 4+
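For scripted use, the recommended settings above could be kept in a small lookup. This is only a sketch: the `RECOMMENDED` table and the `settings_for` helper are hypothetical names, and treating "2+", "20+" and "4+" as lower bounds is my reading of the lists above.

```python
RECOMMENDED = {
    "dev": {"sampler_name": "euler", "scheduler": "simple", "cfg": 1.0,
            "min_guidance": 2.0, "min_steps": 20},
    "schnell": {"sampler_name": "euler", "scheduler": "simple", "cfg": 1.0,
                "min_guidance": 2.0, "min_steps": 4},
}


def settings_for(variant, steps=None, guidance=None):
    """Return sampler settings, raising requested values to the recommended floor."""
    rec = RECOMMENDED[variant]
    chosen_steps = rec["min_steps"] if steps is None else steps
    chosen_guidance = rec["min_guidance"] if guidance is None else guidance
    return {
        "sampler_name": rec["sampler_name"],
        "scheduler": rec["scheduler"],
        "cfg": rec["cfg"],  # normal CFG stays at 1 for both variants
        "guidance": max(chosen_guidance, rec["min_guidance"]),
        "steps": max(chosen_steps, rec["min_steps"]),
    }
```

Calling `settings_for("schnell")` returns the 4-step defaults, while `settings_for("dev", steps=30)` keeps the higher step count.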

VERSION CONTENTS:

Flux.1-Dev fp8

Unet: Flux.1-Dev

weight_dtype: fp8_e4m3fn

Clip1: t5xxl_fp8_e4m3fn

Clip2: clip_l

VAE: ae_Dev

Flux.1-Dev fp16

Unet: Flux.1-Dev

weight_dtype: fp8_e4m3fn

Clip1: t5xxl_fp16

Clip2: clip_l

VAE: ae_Dev

Flux.1-Schnell fp8

Unet: Flux.1-Schnell

weight_dtype: fp8_e4m3fn

Clip1: t5xxl_fp8_e4m3fn

Clip2: clip_l

VAE: ae_Schnell

Flux.1-Schnell fp16

Unet: Flux.1-Schnell

weight_dtype: fp8_e4m3fn

Clip1: t5xxl_fp16

Clip2: clip_l

VAE: ae_Schnell

Flux.1-Dev fp16 ALT

Unet: Flux.1-Dev

weight_dtype: fp8_e5m2

Clip1: t5xxl_fp16

Clip2: clip_l

VAE: ae_Dev

Flux.1-Dev fp8 ALT

Unet: Flux.1-Dev

weight_dtype: fp8_e5m2

Clip1: t5xxl_fp8_e4m3fn

Clip2: clip_l

VAE: ae_Dev

Comments (43)

So guys can flux do nsfw yet? If not I sleep... xD

There's another model out, a dev model called unchained that does anatomy quite well. Porn is still not a thing yet, but it does bodies far better. Problem is it also wants to show nips even with bathing suits, so erm... almost there. Might be a little bit overtuned.

@saturngfx so... we sleep a little bit longer xD?

@Malessar for NSFW content, especially if it's hardcore, XL's finetunes still work a lot better, but no problem, don't worry, those will come soon too. And trust me, you'll be surprised. for now, you can sleep a little longer. the time for good training is much longer than before.

@ALIENHAZE ty! I look forward to progress hahaha

What is different in ALT versions?

It has a different weight_dtype baked in, so you can expect slightly different quality and vram usage results as far as I'm aware.

thanks @Art3mas for the reply. only difference is in the fp16 and fp8 versions. @Lera123 in the description you will find all the information for each version.

Loras trained for one weight type can't be used for another without some conversion?

@Lera123 I haven't done a ton of testing, so take my words with a grain of salt; I'll bring updates in the future with more information. But all Dev versions should be fine. I haven't tried using a lora with the Schnell version, and I don't even know if there are any already.

Can you make a version with q8 and t5xxl fp16?

🟡 Could Flux.1-Dev fp16 work with an RTX 4070 Ti Super 16 GB and 32 GB RAM? I use Forge.

No problems, my 16G 4060 Ti takes 1 to 1.5 mins per image with standard Forge settings. RAM isn't an issue (Flux.1-Dev fp16)

@Haircut66 Thx. I still need to select the T5xxl text encoder right?

@zerocool22 no, nothing else is needed

Does it work in Forge ? 🤔

Thanks

Yes, no problems using it (Flux.1-Dev fp16)

What is difference between NewReality and this one ? I would install fp16 dev here otherwise if not major, and i want photorealism mostly.

'Flux Compact' models are just compacted AIO versions of the basic 'Flux' provided by 'BlackForestLabs', with the T5, CLIP, and VAE recommended by 'BlackForestLabs'.

NewReality is instead a finetune to make the model much more photographic as done with the 'XL' versions.

For now it is only a light tuning, but the work continues and in a few days a new Dev version will be released and then the various lighter versions such as Schnell, nf4 and quantized to follow.

There are two features I wish I had in FLUX-based models so badly: round chins on female faces and small breasts. And I'll keep using Pony-based models until I get these two features.

BTW, did anybody try to mix Pony and FLUX? Is that possible at all?

The architectures do not match, so a pure merge between the models is not feasible. there are ways to bypass the system but they are not very functional. maybe for now, apart from them coming out with a tuning of 'Pony Flux' the best thing would be to make various lora based on the datasets or generations of Pony and then merge them into the base model.

Don't worry, everything you ask for will arrive anyway, it's just that with Flux it takes a bit longer due to the size of the tuning. in a few days a new version of my 'NewReality' model will also be released.

Until next time, good continuation and stay tuned!

You basically ask if Flux can make porn images that look more child-like and the answer is "Don't worry, everything you ask for will arrive anyway".

WTF in what a sick world do we live?

@_kaidu_ If you think I must force my wife to get huge silicone breasts instead of her natural small breasts that I like in her, just to match your representation of a "legit, non-sick world", then you should go f*ck yourself, I won't do it.

works great in Ruined Fooocus 1.55 ! thanks a lot

Thanks a lot for the info, good to know that Fooocus has finally updated the compatibility with Flux. The new finetuned versions will arrive in a few days! stay tuned and good continuation!

how u getting them to load? no flux checkpoints show up in the models list in Ruined for me

This is the best model I've ever tried. Thank you! I hope there will be updates

Will it work on 16gb ram and 6gb vram?

even if it does, it's not worth it.

It is better if you use the GGUF versions which are quantizations of the model

Hi, what's the difference between the normal version and the ALT version?

"Flux compact!"

20 gigs...

Honestly the FP8 F1.S Compact is not bad. It requires a lot of rerolling images, but it can run on most normal cards and only needs 4 steps, so it's quick.

The Dev fp16 Alt and the Dev fp8 both worked just fine on 4060 Ti 16gb. I had about 4-5gb of VRAM left over as available on the Dev fp8 and about 2-3gb leftover from the Dev fp16 Alt.

I also noticed that fp16 and fp8 both had nearly identical processing speed of around 2.3 seconds per iteration. Maybe that has to do with the 4060 Ti 16gb having a much narrower 128-bit bus than many other cards? For what I was doing, it seemed reasonable for both versions of the model to run at the same speed. I think in extreme cases fp16 and fp8 are primarily going to differ in quality and memory use. And of course there are discrepancies depending on which software you're running it with (cuda, malloc, TensorRT nodes, etc.)

It is pretty and it doesn't hallucinate, but it seems too curated for me. I like the fuzz on the 90s TV's, and just like that I prefer the data sets used in SD1.5

Still, it was a treat to try this, and get it working so easily.

Thank you so much for allowing me to experience this in this manner.

There is a fundamental misunderstanding about VRAM and Flux. Say you generate at 1 it/s: the PCIe bandwidth from system RAM to your GPU, in GB per second, then only needs to exceed the size of the model data that has to be transferred each iteration, which it almost certainly will. This is how models much larger than 8 GB of VRAM work just fine when you have sufficient system RAM.
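The arithmetic behind this comment can be sketched as follows. It is a simplified model that assumes all offloaded weights are streamed once per iteration and ignores compute time and transfer overhead. The 4 GB of streamed weights is a made-up example, and 32 GB/s is only the approximate theoretical figure for a PCIe 4.0 x16 link, so real throughput will be lower.

```python
def min_bandwidth_gb_s(streamed_gb, iterations_per_second):
    """Bandwidth needed to stream `streamed_gb` of weights once per iteration."""
    return streamed_gb * iterations_per_second


def max_iterations_per_second(streamed_gb, bandwidth_gb_s):
    """Upper bound on iteration rate if weight transfer were the only cost."""
    return bandwidth_gb_s / streamed_gb


# Hypothetical case: 4 GB of weights do not fit in VRAM and live in system RAM.
needed = min_bandwidth_gb_s(4.0, 1.0)             # GB/s required at 1 it/s
ceiling = max_iterations_per_second(4.0, 32.0)    # it/s allowed by a ~32 GB/s link
```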

which model is better for gtx 1070 8gb vram?

22 gb schnell model or 23 gb dev + 8step lora or compact 11 gb model

Perfect! It's so fast with 8 GB of VRAM

I think you should start with Flux.1-Dev fp8, the least demanding model

Hello, good evening. I don't understand any of this, but I wanted to know which one I can try with Flux on 18 GB of DDR3 RAM and a GTX 1050 Ti? I know my PC is very bad, but which one could I try? Whichever is best

where can i get the ae_schnell.. can you give me the link..

Couldn't use.

I have an 8gb AMD and 16gb ram and using webui

Error: module 'torch' has no attribute 'float8_e4m3fn'
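An error like this usually means the installed PyTorch build does not define the float8 dtypes that the fp8 versions rely on. As far as I know these dtypes were only added in PyTorch 2.1, so treat that version number as an assumption. A minimal check, with hypothetical helper names:

```python
import importlib


def supports_fp8(torch_module):
    """True if the given module exposes both float8 dtypes used on this page."""
    return (hasattr(torch_module, "float8_e4m3fn")
            and hasattr(torch_module, "float8_e5m2"))


def check_installed_torch():
    """Return (version, fp8_supported), or None if PyTorch is not installed."""
    try:
        torch = importlib.import_module("torch")
    except ImportError:
        return None
    return torch.__version__, supports_fp8(torch)
```

If `check_installed_torch()` reports no fp8 support, upgrading PyTorch inside the web UI's environment is the likely fix, provided a build with those dtypes is available for the GPU backend in use.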

I tried out the fp8, thank you for sharing! The results are exactly the same as Comfy's fp8 weights

Of all the Flux large models I have evaluated to date, this is the one with the widest adaptability and the best image generation results. Many thanks to the author for sharing; you have shown us the vast potential of this model!
