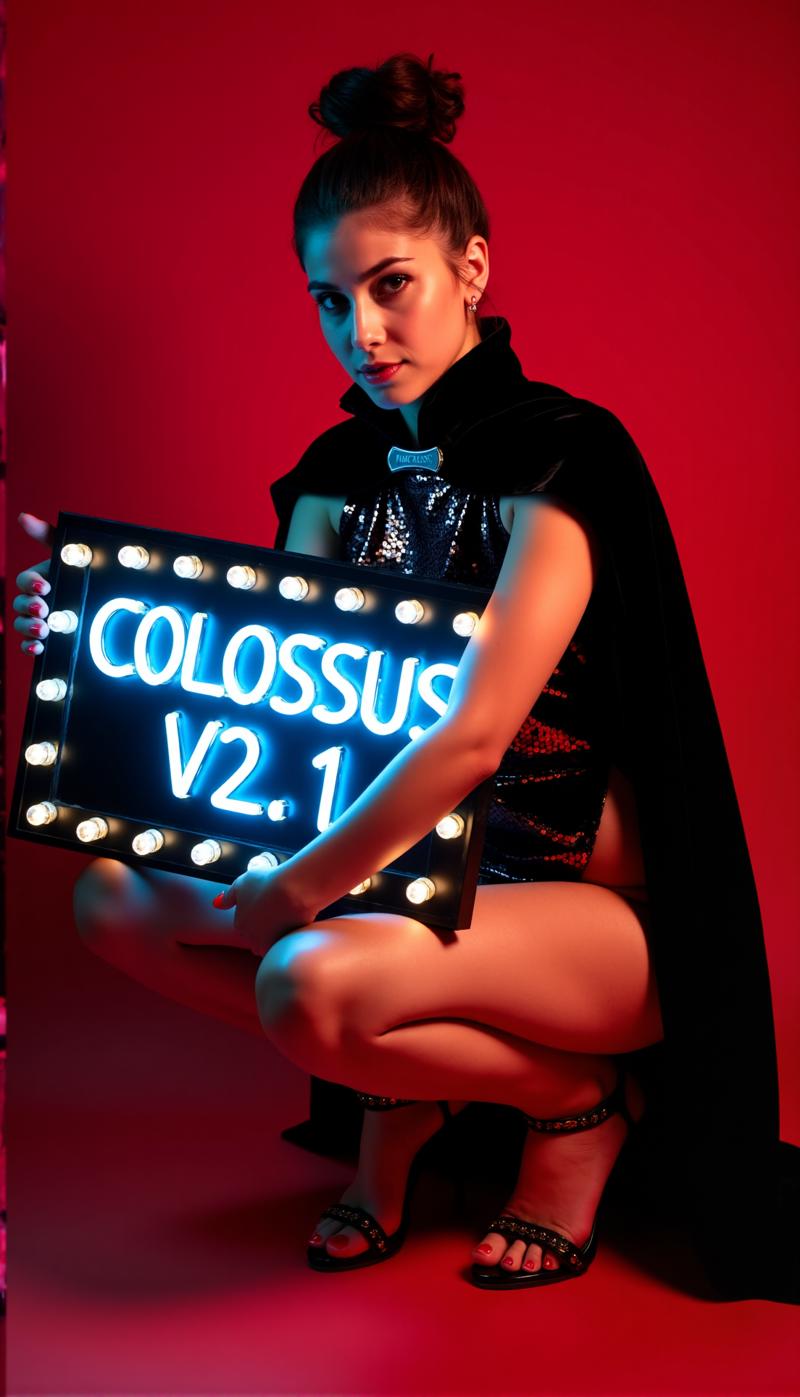

Deep under a mountain lives a sleeping giant, capable of either helping humanity or causing destruction...

A Colossus arises...

After my SDXL series, it's time for the FLUX series of this project. This time I trained it from the ground up, using my own images. I created them with my Flux schnell model DemonFlux/Colossus Project schnell, with my SDXL model Colossus Project 12 as a refiner.

This Flux checkpoint can produce nearly anything. Colossus is very good at creating extremely realistic pictures, anime and art.

If you like it, feel free to give me some feedback. If you want to support me, you can do so here. I have spent a good amount of money on a computer that is actually capable of training Flux models, and training and testing also take a lot of time and electricity.

https://ko-fi.com/afroman4peace

Version V12 "Hephaistos"

Publishing this checkpoint makes me happy and sad at the same time. V12 will be the last checkpoint of this series. The main reason is the upcoming EU AI laws; another reason is the license of Flux.1 Dev itself. Thank you all for the support! I have sunk a lot of time into this project over the last year. Now it's time to move on to a different project.

Anyway, I will end this series on a high note...

V12 is built on V10B "BOB", but it basically got the best parts of this series block-merged into this one checkpoint. (It was the result of a new merge method that took about 1.5 hours to merge and used up my entire 128GB of RAM.) I also enhanced the face and skin textures compared to V10. The eyes are much more realistic and more "alive" than before.
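The merge method used for V12 is not described here, so the following is only a generic sketch of per-block weighted merging, assuming two checkpoints with identical keys. The `double_blocks.` and `single_blocks.` prefixes follow the key naming of Flux transformer checkpoints; the function name, the ratios and the toy tensors are hypothetical, and real weights would be loaded with a library such as safetensors rather than held as Python lists.

```python
from typing import Dict, List

Tensor = List[float]  # toy stand-in for a real weight tensor
StateDict = Dict[str, Tensor]


def block_merge(a: StateDict, b: StateDict, ratios: Dict[str, float],
                default: float = 0.5) -> StateDict:
    """Interpolate two checkpoints key by key with per-block ratios.

    The longest prefix in `ratios` that matches a key decides how much of
    checkpoint `b` is mixed in (0.0 keeps `a`, 1.0 takes `b`); keys with no
    matching prefix use `default`.
    """
    if a.keys() != b.keys():
        raise ValueError("checkpoints must have identical keys")
    merged: StateDict = {}
    for key, weights_a in a.items():
        matches = [prefix for prefix in ratios if key.startswith(prefix)]
        alpha = ratios[max(matches, key=len)] if matches else default
        merged[key] = [(1 - alpha) * x + alpha * y
                       for x, y in zip(weights_a, b[key])]
    return merged


# Hypothetical example: keep A's double blocks, take B's single blocks.
model_a = {"double_blocks.0.img_attn.qkv.weight": [1.0, 2.0],
           "single_blocks.0.linear1.weight": [0.0, 4.0]}
model_b = {"double_blocks.0.img_attn.qkv.weight": [3.0, 6.0],
           "single_blocks.0.linear1.weight": [2.0, 8.0]}
result = block_merge(model_a, model_b,
                     {"double_blocks.": 0.0, "single_blocks.": 1.0})
print(result)
```

Choosing ratios per block, rather than one global ratio, is what lets a merge keep, for example, the composition behaviour of one model and the texture behaviour of another.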

Test it out yourself and give me feedback about V12. "Thanks" to my slow internet connection, I will upload the FP8_UNET first, followed by the FP8 "all in one" version, and then the FP16_unet and FP16_BEHEMOTH. I will also try to get it converted into int4 and fp4 (wish me luck with that).

As always, let me know what you think of V12.

Version V12 "Behemoth" (AIO)

This "all in one" model is the best of my V12 series... and, of course, the biggest in size :-)

The Behemoth has a custom T5xxl and Clip_l baked into the model. If you prefer quality over quantity, this is the checkpoint for you!

Version V12 FP4/int4

Thanks to Muyang Li from Nunchaku Tech, who did the quantization of V12 (https://huggingface.co/nunchaku-tech), and to their amazing Nunchaku!

This version is truly mind-blowing: it combines quality with speed like never before.

ATTENTION!

There are two versions: FP4 and int4. FP4 is for Nvidia 50xx graphics cards only, while int4 works with 40xx cards and below (you need at least a 20xx series card).
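The compatibility rule above fits in a few lines. This is a hypothetical helper (the name `nunchaku_variant` and the idea of passing the GeForce series as a plain number are assumptions for illustration); it only restates the rule from the text.

```python
def nunchaku_variant(gpu_series: int) -> str:
    """Pick the V12 quantized variant for an Nvidia GeForce series.

    gpu_series is the generation number, e.g. 20, 30, 40 or 50
    (for an RTX 3090 Ti, pass 30).
    """
    if gpu_series >= 50:
        return "fp4"   # FP4 is for 50xx cards only
    if gpu_series >= 20:
        return "int4"  # int4 works on 20xx, 30xx and 40xx cards
    raise ValueError("at least a 20xx series card is required")


print(nunchaku_variant(30))
```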

You can also download both versions directly here: https://huggingface.co/nunchaku-tech/nunchaku-flux.1-dev-colossus

INSTALL GUIDE and WORKFLOW

Here is a quick install guide and WIP workflow.

https://civarchive.com/articles/17313

DETAILED GUIDE for the Workflow

https://civarchive.com/articles/17358

I am still working on my new workflows for Nunchaku, so the following workflow is still very much WIP (work in progress). I will add a detailed article on the weekend.

Version V12 FP16_B_variant

Thanks to a small mistake I made late at night (2AM), I renamed and uploaded the "wrong" checkpoint. It's a very experimental checkpoint that was never meant to be published. It's not well tested, but it performed really well when I created the showcase. It might even be better than the standard version.

It tends to lean toward Asian faces. That is because I wanted to test something to mix into a side project I am still working on. Tell me about your experience with this checkpoint :-)

Version V12 AIO FP8

This version is an all-in-one version of V12, which means that all clips are baked into it. It gives the same output as the FP8_unet with my custom clip_l.

Version V12 GGUF Q5_1

This version was a request. Its quality is not bad.

Version V10B "BOB"

This is an alternative version of V10. I created it to improve the FP8 version of V10. In general, this FP8 version is more precise and has better colors. Sadly, I haven't had much time recently (real life comes first), which is why this took so long. Let me know if you prefer this version. I also have an FP16 version of "BOB". Depending on the feedback, I will also consider publishing an int4 version.

WORKFLOW:

Here is the workflow for V12 and V10: https://civarchive.com/articles/17163

Version V10_int4_SVDQ "Nunchaku"

First, I want to thank theunlikely (https://huggingface.co/theunlikely), who converted the FP16_Unet into int4_SVDQ. Go visit his page and leave a like.

This version is more or less equal in quality to the FP8 version. Even in the normal mode of my workflow, it is about 2-3X faster than the regular model. With the "fast mode" of the workflow, I can render a 2MP image in around 19 seconds on my 3090 Ti.

What is SVDQ "Nunchaku"?

This new quantization method shrinks Flux models (in this case a native FP16 model) from 24GB to about 6.7GB. But that's not all: generations also run faster than ever before without losing too much quality. Sure, you will see a small difference compared to my 32GB Behemoth, but that one needs a lot more VRAM/RAM to even run.

For more information visit: https://github.com/mit-han-lab/ComfyUI-nunchaku?tab=readme-ov-file

Installation: Please visit my workflow/install guide: https://civarchive.com/articles/15610

Version V10 "Behemoth" (FP16_AIO)

This version is still experimental. The main focus was to get more realistic results. I also managed to reduce some of the "Flux lines". It is based on Colossus Project V5.0_Behemoth, V9.0 and another project I call "Ouroborus Project".

The FP16 version is very stable. I am also releasing an FP8 version soon. It is also very good, but not as stable.

I'll let you experiment with it, though. Tell me what you think of this version.

Have fun creating :-)

Version V9.0:

Well, I have a lot to explain. First: why is it even V9.0?

I recently moved into a new flat, and because of some errors by the internet provider I had no real internet connection. So while I was busy with the move, I left my computer running. The result was that I created a lot of checkpoints (most of them broken). I do have some very good V8 versions, though, which I might publish as well.

What changed?

I trained new faces and skin textures into the model, basically by taking the best results of V5.0. The model also got feet/legs training for better anatomy: the V5.0 versions sometimes cut off the head and feet, and I think I managed to fix some of those issues.

In addition, I trained it with more of my own landscape images. And yes, I did all of that while moving into a new flat. I think the overall training took about 2 weeks of computing time, which isn't exactly cheap (every hour costs me around 25 cents in electricity).
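To put the electricity figure in perspective, here is the arithmetic, assuming "about 2 weeks" means 14 days of continuous running at the stated 25 cents per hour (the currency is not specified in the text).

```python
HOURS_PER_DAY = 24
training_days = 14    # assumption: "about 2 weeks", running around the clock
cost_per_hour = 0.25  # "around 25 cents" per hour, as stated above

training_hours = training_days * HOURS_PER_DAY
total_cost = training_hours * cost_per_hour
print(f"{training_hours} h x {cost_per_hour:.2f} = {total_cost:.2f}")  # 336 h x 0.25 = 84.00
```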

Anyway, I hope that you like this version. If you want to support me, post some nice images, or maybe even tip me with Buzz or on Ko-fi.

Tell me what you think of it :-)

Version 5.0:

V5.0 is actually based on V4.2 and V4.4 (which will also be released soon). It got additional training on skin details and on anatomy in general, which mostly fixed things like hands and nipples. The face details are much better. I also tried to fix some minor Flux lines.

In general, this version is more realistic than V4.2 and better with small details. Like Version 4.2, this version is a hybrid de-distilled model. You can use it with basically the same settings as V4.2.

Here is also a new workflow to play with: https://civarchive.com/articles/11950/workflow-for-colossus-project-flux-50

Tell me what you think of this version compared to 4.2 or V2.1.

Version 4.4 "Research":

I have added this version just for completeness. It's slightly more realistic than V4.2 and is the base of Version 5.0. You can try it if you want. You can also use the V5.0 and V4.2 workflows with it.

Version 4.2:

This version is basically a further development of Demoncore Flux and Colossus Project Flux. The goal was to get a more stable outcome with better skin textures, better hands and more variety of faces. So I trained it on a hybrid model which is partly Demoncore Flux. I also enhanced the nipples and NSFW a bit. Tell me if you prefer V4.2 over version 2.1 :-)

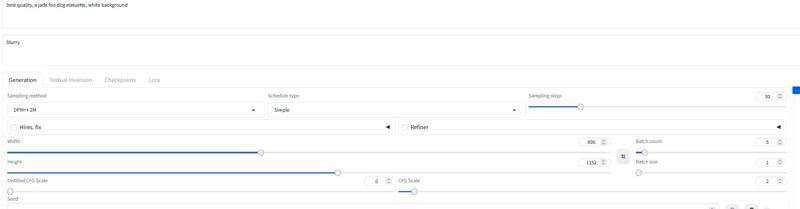

For the showcase images, I only used native images at SDXL resolution or 2MP resolution (for example 1216x1632). This model can handle even higher resolutions: I have tested this checkpoint at up to 2500x2500, but I only recommend going up to around 2000x2000.
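The 2MP figure is easy to check: 1216x1632 is just under two million pixels. The helper below is a hypothetical sketch for picking other sizes near a pixel budget. It assumes width and height should be multiples of 16 (which suits Flux's 8x VAE downscaling followed by 2x2 patching); that constraint is an assumption, not something stated in this description.

```python
import math


def snap(value: float, multiple: int = 16) -> int:
    """Round to the nearest multiple, never going below one multiple."""
    return max(multiple, round(value / multiple) * multiple)


def size_for_megapixels(aspect_w: int, aspect_h: int, megapixels: float,
                        multiple: int = 16) -> tuple[int, int]:
    """Return (width, height) near `megapixels` for the given aspect ratio."""
    target = megapixels * 1_000_000
    width = math.sqrt(target * aspect_w / aspect_h)
    height = target / width
    return snap(width, multiple), snap(height, multiple)


print(1216 * 1632)                     # 1984512 pixels, i.e. about 2 MP
print(size_for_megapixels(1, 1, 4.0))  # (2000, 2000), the recommended upper range
```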

For the settings, I recommend about 30 steps and a cfg of 2-2.5. I mostly use 2.2 or 2.3 in my workflow. For the showcase, I used DPM++ 2M with the Simple scheduler.

I will add more versions soon, but I don't have much time before Christmas.

Settings

I will add a new dedicated Comfy workflow soon. For now, you can always download the showcase images and open them in Comfy to load their workflow.

The "All in One" version also works well with Forge.

Basically, it works with the same settings as Version 2.1 (see below).

Give it 20-30 steps with a cfg of around 2.2.

Version 2.1_de-distilled_experimental (MERGE)

This version is completely different and actually works differently from a normal Flux model!

It's an experimental merge between my version 2.0 and a de-distilled version (https://huggingface.co/nyanko7/flux-dev-de-distill). This happened a bit by accident, but the results are mind-blowing: you get incredible details, and it follows prompts extremely well. So the next thing I am going to do is train on the de-distilled model directly; I have already done some test LoRAs with it. This is highly experimental, so please let me know if you find errors that are not listed below. If you have good images, post them, and post the bad ones too, since they can help improve things :-). Maybe also try version 2.0 and tell me which type of checkpoint suits you best.

!Attention!

The normal Flux workflow doesn't work with this version. You NEED to download my workflow for it!

You can also figure something out yourself, but please don't blame me for bad images. Also, this is a highly experimental model... check the downsides below.

Up- and downsides of this checkpoint:

Well, this checkpoint can create extreme detail, but that comes at a price: it's slow compared to the normal Flux checkpoints. The upside is that you often don't need an additional upscale anymore. Instead of the Flux Guidance, this model uses the cfg scale, which also means that it will not work with standard workflows.

You can use negative prompts! They help keep things you don't want out of the image.
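Why does real CFG make this model slower but enable negative prompts? With classifier-free guidance, the sampler evaluates the model twice per step, once with the positive prompt and once with the negative prompt, and extrapolates between the two predictions. Distilled Flux dev instead receives a single guidance value as an input (the Flux Guidance node), so one evaluation per step is enough there. The sketch below shows only the combination step on toy numbers; `toy_denoise` is a hypothetical stand-in for a real model call.

```python
from typing import Callable, List

Vector = List[float]
Denoiser = Callable[[Vector, str], Vector]


def cfg_prediction(denoise: Denoiser, latent: Vector, prompt: str,
                   negative: str, cfg: float) -> Vector:
    """Classifier-free guidance: result = uncond + cfg * (cond - uncond).

    The negative prompt fills the "unconditional" slot, so the prediction
    is pushed away from whatever the negative prompt describes.
    """
    cond = denoise(latent, prompt)      # model call 1: positive prompt
    uncond = denoise(latent, negative)  # model call 2: negative prompt
    return [u + cfg * (c - u) for c, u in zip(cond, uncond)]


calls: List[str] = []


def toy_denoise(latent: Vector, text: str) -> Vector:
    """Hypothetical stand-in for the diffusion model; records each call."""
    calls.append(text)
    shift = 1.0 if "blurry" in text else 0.0
    return [x + shift for x in latent]


pred = cfg_prediction(toy_denoise, [0.5, 1.0], "a portrait", "blurry", cfg=3.0)
print(pred, len(calls))  # [-1.5, -1.0] 2
```

The two calls per step are the main reason a de-distilled model needs roughly twice the compute of a distilled one at the same step count.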

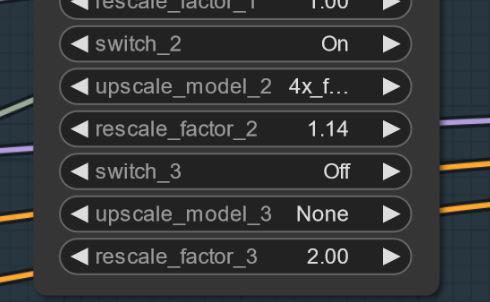

Sometimes artifacts can appear. You can solve this with a small and simple upscale (I am working on this). Here is an example; strangely, this doesn't happen with every seed. UPDATE: This is not an issue with the model itself, but rather with the workflow. I am working on a fix for it. If this happens, you can try setting the first upscale to 1.14 instead of 1.2.

Settings and Workflow V2.1:

Here you can find the workflow for it: https://civarchive.com/articles/8419

Settings: unlike normal Flux, it doesn't need the Flux Guidance scale. Use the cfg instead. I mostly use a cfg of 3 in the workflow. Some images may require lower cfg scales.

The most important thing is probably to turn off the Flux Guidance scale.

Without the workflow, I have tested it with 30 steps and a cfg of 2-3. These are probably also good settings for Forge. Try experimenting here.

I recommend using the word "blurry" in the negative prompt.

Sampler and scheduler:

You can pick from a range of working samplers:

Euler, Heun, DPM++ 2M, DEIS and DDIM all work great.

I mostly used "simple" as the scheduler.

If you find better settings tell me.. :-)
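As a compact summary of the V2.1 settings above, here is a hypothetical settings dict and check function, written with the internal names ComfyUI's KSampler uses for these samplers and the scheduler (`euler`, `heun`, `dpmpp_2m`, `deis`, `ddim`, `simple`). It is illustrative only and not part of the author's workflow.

```python
# ComfyUI's internal names for the samplers and scheduler listed above.
WORKING_SAMPLERS = {"euler", "heun", "dpmpp_2m", "deis", "ddim"}

V21_SETTINGS = {
    "steps": 30,
    "cfg": 3.0,                 # 2-3 works; some images prefer lower values
    "sampler_name": "dpmpp_2m",
    "scheduler": "simple",
    "negative": "blurry",       # recommended negative prompt
    "flux_guidance": None,      # Flux Guidance turned off for V2.1
}


def check_settings(s: dict) -> list[str]:
    """Return warnings for settings outside what was tested above."""
    warnings = []
    if s["sampler_name"] not in WORKING_SAMPLERS:
        warnings.append(f"untested sampler: {s['sampler_name']}")
    if s["scheduler"] != "simple":
        warnings.append("testing was mostly done with the 'simple' scheduler")
    if not 2.0 <= s["cfg"] <= 3.0:
        warnings.append("cfg outside the tested 2-3 range")
    if s.get("flux_guidance") is not None:
        warnings.append("turn off Flux Guidance for the de-distilled model")
    return warnings


print(check_settings(V21_SETTINGS))  # []
```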

For Forge, I recommend using the AIO model. Here is an example setting for Forge:

Version 2.0_dev_experimental

Well... this is an experimental version. The goal was to create a more coherent and faster model. I trained in some additional LoRAs of my own and then merged the resulting models in a special way (Tensor merge). It has a custom T5xxl, which I modified with "Attention Seeker". For extra speed and quality, I merged in the Hyper Flux LoRA from ByteDance. This shifted the working range, so fewer steps are needed. Let me show you what this means. Here is the main title image:

16 steps V 2.0

30 steps V 1.0

30 steps V 1.0
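Merging a LoRA such as Hyper-SD into a checkpoint usually means folding its low-rank update into the base weights: W' = W + scale * (B @ A), where A and B are the LoRA's down and up matrices. The "special way (Tensor merge)" described above is not documented, so this is only the standard LoRA-folding step, shown with tiny hypothetical matrices in plain Python. Real LoRA files often also store an alpha value, which adds an extra alpha/rank factor to the scale; that is left out here.

```python
from typing import List

Matrix = List[List[float]]


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain-Python matrix product x @ y."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)]
            for row in x]


def fold_lora(w: Matrix, down: Matrix, up: Matrix, scale: float) -> Matrix:
    """Return W + scale * (up @ down).

    w:    (out, in)   base weight
    down: (rank, in)  LoRA "A" matrix
    up:   (out, rank) LoRA "B" matrix
    """
    delta = matmul(up, down)
    return [[wij + scale * dij for wij, dij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


base = [[1.0, 0.0], [0.0, 1.0]]  # toy 2x2 weight
down = [[1.0, 2.0]]              # rank 1
up = [[1.0], [0.5]]
print(fold_lora(base, down, up, scale=0.5))  # [[1.5, 1.0], [0.25, 1.5]]
```

Once folded in, the LoRA no longer has to be loaded separately, which is how a merged checkpoint can carry the speed-up on its own.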

Downsides:


Well, first: this version is a bit bigger than the last one. Second, I still have to create the Unet-only version. I will update this when it's done.

Settings and Workflow V2.0:

You can now run the model with fewer steps: 16 steps are equivalent to 30 steps with the old model.

I still recommend using around 20-30 steps, because it will get you more quality in most cases.

Sampler: I prefer Euler with Simple as the scheduler. The guidance can be set from 1.5 to 3 (feel free to test outside this range, of course). A guidance of 1.8 still works well for realistic images. You can also test other samplers: DPM++ 2M and Heun also work great.

Workflow 2.0:

I have created a new workflow for V2.0 and V1.0. It includes the new Flux Prompt Generator, and I also got the second upscaler stage working. https://civarchive.com/articles/7946

Forge:

I have also tested this model with Forge, and it worked very well. The images may differ between ComfyUI and Forge, though.

Version 1.0_dev_beta:

This model is my first entry in the series, so please give me some feedback and post some images. This helps me improve the project further. There are several versions to choose from. The best version in terms of quality is the FP16 version, but it is huge and needs a beefy graphics card and lots of RAM. The FP8 version is the one I consider a good balance between quality and performance. If you want a GGUF version, download the Q8_0. The GGUF Q4_0/Q4_1 versions were a request; they are small in size, but you will lose some quality.
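To see why the versions differ so much in size, here is a rough estimate from bits per weight, assuming the roughly 12 billion parameters of the FLUX.1 [dev] transformer. The GGUF figures follow the GGML block layouts (for example, Q8_0 stores 32 weights in 34 bytes and Q4_0 stores them in 18). Real files come out somewhat different because some layers are kept at higher precision, and the text encoders and VAE baked into the AIO versions are not counted.

```python
PARAMS = 12e9  # assumption: ~12B parameters in the FLUX.1 [dev] transformer

# Bits per weight; the GGUF values follow the GGML block layouts.
BITS_PER_WEIGHT = {
    "FP16": 16.0,
    "FP8": 8.0,
    "GGUF Q8_0": 34 * 8 / 32,    # 32 int8 weights + one fp16 scale
    "GGUF Q6_K": 210 * 8 / 256,  # 256-weight super-block
    "GGUF Q5_1": 24 * 8 / 32,    # 5-bit weights + fp16 scale and min
    "GGUF Q4_1": 20 * 8 / 32,    # 4-bit weights + fp16 scale and min
    "GGUF Q4_0": 18 * 8 / 32,    # 4-bit weights + fp16 scale
}


def size_gb(bits_per_weight: float, params: float = PARAMS) -> float:
    """Approximate size in GB (10**9 bytes) of the transformer weights alone."""
    return params * bits_per_weight / 8 / 1e9


for name, bpw in BITS_PER_WEIGHT.items():
    print(f"{name:10s} {bpw:6.3f} bpw  ~{size_gb(bpw):5.1f} GB")
```

This lines up with the 24GB FP16 figure mentioned for the SVDQ conversion and with the comment below that a Q6 file fits completely into 12GB of VRAM while Q8 only loads partially.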

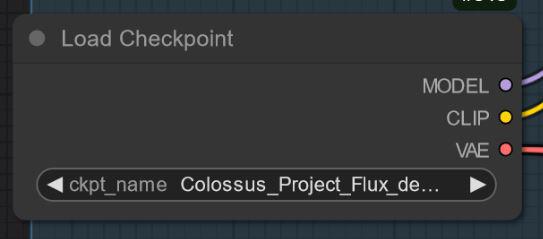

There are basically two types of my models "All in one" models which only needs one file to download. It got the Clip_l, T5xxl fp8 and the VAE baked in. (look down below). Place this inside your checkpoints folder.

The other versions are the UNET-ONLY ones. Here you need to load all files seperately.

In any case you need to download my Clip_L for those to get them working right..

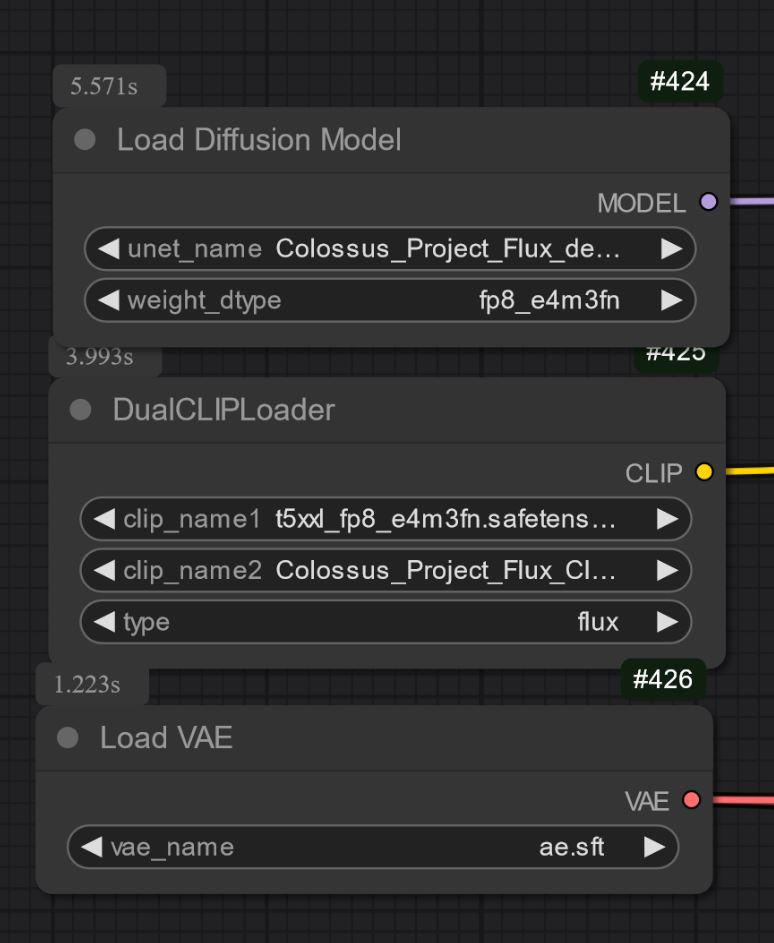

Also important is to choose the right T5xxl clip. For the FP8 version it is the fp8_e4m3fn t5xxl clip. For the FP16 it is the FP16 clip. make sure to select the default weight type. (down below is a example image for the fp8 version)

For the GGUF version you need the GGUF loader!
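The placement rules above can be turned into a small, hypothetical checker. It assumes a standard ComfyUI folder layout (`models/checkpoints`, `models/unet`, `models/clip`, `models/vae`; newer ComfyUI builds also accept `models/diffusion_models` and `models/text_encoders`). The Colossus file names are placeholders, not the actual download names; the T5 names are the ones commonly distributed for Flux, and the VAE entry (`ae.safetensors`, the Flux VAE file name) is an assumption, since the text only says the VAE is baked into the AIO files.

```python
from pathlib import Path

# Hypothetical file names: replace them with the files you actually downloaded.
CUSTOM_CLIP_L = "colossus_clip_l.safetensors"
REQUIRED = {
    "aio": {"checkpoints": ["colossus_aio.safetensors"]},
    "unet_fp8": {
        "unet": ["colossus_unet_fp8.safetensors"],
        "clip": [CUSTOM_CLIP_L, "t5xxl_fp8_e4m3fn.safetensors"],
        "vae": ["ae.safetensors"],
    },
    "unet_fp16": {
        "unet": ["colossus_unet_fp16.safetensors"],
        "clip": [CUSTOM_CLIP_L, "t5xxl_fp16.safetensors"],
        "vae": ["ae.safetensors"],
    },
}


def missing_files(comfy_root: Path, variant: str) -> list[Path]:
    """Return the expected files that are not present under comfy_root/models."""
    models = comfy_root / "models"
    return [models / folder / name
            for folder, names in REQUIRED[variant].items()
            for name in names
            if not (models / folder / name).is_file()]


if __name__ == "__main__":
    for path in missing_files(Path("ComfyUI"), "unet_fp8"):
        print("missing:", path)
```

The FP8 and FP16 entries differ only in the UNET and T5 files, which mirrors the rule that the T5xxl precision should match the model version.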

Some known things for now regarding V1.0:

This is just the first model of the series, so at the moment it might struggle with some prompts or styles like art. The next version will receive more training. Let me know what the model can't do.

Settings and Workflow:

I have tested it with around 30 steps, Euler with Simple as the scheduler. The guidance can be set from 1.5 to 3 (feel free to test outside this range, of course).

A guidance of 1.8 works well for realistic images.

Feel free to experiment with those settings.. If you get good results, please post them.

I have uploaded the showcase images as the training data download; they contain the workflow for Comfy. Here is the workflow for download: https://civarchive.com/articles/7946

"All in one" model:

UNET_only:

You need to download the clip_L as well. It's the 240MB file.


GGUF: I have added the workflow for GGUF here: https://civarchive.com/articles/7946

Important:

The dev model is not intended for commercial use. For that, I will publish the "schnell" model somewhere else. This one is intended for personal or scientific use.

LICENCE:

https://huggingface.co/black-forest-labs/FLUX.1-dev/blob/main/LICENSE.md

Credits:

theunlikely https://huggingface.co/theunlikel (thank you again)

Version 2.1/V4.2/5.0: Flux_dev_de-distill from nyanko7

https://huggingface.co/nyanko7/flux-dev-de-distill

From V2.0: Hyper Lora from ByteDance https://huggingface.co/ByteDance/Hyper-SD

Black Forest Labs for their amazing Flux model https://huggingface.co/black-forest-labs


Comments (39)

Hi all,

Check out my workflow section https://civitai.com/articles/8419/workflow-for-colossus-project-flux-21

I have looked into my workflow and found out that the first upscaler can cause some artifacts or a "frame" on the left side of the image.

I have also added a simpler workflow there. If you are using Forge, I have added some example images for it in the gallery.

In general you can always copy the workflows from the images.

De-distilled model is really nice, and it works well for training as well. The de-distilled model that you merged into was kind of deficient in some ways, and the merge seems to have fixed that. Thanks!

Technically speaking, it is a sort of hybrid checkpoint now. The way I merged it means that both original checkpoints are present within the model. I will of course train more on it myself. I have an FP16 version as well. I trained the model with Prodigy. This seems to work and gives amazing results. One thing I have noticed too is that some of my old LoRAs trained with V2.0 work better now with V2.1.

@Afroman4peace Nice!

Honestly, I'm really surprised that people don't use Prodigy more often. It's pretty much my go-to for lora training, and I get better results, faster than with adamw or adafactor. I wonder how many people gave up on it because they didn't know you have to set the learning rate to ~1.

I'm probably going to stick with this model as my primary one both for generating and training. I'll let you know if I run into any obvious flaws, but I haven't yet. :)

@_Envy_ Oh, thanks for the Buzz :-) Yes, Prodigy needs a learning rate of 1. I am training it with the Flux trainer inside ComfyUI. It took a while to find good settings, but now it works like a charm. Lately I even managed to train something at a high quality level with just 5 images. At first I was very hesitant to publish this because I didn't want to merge much into this project. The results blew me away, however. I will use 2.1 as the new training base and retrain the things I did with V1. nyanko7 did a great job too. The de-distilled version is amazing on its own.

@Afroman4peace

nyanko7 did a great job too.

Yes, that's very true. My comment didn't tell the full story. There are a lot of things it does better than vanilla Flux (with much more control over image texture), and being able to use real CFG and negative prompts is amazing.

@_Envy_ I think people don't use Prodigy because you need some really good hardware for it. Before I built my new computer with a 3090 Ti, it was impossible for me to use it. Even now I can run into out-of-memory issues when using it with settings that are too high.

I have a question for everyone: which version do you prefer?

"All in One", Unet_Only or GGUF?

If it doesn't use some ultra-special T5 clip, I don't really see the point in downloading it again and again in all-in-ones. Personal opinion, of course.

I would love an FP16 unet. But I'll use the FP8 unet until then.

UNET only and GGUF, personally.

"All in One" is great. Need a FP8 AIO

I already have T5 and Clip so -> FP16/BF16 Unet_Only

GGUF Q8 using GGUF Q8 T5, better quality than FP8, not as good as FP16 but can run on all hardware

I have only tried 2.1_de-distilled_AIO_exp and I think it is very good, the others I have not tried because being a novice I do not understand the differences ☺️

I prefer Q6 GGUF in order to get it completely in 12gb VRAM. Q8 works also but can only be loaded partially, probably because the clip also needs some VRAM.

I have started a new image Contest for Colossus Project 2.1 https://civitai.com/bounties/5256/edit

2.1 works very well with most of the LORA I've tested and is the first truly usable de-distilled checkpoint I've tried without major issues. I'm definitely excited for a potential 3.0 with more flexibility and speed. Results are already impressive and a working merge with a hyper LORA would really set this one apart. For now, I'm getting good results with 35/40 steps, but being able to drop down to 16/20 on a GGUF_Q4 version would no doubt make this checkpoint very popular with people on lower-end hardware.

Hi, thank you for your feedback. I am going to try to lower the number of steps needed. In fact, this version already has parts of the Hyper LoRA inside it; a normal de-distilled model is much slower. This is already a sort of Frankenstein checkpoint, but there is still room for improvement, of course. I have already published a Q4_1 GGUF version. The Q4_0 version didn't pass my tests. For its size, the Q4_1 is quite good.

Beautiful model and I like it a lot... but my God, it is so slow. On my 2070 (8GB) card it takes 2-3 minutes on average to generate one image, and sometimes 4-5 minutes. I hope you will be able to optimize it!

Not really possible. De-distilled models have that "faster" part removed. I think the reason they made distilled models was simply to make them run at a reasonable speed for the end user. It also let them control the quality of the output.

And ofc built in anti-NSFW protection, which ruins the day.

@Mescalamba Hi there the next version will be uncensored... I hope I can also make it a bit faster but don't expect miracles.

@Afroman4peace That comment about censorship wasn't directed at you; it's your model, do as you please. What I don't like about the original FLUX is that it has way too heavy censorship, which, even if I didn't care about NSFW stuff, isn't good for any model, visual or language.

Also, even though FLUX can make pretty pictures, it is nowhere near as good at following prompts as some say. I'm not saying one can't force it to follow the prompt really well, but I can do the same with SD1.5 too, so..

IMHO the only benefit of FLUX is that it delivers really good visual clarity. But I suspect the same could be done with lesser models too, if someone really wanted to.

@Mescalamba I know. I recently managed to make the next version more uncensored. I still have to think about which version I should publish. One version of it works way too well, and I really have to consider whether I can publish it here... I am always afraid of possible misuse of my checkpoints.

@Afroman4peace Your model is very good. Looking forward to the new version! And thank you for your hard work!

@Afroman4peace I'm not sure how to put it nicely, so I will be frank.

On Civitai, I often search for inspiration in pictures that others generate. I'm not saying I have vomited yet, but there were a few times that I actually felt sick.

So to cut it short, I don't think you can physically make anything worse than what's already here.

I'm fine with the depravity and sexual preferences of others, but trust me, nothing you make can be that bad.

I'm sure your models are well liked anyway, so it's up to you. Myself, I take any good NSFW FLUX model I can get, because while there are many NSFW options for FLUX, so far I have found exactly one that's good enough, and it has other technical limits. So the perverse, depraved world of Civitai is still waiting for its FLUX savior. Will it be you? Who knows. :D

Damn, this went under my radar. I downloaded the Q8 GGUF of 1.0 weeks ago and am only testing it now. I found a fantastic working workflow, and I see 2.x, which I haven't even tested yet! Wow. I hate being a fan of so many models, but this one is clearly also a winner!

A quick update: I am still working on the next version. The bad news is that it will take a bit longer. I have basically created an entire new model based on V2.1 just to increase the quality, with around 20K training on high settings. Here's the good news: I managed to create something awesome during this process. I would consider it a new version, but I like the artistic style of 2.0 and 2.1, and the new checkpoint looks too different. This new checkpoint can create ultra-sharp realistic images without upscaling and is less censored. So it's a fork of this series, which can only mean one thing: it's time for a new DEMONCORE. I will publish it soon. Stay tuned.

nice cannot wait!

@Zojix you don't have to wait long.. I am publishing it today

@Afroman4peace Nice!!! Thanks a lot. Quick question: do you plan to upload the diffusers files to HF so we can train LoRAs on it with the Ostris AI Toolkit? :)

I have just published it https://civitai.com/models/155977/demon-core-sfwandnsfw

It might sound stupid, but which one should I download for ComfyUI? My laptop has 8GB of VRAM and my desktop has 16GB. All the Unets and Clip L as well as the other models are too much to understand for a Flux noob. Can someone help me out?

Sure ;-) I will explain it. For Flux you normally need the UNET, which is the main model itself, and two clips: t5xxl_fp8_e4m3fn in this case, and the clip_l, which is a custom one I made. You have to put the Unet into the unet folder and the clips into the clip folder. The "All in One" model has everything combined in one file, which makes it easier to use, especially in Forge. For Comfy I have several workflows depending on which version you use. Oh, there is also a GGUF version. It works similarly to the Unet-only version; the difference is that it's quantized to save disk space. The downside is some loss of quality, depending on the quantization level. In my opinion, Q8_0 is more or less the same quality as FP8. Q4_1 is smaller but will suffer in quality; I only recommend it for systems with less RAM.

In summary: if you use Forge, go for the All in One (AIO) version. For the desktop with 16GB VRAM, I also recommend the FP8 version. I myself prefer the "all in one" version. If you want to save disk space and use other Flux models as well, you can use the FP8 Unet version or the Q8_0 one. It will not make a big difference.

For the laptop, you might also try the Q4_1 GGUF version.

Here you will find the different workflows for comfy https://civitai.com/articles/8419

@Afroman4peace OMG thx for the quick response, and detailed explanation! I will download them right now!!!!!!!!!!!!!

Another quick update.. I have added controlnet to the workflow for Colossus Flux 2.1.. https://civitai.com/articles/8419

Also I have published a new model based on Colossus Flux 2.1 https://civitai.com/models/155977/demon-core-sfwandnsfw

This model is not a replacement for this series, just something that came out of my training for the next version. Give it a try and tell me which checkpoint you prefer.

But is it better than Guardian?
