NOTE: This model has its own VAE baked in. For best results, make sure the selected VAE in AUTOMATIC1111 is set to "Automatic". If you've never changed the VAE settings, this is already the default.

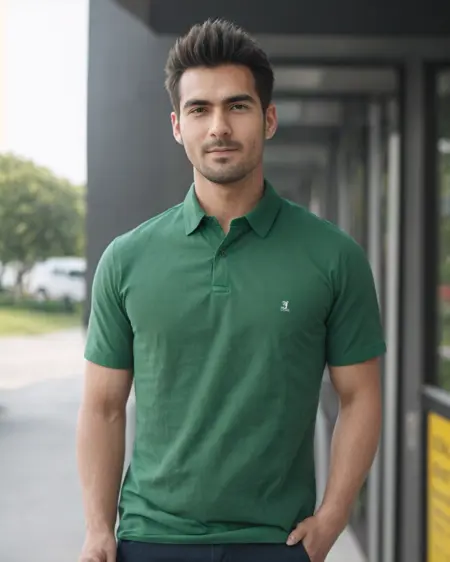

NextPhoto is the result of a whole lot of training, data curation, and block merging. The model is designed exclusively for photorealistic images, so it cannot generate non-photo images (even if prompted to do so). For more details about version 3.0, check out the "About this version" section.

All sample images were upscaled 2x with the ESRGAN_4x upscaling model at 0.45 denoising strength. I won't be uploading a 32-bit model: v3 was trained at 16-bit precision, so a 32-bit file would literally just be a waste of space.

Usage Guide

(Highly recommended) The negative prompt is quite important for photorealism, but you rarely need to change it to get great results. I'd recommend the following negative prompt as a base: (worst quality:0.8), cartoon, halftone print, burlap, (cinematic:1.2), (verybadimagenegative_v1.3:0.3), (surreal:0.8), (modernism:0.8), (art deco:0.8), (art nouveau:0.8)

This prompt uses the verybadimagenegative_v1.3 textual embedding, which you'll need to download separately.

Place the downloaded file in the "embeddings" folder of the SD WebUI root directory, then restart the WebUI.
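If you script your generations instead of typing into the WebUI, the base negative prompt can be kept as data and rendered with the WebUI's `(term:weight)` emphasis syntax. Here's a rough sketch; `format_term` and `build_negative_prompt` are hypothetical helper names, and the embedding term only does anything if the file is actually in the `embeddings` folder.

```python
# A minimal sketch: store the base negative prompt as (term, weight) pairs and
# render it with AUTOMATIC1111's "(term:weight)" emphasis syntax.
# A weight of None means the term is used as-is (default emphasis).
from typing import Optional, Sequence, Tuple

BASE_NEGATIVE: Sequence[Tuple[str, Optional[float]]] = [
    ("worst quality", 0.8),
    ("cartoon", None),
    ("halftone print", None),
    ("burlap", None),
    ("cinematic", 1.2),
    ("verybadimagenegative_v1.3", 0.3),  # textual embedding; file must be in embeddings/
    ("surreal", 0.8),
    ("modernism", 0.8),
    ("art deco", 0.8),
    ("art nouveau", 0.8),
]


def format_term(term: str, weight: Optional[float]) -> str:
    """Render one term, wrapping it as (term:weight) when a weight is given."""
    if weight is None:
        return term
    return f"({term}:{weight:g})"


def build_negative_prompt(terms: Sequence[Tuple[str, Optional[float]]]) -> str:
    """Join the rendered terms with ", " into a single prompt string."""
    return ", ".join(format_term(t, w) for t, w in terms)


if __name__ == "__main__":
    print(build_negative_prompt(BASE_NEGATIVE))
```

Keeping the weights as numbers makes it easy to nudge a single term (say, `cinematic`) without retyping the whole prompt.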

Positive Prompts: You don't need to overthink the positive prompt; the model works quite well with simple ones.

Examples:

A well-lit photograph of a woman at the train station

A perfect well-lit medium photograph of an old married couple sitting on their porch

A poorly lit photograph of a man walking on the trail at night

For more examples of positive prompts, look at the model's sample images.

Upscaling: This model will still generate photorealistic images without upscaling, but upscaling is strongly recommended for the best photorealism. Use the ESRGAN_4x upscaling model (not R-ESRGAN) in the hires fix section for decent results. Set the denoising strength anywhere from 0.3 to 0.5 and the upscale factor to 2. I normally use 0.5 or 0.45.
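If it helps to see the numbers, here's a rough sketch of those hires fix settings as data, plus the final size they produce. The field names (`enable_hr`, `hr_upscaler`, `hr_scale`, `denoising_strength`) are my assumption based on the AUTOMATIC1111 txt2img API, the helper names are hypothetical, and 512x768 is just an example base size.

```python
# A minimal sketch of the recommended hires fix settings and the resulting size.
# Field names are assumed from the AUTOMATIC1111 txt2img API; check your version.
from typing import Dict, Tuple, Union


def hires_settings(denoising_strength: float = 0.45) -> Dict[str, Union[bool, str, float]]:
    """ESRGAN_4x at 2x, rejecting strengths outside the suggested 0.3-0.5 range."""
    if not 0.3 <= denoising_strength <= 0.5:
        raise ValueError("suggested denoising strength is 0.3 to 0.5")
    return {
        "enable_hr": True,
        "hr_upscaler": "ESRGAN_4x",  # not R-ESRGAN
        "hr_scale": 2,
        "denoising_strength": denoising_strength,
    }


def upscaled_size(width: int, height: int, scale: float) -> Tuple[int, int]:
    """Final image size after the hires pass: base size times the upscale factor."""
    return int(width * scale), int(height * scale)
```

For a 512x768 base image, a 2x hires pass gives a 1024x1536 final image.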

Sampler: I use DPM++ 2M Karras and generally don't stray from it. Other samplers can still produce good results, but DPM++ 2M Karras has been the most consistent with this model in my experience.
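If you drive the WebUI through its API instead of the browser, the sampler and VAE choices map onto request fields roughly like this. It's a sketch under assumptions: it expects a local WebUI launched with `--api`, the `/sdapi/v1/txt2img` endpoint and field names are as I understand the AUTOMATIC1111 API, and `build_payload`/`post_txt2img` are hypothetical helpers. In newer WebUI releases the scheduler is a separate setting, so you may need `"sampler_name": "DPM++ 2M"` plus `"scheduler": "Karras"` instead.

```python
# A minimal sketch of a txt2img request to a local AUTOMATIC1111 WebUI (--api).
# Endpoint and field names are assumptions; verify them at /docs on your install.
import json
import urllib.request
from typing import Any, Dict

API_URL = "http://127.0.0.1:7860/sdapi/v1/txt2img"  # default local address (assumption)


def build_payload(prompt: str, negative_prompt: str, cfg_scale: float = 7.0) -> Dict[str, Any]:
    """Request body using DPM++ 2M Karras and the baked-in VAE ("Automatic")."""
    return {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "sampler_name": "DPM++ 2M Karras",
        "cfg_scale": cfg_scale,
        # Keep the VAE on "Automatic" so the model's baked-in VAE is used.
        "override_settings": {"sd_vae": "Automatic"},
        # Hires fix fields can be added to this same dictionary.
    }


def post_txt2img(payload: Dict[str, Any], url: str = API_URL) -> Dict[str, Any]:
    """POST the payload as JSON and return the decoded response (images are base64)."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```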

For further improvements:

Reduce your CFG scale: The default classifier-free guidance (CFG) scale of 7 works well, but it can occasionally be too high. Lower the CFG scale until you like the results. I generally bottom out at 4.0, because below that the negative prompt starts getting ignored. Raising the CFG scale past 7 or 8 makes the model follow the prompt more closely, but also produces more "dramatized" photos (not in a good way), so balance as needed. High CFG scales can work well in specific situations, but lower CFG scales give great results quite consistently.
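Part of why a very low CFG scale weakens the negative prompt falls out of how classifier-free guidance combines its two noise predictions at each step: eps = eps_neg + scale * (eps_pos - eps_neg), where the WebUI uses the negative-prompt prediction in place of the unconditional one. At scale 1 the negative prediction cancels out entirely, and the pull away from it grows with scale - 1. Here's a toy sketch with made-up two-element vectors standing in for real noise tensors; `cfg_combine` is a hypothetical name.

```python
# A minimal sketch of the classifier-free guidance combination step, using
# plain lists as stand-ins for the model's noise predictions.
from typing import List, Sequence


def cfg_combine(eps_positive: Sequence[float], eps_negative: Sequence[float], scale: float) -> List[float]:
    """Element-wise eps_neg + scale * (eps_pos - eps_neg)."""
    return [n + scale * (p - n) for p, n in zip(eps_positive, eps_negative)]
```

With `eps_positive = [1.0, 0.0]` and `eps_negative = [0.0, 1.0]`, scale 1 returns `[1.0, 0.0]` (the negative prompt has no effect), while scale 7 returns `[7.0, -6.0]`, pushed hard toward the prompt and away from the negative.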

Avoid excess LoRA and Textual Inversion use: Because v2 and v3 of this model are custom trained rather than purely block merged, LoRAs and Textual Inversions may not work as well as they do with other models. In my experience you can still get good results with them, but I'd recommend treading lightly and taking an additive approach: add LoRAs or inversions selectively, only when needed.
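To make the additive approach concrete, here's a rough sketch that starts from a plain prompt and appends one LoRA at a time using the WebUI's `<lora:name:weight>` prompt syntax, so each addition can be judged before the next goes in. The helper names, the LoRA name, and the weight in the test are hypothetical placeholders, not recommendations. Textual Inversions work the same way, except you add the embedding's name as a plain token.

```python
# A minimal sketch of adding LoRAs one at a time. LoRA names and weights are
# hypothetical; pick your own and check each result before adding the next.
from typing import List, Sequence, Tuple


def add_lora(prompt: str, name: str, weight: float) -> str:
    """Append an AUTOMATIC1111-style <lora:name:weight> tag to the prompt."""
    return f"{prompt}, <lora:{name}:{weight:g}>"


def additive_prompts(base: str, loras: Sequence[Tuple[str, float]]) -> List[str]:
    """Return the base prompt followed by versions that each add one more LoRA."""
    prompts = [base]
    for name, weight in loras:
        prompts.append(add_lora(prompts[-1], name, weight))
    return prompts
```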

Description

Inpainting version of v3.0


Comments (19)

I honestly can't see a difference between this and SDXL, lol.

Maybe the only difference is better hands with SDXL.

It's amazing. Is it possible to train it with everydream2trainer? I want to include myself and my friends, but I'm not sure it's worth using slightly different techniques from yours.

I actually used everydream2trainer to train the model, so it should work fine.

Amazing model, can't wait to see what it will do with SDXL

Love the v3 model. I don't know how you managed to make it this good.

One of the greatest realistic models! But something seems wrong when using it for training: I can't convert the model to diffusers format, while conversion works fine with other models. Could you upload a diffusers version to Hugging Face, please? (V3)

I can see a lot of work has gone into this but still, no model has recreated the magic of Version 1 yet. Version 1 was an anomaly of almost perfection. Especially with the add detail Lora, it blew everything else on this site away.

Yeah, version 1 was the best.

Love this model. Ran it on rendernet.ai for free. Would like more models focused on stock photos.

Amazed with the results. A few years back, I would have thought this was impossible. Great to see technology moving so fast.

This would be my favorite model, but when there are at least two people in the photo, the leg positioning is all wrong (for example, feet are missing or placed elsewhere), and the faces lack detail in full-body shots.

Amazing for skin texture and all-around use.

Wow, OMG, this looks incredible!

Would love to see an XL version

I've never written a review for a model before, but this is hands down the best model I've used. Thank you!

Outstanding! Fantastic levels of natural realism. The female face model is also really unique compared to most other checkpoints and is exquisitely beautiful.

V2 is still the best to me.

So I thought there were no checkpoints left that came close to CyberRealistic 4.2 in terms of flexibility and realism combined.

Instead, there's this NextPhoto v1, more than a year old, and man, it's a miracle.

I only used my usual negative prompt, which is just "anime", and it works extremely well with LoRAs and embeddings.

This checkpoint creates even cuter, yet still realistic, girls than CyberRealistic.

What a surprise!!

I've tried at least 100 checkpoints to date but never felt like replacing Cyber. But this V1 is phenomenal.

A little bit negative: V2 is absolute dogshit, and V3 is like any other realistic checkpoint.

Thanks to the author. You are awesome.

Tried at least 20 checkpoints locally and settled on this one. I tried others in between but keep coming back to this. Probably the best 1.5 model out there. Good stuff, brother.