Now available: SD1.5 version

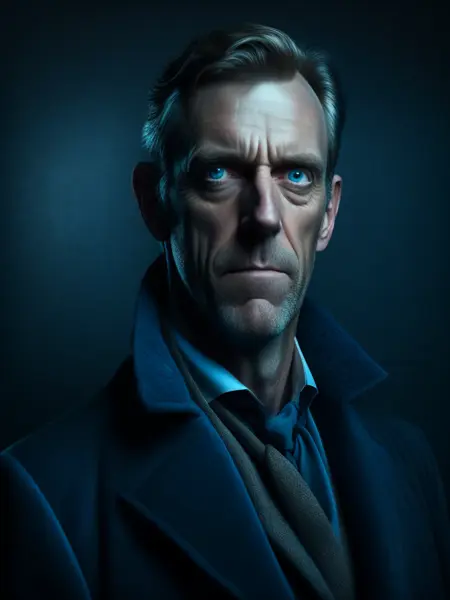

Tired of SD producing overblown, bright images?

This LORA model is trained using the technique described in https://www.crosslabs.org//blog/diffusion-with-offset-noise

Use it to produce beautiful, high-contrast, low-key images that SD just wasn't capable of creating until now.

Sample images were generated using Illuminati Diffusion v1.0

The LORA was trained using Kohya's LORA Dreambooth script, on SD2.1-768 and SD1.5 with a dataset of 44 low-key, high-quality, high-contrast photographs.

Seeing is believing: On the left is an image without the LORA, on the right is with the LORA.

Description

FAQ

Comments (70)

Wow! This looks great! I kept running into this issue, and I always though it was just me not knowing how to prompt for this style properly.

Are you planning to train a SD 1.5 version on your dataset?

Yep, planning to as soon as I get more Colab time.

looks very cool. Any chance of one for 1.5?

Yep. Working on it now.

@theovercomer8 Any update? Currently errors out on older models...

@MattEggo There's a 1.5 version available. Haven't heard of it erroring on any specific models. You might try the SD Additional Networks extension if you're not using it already.

unable to replicate with automatic1111 and sd2.1 768

The sample images are using Illuminati Diffusion v1

Also, are you using Kohya's Additional Networks extension?

Yes, I think it has something to do with nfixer, gotta find out what that is.

@iamsomeonesomething nfixer is a negative embed included with Illuminati Diffusion

No idea what I did wrong :(

Failed to match keys when loading Lora /home/dnak/stable-diffusion-webui/models/Lora/theovercomer8sContrastFix_sd21768.safetensors: ['lora_te_text_model_encoder_layers_12_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_12_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_12_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_12_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_12_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_12_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_12_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_12_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_12_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_12_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_12_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_12_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_12_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_12_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_12_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_12_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_12_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_12_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_13_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_13_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_13_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_13_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_13_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_13_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_13_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_13_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_13_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_13_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_13_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_13_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_13_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_13_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_13_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_13_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_13_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_13_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_14_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_14_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_14_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_14_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_14_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_14_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_14_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_14_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_14_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_14_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_14_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_14_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_14_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_14_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_14_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_14_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_14_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_14_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_15_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_15_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_15_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_15_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_15_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_15_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_15_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_15_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_15_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_15_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_15_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_15_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_15_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_15_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_15_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_15_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_15_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_15_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_16_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_16_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_16_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_16_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_16_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_16_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_16_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_16_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_16_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_16_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_16_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_16_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_16_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_16_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_16_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_16_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_16_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_16_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_17_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_17_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_17_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_17_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_17_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_17_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_17_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_17_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_17_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_17_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_17_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_17_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_17_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_17_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_17_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_17_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_17_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_17_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_18_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_18_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_18_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_18_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_18_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_18_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_18_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_18_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_18_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_18_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_18_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_18_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_18_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_18_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_18_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_18_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_18_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_18_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_19_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_19_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_19_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_19_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_19_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_19_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_19_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_19_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_19_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_19_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_19_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_19_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_19_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_19_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_19_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_19_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_19_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_19_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_20_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_20_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_20_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_20_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_20_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_20_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_20_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_20_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_20_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_20_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_20_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_20_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_20_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_20_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_20_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_20_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_20_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_20_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_21_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_21_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_21_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_21_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_21_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_21_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_21_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_21_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_21_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_21_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_21_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_21_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_21_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_21_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_21_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_21_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_21_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_21_self_attn_v_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_22_mlp_fc1.alpha', 'lora_te_text_model_encoder_layers_22_mlp_fc1.lora_down.weight', 'lora_te_text_model_encoder_layers_22_mlp_fc1.lora_up.weight', 'lora_te_text_model_encoder_layers_22_mlp_fc2.alpha', 'lora_te_text_model_encoder_layers_22_mlp_fc2.lora_down.weight', 'lora_te_text_model_encoder_layers_22_mlp_fc2.lora_up.weight', 'lora_te_text_model_encoder_layers_22_self_attn_k_proj.alpha', 'lora_te_text_model_encoder_layers_22_self_attn_k_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_22_self_attn_k_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_22_self_attn_out_proj.alpha', 'lora_te_text_model_encoder_layers_22_self_attn_out_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_22_self_attn_out_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_22_self_attn_q_proj.alpha', 'lora_te_text_model_encoder_layers_22_self_attn_q_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_22_self_attn_q_proj.lora_up.weight', 'lora_te_text_model_encoder_layers_22_self_attn_v_proj.alpha', 'lora_te_text_model_encoder_layers_22_self_attn_v_proj.lora_down.weight', 'lora_te_text_model_encoder_layers_22_self_attn_v_proj.lora_up.weight']

Error completing request

Arguments: ('task(ozjc0pqab9p9z7k)', 'hugh laurie as delirium from sandman, art nouveau style, Alphonse Mucha, retro, flowing hair, flowing dress, line art, over the shoulder, nature, purple hair, petite, snthwve style nvinkpunk drunken, (hallucinating colorful soap bubbles), by jeremy mann, by sandra chevrier, by dave mckean and richard avedon and maciej kuciara, punk rock, tank girl, high detailed, 8k <lora:theovercomer8sContrastFix_sd21768:1>', 'cartoon, 3d, ((disfigured)), ((bad art)), ((deformed)),((extra limbs)),((close up)),((b&w)), wierd colors, blurry, (((duplicate))), ((morbid)), ((mutilated)), [out of frame], extra fingers, mutated hands, ((poorly drawn hands)), ((poorly drawn face)), (((mutation))), (((deformed))), ((ugly)), blurry, ((bad anatomy)), (((bad proportions))), ((extra limbs)), cloned face, (((disfigured))), out of frame, ugly, extra limbs, (bad anatomy), gross proportions, (malformed limbs), ((missing arms)), ((missing legs)), (((extra arms))), (((extra legs))), mutated hands, (fused fingers), (too many fingers), (((long neck))), Photoshop, video game, ugly, tiling, poorly drawn hands, poorly drawn feet, poorly drawn face, out of frame, mutation, mutated, extra limbs, extra legs, extra arms, disfigured, deformed, cross-eye, body out of frame, blurry, bad art, bad anatomy, 3d render', [], 25, 1, True, False, 1, 1, 7, 4113613840.0, -1.0, 0, 0, 0, False, 1024, 1024, True, 0.7, 2, 'Latent', 0, 1536, 1536, [], 0, False, False, 'positive', 'comma', 0, False, False, '', 1, '', 0, '', 0, '', True, False, False, False, 0) {}

Traceback (most recent call last):

File "/home/dnak/stable-diffusion-webui/modules/call_queue.py", line 56, in f

res = list(func(*args, **kwargs))

File "/home/dnak/stable-diffusion-webui/modules/call_queue.py", line 37, in f

res = func(*args, **kwargs)

File "/home/dnak/stable-diffusion-webui/modules/txt2img.py", line 56, in txt2img

processed = process_images(p)

File "/home/dnak/stable-diffusion-webui/modules/processing.py", line 486, in process_images

res = process_images_inner(p)

File "/home/dnak/stable-diffusion-webui/modules/processing.py", line 617, in process_images_inner

uc = get_conds_with_caching(prompt_parser.get_learned_conditioning, negative_prompts, p.steps, cached_uc)

File "/home/dnak/stable-diffusion-webui/modules/processing.py", line 572, in get_conds_with_caching

cache[1] = function(shared.sd_model, required_prompts, steps)

File "/home/dnak/stable-diffusion-webui/modules/prompt_parser.py", line 140, in get_learned_conditioning

conds = model.get_learned_conditioning(texts)

File "/home/dnak/stable-diffusion-webui/repositories/stable-diffusion-stability-ai/ldm/models/diffusion/ddpm.py", line 669, in get_learned_conditioning

c = self.cond_stage_model(c)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/modules/sd_hijack_clip.py", line 229, in forward

z = self.process_tokens(tokens, multipliers)

File "/home/dnak/stable-diffusion-webui/modules/sd_hijack_clip.py", line 254, in process_tokens

z = self.encode_with_transformers(tokens)

File "/home/dnak/stable-diffusion-webui/modules/sd_hijack_clip.py", line 302, in encode_with_transformers

outputs = self.wrapped.transformer(input_ids=tokens, output_hidden_states=-opts.CLIP_stop_at_last_layers)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1212, in callimpl

result = forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/transformers/models/clip/modeling_clip.py", line 811, in forward

return self.text_model(

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/transformers/models/clip/modeling_clip.py", line 721, in forward

encoder_outputs = self.encoder(

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/transformers/models/clip/modeling_clip.py", line 650, in forward

layer_outputs = encoder_layer(

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/transformers/models/clip/modeling_clip.py", line 379, in forward

hidden_states, attn_weights = self.self_attn(

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/transformers/models/clip/modeling_clip.py", line 268, in forward

query_states = self.q_proj(hidden_states) * self.scale

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/extensions-builtin/Lora/lora.py", line 178, in lora_Linear_forward

return lora_forward(self, input, torch.nn.Linear_forward_before_lora(self, input))

File "/home/dnak/stable-diffusion-webui/extensions-builtin/Lora/lora.py", line 172, in lora_forward

res = res + module.up(module.down(input)) lora.multiplier (module.alpha / module.up.weight.shape[1] if module.alpha else 1.0)

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "/home/dnak/stable-diffusion-webui/extensions-builtin/Lora/lora.py", line 178, in lora_Linear_forward

return lora_forward(self, input, torch.nn.Linear_forward_before_lora(self, input))

File "/home/dnak/stable-diffusion-webui/venv/lib/python3.10/site-packages/torch/nn/modules/linear.py", line 114, in forward

return F.linear(input, self.weight, self.bias)

RuntimeError: mat1 and mat2 shapes cannot be multiplied (77x768 and 1024x100)

did you download the right version? There's a version for sd1.5 and sd2.x

Also make sure you're using Kohya's additional networks extension

I just recognize that last runtime error being what you get for running a 2.x lora in a 1.5 model lol

@theovercomer8 can u write here the link for download it please? thx

@vortex71 scroll down a bit from the top to the versions section of the page

@theovercomer8 I apparently was running the wrong version, 1.5 fixed it!

Thanks

@sliptrick thanks!

This looks great and thanks for sharing, but in the description it says an extension is needed in a1111 for use of this Lora. But doesn't a1111 support Loras by default now?

It seems you can use it without the extension now

Sorry, in my local automatic 1111, where do I have to place it ?

models/Lora

@theovercomer8 all right thank you !

can i ask how many dims was this LORA ? need to know that to help with merging with other LORAs

100

@theovercomer8 thanks

Someone can help me add this lora to google notebook stable difusion EternalBR#6572, thanks

Getting a "mat1 and mat2 shapes cannot be multiplied (77x768 and 1024x100)" when using this. Any ideas?

Me too ! same Error ... How can we use it ??

same error here.

@ghost_ @rjames123 ok i just solved it

.redownload it again and dont rename it or anything

.i try to rename it hence the error..i just redownload it without renaming and i dont have error anymore.

@kimintkroy416 Like the first time I tried, I didn't change the name. And when I look at your message and try again, I still get the error.

Have y'all tried using SD Additional Networks extension?

it's because you're using the wrong version, gotta us 1.5 with 1.5 models, and 2.x with 2.x

@snipahpheer god im an idiot thanks :D

@snipahpheer Oh ! Ver. 1.5 is working !! Thank you

Hi, I was using illuminati v1.1 and A1111. When I tried, this LoRA 2.1 768, the image gets some artifacts/blurs in the backgrounds (e.g. flowers, stone). Any thoughts?

Looks like just depth of field to me?

@theovercomer8 If you look closely at the ground/rocks, there are some kind of grainy artifacts. I kinda get these artifacts consistently

@theovercomer8 My guess is that using both LoRA and the negative embeddings from illuminati v1.1 (e.g. nrealfixer, nfixer, nartfixer) cause this issue. Not sure how to fix this though...

I get the error "AttributeError: 'Options' object has no attribute 'lora_apply_to_outputs'" using the 1.5 version with AbyssOrangeMix2 (which is a 1.5 model). Any idea what's going wrong?

Update, I tried 1.5 on a variety of models, none of them worked, all gave the same error above.

Update 2: I also tried the 2.1 version on IlluminatiDiffusion v 1.1 and get the same exact error. My Python version is the correct version: python: 3.10.6 • torch: 1.13.1+cu117 • xformers: N/A • gradio: 3.16.2 • commit: 48a15821 • checkpoint: cae1bee30e

Am I missing something?

Full error message if it helps:

Traceback (most recent call last):

File "E:\Automatic1111\webui\modules\call_queue.py", line 56, in f

res = list(func(*args, **kwargs))

File "E:\Automatic1111\webui\modules\call_queue.py", line 37, in f

res = func(*args, **kwargs)

File "E:\Automatic1111\webui\modules\txt2img.py", line 52, in txt2img

processed = process_images(p)

File "E:\Automatic1111\webui\modules\processing.py", line 476, in process_images

res = process_images_inner(p)

File "E:\Automatic1111\webui\modules\processing.py", line 614, in process_images_inner

samples_ddim = p.sample(conditioning=c, unconditional_conditioning=uc, seeds=seeds, subseeds=subseeds, subseed_strength=p.subseed_strength, prompts=prompts)

File "E:\Automatic1111\webui\modules\processing.py", line 809, in sample

samples = self.sampler.sample(self, x, conditioning, unconditional_conditioning, image_conditioning=self.txt2img_image_conditioning(x))

File "E:\Automatic1111\webui\modules\sd_samplers.py", line 544, in sample

samples = self.launch_sampling(steps, lambda: self.func(self.model_wrap_cfg, x, extra_args={

File "E:\Automatic1111\webui\modules\sd_samplers.py", line 447, in launch_sampling

return func()

File "E:\Automatic1111\webui\modules\sd_samplers.py", line 544, in <lambda>

samples = self.launch_sampling(steps, lambda: self.func(self.model_wrap_cfg, x, extra_args={

File "E:\Automatic1111\system\python\lib\site-packages\torch\autograd\grad_mode.py", line 27, in decorate_context

return func(*args, **kwargs)

File "E:\Automatic1111\webui\repositories\k-diffusion\k_diffusion\sampling.py", line 145, in sample_euler_ancestral

denoised = model(x, sigmas[i] s_in, *extra_args)

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\modules\sd_samplers.py", line 350, in forward

x_out[a:b] = self.inner_model(x_in[a:b], sigma_in[a:b], cond={"c_crossattn": [tensor[a:b]], "c_concat": [image_cond_in[a:b]]})

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\repositories\k-diffusion\k_diffusion\external.py", line 167, in forward

return self.get_v(input c_in, self.sigma_to_t(sigma), *kwargs) c_out + input c_skip

File "E:\Automatic1111\webui\repositories\k-diffusion\k_diffusion\external.py", line 177, in get_v

return self.inner_model.apply_model(x, t, cond)

File "E:\Automatic1111\webui\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 858, in apply_model

x_recon = self.model(x_noisy, t, **cond)

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 1329, in forward

out = self.diffusion_model(x, t, context=cc)

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\repositories\stable-diffusion-stability-ai\ldm\modules\diffusionmodules\openaimodel.py", line 776, in forward

h = module(h, emb, context)

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\repositories\stable-diffusion-stability-ai\ldm\modules\diffusionmodules\openaimodel.py", line 84, in forward

x = layer(x, context)

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\repositories\stable-diffusion-stability-ai\ldm\modules\attention.py", line 322, in forward

x = self.proj_in(x)

File "E:\Automatic1111\system\python\lib\site-packages\torch\nn\modules\module.py", line 1194, in callimpl

return forward_call(*input, **kwargs)

File "E:\Automatic1111\webui\extensions-builtin\Lora\lora.py", line 175, in lora_Linear_forward

return lora_forward(self, input, torch.nn.Linear_forward_before_lora(self, input))

File "E:\Automatic1111\webui\extensions\a1111-sd-webui-locon\scripts\main.py", line 391, in lora_forward

if shared.opts.lora_apply_to_outputs and res.shape == input.shape:

File "E:\Automatic1111\webui\modules\shared.py", line 530, in getattr

return super(Options, self).__getattribute__(item)

AttributeError: 'Options' object has no attribute 'lora_apply_to_outputs'

So I ran the update.bat and run.bat again in my webui folder and then it started working, but started generating a bunch of NaN results. I disabled the a1111-sd-webui-locon and restarted and that seemed to fix that. So now it works, sort of. Instead of getting beautiful high contrast images, they're basically the same except now my images are all abstract horrors, mangled beyond belief like some fever dream. I have no idea what this lora is doing to my images, but it's not good. What weight should the lora have? If I turn it down to 0.7 it doesn't seem to do anything. If I crank it up to 1, I get abstract images.

Final update: did get this working, but it works better in some models than other. AOM didn't seem to work, but others it did what it was supposed to. Thanks!

Can you clarify how you got it working? It outright crashes me. "Python unexpectedly quit". I really want to see and believe, alas... No dice yet.

@BooBerry "So I ran the update.bat and run.bat again in my webui folder and then it started working, but started generating a bunch of NaN results. I disabled the a1111-sd-webui-locon and restarted and that seemed to fix that." That got rid of the errors for me, but it still wasn't doing anything on some models, and looked terrible on others. But on some models it works as advertised.

I have encountered the same problem. Does anyone know what's going on?

i have the same problem on Vlad automatic, same as stable diffusion 2.1, don't know what to do.. is it cause by an update or something ?

@VOLTA007 Just to update my experience - it works very well on some models. On some other models it conflicts in a way that either "overbakes" the image (distortion), gives a NaN error, or simple has no effect whatsoever. This seems to be the nature of so many undocumented variations of models/training being shared around. Some things just don't work together. Also, extensions can really conflict with eachother, and removing/disabling all the ones you aren't currently using seems to be the best way to avoid issues.

@BooBerry i think we need an update .... i just removed it cause it seems to inject so many problems on my installation :(

It doesn't work now

You're probably using the wrong version, there's two on this page, one for SD 1.5, the other for SD 2.1

Not working! <lora:theovercomer8sContrastFix_sd21768:1> for SD 2 models - errors (((((((((

nsanely detailed wide angle architecture photography, cozy contemporary living room, intersting lights and shadows, award-winning contemporary interior design, ARCHITECTURE,FURNITURE,INDOORS,BUILDING,LIVING ROOM,ROOM,RUG,PLANT,HOME DECOR,COUCH,CHAIR,INTERIOR DESIGN, <lora:theovercomer8sContrastFix_sd21768:0.6>

Negative prompt: nartfixer, nfixer, nrealfixer,

Steps: 30, Sampler: Euler a, CFG scale: 7.5, Seed: 4042022690, Size: 1024x768, Model hash: cae1bee30e, Model: illuminatiDiffusionV1_v11, Denoising strength: 0.45, ENSD: 31337, Hires upscale: 2, Hires steps: 10, Hires upscaler: R-ESRGAN 2x+

Used embeddings: nartfixer [bd80], nfixer [7ade], nrealfixer [ff26]

Time taken: 14.56s

Torch active/reserved: 9080/16008 MiB, Sys VRAM: 19208/24564 MiB (78.2%)

Getting errors too when using 2.1 768 models (illuminati, and rMadv21768

I have encountered the same problem. Does anyone know what's going on?

For 2.1 Loras, need to use the Additional Networks extension instead of bringing it into the prompt

Why it doesn't work, any ideas? Does anyone know of similar ones that work?

It does work if you actually use the correct version for your SD version lmao

I am receiving this error Couldn't find Lora with name theovercomer8sContrastFix_sd15

you need to put the lora in the lora directory and reference the it by name in the prompt. For example, you probably want to use <lora:to8contrast-1-5:1> in your prompt

Am getting:

RuntimeError: The size of tensor a (768) must match the size of tensor b (1024) at non-singleton dimension 1

When trying to use v2.1

The version numbers of the Lora match directly with SD version numbers, 2.1 ONLY works with SD 2.1

Before you post here claiming this doesn't work, make sure you actually downloaded the correct version... there are TWO, the first (the default for this page) is for SD 2.1 768, the other, if you switch the tab at the top left, is for SD 1.5.

Details

Available On (1 platform)

Same model published on other platforms. May have additional downloads or version variants.