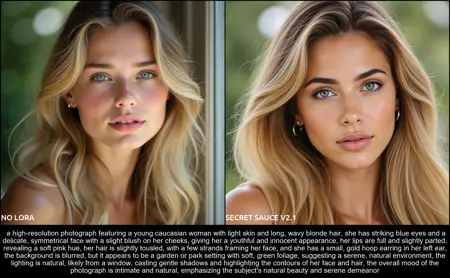

The Secret Sauce, trained on ≈5600 images

- This model's dimension is large, so combining it with other LoRA models may not be beneficial.

Description

Resumed training for 35,000 more steps.

Training has reached the point where the Adafactor learning rate drops too low, so continuing would be wasted computation. This is the final iteration of this model.
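For context on why the learning rate shrinks: when Adafactor runs without an externally supplied learning rate, the original paper defines a relative step size of min(10^-2, 1/√t), which decays once the step count t passes 10,000. The sketch below assumes this run used that mode, which the description does not confirm. The function name is illustrative, and real implementations additionally scale this value by the RMS of each parameter.

```python
import math

def adafactor_relative_step(step: int) -> float:
    """Relative step size min(1e-2, 1/sqrt(step)) from the Adafactor paper.

    Assumption: the optimizer is in relative-step mode (no fixed learning rate).
    """
    if step < 1:
        raise ValueError("step must be >= 1")
    return min(1e-2, 1.0 / math.sqrt(step))

# Print the schedule at a few step counts to show how it decays.
for step in (1_000, 10_000, 100_000, 1_000_000):
    print(f"step {step:>9,}: relative step = {adafactor_relative_step(step):.6f}")
```

Under this schedule each additional block of steps moves the weights less than the one before, which is consistent with the decision to stop here.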


Comments (10)

Thank you very much for your great work! Did you write the prompts manually (they are very detailed) or use Joy Caption? At least the last sentences of the prompt, with "expression like", sound like Joy Caption :)

Hi, thank you. I'm using llava-onevision-qwen2-7b-ov with LLaVA-OneVision Run, GPT3.5 from the API, and Florence-2-base-PromptGen-v1.5 from the MiaoshouAI node.

@pgc Thank you for a helpful answer! I will try to use it.

@pgc I don't exactly understand. Did you generate the images with Flux and then run them through that LLaVA API to describe the image? Also, I'm not sure how Florence fits in, since to my knowledge it's also used to describe images.

@Melty1989 Each of these tools serves a different purpose, so I don't use them simultaneously. I typically choose Florence2 (the COG 2.2 fine-tune) for most of my prompts, while LLaVA OneVision is my go-to for image descriptions: it's slower, but it accepts instructions. For generating random prompts from scratch without image input, the GPT API is fast and cheap.

@pgc Okay, that kind of went over my head, but let me see if I understood right:

1) You use Florence to generate a prompt based on an input image. So you don't just describe the image, but you can tell the model to rewrite it as a Hunyuan-friendly prompt? Also, by COG fine-tune I assume you're using Cog-Florence 2.2 large and loading it into the Miaoshou tagger?

2) LLaVA-OneVision Run is again fed an image to describe, except this one is just used to describe the image and not to reword it as a prompt?

3) For random text-to-image prompts, you're paying for GPT 3.5 to do that. Is it censored?

@Melty1989 Not exactly right ;) Florence2 (Florence2 Run by kijai, using the COG 2.2 model) can describe an image just like LLaVA OneVision, but with LLaVA you can ask it to focus on certain things, like "Describe this video... Focus on the motion etc...", even with an image input. This fills in the gaps by producing more than a simple description. The GPT 3.5 API is censored, yes, but you can use a local uncensored LLM to generate random prompts in ComfyUI. That works fine, just a bit slower of course.

I used LLaVA with this type of instruction to insert motion into an image description on most of the Hunyuan videos I posted.

@pgc Ok, thanks for clarifying. I was looking at this workflow from you (https://civitai.com/models/1014537/hunyuanvideo-kijaiwrap), and even the T2V workflow relies on an input image. So I guess it's not really T2V but rather image-to-video?

@Melty1989 It's more like a T2V workflow than I2V, since the conditioning is text-based: even if the text is generated from an image, it's still text ;) HunyuanVideo doesn't support I2V yet, but some workarounds are possible, like the IP-Adapter conditioning from the Kijai Wrapper, or my approach: use LTX to generate a video from an image, then run Hunyuan V2V on it. I prefer this option at the moment.
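The distinction drawn in this reply, that the video model is conditioned on text even when the text comes from an image, can be sketched roughly as follows. Every name here is hypothetical: the two callables stand in for whatever captioner and text-to-video nodes a workflow actually uses, and the stubs exist only so the sketch runs without any model installed.

```python
from typing import Callable

def text_conditioned_video(
    image_path: str,
    describe_image: Callable[[str, str], str],
    generate_video: Callable[[str], str],
    instruction: str,
) -> str:
    """Caption an image, then generate a video from the caption alone."""
    # Step 1: the image is seen only by the captioner.
    prompt = describe_image(image_path, instruction)
    # Step 2: the video model receives the text prompt and nothing else.
    return generate_video(prompt)

# Hypothetical stand-ins for a captioning model and a text-to-video model.
def fake_captioner(image_path: str, instruction: str) -> str:
    return f"caption of {image_path} ({instruction})"

def fake_t2v(prompt: str) -> str:
    return f"video from prompt: {prompt}"

result = text_conditioned_video(
    "input.png", fake_captioner, fake_t2v, "focus on the motion"
)
print(result)
```

Because `generate_video` never sees the image, the output can only follow what the caption conveys, which is why this counts as T2V rather than I2V.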
