Please read the model description carefully before downloading.

Models in this series, and models derived from them, may not be uploaded to LiblibAI or ShakkerAI.

Note:

👇 The "Anything" model below uses the name without authorization and was not made by me. Please do not pay for it.

—————————————————

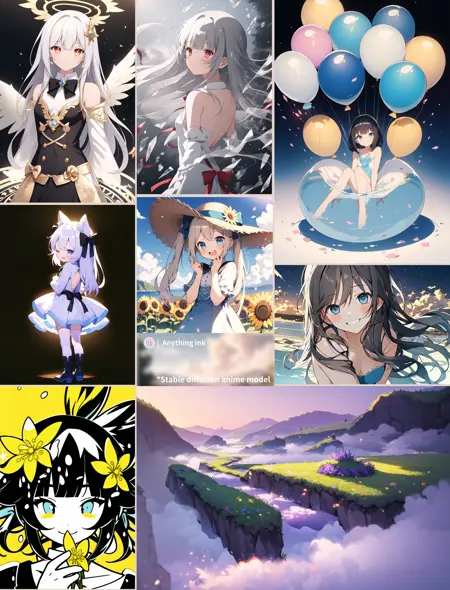

AI art should look like AI, not like human work.

—————————————————

Anything-XL

AnythingXL β4 is the same model as the AnythingXL listed here. If you already downloaded the earlier release, there is no need to download it again.

AnythingXL beta4 is another model made at the encouragement of friends in my group. It builds on how far SDXL models have come, with trained models getting better and better. The embedding names in the negative prompts of the showcase images were only copied over; they actually have no effect. It is called beta4 because the fourth test version was the only one I could stand to look at. Model merging is a dead end: it makes a complete mess of art-style diversity and of the accuracy of some prompt words.

Formula:

aingdiffusionXL_V0.6 x 0.144375

animagineXLV3 x 0.144375

cutecore_xl x 0.12375

kohakuXLDelta_rev1 x 0.1375

BAXLBArtstyleXLv2 x 0.3375

ponyV6 x 0.1125

The order of the models only reflects the merge order and says nothing about their quality.
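As a rough illustration of what the recipe means numerically, the sketch below treats each listed coefficient as the final weight of one model in a single weighted average of parameters (the six weights do sum to 1.0). This is an assumption: the Webui-Supermerge plugin may have applied them as a sequence of pairwise merges in the listed order, possibly with per-block settings, and real checkpoints hold tensors rather than Python lists. `RECIPE` and `weighted_merge` are hypothetical names for this example.

```python
# Sketch only: assumes the recipe coefficients are the final per-model weights
# of one weighted average. The real plugin workflow may differ.
RECIPE = {
    "aingdiffusionXL_V0.6": 0.144375,
    "animagineXLV3": 0.144375,
    "cutecore_xl": 0.12375,
    "kohakuXLDelta_rev1": 0.1375,
    "BAXLBArtstyleXLv2": 0.3375,
    "ponyV6": 0.1125,
}


def weighted_merge(state_dicts, weights, tol=1e-9):
    """Return sum_i w_i * theta_i for every parameter shared by all models.

    state_dicts: model name -> {parameter name -> list of floats}
    weights:     model name -> merge coefficient (must sum to 1.0)
    """
    total = sum(weights.values())
    if abs(total - 1.0) > tol:
        raise ValueError(f"weights sum to {total}, expected 1.0")

    names = list(weights)
    # Only merge parameters that every model actually has.
    shared = set(state_dicts[names[0]])
    for name in names[1:]:
        shared &= set(state_dicts[name])

    merged = {}
    for key in sorted(shared):
        length = len(state_dicts[names[0]][key])
        # Element-wise weighted sum across all models.
        merged[key] = [
            sum(weights[n] * state_dicts[n][key][i] for n in names)
            for i in range(length)
        ]
    return merged
```

With real checkpoints the same per-key loop would run over tensors loaded from `.safetensors` files instead of lists.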

The merge was done with the Webui-Supermerge plugin. Under the Fair AI Public License 1.0-SD the recipe must be made public, and any model merged from this one must likewise disclose its merge recipe under this license.

Parameters+:

Prompting differs from SD1.5. For best results, follow this structured prompt template:

<|special|>,

<|artist|>,

<|special(optional)|>,

<|characters name|>, <|copyrights|>,

<|quality|>, <|meta|>, <|rating|>,……

<|tags|>

special (optional): these tags only need to be written once, at the front of the prompt; there is no need to repeat them at the end.
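A minimal sketch of assembling a prompt in the template's order, assuming each slot is given as a string or a list of tags. The group names (`special`, `special_optional`, `characters`, and so on) and the `build_prompt` helper are hypothetical; the sketch also drops repeated tags, so a special tag written at the front is not repeated at the end.

```python
# Hypothetical slot names, in the order the template above lists them.
TEMPLATE_ORDER = (
    "special", "artist", "special_optional",
    "characters", "copyrights",
    "quality", "meta", "rating",
    "tags",
)


def build_prompt(**groups):
    """Join tag groups in template order, keeping the first occurrence of each tag."""
    unknown = set(groups) - set(TEMPLATE_ORDER)
    if unknown:
        raise KeyError(f"unknown tag groups: {sorted(unknown)}")

    seen = set()
    parts = []
    for group in TEMPLATE_ORDER:
        value = groups.get(group) or []
        if isinstance(value, str):
            value = [value]
        for tag in value:
            tag = tag.strip()
            # Skip empty strings and tags already placed earlier in the prompt.
            if tag and tag not in seen:
                seen.add(tag)
                parts.append(tag)
    return ", ".join(parts)


prompt = build_prompt(
    special="newest",
    characters="hatsune miku",
    copyrights="vocaloid",
    quality="masterpiece",
    rating="safe",
    tags=["1girl", "solo", "newest"],
)
```

Here `newest` appears once, at the front, even though it is repeated in `tags`.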

Special tags:

The model works without these special tags, but adding them when needed helps steer the output in the desired direction.

years:

These tags steer the output toward modern or retro anime art styles, covering roughly 2005 to 2023.
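The year ranges listed below can be written as a small lookup. This is a hypothetical helper: `year_tag` and its `None` fallback for years outside the listed ranges are assumptions, while the tag names and inclusive ranges are taken from the list.

```python
# (tag, first year, last year), inclusive, as listed in this section.
YEAR_TAGS = (
    ("newest", 2021, 2024),
    ("recent", 2018, 2020),
    ("mid", 2015, 2017),
    ("early", 2011, 2014),
    ("old", 2005, 2010),
)


def year_tag(year):
    """Return the year tag whose range contains `year`, or None if none does."""
    for tag, start, end in YEAR_TAGS:
        if start <= year <= end:
            return tag
    return None
```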

newest 2021 to 2024

recent 2018 to 2020

mid 2015 to 2017

early 2011 to 2014

old 2005 to 2010

NSFW:

These tags guide the output toward adult content, but the model generally does not generate adult content unless a rating tag is included.

You can also put them in the negative prompt.

safe General

sensitive Sensitive

nsfw Questionable

explicit, nsfw Explicit

quality:

The model works without quality tags, but in practice they can still be used to adjust the output.

masterpiece > 95%

best quality > ?

great quality > ?

good quality > ?

normal quality > ?

low quality > ?

worst quality ≤ 10%

Resolution:

Most reasonable resolutions work, from the 512×768 typical of SD1.5 to sizes above 2048, and each gives a different result. However, resolutions that are too large or too small can make the image fall apart or distort the structure of characters and backgrounds.
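A hypothetical sanity check for a chosen size, as a sketch only. The thresholds are assumptions, picked so that the sizes the description flags elsewhere (2048 x 2048, 2048 x 512, 512 x 2048) produce a warning while listed sizes such as 960 x 1536 and 768 x 512 do not. The multiple-of-8 check reflects the 8x downsampling of the SDXL VAE latent.

```python
def check_resolution(width, height, base=1024, max_area_ratio=4.0, max_aspect=4.0):
    """Return a list of warnings for a width x height choice (empty means no obvious issue).

    The thresholds are assumptions, not values from the model author.
    """
    warnings = []
    if width % 8 or height % 8:
        warnings.append("width and height should be multiples of 8")

    # Compare the pixel count to a 1024 x 1024 reference image.
    area_ratio = (width * height) / (base * base)
    if area_ratio >= max_area_ratio:
        warnings.append(f"{area_ratio:.2f}x the {base}x{base} area: image may break down")
    elif area_ratio < 1 / max_area_ratio:
        warnings.append(f"only {area_ratio:.2f}x the {base}x{base} area: image may break down")

    # Very elongated canvases tend to distort characters and backgrounds.
    aspect = max(width, height) / min(width, height)
    if aspect >= max_aspect:
        warnings.append(f"aspect ratio {aspect:.1f}:1 is extreme: quality not guaranteed")
    return warnings
```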

Tags:

For higher-quality images you can use a negative prompt such as:

nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, artist name

The negative prompt can include common negative tags, but it is best not to give them very high weights, for example (ugly:2.8).

Because this is a merged model, some tags that were not fully trained in the source models may be lost, and some tags may need a weight above 1.5 to take effect.
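The `(tag:weight)` form used in these notes is the explicit-weight syntax of AUTOMATIC1111's WebUI. The sketch below extracts such weights so that overly heavy negatives can be spotted, and writes a boosted positive tag. The 1.5 cutoff for negatives and the helper names are assumptions; nested parentheses and other emphasis forms such as `[tag]` or a bare `(tag)` are not handled.

```python
import re

# Matches the explicit-weight form "(text:1.5)"; nested or bare-paren emphasis is ignored.
WEIGHTED = re.compile(r"\(([^():]+):\s*([0-9]*\.?[0-9]+)\)")


def weighted_tags(prompt):
    """Return (tag, weight) pairs for every "(tag:weight)" in the prompt."""
    return [(m.group(1).strip(), float(m.group(2))) for m in WEIGHTED.finditer(prompt)]


def heavy_negatives(negative_prompt, limit=1.5):
    """Flag negative tags whose weight exceeds `limit` (an assumed cutoff)."""
    return [(tag, w) for tag, w in weighted_tags(negative_prompt) if w > limit]


def emphasize(tag, weight):
    """Write a positive tag with an explicit weight, e.g. for under-trained tags."""
    return f"({tag}:{weight:g})"
```

For example, `heavy_negatives("lowres, (ugly:2.8), (bad hands:1.2), blurry")` flags only `ugly`.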

Resolution:

A resolution above 1024×1024 is recommended; use hires fix if you want higher resolution or quality.

Most of the showcase images were generated with:

euler_a | 20 steps | no hires fix | CFG 7

2048 x 2048 not recommended

……

1280 x 2048

1280 x 1536

960 x 1536 Recommended

1024 x 1024 1:1 Square

……

960 x 640

768 x 512 SD1.5

……

2048 x 512 quality not guaranteed

512 x 2048 quality not guaranteed

Disclaimer:

All images generated with this model are created by its users, and the model author has no control over them. The model author is not responsible for any potential copyright infringement or unsafe images.

License:

Anything now uses the Fair AI Public License 1.0-SD, which is compatible with Stable Diffusion models. All versions of the Anything series are released as open source under this license. Key points:

Modification Sharing: If you modify Anything, you must share both your changes and the original license.

Source Code Accessibility: If your modified version is network-accessible, provide a way (like a download link) for others to get the source code. This applies to derived models too.

Distribution Terms: Any distribution must be under this license or another with similar rules.

Compliance: Any non-compliance must be fixed within 30 days to avoid termination of the license. The license emphasizes transparency and adherence to open-source values.

—————————————————

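The following notes are specifically for Japanese and Chinese AI users:

① Unless you need images larger than 2048x2048 or run into serious problems, please skip hires fix.

② If the model seems biased toward something, check your prompt first. Some prompt words that had no effect on models you used before may work here.

③ Don't use SDXL with SD1.5 habits; the two models are fundamentally different. If needed, use the quality tags from the description rather than words like "8k, high resolution".

④ It is best not to use this model with NegativeXL, and high-weight negative prompts such as (ugly:2) are not recommended.

⑤ For a good image, describe the content in as much detail as possible; tagging only "1girl, nsfw" will not give you a good picture.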


Description

This update is meant to replace the outdated Anything 3.0.

FAQ

Comments (72)

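Hi, I'd like to ask: in the AnythingV5 description and in the model handbook you mention that using EasyNegative degrades the output.

So do you have any negative prompts you'd recommend? I find that using EN is usually better than not using it.

On the other hand, many of the works in the Civitai prompt handbook you recommended do use EasyN, although those were made with the Aice model.

Thanks!!!

I don't recommend a fixed negative prompt.

That prompt handbook directly copies other people's generation data. I don't want to modify it: that would be too much work, and sometimes the changes would also depart from the original meaning of the prompt.

@Yuno779 So there's no general-purpose negative prompt... Sometimes the colors drift, or the image suddenly gets less sharp or rough, and typing the matching negative words doesn't help much either. But if I use EN, as you say, the style drifts.

Reading your handbook descriptions, I do feel AnythingV5 is very flexible when used properly, but the barrier to entry is correspondingly higher; at least it isn't a model that gives good results from whatever you type in. I'll go see how other experienced Anything users do it.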

There's a problem with a dress made up of flowers

I don't know what you're trying to say. Please describe the problem clearly. Is it producing bad images, or something else?

@Yuno779 I think what they're trying to say is that with this model they're having trouble getting a character to wear a dress made of flowers; in other words, the model struggles to generate a dress composed of flowers.

@KTTB You can train a LoRA or use other methods, but most training images show skirts with printed patterns rather than skirts actually made of flowers, so this is relatively hard to generate.

Well, "Anything" is a bold name.

Well, it has trouble creating a dress out of flowers, but I assume that's a difficult task in general.

I had far better results with V3.2++ [ink] + the kl-f8-anime2 VAE. It was as if someone had lifted a gray veil from my eyes. Just a tip.

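How can I see the resolution an image was generated at and the exact hires fix settings? I'm a beginner and can't even manage to copy other people's settings; I need help from the experts.

Click into the image and click Copy generation data; it's the last section.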

Was the V5 PRT RE Model trained on booru tags with or without underscores? So is it "long hair" or "long_hair"?

No underscores needed.

Thanks! I'm just starting out. Images above 512 break, and adding the half flag when launching A1111 didn't help. Is it time for a new graphics card? Other models don't do this, but I really like this one! Error: NansException: A tensor with all NaNs was produced in VAE. This could be because there's not enough precision to represent the picture. Try adding --no-half-vae commandline argument to fix this. Use --disable-nan-check commandline argument to disable this check.

Can you create this style for me, so that I become famous and everyone loves it?

These are the pictures I have collected for you

pay him and he will

Hey there! Don't mind these guys. I like the collection you put together. I study computer science and play with generative AI often. I can help you train a model and give you some prompting tips if you want. How about you add a discord to your profile so we can get in touch?

It would be great if you could tell the story of AnythingV3. How was it made?

It's an important part of SD history; hopefully you can reveal its secrets.

He tells its story in the description. He said AnyV3 is simply a merge of some anime models that existed at the time. He then shared it on some Chinese forums without realizing it would become so popular outside China, until someone told him a while later. He also thinks the model is a bit overhyped.

@kylocat

Thank you for summary.

I've seen the description, but it's too short for me.

It would be nice to have more details, recipe maybe.

Also, the strange part is that, as far as I know, AnythingV3 has its own unique VAE that doesn't match NAI or other models. AnythingV3 also has a unique, terrible saturation.

I still think that it was trained somehow and not just merged.

I don't want to sound like a conspiracy guy, but something here doesn't add up.

@alexds9 I'd suggest checking early models like Abyss, Holo, AOM/AOM2, there were some early models that took from NAI and trained on booru data, can't remember which exactly. Also why do you say that it has a different VAE? To me it looks like a NAI base VAE tbh

@LyloGummy

I'm not sure why you are suggesting me to check them...

I haven't tested AnythingV3 VAE lately.

@LyloGummy

I just compared the internal VAE of AnythingV3 against NAI's using SuperMerger's analysis, and there are many differences between the two. NAI actually has the same VAE as other SD models, but AnythingV3's VAE has been changed.

You wouldn't get such changes from a merge...

@alexds9 Hmm very interesting, I'll try comparing it to some older models VAEs with supermerger and follow-up

Can you make an inpainting version, please?

You can make it yourself; this is what I used :D

https://www.reddit.com/r/StableDiffusion/comments/zyi24j/how_to_turn_any_model_into_an_inpainting_model/

Edit: you can try mine here if you can't merge it yourself:

https://huggingface.co/shiowo/ckptandstuff/resolve/main/anyv5inpaint-inpainting.safetensors
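For reference, the approach usually described for turning a model into an inpainting model is an "add difference" merge: A = an SD1.5 inpainting checkpoint, B = the model you want, C = the SD1.5 base, computed as A + m·(B − C) with m = 1. I'm assuming this is what the linked thread describes. The toy sketch below uses lists of floats in place of tensors, and `add_difference` is a hypothetical name. In real checkpoints the inpainting UNet's input convolution has extra channels; merging tools handle that layer specially, whereas this sketch simply keeps A's value whenever shapes disagree.

```python
def add_difference(a, b, c, multiplier=1.0):
    """Toy add-difference merge: result = a + multiplier * (b - c), per parameter.

    a, b, c: {parameter name -> list of floats}
    Where a parameter is missing from b or c, or the lengths differ
    (e.g. the inpainting input layer), A's value is kept unchanged.
    """
    merged = {}
    for key, a_val in a.items():
        b_val, c_val = b.get(key), c.get(key)
        if b_val is None or c_val is None or not (len(a_val) == len(b_val) == len(c_val)):
            merged[key] = list(a_val)  # simplification: keep A as-is
            continue
        # Transplant what B learned relative to its base C onto A.
        merged[key] = [x + multiplier * (y - z) for x, y, z in zip(a_val, b_val, c_val)]
    return merged
```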

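Truly a melting pot of everything. I've used it for a month and it's the most stable; it can handle anything! I've tried the top 50 ranked models, and yours surprised me the most! (When will there be a realistic version?)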

Has issues with futa LoRA/LyCORIS. A good one overall, though.

Does this model include the model leaked from NovelAI? Or is it safe to use?

JSYK: This model produces very young-looking / childlike characters, even with prompts like “old man/old woman/mature male/mature female”. It’s great otherwise.

This model is my favorite; in my mind it's No. 1 for all-round performance.

Can images created with this model be used for commercial purposes?

Not that I'm going to, but I'm wondering how the copyright works on this because the other models kind of write about it.

The ink training sets consist entirely of images generated by nijijourney.

@Yuno779 I'm sorry, but that doesn't tell me anything.

It's a grey area..

It can. The author already told you it was trained on nijiMJ output, which can be used for commercial purposes.

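Of all the models I've used, Anything is the only one that truly counts as an all-rounder.

Does "Anything" mean it can generate a relatively large number of concepts?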

If, like me, you don't have a good GPU, you can try this on Google Colab (free, no ban, no token limit). There's also a useful guide here: YouTube.

A question for the author: where did the training data for the Anything base model come from? Did you pick it by hand? And how much training data was there?

Can someone explain what this "ink" version I'm seeing means? It feels like if you take a month or two away from AI art, a dozen new abbreviated terms pop up.

I think "ink" just means it looks like it was made with digital ink software (i.e. it's not trying to create realistic images)

Different images use different negative prompts. How should I choose one?

You can use any sufficiently strong negative prompt; you can try any of them.

ink, prt, re, what do these mean? I don't know which version to get!

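A 2D model with very good compatibility. My earlier understanding of SD may have been somewhat wrong.

I should point out that much of what you've learned is most likely incorrect material produced by people who don't understand the underlying principles at all. Some people talk nonsense without even knowing what LoRA does, and they've misled quite a few others.

@Yuno779 I don't think most popular model authors are unaware of this. They can make models that produce good images, yet their models have poor compatibility, are overfitted, or are even missing CLIP. I say this hoping everyone will rethink the standards for judging models, and that those authors will think carefully about what kind of model they want to make.

@dyr233 Many model authors really don't know, because what you just said contains more than a dozen errors of principle or logic, most of them from believing 苟斯特米's crackpot theories. Limited by how well I can explain things and by space, I can't go into much detail; I strongly recommend reading the relevant papers.

@Yuno779 Which ones should I read? Could you recommend some?

@Yuno779 Yes, please give us some paper references. I'm a visual communication student at Sichuan University who just started with SD.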

Which version is best for training anime LoRAs?

The NoVae/Unet-Only version is broken: when WebUI is first launched with this version as the loaded model, the images it produces are full of broken, artifacted noise.

Edit: I think the pruning is the problem. Could you please upload a full, unpruned version of the Unet-Only model?

This version relies on a WebUI behavior. Try loading another SD1.5 model first and then switching to this version. If that doesn't work, I suggest using the 1.99GB full version.

Excellent model for linearts! Thank you

Translated the referenced guide.

https://civitai.com/articles/2726

Awesome, pure 2D. Great as a base for merging models.

What is the difference between v5 (Prt-RE) and "ink"?

In my opinion, Prt-RE is more detailed and ink is softer, like ink painting. That's it.

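If the author wants to evolve Anything into an XL version, please contact me; we can provide hardware. XL's larger token capacity can express more art concepts. We would also need a dataset on the scale of Anything's.

I haven't figured out how to approach XL training yet; or rather, it isn't feasible. If I simply trained on the same datasets I used for the ink model, I would most likely just get a bigger model that may even be worse than the original. I have some ideas now, but they would mean abandoning my existing datasets, and the datasets they need are nearly impossible to obtain, so I had to drop it.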

You're welcome to try A5 Stablizer, a "stabilizer" for A5 Prt-RE trained with RLHF, and share your feedback :)

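Hello! Because this page has changed, I can't tell which version had the longest suffix back then. I now have two versions on my computer, anything-v5-PrtRE and AnythingV5Ink_v5RE. Which one is better for training a LoRA?

Don't use ink.

@Yuno779 Got it, thanks for the reply.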

good

My LoRAs trained with Anylora work pretty well with the V5 model, and the style transferred nicely. I even used tags like 1girl in the prompt, so I'm not sure why everything works as expected, but hey, I'm thankful for your nice model.

Details

Files

AnythingXL_inkBase.safetensors

Mirrors

AnythingV5Ink_ink.safetensors

AnythingXL_inkBase.safetensors

test_v10.safetensors

AnythingV5_v32.safetensors

AnythingV5Ink_v32Ink.safetensors

Anything-ink.safetensors

anythingv5ink.safetensors

AnythingV5Ink_ink (1).safetensors

anything_inkBase.safetensors

基底|Anything-ink.safetensors

Anything v5.safetensors

Anything-v3.2pp-Ink.safetensors

Anything.safetensors