V4.0-Full Version:

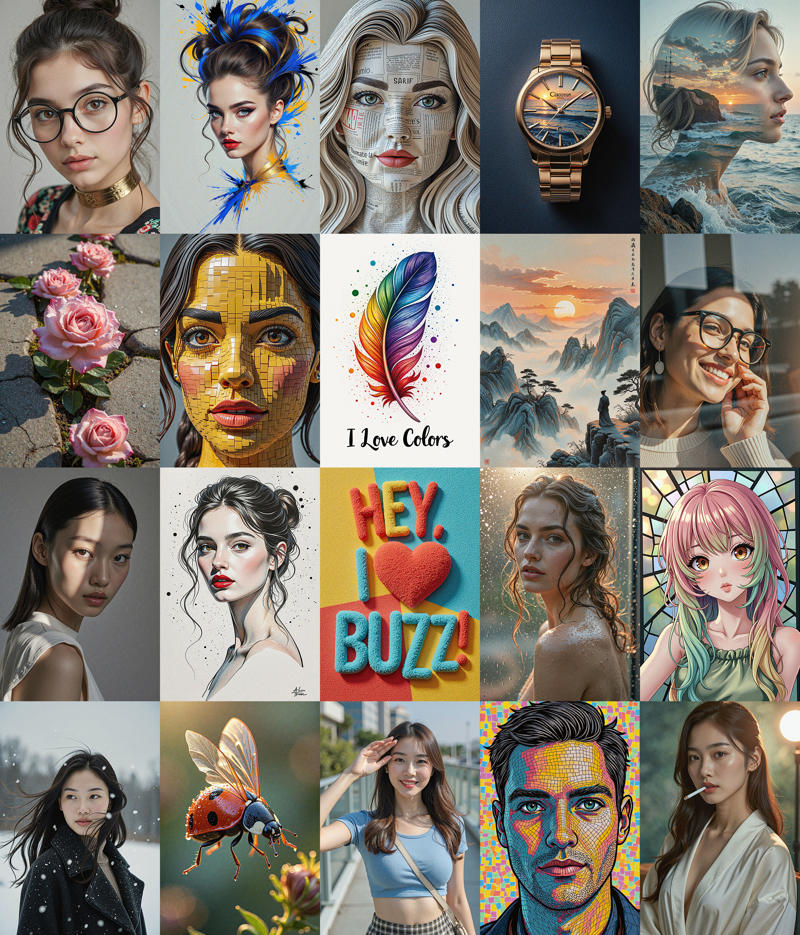

The V4.0 model (pure base model) builds on V3.0 and merges in the realism and fine detail of the SRPO model and the artistry and style range of the Krea model, while keeping good texture and LoRA compatibility. Overall capability is significantly improved.

Very realistic and finely detailed: output keeps excellent detail even when upscaled directly with TTP to 8 megapixels.

Prompt adherence is greatly improved. LLM-enhanced, detailed, structured prompts are recommended.

Good artistic expression and style diversity, and good LoRA compatibility, including all kinds of NSFW / SFW LoRAs.

Also on: Huggingface.co, Modelscope

===========================================================================

V3.0-Krea Version:

Flux.1-Dev-Krea improved on the Dev model's artistic styles and realistic photography, but portrait sharpness and aesthetics got weaker, and compatibility with LoRAs trained on the original Dev model is notably poor. V3.0-Krea keeps the main strengths of the Krea model and improves image sharpness and compatibility with original-Dev LoRAs. The LoRA compatibility gain is small, though, and not ideal (this mainly affects portrait and style LoRAs; other LoRAs and CN work acceptably). This is the disappointing part of this version, so please think carefully before downloading.

GNER-T5-XXL is recommended in place of T5-XXL for better prompt understanding. You can download it from https://civarchive.com/models/1888454 or from my HF repo.

Some example images:

Model usage:

Basic: deis+simple / euler+beta. You can also try other combinations.

Also on Huggingface.co

===========================================================================

V3.0-PAP Version:

v3.0-PAP: a base model optimized for portrait and art photography. It has been specifically tuned for composition, lighting, and East Asian face shapes, and is more sensitive and adaptable to face-model LoRAs.

Compared with the original Flux.1 Dev model, this version renders ethnicity and face shape more realistically and with more variety. The face-model example images use LoRA models by the following authors, with thanks! If anything infringes, let me know and it will be removed immediately.

https://civarchive.com/user/el_fluppe

https://civarchive.com/user/wolfcatz

https://civarchive.com/user/seanwang1221

https://civarchive.com/user/nawusijia

Simple usage guide:

Basic composition: deis+simple / euler+beta; more noise: ddim/dpm_2/dpmpp_2 + beta/beta57/sgm_uniform; more detail and more imaginative: heunpp2+ddim_uniform; upscaling: UltimateSDUpscale/TTP; film look: add a LUT (35mm/AGAF/Kodak); or whichever combination tests best in your own environment. Steps: 20-30. See the example images for the workflow.
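The combinations in this guide can be kept as a small lookup table when scripting generations. The sketch below is only an illustration: the `PRESETS` keys and the `ksampler_settings` helper are hypothetical names, the default of 25 steps is just the midpoint of the recommended 20-30 range, and the sampler/scheduler strings are copied as written in the guide, so check them against the names your UI actually lists.

```python
from itertools import product

# Sampler/scheduler pairs copied from the usage guide. The dictionary keys
# and this helper are illustrative names, not part of ComfyUI or the model.
PRESETS = {
    "basic": [("deis", "simple"), ("euler", "beta")],
    # The guide lists three samplers and three schedulers for "more noise";
    # every pairing is included here. "dpmpp_2" is kept exactly as written.
    "more_noise": list(product(("ddim", "dpm_2", "dpmpp_2"),
                               ("beta", "beta57", "sgm_uniform"))),
    "more_detail": [("heunpp2", "ddim_uniform")],
}


def ksampler_settings(goal="basic", choice=0, steps=25):
    """Return sampler settings for one of the guide's presets."""
    sampler, scheduler = PRESETS[goal][choice]
    return {"sampler_name": sampler, "scheduler": scheduler, "steps": steps}


print(ksampler_settings("more_detail"))
```

Treat the presets as starting points; the guide itself suggests testing for the best combination in your own environment.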

Also on Huggingface.co

An interesting face-model LoRA control test sample:

===========================================================================

===========================================================================

DedistilledMixTuned Dev V3.0:

A major release for the Chinese Lunar Year of the Snake!

V3.0 is a full upgrade. It may be the most balanced of the current Flux Dev fine-tunes, and the closest to Flux Pro in LoRA compatibility, realism, image quality, and artistic creativity. (To make evaluation and comparison easier, this model's seeds are largely aligned with the original Dev model.)

V3.0 usage guide:

Layer-wise merging removes the de-distillation interference, so the model is fully compatible with the original Flux.1 Dev and is more sensitive to LoRA weights. At 1024x1024 and below, use euler/deis + normal/beta/simple or similar. For larger images from 1024 to 2048, use ddim/dpm_2/dpmpp_2m/heunpp2 + ddim_uniform/beta.

Most detail: dpmpp_2m + beta. Most artistic: heunpp2 + ddim_uniform.

Recommended: KSampler, 20-30 steps. For the workflow, see https://civarchive.com/images/53432419
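The resolution rule above can be written as a small helper. This is a sketch under stated assumptions: "1024x1024 and below" is read as a total pixel count of at most 1024 * 1024, anything larger is treated as the 1024 - 2048 tier, and the function name `recommend_for_resolution` is hypothetical.

```python
# Candidate lists copied from the V3.0 guide.
SMALL = {"samplers": ["euler", "deis"],
         "schedulers": ["normal", "beta", "simple"]}
LARGE = {"samplers": ["ddim", "dpm_2", "dpmpp_2m", "heunpp2"],
         "schedulers": ["ddim_uniform", "beta"]}


def recommend_for_resolution(width, height):
    """Pick the guide's sampler/scheduler candidates for an image size.

    Assumption: the threshold is the pixel count of a 1024x1024 image.
    The guide gives no advice above 2048, so larger sizes simply fall
    into the large tier here.
    """
    if width * height <= 1024 * 1024:
        return SMALL
    return LARGE


print(recommend_for_resolution(1024, 1024)["samplers"])
print(recommend_for_resolution(1536, 2048)["samplers"])
```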

===========================================================================

DedistilledMixTuned Dev V2.0:

A 2025 New Year gift! After more than a month of training and fine-tuning, V2.0 is a full upgrade of v1.0. With photo-level realism, it strikes a new balance among detail rendering, generation speed, LoRA compatibility, and harmony of light and shadow.

===========================================================================

DedistilledMixTuned Schnell V1.0:

Among the current models tuned from Flux.1 Schnell, this may be the open-source, commercially usable base model with the best balance of image quality, detail, realism, and style diversity. It generates quickly (4-8 steps), follows the composition style of the original Flux Schnell, and adheres well to prompts.

Based on FLUX.1-schnell, merged with LibreFLUX, and fine-tuned with ComfyUI, Block_Patcher_ComfyUI, ComfyUI_essentials, and other tools. Recommended: 4-8 steps; 4 steps is usually enough. Quality and realism are greatly improved compared with other Flux.1 Schnell models.

===========================================================================

DedistilledMixTuned Dev V1.0:

Among the current fast Flux fine-tunes (10 steps or fewer), this may be the base model with the best image quality. It follows the original Flux.1 Dev style, adheres well to prompts, surpasses Flux.1 Dev in some details, and comes closest to Flux.1 Pro.

Based on Flux-Fusion-V2, merged with flux-dev-de-distill, and fine-tuned with ComfyUI, Block_Patcher_ComfyUI, ComfyUI_essentials, and other tools. Recommended: 6-10 steps. Quality is greatly improved compared with other Flux.1 models.

GGUF Q8_0 / Q5_1 / Q4_1 quantized model files have been tested and are provided alongside. Heavier quantization would lose the advantages of this fast, high-precision model, so no other quantized versions will be provided. If you need one, download the FP8 model file and quantize it yourself following the tips below.

===========================================================================

Recommended:

The UNET versions (model only) need text encoders and a VAE. I recommend the following text encoder and VAE for better prompt guidance:

Text encoder: https://huggingface.co/silveroxides/CLIP-Collection/blob/main/t5xxl_flan_latest-fp8_e4m3fn.safetensors

VAE: https://huggingface.co/black-forest-labs/FLUX.1-schnell/tree/main/vae

GGUF version: you need to install the GGUF model support nodes, https://github.com/city96/ComfyUI-GGUF

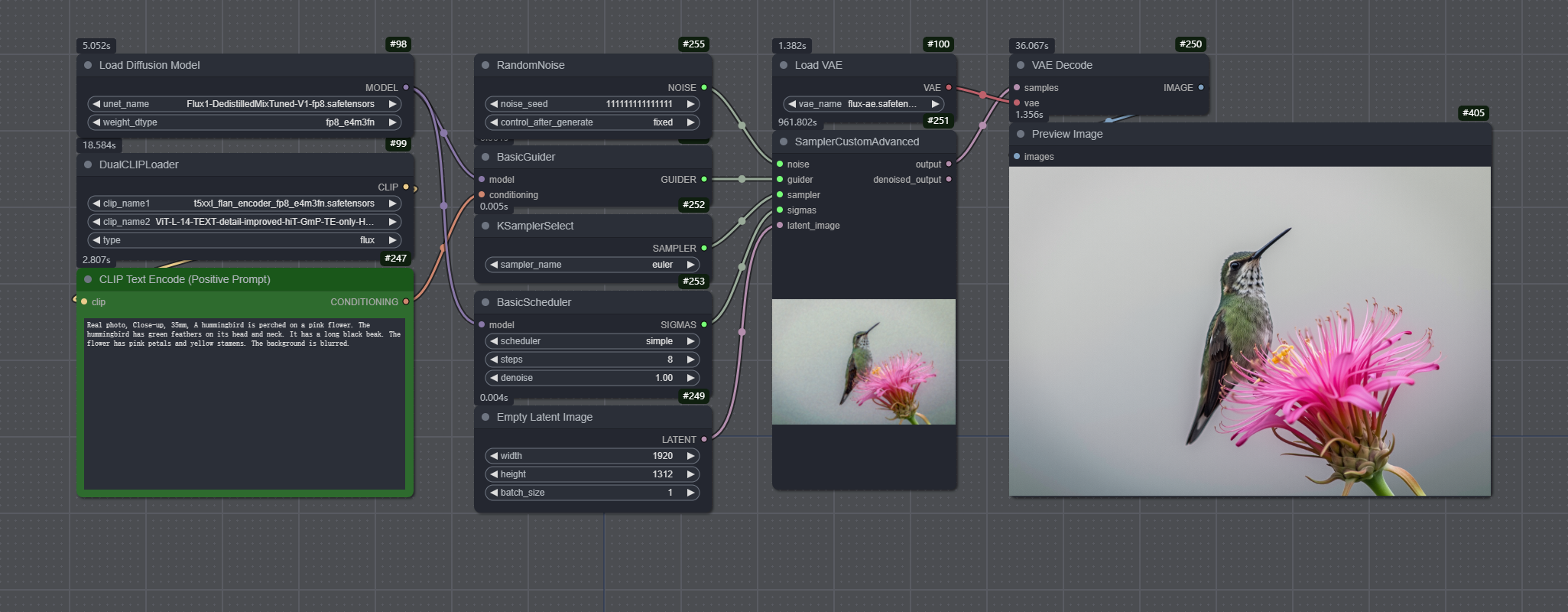

Simple workflow: a very simple workflow, shown in the image, that needs no other ComfyUI custom nodes. (For the GGUF version, use city96's Unet Loader (GGUF) node.)
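For readers who script ComfyUI instead of using the graph editor, a workflow of this kind can also be written in ComfyUI's API (JSON) format. The sketch below is not the author's workflow. It assumes the stock node class names (`UNETLoader`, `DualCLIPLoader`, `VAELoader`, `CLIPTextEncode`, `EmptyLatentImage`, `KSampler`, `VAEDecode`, `SaveImage`) plus `UnetLoaderGGUF` from ComfyUI-GGUF. The file names `clip_l.safetensors` and `ae.safetensors`, the `cfg` value of 1.0, and the helper name are placeholders or assumptions to adapt to your own install.

```python
import json


def build_flux_workflow(prompt, unet_name, use_gguf=False,
                        sampler="deis", scheduler="simple",
                        steps=20, seed=0, width=1024, height=1024):
    """Return an API-format graph: loaders -> KSampler -> decode -> save."""
    if use_gguf:
        # UnetLoaderGGUF is provided by city96's ComfyUI-GGUF custom nodes.
        unet = {"class_type": "UnetLoaderGGUF",
                "inputs": {"unet_name": unet_name}}
    else:
        unet = {"class_type": "UNETLoader",
                "inputs": {"unet_name": unet_name, "weight_dtype": "default"}}
    return {
        "1": unet,
        "2": {"class_type": "DualCLIPLoader",
              "inputs": {"clip_name1": "t5xxl_flan_latest-fp8_e4m3fn.safetensors",
                         "clip_name2": "clip_l.safetensors",  # placeholder name
                         "type": "flux"}},
        "3": {"class_type": "VAELoader",
              "inputs": {"vae_name": "ae.safetensors"}},  # placeholder name
        "4": {"class_type": "CLIPTextEncode",
              "inputs": {"text": prompt, "clip": ["2", 0]}},
        # Empty negative prompt; KSampler still requires the input.
        "5": {"class_type": "CLIPTextEncode",
              "inputs": {"text": "", "clip": ["2", 0]}},
        "6": {"class_type": "EmptyLatentImage",
              "inputs": {"width": width, "height": height, "batch_size": 1}},
        "7": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["4", 0],
                         "negative": ["5", 0], "latent_image": ["6", 0],
                         "seed": seed, "steps": steps,
                         "cfg": 1.0,  # assumption, not stated in the text
                         "sampler_name": sampler, "scheduler": scheduler,
                         "denoise": 1.0}},
        "8": {"class_type": "VAEDecode",
              "inputs": {"samples": ["7", 0], "vae": ["3", 0]}},
        "9": {"class_type": "SaveImage",
              "inputs": {"images": ["8", 0], "filename_prefix": "flux"}},
    }


workflow = build_flux_workflow("a red fox in the snow", "model-Q8_0.gguf",
                               use_gguf=True)
print(json.dumps(workflow, indent=2)[:200])
```

The resulting dictionary is plain JSON, so it can be saved to a file or sent to a running ComfyUI server as the `prompt` field of a request to its `/prompt` endpoint.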

===========================================================================

===========================================================================

Wash away the greasy look of distillation and return to the model's true nature: the goal is the purest, highest-quality Flux base model!

If you like the model after using it, please post your images here. Thanks a lot!

Thanks to:

https://huggingface.co/black-forest-labs/FLUX.1-dev, a very good open-source T2I model, under the FLUX.1 [dev] Non-Commercial License.

https://huggingface.co/black-forest-labs/FLUX.1-schnell, a very good open-source T2I model, under the Apache-2.0 license.

https://huggingface.co/Anibaaal, Flux-Fusion is a very good mixed and tuned model.

https://huggingface.co/nyanko7, Flux-dev-de-distill is a great experimental project! Thanks for the inference.py script.

https://huggingface.co/jimmycarter/LibreFLUX, a free, de-distilled FLUX model; an Apache 2.0 version of FLUX.1-schnell.

https://huggingface.co/MonsterMMORPG, Furkan shares a lot of Flux.1 model testing and tuning tutorials, including some tests specific to de-distilled models.

https://github.com/cubiq/Block_Patcher_ComfyUI, cubiq's Flux blocks patcher sampler let me run many tests to learn how changing Flux.1 block parameter values affects image generation. His ComfyUI_essentials has a FluxBlocksBuster node that makes adjusting block values easy. Great work!

https://huggingface.co/twodgirl, shared the model quantization script and the test dataset.

https://huggingface.co/John6666, shared the model conversion script and the model collections.

https://github.com/city96/ComfyUI-GGUF, native support for GGUF quantized models.

https://github.com/leejet/stable-diffusion.cpp, provides pure C/C++ GGUF model conversion.

Note: to convert to GGUF Q5/Q4 easily, you can use the https://github.com/ruSauron/to-gguf-bat script. Download it and put it in the same directory as sd.exe, then drag my fp8.safetensors model file onto the .bat file in Explorer. A CMD window will pop up; follow the menu to convert to the format you want.
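If you prefer to call the converter directly instead of using the .bat menu, the command can be assembled in a script. This is a sketch that assumes the stable-diffusion.cpp `sd` binary accepts `-M convert -m <input> -o <output> --type <type> -v` as shown in its README; confirm with `sd --help` for your build. The helper name and the output naming scheme are hypothetical.

```python
from pathlib import Path

# Quantization types mentioned on this page.
QUANT_TYPES = ("q8_0", "q5_1", "q4_1")


def build_convert_cmd(sd_binary, src, qtype):
    """Build (but do not run) a GGUF conversion command line.

    Assumes the stable-diffusion.cpp CLI flags: -M convert, -m, -o, --type.
    """
    if qtype not in QUANT_TYPES:
        raise ValueError(f"unsupported quantization type: {qtype}")
    src = Path(src)
    # Hypothetical naming scheme: model-fp8.safetensors -> model-fp8-Q4_1.gguf
    dst = src.with_name(f"{src.stem}-{qtype.upper()}.gguf")
    return [str(sd_binary), "-M", "convert", "-m", str(src),
            "-o", str(dst), "--type", qtype, "-v"]


cmd = build_convert_cmd("sd.exe", "model-fp8.safetensors", "q4_1")
print(" ".join(cmd))
```

To actually run the conversion, pass the list to `subprocess.run(cmd, check=True)` from the directory that contains the binary.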

LICENSE

The weights fall under the FLUX.1 [dev] Non-Commercial License.

Comments (26)

I've deleted my previous negative review after further testing, so it doesn't mislead people who want to try this model. I thought the model was plainly broken in Forge UI, but it is not. It works properly, and it does work with LoRAs, though with some caveats.

Using DEIS + simple at 15-20 steps works much better than other options for this model in Forge UI. Thanks to the creator of the checkpoint for pointing that out to me!

Still, my initial impression stands: the model is overtuned toward very grainy, low-resolution images, which not everyone is going to like. When it works well, it's magnificent, very realistic, and solves a lot of Flux's problems with the plastic look of people in the base model. That's fantastic!

On the other hand, that type of training means it generates very low resolution and very little detail in anything that is not a close-up portrait or, at most, a cowboy shot (mid-body shot). Excessive depth of field doesn't help with this problem either. Those kinds of images are going to need a lot of fine-tuning and upscaling to look good. Maybe it can be mitigated with prompting? It doesn't seem like it, but maybe.

Still, a very interesting checkpoint that I'm going to keep using and trying to figure out over time. Maybe future versions can achieve a middle ground between the very easy-to-use v1 and this more ambitious, much more realistic, but finicky v2.

Thanks to the creator for his hard work!

Many thanks for using and testing the model. Feel free to give me any feedback, positive or negative.

@wikeeyang

I agree with JCriterio: the 2.0 model does not respond like 1.0 in almost any setting. It is a hard model to manage. I have seen this behavior in de-distilled models (grainy images, samplers that can't be used, strange unusable results). Why does 1.0 work in most cases while 2.0 is so restricted?

@FarFromGeometry Is your environment Forge WebUI? I tested the v2 model in ComfyUI, and many sampler + scheduler combinations such as euler/deis + normal/beta/simple/ddim_uniform should be OK. Why did v2 get so much tuning? The v1 model is simple and fast for generating images directly, but it is hard to make compatible with LoRAs, its images are too detailed for post-upscaling, and the distillation marks in its images were not removed thoroughly enough. That limits the model in more professional work such as artistic creation (watercolor, pencil drawing), high-end portraits, architecture, landscape, street photography, etc. Thanks for your feedback; the next version will be better.

@FarFromGeometry Please see https://civitai.com/images/50294859. Zoom in and take a look.

@wikeeyang Thanks for the reply. I use Stability Matrix https://github.com/LykosAI/StabilityMatrix, "Inference", an easy front-end GUI for ComfyUI nodes; in essence it is ComfyUI. The results are more or less as JCriterio describes. Could that point to an incompatibility issue with ComfyUI?

@FarFromGeometry Sure. v1.0 makes it easy to get high-quality images directly, while v2.0 is aimed at more professional users: it can be combined with users' LoRAs and special post-processing steps (workflows) for better artistic results, so it is better suited to complex workflows in ComfyUI.

@JCriterio @FarFromGeometry Please look at the images posted by https://civitai.com/user/cakc, such as https://civitai.com/images/49441201 and https://civitai.com/images/49441276, and use their generation data to get very realistic images. This is the v2.0 model's true ability.

@wikeeyang OK, maybe there is an issue with the quantized 4_1 version I used. I will check another model later and report back. For now, 4_1 produces distorted results using the same parameters as the examples provided.

Hi! I've been using the model on and off for the past week, and I think both things are true. The model can be fantastic and hyper-realistic at some things, and frustratingly weird at others. In some aspects it improves a lot over basic Flux, and in others it reverts Flux to something that looks more like early SDXL. That's my experience so far, but I'm aware this could be for many reasons related to my inexperience with the model and not the model itself.

FarFromGeometry, I don't know if this is useful for you, but I'm using the fp8 version with only DEIS + simple at 20 steps; the others are not workable in Forge UI for me. There are also two options that sometimes improve results for me. Either go for distilled CFG 5 and a resolution of 2 megapixels, e.g. 1664 x 1216 for 4:3 (this gets rid of the out-of-focus look, graininess, etc., and helps with deformation); or go for true CFG (this also helps with prompt adherence) at around 2 to 3, and use negative prompts for blurry, etc. If you go above 3 with true CFG, all the blurriness intensifies a lot for some reason.

Both methods at 20 steps. Obviously, both take double the time of a 1-megapixel distilled image, but it's what works for me.

Considering that a lot of people praise this model and there are images in the gallery that don't show the problems (though funnily enough some do), maybe we are having hardware-related issues, or maybe it's our environments or UIs; it's hard to tell. And even if that's not the case, it's still possible that this model is so specialized, as its creator suggests, that we are simply trying to use it in a less than ideal scenario.

When I got frustrated with it and reverted to v1, I immediately remembered how unnatural people's skin looks in base Flux and gave this new version another try XD. In my opinion, this v2 shows great potential for a future v3 release!
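For anyone who wants other aspect ratios at the 2-megapixel size mentioned in the comment above, here is a minimal sketch of how such dimensions can be derived. The assumptions are mine, not the commenter's: a megapixel is taken as 1,000,000 pixels, both sides are snapped to a multiple of 64, and the function name `dims_for_megapixels` is hypothetical.

```python
import math


def dims_for_megapixels(megapixels, aspect_w, aspect_h, multiple=64):
    """Return (width, height) near the target pixel count and aspect ratio.

    Assumes 1 megapixel = 1,000,000 pixels and snaps each side to the
    nearest multiple of `multiple` (64 here, an assumed latent-friendly step).
    """
    target = megapixels * 1_000_000
    width = math.sqrt(target * aspect_w / aspect_h)
    height = width * aspect_h / aspect_w

    def snap(value):
        return max(multiple, round(value / multiple) * multiple)

    return snap(width), snap(height)


print(dims_for_megapixels(2, 4, 3))   # the 4:3 case from the comment
print(dims_for_megapixels(2, 16, 9))  # a widescreen alternative
```

Under these assumptions the 4:3 case comes out at the same 1664 x 1216 quoted in the comment; snapping means the final ratio is only approximately 4:3.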

@FarFromGeometry According to my tests, Q4_0 and Q4_1 lose more than 20% of the detail compared with fp8. If hardware limits you to Q4, please use v1 Q4_1; it has more detail than v2 and will give better results.

@JCriterio Thanks for your feedback! With the v2.0 model, if you generate oil paintings, watercolors, pencil sketches, line drawings, and the like, you can generate 2K images directly. For subjects that need more detail, such as ID portraits, live photos, street photography, and landscapes, I suggest generating at 1K and then upscaling to 2K or 4K with fewer steps, e.g. 8-10, to get fine texture and quality.

@wikeeyang Still testing, just writing to let you know that the first tests seem to be working perfectly with diverse samplers in Forge UI for me. Whatever was wrong with v2 for me seems to be fixed with v3. I'll keep testing it, but I think you probably have the best fp8 Flux on the site again with this v3.

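In my testing, 2.0 seems to produce deformed limbs quite often; version 1.0 is more stable.

Thanks for the feedback! Some people say hands and feet break easily in 1.0 and that 2.0 is better. 😂😂😂 I can only keep optimizing in the next version.

What samplers and schedulers should I use with this model in Forge UI? PyTorch 2.3.1 and CUDA 12.1.

euler/deis + normal/beta/simple/ddim_uniform should be OK; you can test which combination works best for you. See https://civitai.com/images/48844731 for the prompt and parameters in Forge WebUI.

I downloaded the FP8 2.0 version. For realistic images in ComfyUI, is there a particular sampler/scheduler pairing to use? When generating at 1024*1536, the images always look like a real photo with too few pixels that was forcibly enlarged: blurry, blocky pixel patches and stripes.

I use 15 to 20 steps. At 10 steps it is even worse.

In ComfyUI, any combination of euler/deis + normal/beta/simple/ddim_uniform works; see the workflow in the sample images for details. I suggest not generating at too high a resolution in the first stage. You can then upscale 2x or 4x with Latent Upscaler or Ultimate SD Upscaler for very realistic results. For example, https://civitai.com/images/50294859 reaches 12 megapixels and the skin is very realistic.

@wzr905636 See the workflow embedded in the sample images in the posts. Steps: 15-20.

Relatively speaking, for casual generation v1.0 is easier to use and v2.0 is more demanding. But v2.0 has better LoRA compatibility and can produce more professional images, such as very realistic portraits, photo-level street photography, and product shots. It requires fairly solid ComfyUI skills.

@wikeeyang Could you share your high-resolution upscaling workflow? I don't quite understand how to do it.

@wikeeyang The main problem is that none of the sample images you posted contain readable workflow information.

@alinasame11807 Friend, isn't that a bit too absolute? Not a single one??? They were all run with the same workflow and parameters, so a few images with the workflow embedded are enough. The pinned eagle oil-painting image includes the complete workflow. https://civitai.com/images/53433496

@wikeeyang Sorry, I missed it. In any case, many thanks for your hard work and results. My apologies if I caused any offense.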