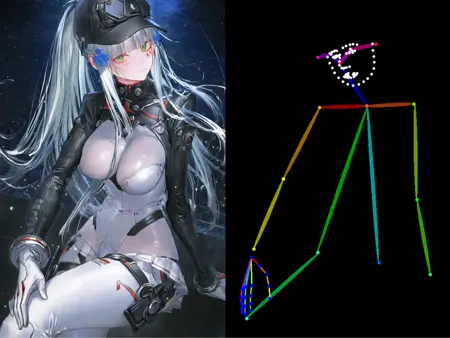

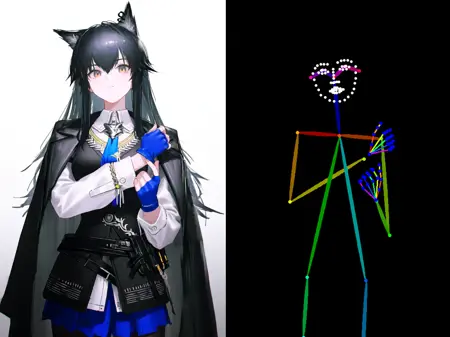

NoobAI-XL ControlNet

This is the collection of ControlNet models for NoobAI-XL.

How to use

Each version name has the form "<prediction_type>-<preprocessor_type>", where "<prediction_type>" is either "v" (v-prediction) or "eps" (epsilon prediction), and "<preprocessor_type>" is the full name of the preprocessor.
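As a minimal sketch of the naming convention, the helper below splits a version name into its two parts. The function and type names are hypothetical, and "eps-openpose" is only an illustrative version name. It splits on the first hyphen only, on the assumption that a preprocessor name could itself contain hyphens.

```python
from typing import NamedTuple

# Prediction-type prefixes described in the version-name convention.
PREDICTION_TYPES = {"v": "v-prediction", "eps": "epsilon prediction"}


class VersionName(NamedTuple):
    prediction_type: str    # "v" or "eps"
    preprocessor_type: str  # full preprocessor name


def parse_version_name(name: str) -> VersionName:
    """Split a hypothetical "<prediction_type>-<preprocessor_type>" name."""
    # Partition on the FIRST hyphen so hyphenated preprocessor names survive.
    prediction, sep, preprocessor = name.partition("-")
    if not sep or not preprocessor:
        raise ValueError(f"expected '<prediction_type>-<preprocessor_type>', got {name!r}")
    if prediction not in PREDICTION_TYPES:
        raise ValueError(f"unknown prediction type {prediction!r}")
    return VersionName(prediction, preprocessor)


print(parse_version_name("eps-openpose"))
# VersionName(prediction_type='eps', preprocessor_type='openpose')
```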

You HAVE TO use the preprocessor type that matches this ControlNet model.

You MAY NEED TO match the prediction type of your base model to that of this ControlNet model.
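The two rules above can be expressed as a small pre-flight check, sketched here under the assumption that you know your base model's prediction type ("v" or "eps"). A preprocessor mismatch is treated as an error (HAVE TO), while a prediction-type mismatch only produces a warning (MAY NEED TO). The function name is hypothetical and not part of any UI.

```python
def check_compatibility(version_name: str, preprocessor: str, base_prediction: str) -> list[str]:
    """Hypothetical pre-flight check for a "<prediction_type>-<preprocessor_type>" ControlNet.

    Raises ValueError if the preprocessor does not match (hard requirement).
    Returns a list of warnings for soft mismatches (prediction type).
    """
    cn_prediction, _, cn_preprocessor = version_name.partition("-")
    # Hard requirement: the control image must come from the matching preprocessor.
    if preprocessor != cn_preprocessor:
        raise ValueError(
            f"preprocessor {preprocessor!r} does not match ControlNet {cn_preprocessor!r}"
        )
    warnings = []
    # Soft requirement: a prediction-type mismatch may still run but can degrade results.
    if base_prediction != cn_prediction:
        warnings.append(
            f"base model is {base_prediction}-prediction, ControlNet is "
            f"{cn_prediction}-prediction; results may suffer"
        )
    return warnings


print(check_compatibility("eps-openpose", "openpose", "v"))
```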

Where are the other ControlNets?

Find them here!

Acknowledgments

Thank you to nieta and Qinglong Shengzhe for their help in creating the dataset for this model.

Comments

As I also wrote on HF: could this be provided in diffusers format like the other ControlNets? That would make it easier for software like SD.Next to download the models.

Great model.

Just put the file in stable-diffusion-webui/extensions/sd-webui-controlnet/models/ControlNet. Then, after opening the WebUI, open ControlNet's OpenPose option and you'll find a new model there.
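For anyone who prefers to script that step, here is a minimal sketch that copies the downloaded file into the folder named in the comment. The folder path is taken verbatim from the comment and is an assumption about your install; some setups keep ControlNet models in a different folder, so adjust `CONTROLNET_SUBDIR` if needed. The function name is hypothetical.

```python
import shutil
from pathlib import Path

# Folder copied from the comment above; your install may use a different models folder.
CONTROLNET_SUBDIR = Path("extensions") / "sd-webui-controlnet" / "models" / "ControlNet"


def install_controlnet(model_file: Path, webui_root: Path) -> Path:
    """Copy a ControlNet .safetensors file into the WebUI ControlNet models folder."""
    if model_file.suffix != ".safetensors":
        raise ValueError(f"expected a .safetensors file, got {model_file.name}")
    target_dir = webui_root / CONTROLNET_SUBDIR
    target_dir.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    destination = target_dir / model_file.name
    shutil.copy2(model_file, destination)  # copy2 also preserves file timestamps
    return destination
```

Call it with your own paths, e.g. `install_controlnet(Path("noobaiXLControlnet_openposeModel.safetensors"), Path("stable-diffusion-webui"))`, then restart the WebUI or refresh the ControlNet model list so the new model appears.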

MY FAVORITE

Upside-down poses don't seem to be recognized correctly. Are there any useful suggestions?

Flip the image?
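One way to read the "flip" suggestion is to rotate the pose by 180 degrees so the figure is upright, generate, and then rotate the result back (for example with Pillow's `Image.rotate(180)`). A 180-degree rotation, rather than a mirror flip, keeps the figure's left and right limbs on the correct sides. The sketch below applies that rotation to keypoints, assuming the OpenPose-style flat layout `[x0, y0, c0, x1, y1, c1, ...]` in continuous pixel coordinates; the function name is hypothetical.

```python
def rotate_keypoints_180(flat: list[float], width: float, height: float) -> list[float]:
    """Rotate flat (x, y, confidence) keypoints by 180 degrees about the image center.

    Assumes continuous pixel coordinates with the origin at the top-left corner.
    Keypoints with confidence 0 (undetected) are left unchanged.
    """
    if len(flat) % 3:
        raise ValueError("expected (x, y, confidence) triples")
    out: list[float] = []
    for i in range(0, len(flat), 3):
        x, y, c = flat[i:i + 3]
        if c == 0:
            out.extend((x, y, c))  # keep missing points as (0, 0, 0)-style placeholders
        else:
            out.extend((width - x, height - y, c))  # (x, y) -> (W - x, H - y)
    return out
```

Applying the rotation twice returns the original pose, which is why the generated image can simply be rotated back afterwards.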

So is it solved, bro?

It depends on which UI you use, but many UIs offer some form of additional input for ControlNets, either built in or through a community-made extension.

In ComfyUI I like to use the "CLIP Text Encode ControlNet" node, which lets you add further conditioning (prompting) to the conditioning that goes into the "Apply ControlNet" node alongside the pose image. That way, more concise information can be added to help the ControlNet produce the targeted result. So with a pose ControlNet, when I want to generate an upside-down pose, I add text describing that pose, because ControlNets struggling with these kinds of poses is not a rare phenomenon, especially when the checkpoint used may not have the best training data or understanding of the concept.

It will also help improve results in certain fringe cases where limbs overlap and the ControlNet gets confused, swapping which type of limb is which or contorting limbs in weird ways. Adding a simple "*insert limb* behind *insert other limb*" as described above has resolved that problem in many cases, or at least mitigated it.
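The extra conditioning described above is just text, so it can be assembled programmatically. Here is a minimal sketch, assuming you paste or feed the resulting string into the text-encode node that goes into "Apply ControlNet"; the helper name and the example tags are hypothetical.

```python
def pose_hint_prompt(base: str, pose_tags: list[str], behind_pairs: list[tuple[str, str]]) -> str:
    """Build a supplementary prompt: base text, pose description, then limb-order hints.

    behind_pairs holds (limb, other_limb) tuples rendered as "<limb> behind <other_limb>".
    """
    parts = [base.strip()] if base.strip() else []
    parts += [tag.strip() for tag in pose_tags if tag.strip()]  # drop empty tags
    parts += [f"{limb} behind {other}" for limb, other in behind_pairs]
    return ", ".join(parts)


print(pose_hint_prompt("gymnast on a beach", ["upside-down", "handstand"],
                       [("left arm", "right leg")]))
# gymnast on a beach, upside-down, handstand, left arm behind right leg
```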

Could you train a DW OpenPose version?

It seems to work for me in Easy Diffusion (v3.5 engine) with this exact ControlNet model.

The best OpenPose model I've tried for SDXL.

Very nice model. Can it be used with other Illustrious models?

Any idea if it works?

Can I use this with Pony models?

I used it with a v-pred checkpoint and it didn't seem to work well. I wonder if this is only for eps-prediction?

Will you release a version that works with VP (v-prediction)?

Could you please do the animal OpenPose model for this as well?

Where is the YAML file? I can't find it anywhere and I can't get this to work without it.

No idea what you're using, but you don't need any YAML files for this to work in ComfyUI.

This is amazing!

Can someone help me install it?

Sorry, but I only see one version available for download. Where are the other versions? Thanks!

My model type is eps and needs to match, but where can I choose the OpenPose version type? The only version here doesn't match.

Files

noobaiXLControlnet_openposeModel.safetensors

Mirrors

noobaiXLControlnet_openposeModel.safetensors

openpose_pre.safetensors

diffusion_pytorch_model.safetensors