Neural Repair & Portable Checkpoints Lora Type.

Hello! I'm back with something much juicier than ever!

Originally, I planned to release more Samplers (and I will), but I pivoted to solve a critical flaw I found in the community: Many popular merged checkpoints have a corrupted Layer 11.

This translates to:

❌ Text Encoder errors (NaNs).

❌ Poor LoRA compatibility.

❌ Massive information loss.

And here's the solution: [Anti-Nans + RAM Cleaner] Uh-huh... The LoRA repair method failed, so I engineered a Runtime Fixer. Just paste the provided script into a new cell in your Colab/Notebook, run it before the WebUI, and you are good to go.

Herrscher Shield: Scans Layer 11 and eliminates NaNs in RAM instantly. No need to download fixed checkpoints.

AGGA Optimizer: Aggressively cleans RAM to prevent Colab crashes.

Okay, now let's talk about these Loras (Total / Duplo / Ultra):

Since I couldn't "fix" the NaNs inside a LoRA, I decided to extract the soul of the best models. I managed to convert 6GB checkpoints into lightweight 500MB-1GB files.

These allow you to inject the exact style/knowledge of a model into any other refined model without downloading the full base checkpoint every time.

In conclusion: Now you have a tool for every need:

Do you just want a refined DMD? -> Total.

Do you want the information and style? -> Duplo.

Do you want it all? It'll be a Copy -> Ultra.

🟢 TOTAL (Concept Injector):

What it extracts?: Text Encoders +

attn2(Cross-Attention).Visual Only: Style extraction only (UNet).

What does it do?: Associates words with concepts.

Total knows that "Miku" means "Teal hair, long pigtails".

Result: Corrects what is drawn, but the "brushstroke" remains from your base model.

🟡 DUPLO (Structure & Geometry):

What it extracts?: Text Encoders +

attn2+attn1(Self-Attention).What does it do?: Controls geometry and spatial composition.

attn1is where the "shape" of the style resides (eye size, body proportions, composition).Result: The image gets the structure of the source model (e.g., Pony), but the rendering (skin, lighting) is a hybrid.

Best for fixing anatomy while keeping your checkpoint's texture.

🔴 ULTRA (Full Replica):

What it extracts?: EVERYTHING (

attn,ff,proj,te).What does it do?: Copies the FeedForward (FF) layers too, which determine the Render Style (lighting, line weight, shading).

Result: A complete conversion. The base model visually disappears and becomes a perfect replica of the source.

⚠️ IMPORTANT VERSIONS & WARNINGS

🟡 Visual Only vs. Complete (Zip)

Visual (Online Gen Friendly): Use this for quick style transfer.

Complete (Zip): Includes the "Fixed" files that connect text properly. Use this for serious work.

🟡 Architecture Warning (IL Base) Some of these files are based on the Illustrious (IL) architecture.

High Steps Warning: Since it's based on IL, using Duplo/Ultra on high-step merges might cause artifacts or corruption.

Recommendation: Use them at LOW STEPS (0.18-0.2) or stick to Total if you are merging deeply.

Note: I fixed Duplo, but the IL & NoobAI base is sensitive. Treat it with care!

⚠️ Visual Only Usage Note: Don't be scared! Even though this is based on my DMD2 architecture:

It works perfectly at HIGH STEPS (20-30+) without burning (great for detailing).

It works perfectly at LOW STEPS (4-8) for speed.

Wink wink 😉

Description

Hi, I'm back with pixel art! This time I used a specific pixel art checkpoint as a base (I'll add it to the recommended resources since I can't recall the exact name right now), but I adapted it to my own workflow.

The main challenge is that Velvette doesn't do pixel art natively (I think that's the simplest proof that this method actually works). It was tricky because my previous workflow kept the DMD2 base at 100% (which translates to three LoRAs being way too strong for specific concept/style mini-checkpoints). However, just when I was about to give up, I decided to reduce the DMD2 strength to 70% (just like I did with Voxel). This made the LoRA work great at 16-18 steps, allowing for higher CFGs, but I wanted to achieve the same result with fewer steps without losing that intensity.

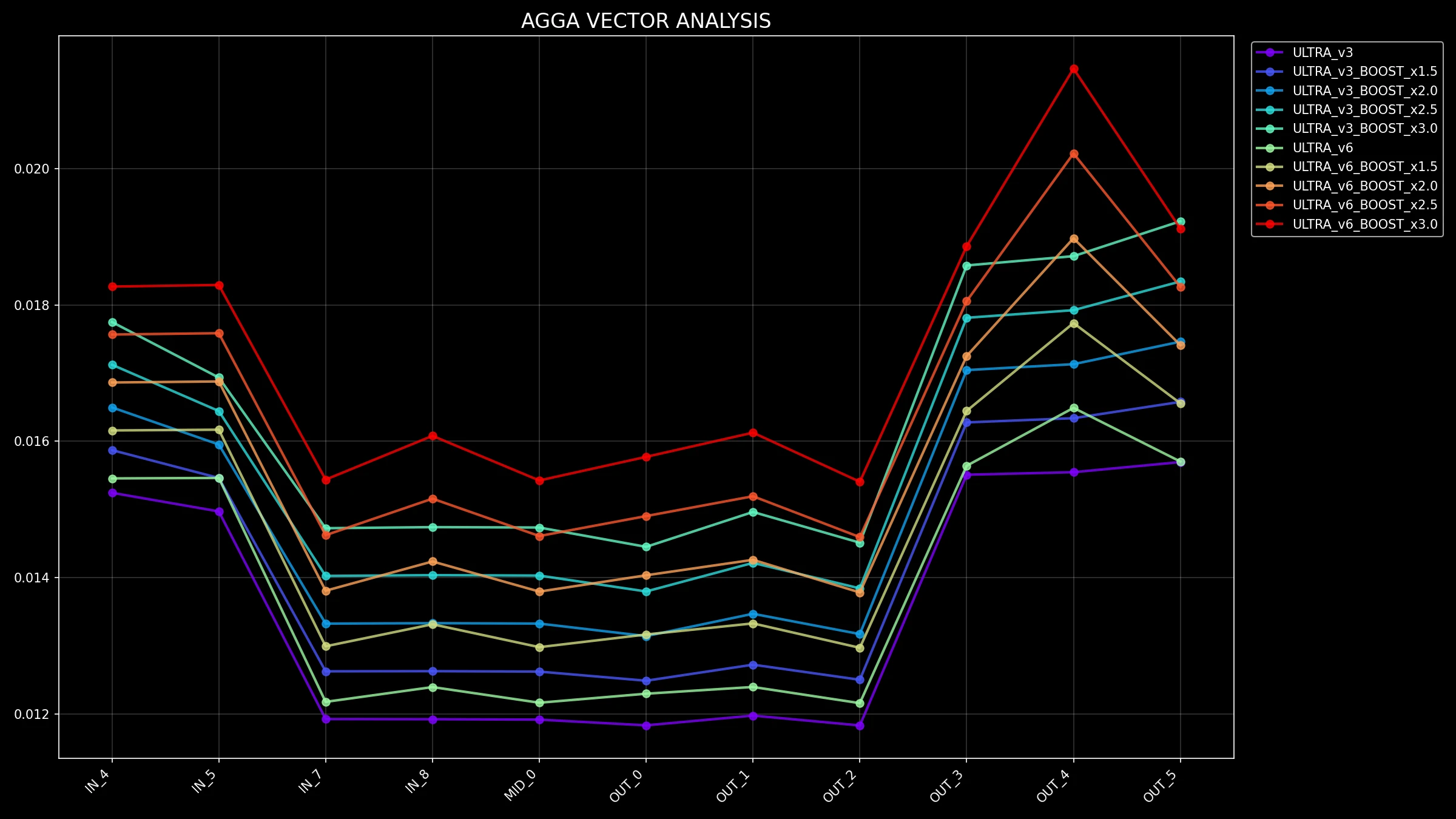

So, I started playing around with the math of the Up and Down vectors. I simply multiplied them directly, since "cooking" (re-training) an extraction taken from a checkpoint only introduces noise (I already tried that, and it just doesn't work the same).

In short: it was a really fun experiment, and now I have a new tool capable of slashing generation time from 16 steps down to just 6 steps for pixel art. It used to be complicated to pull off, but we are improving. Hope you enjoy it! xD

Details

Files

Available On (1 platform)

Same model published on other platforms. May have additional downloads or version variants.