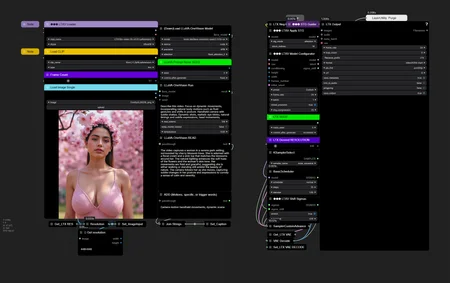

Simple I2V LTXV workflow

Comments (2)

Does one have to install anything else to get LTXV to work? I get this error when trying to generate a video:

LTXVModelModified.forward() missing 1 required positional argument: 'attention_mask'

EDIT: Okay, this only happens when I use PixArt-XL-2-1024-MS. T5XXL works fine.

Flash Attention 2 has been toggled on, but it cannot be used due to the following error: the package flash_attn seems to be not installed. Please refer to the documentation of https://huggingface.co/docs/transformers/perf_infer_gpu_one#flashattention-2 to install Flash Attention 2.

Which GPU models does your workflow work with? Or which nodes can be swapped out so that Flash Attention 2 isn't needed?
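If the text encoder is loaded through Hugging Face transformers, one possible workaround is to request Flash Attention 2 only when the `flash_attn` package is actually installed, and otherwise fall back to PyTorch's SDPA attention. This is a minimal sketch, assuming you control the `attn_implementation` argument passed to `from_pretrained`. Whether the nodes in this workflow expose that setting is an assumption, and the helper name `pick_attn_implementation` is hypothetical.

```python
import importlib.util


def pick_attn_implementation() -> str:
    """Hypothetical helper: choose an attention backend that can actually load."""
    # find_spec returns None when the top-level package is not installed,
    # so this check never raises ImportError.
    if importlib.util.find_spec("flash_attn") is not None:
        return "flash_attention_2"
    # Fall back to PyTorch's scaled_dot_product_attention path,
    # which needs no extra package (assuming the model supports it).
    return "sdpa"


if __name__ == "__main__":
    print(pick_attn_implementation())
```

The returned string could then be passed as, e.g., `AutoModel.from_pretrained(name, attn_implementation=pick_attn_implementation())` on a transformers version that supports that argument.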
