HUNYUAN | AllInOne

No need to buzz me. Feedback is much more appreciated. | last update: 06/03/2025

⬇️OFFICIAL Image To Video V2 Model is out!⬇️ COMFYUI UPDATE IS REQUIRED !

Get files here:

link 1 paste in: \models\clip_vision

link 2 or Link 3 paste in: \models\diffusion_models (pick the one that works best for you)
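To double check that the files landed where the instructions above say, a small script can help. This is only a sketch: the two folder names come from the text above, while the ComfyUI root path you pass in and the function name `missing_model_folders` are assumptions for illustration.

```python
from pathlib import Path

# Folder names taken from the instructions above.
EXPECTED_FOLDERS = ("models/clip_vision", "models/diffusion_models")


def missing_model_folders(comfy_root):
    """Return the expected folders that are absent or contain no files.

    comfy_root is the path of your own ComfyUI install (an assumption here).
    """
    root = Path(comfy_root)
    missing = []
    for rel in EXPECTED_FOLDERS:
        folder = root / rel
        # A folder counts as "ready" only if it exists and holds at least one file.
        has_files = folder.is_dir() and any(p.is_file() for p in folder.iterdir())
        if not has_files:
            missing.append(rel)
    return missing
```

Calling `missing_model_folders("ComfyUI")` (with your real install path) returns an empty list when both folders contain at least one file. It does not verify that the files are the right models.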

⚠️ I2V model got an update on 07/03/2025 ⚠️

These workflows have evolved over time through various tests and refinements,

thanks also to the huge contributions of this community.

Requirements, special thanks and credits are below.

Before commenting, please keep in mind:

The Advanced and Ultra workflows are intended for more experienced ComfyUI users.

If you choose to install unfamiliar nodes, you take full responsibility.

I make these workflows for fun, randomly, in my free time.

Most issues you might encounter have probably already been widely discussed and solved on Discord, Reddit, and GitHub, and are addressed in the description of the workflow you're using, so please read carefully and consider doing some searches before commenting.

I started this alone, but now there's a small group of people contributing their passion, experiments and cool findings. Credits below.

Thanks to their contributions, this small project continues to grow and improve for everyone's benefit.

- Fast Lora may work best when combined with other Loras, allowing you to reduce the number of steps.

- Wave Speed can significantly reduce inference time but may introduce artifacts.

- Achieving good results requires testing different settings. Default configurations may not always work, especially when using LoRAs, so experiment to find the settings that fit best. THERE ARE NO UNIVERSAL SETTINGS THAT WORK FOR EVERY CASE.

- You can also try switching to a different sampler/scheduler to see which works best for your case. Try UniPC simple, LCM simple, DDPM, DPMPP_2M beta, Euler normal/simple/beta, or the new "Gradient_estimation".

(Samplers/schedulers need to be set for each stage and mode; they are not settings found in the console)

Legend to help you choose the right workflow:

✔️ Green check = UP TO DATE version for its category.

Includes the latest settings, tricks, updated nodes and samplers; works on the latest ComfyUI.

🟩🟧🟪 Colors = Basic / Advanced / Ultra

❌ = Based on deprecated nodes; you'll have to fix it yourself if you really want to use it.

Quick Tips:

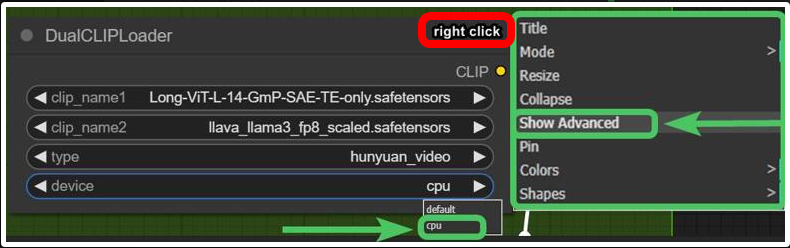

Low Vram? Try this:

and/or try using the GGUF models available here.


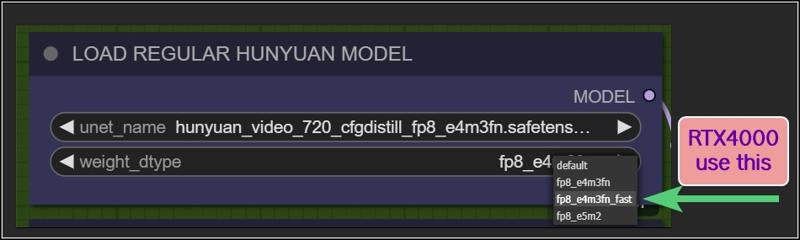

RTX 4000? Use this:

Want more tips?

Check my article: https://civarchive.com/articles/9584

All workflows available on this page are designed to prioritize efficiency, delivering high-quality results as quickly as possible.

However, users can easily customize settings through intuitive, fast-access controls.

For those seeking ultra-high-quality videos and the best output this model can achieve, adjustments may be necessary, such as increasing steps, modifying resolutions, reducing TeaCache / WaveSpeed influence, or disabling Fast LoRA entirely.

Personally, I aim for an optimal balance between quality and speed. All example videos I share follow this approach, utilizing the default settings provided in these workflows. While I may make minor adjustments to aspect ratio, resolution, or step count depending on the scene, these settings generally offer the best all-around performance.

WORKFLOWS DESCRIPTION:

🟩"I2V OFFICIAL"

Requires:

llava_llama3_vision: ➡️Link paste in: \models\clip_vision

Model: ➡️Link or ➡️Link (pick the one that works best for you)

paste in: \models\diffusion_models

https://github.com/pollockjj/ComfyUI-MultiGPU

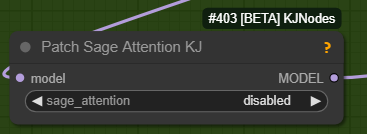

The following node is for SAGE ATTENTION, if you don't have it installed just bypass it:

🟩"BASIC All In One"

Uses native Comfy nodes. It has 3 modes of operation:

T2V

I2V (sort of): an image is repeated for x frames and sent to latent, with a denoise level balanced to preserve the structure, composition, and colors of the original image. I find this approach highly useful, as it saves inference time and allows for better guidance toward the desired result. Obviously this comes at the expense of general motion: lowering the denoise level too much makes the final result static, with minimal movement. The denoise threshold is up to you to decide based on your needs.

There are other methods to achieve a more accurate image-to-video process, but they are slow. I didn't even include a negative prompt in the workflow because it doubles the waiting time.

V2V: same concept as I2V above.
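To make the "sort of" I2V idea above more concrete, here is a toy sketch of the trade-off between denoise and motion. It is not what the workflow nodes actually run: plain Python lists stand in for the latent, the linear blend with Gaussian noise is an assumed simplification, and the name `image_to_noisy_frames` is hypothetical.

```python
import random


def image_to_noisy_frames(image, num_frames, denoise, seed=0):
    """Repeat one image for num_frames and blend every copy with random noise.

    image: flat list of floats standing in for an encoded image (toy stand-in).
    denoise: 0.0 keeps the image untouched, 1.0 replaces it with pure noise.
    """
    if not 0.0 <= denoise <= 1.0:
        raise ValueError("denoise must be between 0.0 and 1.0")
    rng = random.Random(seed)
    frames = []
    for _ in range(num_frames):
        # Each frame gets its own noise, so higher denoise leaves more room for change.
        noise = [rng.gauss(0.0, 1.0) for _ in image]
        frames.append(
            [(1.0 - denoise) * px + denoise * n for px, n in zip(image, noise)]
        )
    return frames
```

With `denoise=0.0` every frame is an exact copy of the image, which mirrors the static result described above. Higher values leave the sampler more freedom at the cost of fidelity to the source image.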

Requires:

https://github.com/chengzeyi/Comfy-WaveSpeed

https://github.com/pollockjj/ComfyUI-MultiGPU

🟧 "ADVANCED All In One TEA ☕"

An improved version of the BASIC All In One TEA ☕, with additional methods to upscale faster, plus a lightweight captioning system for I2V and V2V that consumes only an additional 100 MB of VRAM.

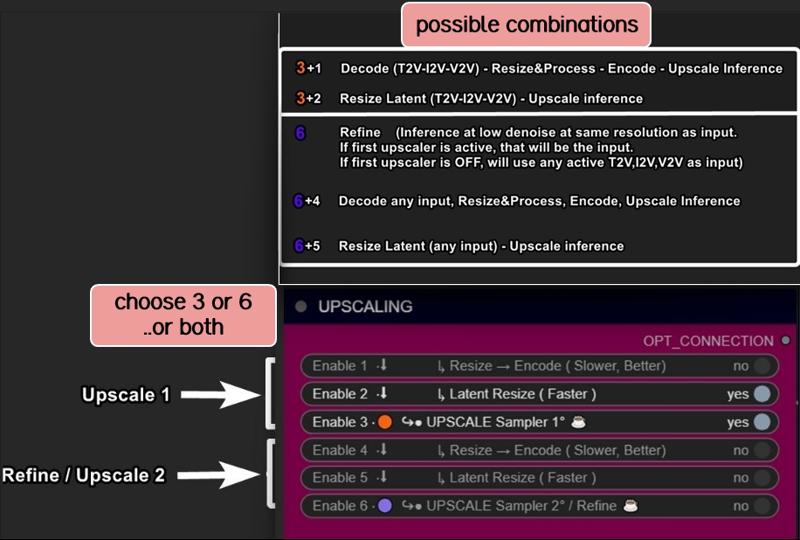

Upscaling can be done in three ways:

Upscaling using the model. Best Quality. Slower (Refine is optional)

Upscale Classic + Refine. It uses a special video upscaling model that I selected after testing a crazy number of video upscaling models. It is one of the fastest and gives results with good contrast and well-defined lines. It's certainly not the optimal choice when used alone, but combined with the REFINE step it produces well-defined videos. This option is a middle ground in terms of timing between the first and third methods.

Latent upscale + Refine. This is my favorite. Fastest. Decent.

This method is essentially the same as the first, which is basically V2V, but at slightly lower steps and denoise.

Three different methods, more choices based on preferences.

Requirements:

-ClipVitLargePatch14

download model.safetensors

rename it as clip-vit-large-patch14_OPENAI.safetensors

paste it in \models\clip

paste it in \models\ESRGAN\

-LongCLIP-SAE-ViT-L-14

-https://github.com/pollockjj/ComfyUI-MultiGPU

-https://github.com/chengzeyi/Comfy-WaveSpeed

Update Changelogs:

|1.1|

Faster upscaling

Better settings

|1.2|

removed redundancies, better logic

fixed some errors

added extra box for the ability to load a video and directly upscale it

|1.3|

New prompting system.

Now you can copy and paste any prompt you find online and this will automatically modify the words you don't like and/or add additional random words.

Fixed some latent auto switch bugs (these gave me serious headaches)

Fixed seed issue, now locking seed will lock sampling

Some Ui cleaning

|1.4|

Batch Video Processing – Huge Time Saver!

You can now generate videos at the bare minimum quality and later queue them all for upscaling, refining, or interpolating in a single step.

Just point it to the folder where the videos are saved, and the process will be done automatically.

Added Seed Picker for Each Stage (Upscale/Refine)

You can now, for example, lock the seed during the initial generation, then randomize the seed for the upscale or refine stage.

More Room for Video Previews

No more overlapping nodes when generating tall videos (don't exaggerate with the ratio, obviously)

Expanded Space for Sampler Previews

Enable preview methods in the manager to watch the generation progress in real time.

This allows you to interrupt the process if you don't like where it's going.

(I usually keep previews off, as enabling them takes slightly longer, but they can be helpful in some cases.)

Improved UI

Cleaned up some connections (noodles), removed redundancies, and enhanced overall efficiency.

All essential nodes are highlighted in blue and emphasized right below each corresponding video node, while everything else (backend) like switches, logic, mathematics, and things you shouldn't touch have been moved further down. You can now change settings or replace nodes with those you prefer way more easily.

Notifications

All nodes related to the browser notifications sent when each step is completed, which some people find annoying, have been moved to the very bottom and highlighted in gray. So, if they bother you, you can quickly find them, select them, and delete them
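The batch video processing introduced in 1.4 boils down to pointing the workflow at a folder and queuing every video in it. As a rough sketch of that first step outside ComfyUI, the snippet below collects video files from a folder. The extension list and both function names are assumptions for illustration, not taken from the workflow.

```python
from pathlib import Path

# Assumed set of video extensions; adjust it to whatever your save nodes produce.
VIDEO_EXTENSIONS = {".mp4", ".webm", ".mov", ".mkv"}


def filter_videos(names):
    """Keep only file names with a known video extension, sorted by name."""
    return sorted(n for n in names if Path(n).suffix.lower() in VIDEO_EXTENSIONS)


def list_videos(folder):
    """Return the video file names found directly inside folder (no recursion)."""
    root = Path(folder)
    if not root.is_dir():
        return []
    return filter_videos(p.name for p in root.iterdir() if p.is_file())
```

Sorting keeps the queue order predictable, so a batch can be resumed from a known file if it gets interrupted.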

|1.5|

general improvements, some bug fixes

NB:

These two warnings in the console are completely fine. Just ignore them.

WARNING: DreamBigImageSwitch.IS_CHANGED() got an unexpected keyword argument 'select'

WARNING: SystemNotification.IS_CHANGED() missing 1 required positional argument: 'self'

🟪 "AIO | ULTRA "

Embrace This Beast of Mass Video Production!

This version is for the truly brave professionals and unlocks a lot of possibilities.

Plus, it includes settings for higher quality, sharper videos, and even faster speed, all while being nearly glitch-free.

All older workflows have also been updated to minimize glitches, as explained in my previous article.

From Concept to Creation in Record Time!

We are achieving world-record speed here, but at the cost of some complexity. These workflows are becoming increasingly intimidating despite efforts to keep them clean and hide all automations in the back-end as much as possible.

That's why I call this workflow ULTRA: a powerhouse for tenacious Hunyuan users who want to achieve the best results in the shortest time possible, with all tools at their fingertips

Key Features and Improvements:

Handy Console: Includes buttons to activate stages with no need to connect cables or navigate elsewhere. Everything is centralized in one place (Control Room), and functions can be accessed with ease.

T2V, I2V*, V2V, T2I, I2I Support: Seamless transitions between different workflows.

*I2V: an image is repeated for x frames and sent to latent. Official I2V model is not out yet. There's a temporary trick to do I2V here, which requires Kijai's nodes.

Wildcards + Custom Prompting Options: Switch between Classic prompting with wildcards or add random words in a dedicated box, with automatic customizable word swapping or censoring.

Video Loading: Load videos directly into upscalers/refiners and skip the initial inference stage.

Batch Video Processing: Upscale or Refine multiple videos in sequence by loading them from a custom folder.

Interpolation: Smooth frame transitions for enhanced video quality.

Random Character LoRA Picker: Includes 9 LoRA nodes in addition to fixed LoRA loaders.

Upscaling Options: Supports upscaling, double upscaling, and downscaling processes.

Notifications: Receive notifications for each completed stage, organized in a separate section for easy removal if necessary.

Lightweight Captioning: Enables captioning for I2V and V2V with minimal additional VRAM usage (only 100MB).

Virtual Vram support.

Use the GGUF model with Virtual VRAM to create longer videos or increase resolution.

Hunyuan/Skyreel (T2V) quick merges slider

Switch from Regular Model to Virtual Vram / GGUF with a slider

Latent preview to cut down upscaling process.

A dedicated LoRA line exclusively for upscalers, toggled via a dedicated button.

RF edit loom

Upscale using Multiplier or "set to longest size" target

a button to toggle Wave Speed and FastLoRA as needed for upscaling only.

UI improvements based on user feedback
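For the "set to longest size" target mentioned in the list above, the arithmetic can be sketched as follows. Snapping the result down to a multiple of 16 is an assumption made for this example, and both function names are hypothetical. The workflow's own nodes may round differently.

```python
def scale_for_longest_side(width, height, target_longest):
    """Return the multiplier that makes the longest side equal target_longest."""
    if width <= 0 or height <= 0 or target_longest <= 0:
        raise ValueError("width, height and target_longest must be positive")
    return target_longest / max(width, height)


def resize_to_longest_side(width, height, target_longest, multiple=16):
    """Resize so the longest side hits the target, keeping the aspect ratio.

    Both sides are snapped down to a multiple of `multiple` (assumed value: 16).
    """
    scale = scale_for_longest_side(width, height, target_longest)

    def snap(value):
        # Never go below one full block, even for very small inputs.
        return max(multiple, int(value * scale) // multiple * multiple)

    return snap(width), snap(height)
```

For example, the default 480x320 resolution with a longest-side target of 960 gives a multiplier of 2.0 and a final size of 960x640.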

- Sequential Upscale Under 1x / Double Upscaling

You can now downscale using the upscale process and then re-upscale with the refiner, or customize upscaler multipliers to upscale 2 times.

New Functionality:

The upscale value range now includes values as low as 0.5.

Two sliders are available: one for the initial upscale and another for the refiner (essentially another sampler, always V2V).

Applications:

Upscale, Refine or combine the two

Upscale fast (latent resize + sampler) or accurate (resize + sampler)

Refine (works the same as upscale, can be used alone or as an auxiliary upscaler)

Double upscaling: Start small and upscale significantly in the final stage.

Downscale and re-upscale: Deconstruct at lower resolution and reconstruct at higher quality.

Combos: Upscale & Refine / Downscale & Upscale
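The combos above are just multipliers applied one after another, one per slider. A minimal sketch of how the resolution moves through the stages, assuming plain rounding (the real nodes may round to other boundaries) and a hypothetical function name:

```python
def staged_sizes(width, height, multipliers):
    """Return the resolution after each stage, starting with the input size.

    multipliers: one value per stage, e.g. [upscale, refiner].
    Values below 0.5 are rejected because the text above gives 0.5 as the lowest value.
    """
    sizes = [(width, height)]
    for m in multipliers:
        if m < 0.5:
            raise ValueError("multiplier below the 0.5 minimum mentioned above")
        width, height = round(width * m), round(height * m)
        sizes.append((width, height))
    return sizes


# Double upscaling: start small, upscale twice.
print(staged_sizes(480, 320, [1.5, 1.5]))
# Downscale and re-upscale: deconstruct at low resolution, rebuild higher.
print(staged_sizes(480, 320, [0.5, 3.0]))
```

Both examples use the 480x320 default resolution mentioned in the 1.4 changelog. The multiplier values themselves are only illustrative.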

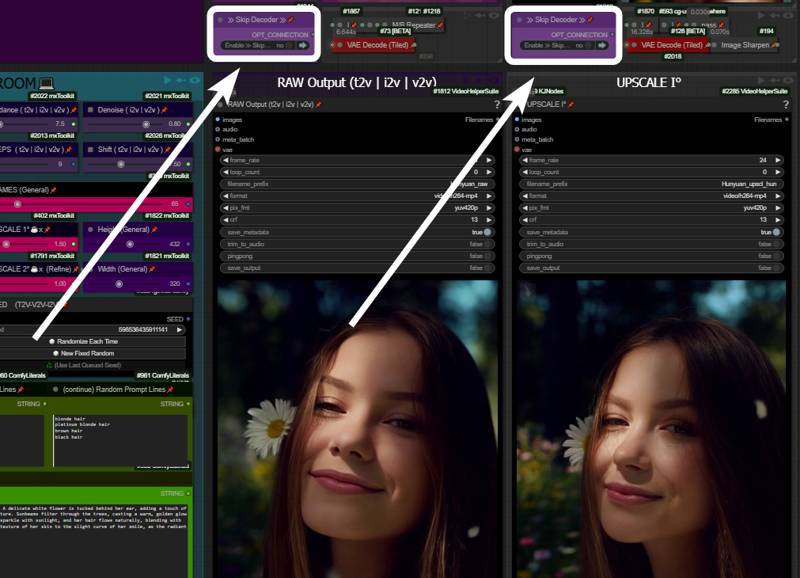

- Skip Decoders/Encoders Option

Save significant time by skipping raw decoding for each desired stage and going directly to the final result.

How It Works: If your prompt is likely to produce a good output and the preview method ("latent2RGB") is active in the manager, you can monitor the process in real-time. Skip encoding/decoding by working exclusively in the latent space, generating and sending latent data directly to the upscaler until the process completes.

Example:

A typical medium/high-quality generation might involve:

Resolution: ~ 432x320

Frames: 65

One Upscale: 1.5x (to 640x480)

Total Time: 162 seconds

In this example case, by activating the preview in the manager and skipping the first decoder (the preview before upscaling), you can save ~30 seconds. The process now takes 133 seconds instead of 162.

Bypassing additional decoders (e.g., upscale further or refinement) can save even more time.
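The numbers in the example above can be checked with a few lines of arithmetic. This is only a sanity check on the quoted figures, with a hypothetical helper name. Note that 432x320 at 1.5x is exactly 648x480, so the 640x480 quoted above is approximate (the starting resolution is itself marked with "~").

```python
def time_saved(full_seconds, shortened_seconds):
    """Return the absolute and relative time saved between two runs."""
    saved = full_seconds - shortened_seconds
    percent = saved / full_seconds * 100
    return saved, percent


# Timings quoted in the example above.
saved, percent = time_saved(162, 133)
print(f"Skipping the first decoder saves {saved} s ({percent:.1f}% of the run)")

# Exact 1.5x upscale of the quoted starting resolution.
exact_width, exact_height = 432 * 1.5, 320 * 1.5
print(f"Exact upscaled size: {exact_width:.0f}x{exact_height:.0f}")
```

The saving works out to 29 seconds, a little under a fifth of the full run, which matches the "~30 seconds" quoted above.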

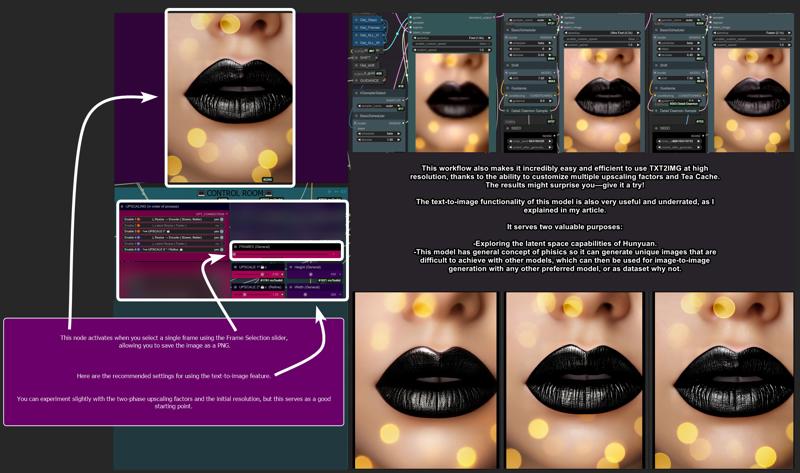

- Image Generation (T2I and I2I)

Explore the HUN latent space with these image generation capabilities.

When the number of frames is set to 1, the image node activates automatically, allowing the image to be saved as a PNG.

Use the settings shown here for the best results:

T2I Example Gallery: Hunyuan Showcase

- Structural Changes / Additional Features

Motion Guider for I2V

This feature enhances motion for image-to-video workflows, lowering the chance of getting a static video as a result.

9 Random Character Loras Loader: Previously limited to 5, now expanded to 9.

Random Character Lora Lock On/Off:

By default, each seed is set to correspond to a random Lora

(e.g., seed n° 667 = Lora n° 7).

Now you can unlock this "character Lora lock on seed" and regenerate the same video with a different random Lora while maintaining the main seed.
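The lock described above can be pictured as a choice between deriving the Lora slot from the main seed or from a separate source. The sketch below is hypothetical: the page does not document how the workflow maps a seed to a slot, so this code will not necessarily reproduce "seed 667 = Lora 7". Only the count of 9 slots comes from the text.

```python
import random

NUM_CHARACTER_LORAS = 9  # from the text above: 9 random character Lora loaders


def pick_character_lora(seed, locked=True, lora_seed=None):
    """Return a Lora slot number between 1 and NUM_CHARACTER_LORAS.

    locked=True: the slot is derived from the main seed, so the same seed
    always selects the same Lora.
    locked=False: the slot comes from lora_seed if given, else from fresh
    randomness, while the main seed stays free to drive the video itself.
    """
    if locked:
        rng = random.Random(seed)
    elif lora_seed is not None:
        rng = random.Random(lora_seed)
    else:
        rng = random.Random()
    return rng.randint(1, NUM_CHARACTER_LORAS)
```

With the lock on, `pick_character_lora(667)` returns the same slot on every call. With the lock off, the main seed can stay at 667 while the slot changes between runs.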

Clarifications:

Let’s call things by their real names: "Refine" and "Upscale" are both samplers here, each optimized for specific stages:

Upscale: Higher steps/denoise, fast results, balanced quality.

Refine: Lower steps/denoise, focused on fixing issues and enhancing details.

Refine can work alone, without upscaling, to address small issues or improve fine details.

UI Simplification:

The "classic upscale" is now replaced by a faster and better-performing resize + sharpness operation and hidden in the back-end to save space.

Frame Limit Issue (101+ Frames):

Generating more than 101 frames with latent upscale can cause problems. To address this, I added an option to upscale videos before switching to latent processing.

- Bug Fixes

Latent Upscale Change:

Latent upscaling now uses bicubic interpolation instead of nearest-exact, which performs better based on testing.

"Cliption" Bug Fixed

201-Frame Fix:

Generating 201-frame perfect loops caused artifacts with latent upscale. Switching to "resize" via the pink console buttons now resolves this issue.

- Performance and other infos:

Once you master it, you won’t want to go back. This workflow is designed to meet every need and handle every case, minimizing the need to move around the board too much. Everything is controlled from a central "Control Room."

Traditionally, managing these functions would require connecting/disconnecting cables or loading various workflows. Here, however, everything is automated and executed with just a few button presses.

Default settings (e.g., denoise, steps, resolution) are optimized for simplicity, but advanced users can easily adjust them to suit their needs.

-Limitations:

No Audio Integration:

While I have an audio-capable workflow, it doesn’t make sense here. Audio should be processed separately for professional results.

No Post-Production Effects:

Effects like color correction, filmic grain, and other post-production enhancements are left to dedicated editing software or workflows. This workflow focuses on delivering a pure video product.

Interpolation Considerations:

Interpolation is included here. I set up the fastest one I could find, not necessarily the best one. For best results, I typically use Topaz for both extra upscaling and interpolation after processing, but it is up to the user to choose their favourite interpolation method or final upscaling if needed.

Requirements:

ULTRA 1.2:

-Tea cache

ULTRA 1.3:

-UPDATE TO LATEST COMFY IS NEEDED!

-Wave Speed

-ClipVitLargePatch14

ULTRA 1.4 / 1.5:

-UPDATE TO LATEST COMFY IS NEEDED!

https://github.com/pollockjj/ComfyUI-MultiGPU

https://github.com/chengzeyi/Comfy-WaveSpeed

https://github.com/city96/ComfyUI-GGUF

https://github.com/logtd/ComfyUI-HunyuanLoom

https://github.com/kijai/ComfyUI-VideoNoiseWarp

NB:

The following warnings in the console are completely fine. Just ignore them:

WARNING: DreamBigImageSwitch.IS_CHANGED() got an unexpected keyword argument 'select'

WARNING: SystemNotification.IS_CHANGED() missing 1 required positional argument: 'self'

Update Changelogs:

|1.1|

Better color scheme to easily understand how upscaling stages works

Check images to understand

|1.2|

Wildcards.

You can now switch from the Classic Prompting system (with wildcards allowed)

to the fancy one previously available

|1.3|

An extra wavespeed boost kicks in for upscalers.

Changed samplers to native Comfy: no more TTP, no more interrupt error messages.

Tea cache is now a separate node.

Fixed a notification timing error and text again.

Replaced a node that was causing errors for some users: "if any" now swaps with "eden_comfy_pipelines."

Added SPICE, an extra-fast LoRA toggle that activates only in upscalers to speed up inference at lower steps and reduce noise.

Added Block Cache and Sage to the setup. Users who have them working can enable them.

Changed the default sampler from Euler Beta to the new "gradient_estimation" sampler introduced in the latest Comfy update.

Added a video info box for each stage (size, duration).

Removed "random lines."

Adjusted default values for general use.

Upscale 1 can now function as a refiner as well.

When pressing "Latent Resize" or "Resize," it will automatically activate the correct sampler.

A single-frame image is now displayed in other stages as well (when active).

Thanks to all the users who contributed on Discord to this workflow's improvements!

|1.4|

Virtual Vram support

Hunyuan/Skyreel quick merges slider

Toggle to switch from Regular Model to Virtual Vram / GGUF

Longer vids / Higher Res / extreme upscaling now possible

Default res changed to 480x320, which looks like a balanced middle ground for quick low-res vids; most users should be OK with that.

Latent preview for skip preview mode

Switch toggle to enable/disable Exclusive LoRA for upscalers

RF edit loom

V2V loading time improved

Upscale to longest size target

Fixed slider upscale mismatch

info node moved

clean up and fixes

better settings for general use

upscale one can now use the optional "resize to longest size" slider

added extra wave speed toggle for upscalers

added exclusive loras line for upscalers

general fixes

UI improvement based on user feedback

fixed fast lora string issue on bypass in upscalers

more cleaning

changed exclusive loras for upscalers again: main fast lora is NOT going to pass through that line, since it already has a separate toggle (upscale with extra fast lora), previously called SPICE FOR UPSCALING.

fixed output node size for videos

moved resize by "longest size" toggle in extra menu

added extra wave speed toggle

control room is finished.. for now. I don't want to stress Aidoctor further. He already did a great job

lower fast lora default value now to 0.4

fixed VIDEO BATCH LOADING

|1.5|

general improvements, UI improvements, some bug fixes

leap fusion support

Go With The Flow support

Bonus TIPS:

Here is an article with all the tips and tricks I'm writing up as I test this model:

https://civarchive.com/articles/9584

If you struggle to use my workflows for any reason, you can at least refer to the article above. You will get a lot of precious quality-of-life tips to build and improve your Hunyuan experience.

All the workflows labeled with an ❌ are OLD and highly experimental; they rely on Kijai nodes that were released at a very early stage of development.

If you want to explore those, you need to fix them yourself, which should be pretty easy.

CREDITS

Everything I do, I do in my free time for personal enjoyment.

But if you want to contribute,

there are people who deserve WAY more support than I do,

like Kijai.

I’ll leave his link,

if you’re feeling generous go support him.

Thanks!

Last but not least:

Thanks to this community, especially those who have given me advice and experimented with my workflows, helping improve them for everyone.

Special thanks to:

https://civarchive.com/user/galaxytimemachine

for their peculiar and precise method of operation in finding the best settings and for all the tests conducted.

https://civarchive.com/user/TheAIDoctor

for his brilliance and for dedicating his time to create and modify special nodes for this workflow madness! such an incredible person.

and

https://github.com/pollockjj/ComfyUI-MultiGPU

Also special thanks to:

Tr1dae

for creating HunyClip, a handy tool for quick video trimming. If you work with heavy editing software like DaVinci Resolve or Premiere, you'll find this tool incredibly useful for fast operations without the need to open resource-intensive programs.

Check it out here: [link]

Have fun

Comments (121)

Tried the Hunyuan I2V model. Seems like the Loras need to be retrained to do some of the more complex actions. I can't even get the character to do a simple walk. WAN is much ahead in terms of i2v right now

https://civitai.com/articles/9584

check new Image to Video chapter

@LatentDream I'm not sure what you want me to look for there? I have no issues with the workflow itself, just the end results are disappointing compared to WAN.

@Melty1989 have you tried the new I2V model released a few hours ago?

check my latest workflow

@LatentDream I'm referring to the Official Hunyuan I2V yes. I stated as such in my initial comment. I am using your latest "official I2V" workflow. I even saw the little 1.1 update you did.

for the moment, the results are really not pretty, we're a long way from wan2.1 in terms of image to video.

you may want to redownload it,

i made a stupid mistake and loras were not connected properly.

Sorry... is Alpha 🤦

@LatentDream I'm not talking about the workflow...but about the model in general, which doesn't reproduce faces correctly.

@dominic1336756 same here

i keep getting "Sizes of tensors must match except in dimension 0. Expected size 841 but got size 266 for tensor number 1 in the list." i updated comfyui and everything, but to no avail, it stops at the text box

comfy update is needed. you done that?

@LatentDream yes, the issue was the clipvision, for some reason the one you provided didn't work, so i used the clip_vision_h.safetensors, i don't know why, but my comfy in particular can be a bitch, probably some bug with my local installation

@souzahp11321 LOL ok... if works then... 🤷

Works well, thanks for creating this. I get better results by bypassing wavespeed. Is there a way to use sage attention in this workflow?

yes the sage nodes are there, you probably need to set them up or unbypass them.

check the setup section

I think I need a video guide for Advance workflow, I don't know how to use I2V, I'm getting this error:

VAEDecodeTiled

'NoneType' object is not subscriptable

which vae did you use?

I did my waiting... 12 years of it... In Azkaban!

Missing Node Types

When loading the graph, the following node types were not found

TeaCacheHunyuanVideoSampler

sometimes i need to delete my vast ai instance, and sometimes when i reinstalled its working, sometimes not, please help me, i enjoy creating with your workflow but got a serious problem i don't know how i managed to fix it sometimes not.

ok, little update: managed to get it working. For anybody having this problem in the future, follow these steps:

WHEN TeaCacheHunyuanVideoSampler (and also Logic) is missing, I do these steps to fix it:

navigate Comfyui/custom_nodes

1. git clone https://github.com/theUpsider/ComfyUI-Logic.git

2. go to install missing custom nodes, there should be only 1 there(Comfyui_TTP_Toolset), switch ver to nightly- refresh, done!

THIS IS SOME STATE OF THE ART SCI-FI SHIT OMG. WORKS STRAIGHT OUT OF THE BOX AND WORKS PERFECTLY!!!

I know the feeling but WAN 2.1 is insane! it retains every bit of detail of the image.

https://civitai.com/models/1317373/runpod-wan-21-img2video-template-comfyui

This is cool!! Tired out Kajai's and ComfyOrgs version with bad results but this is great!

I'm not sure of the issue but I can't seem to get it img2vid to follow the image I upload. Its always completely different.

Using hunyuan_video_I2VB_Q3_K_S.gguf, all my outputs are just black videos, using the default settings. Are the .gguf models not really supported?

friend just follow the instructions, this concerns the location of the model

@ImmortalSoul clueless you are. Literally isn't related to the issue.

wavespeed is currently incompatible with latest ComfyUI v0.3.26, which also breaks your workflows: https://github.com/comfyanonymous/ComfyUI/issues/7147

Is it worth to downgrade ComfyUI to have wavespeed work or better to disable the wavespeed node instead (in regards to results and speed)

edit:

this also means that your "I2V Official" workflow can not work, because it needs updated ComfyUI and wavespeed (with disabled wavespeed node the result does not look like the starting image)

- workflow was updated (but few hours later again outdated :D)

Either use TeaCache 1.3.2 (old) or replace the missing Video TeaCache Node with the normal one (they were merged, see TeaCache github v. 1.4)

Workflow v2 I2V Official also works without wavespeed/teacache/FastLora (just takes longer I guess).

But based on a few tests with the "replace"-model, the leapfusion workflow is much better to generate videos from image (and takes the same amount of time)

Any plans on updating this for WAN? I've swapped out some nodes and noticed some higher quality videos compared to some other workflows but something still isn't right. I feel like the potential for WAN is there but your workflows have always produced the best results in Hunyuan and I think it can be done for WAN too. Thanks!

rtx 5080 okay for advance 1.5?

Probably.. but if it's one of those scam 8gb cards, you may have a rough time, and you'd need to load the fp8 or even one of the smaller .gguf models. But if it's 16gb, then definitely. I'm running on a 4060TI with 16gb, and I'm able to run the 25gb model, somehow.. Comfy does some miraculous optimizations when it comes to Vram use. Mind you, on my setup, I can't really go higher than 640x480 without OOM, and even at that, it's hit and miss..

I'm having a problem with Ultra 1.5. Please help me.

Node ID: 2511

Node Type: GetImageSizeAndCount

Exception Type: AttributeError

Exception Message: 'NoneType' object has no attribute 'shape'

Hi,

Seeking advice

I'm quite new to this. but managed to get this running.

Experimenting atm, I use 3090 + 32GB Ram,

Generations don't really take as long, but it takes like a minute or 3 to start, probably loading models etc.

Do you know if anything can be done about it, so it starts quicker?

Thank You !

How many GB is the model that you are using? Yes, the larger the model the more time it takes load into vram.

Thanks for your reply!

Its about 13GB,

So more RAM won't make much of a difference?

Is there a trick to tell comfyUI to keep it there, or does it have to get loaded fresh every time ?

Thanks!

@aRTISTEN I just updated the workflow I see what you are saying... the prompt is taking a long time and the render is taking less time than the prompt. I also have a 3090. I am not familiar with this type of workflow.. Also, it is not really doing a good job of staying true to the image. the old version was working better for me and was staying true to the image. And finally. I tried enabling the TeaCache and got an error. I tried to enable the WaveSpeed, and that also gave me an error. However the Sage attention does work if I enable that. So, probably need the author to chime in. But I am going to pass on this version for the time being.

you need ssd , are you on hdd

@mytronlight354 M2

Dealing with the same problem as others, it just creates a new image and animates that, doesn't animate the one I give it. Info:

- Using latest version of Comfy (0.3.26).

- Guidance set to Replace

- OG size: 832x1216

- Output size: 480x720

- Scheduler Steps: 30

Any obvious reasons why it would be doing this?

@asldkcndakcdmk36823 Try changing scheduler to simple

Nope, didn't seem to do the trick, still getting a completely different image after the first frame

Might need to wait for an update then. I haven't been able to get this one working yet because of a node install issue, but I have had success with a few other workflows in keeping the original image. Not out of the box unfortunately.

Seed checkbox in 1.5 vs. 1.4 is missing--is the seed automatically randomized or fixed in the new version??

Thank you for another update. You really get top-end workflows. Could you consider this workflow here:

Is it possible to add the image magnification process that you developed to it? We would like it to include all the features that your processes have.

After your improved upscale, the normal image zoom doesn't look very good.

I'm sorry if I bothered you too much.

Using the HUN BASIC 1.5 on the latest standalone version of ComfyUI (v0.3.26), I always get the same error :

SamplerCustomAdvanced

SingleStreamBlock.forward() got an unexpected keyword argument 'modulation_dims_img'

Any idea how I could get rid of it ? I'm new to this, the answer may be obvious.

I have the same issue, and I haven't looked into it yet but as a quick workaround I'm able to generate by bypassing the WAveSpeed node.

@dale723 i read something about wavespeed breaking in the latest comfy update .26 , in .25 works fine i just get teacache error even tho im not using it i will love to know where to download the correct module xD

Same issue

What should I do? "Cannot execute because a node is missing the class_type property.: Node ID '#1134" ?

Even I'm getting the same error. Did you find a fix?

I keep getting a "TeaCacheForVidGen" error in ComfyUI despite having Teacache installed. :(

Same here, I also can't load "UnetLoaderGGUFDisTorchMultiGPU" or "HYFlowEditSampler" and others from the MultiGPU node pack, and i had Teacache and MultiGPU installed

I had the same after upgrading all my nodes. Try downgrading the Teacache node to 1.3.0. Works for me atm.

@KansOne Thanks, it works as you mentioned. However, I see that in version 1.5 of TeaCache, the node also exists, but with a different internal name and an additional option in the node.

What is the correct parameter for the TeaCache 1.5 node for the max_skip_steps value (default 3)?

getting " SingleStreamBlock.forward() got an unexpected keyword argument 'modulation_dims_img' " :(

I am getting same.

same

same too

I'm able to generate by bypassing the WAveSpeed node. but the interpolation didn't work

Anyone find a fix for this?

@JCD007 delete WAveSpeed node.

there's a fix here that requires modifying a file, or disable wavespeed.

https://github.com/chengzeyi/Comfy-WaveSpeed/issues/115

Haven't checked for node updates yet.

Really nice set of workflows. And you're right, the latent upscale is superior!

Character consistency lost in I2V.What's going on?I upload the image but the gen is entirely different.

Been playing with this for a few days myself, still sorting it out somewhat, but if you want a more consistent character, lower the denoise to around 2, and raise the CFG a bit to compensate. What this does; Denoise enabled more diverse manipulation of the original image by introducing noise (random empty latent space) into the image, it's almost like having a low alpha mask over your entire image.

So, lowering denoise is like lowering the alpha and bringing more of the original image to the foreground. The downside to that is, you get less movement and less potential improvement of the original image. This is where CFG comes into play. Lowering this keeps closer to the prompt and raises realism (great for if you're using img2img to make realistic people from an image of an anime character, for instance). Raising CFG however, enables more creativity, which is why it works to offset the lowered denoise function, cuz it's just working to be more creative with your image, instead of removing details, and mostly just using it as a concept.

Gonna drop this, and a couple more tips on a full post under this model.

I get the error "When loading the graph, the following node types were not found: TeaCacheForVidGen" this persists even after I installed missing nodes. How do I fix this?

I keep getting this message and think it might have to do with the DualCLIPLoader, because while its type starts off set to Hunyuan, if I click on it, it only gives me options for sdxl, sd3 and flux.

Cannot execute because a node is missing the class_type property.: Node ID '#35'

Oh, and this is the first message I get when I load up the AllInOne:

Warning: Missing Node Types

When loading the graph, the following node types were not found:

EmptyHunyuanLatentVideo

ApplyFBCacheOnModel

No selected item

Nodes that have failed to load will show as red on the graph
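A quick way to see which node types a workflow expects is to read them out of the workflow file. The sketch below assumes the regular (UI-format) workflow JSON, where nodes sit in a "nodes" list and each has a "type" field. The `installed` set is invented for the example; on a real setup it would have to come from your own ComfyUI install.

```python
def node_types_in_workflow(workflow: dict) -> set[str]:
    """Collect the 'type' of every node in a UI-format workflow dict."""
    return {node["type"] for node in workflow.get("nodes", []) if "type" in node}


def missing_types(workflow: dict, installed: set[str]) -> list[str]:
    """Return node types the workflow uses that are not in `installed`."""
    return sorted(node_types_in_workflow(workflow) - set(installed))


# Hypothetical workflow fragment; a real one would be loaded with json.load().
example_workflow = {
    "nodes": [
        {"id": 1, "type": "EmptyHunyuanLatentVideo"},
        {"id": 2, "type": "ApplyFBCacheOnModel"},
        {"id": 3, "type": "VAEDecode"},
    ]
}
installed = {"VAEDecode"}  # made-up list of node types that loaded fine

missing = missing_types(example_workflow, installed)
print(missing)  # ['ApplyFBCacheOnModel', 'EmptyHunyuanLatentVideo']
```

Each name in the result can then be searched in the manager or on GitHub to find the pack that provides it.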

@prophetofthesingularity5 You may need to update your local ComfyUI setup. To the best of my knowledge, it's still safe to do that for now, no hardcoded/hidden telemetry/cloud services, for now. But if none of that stuff bothers you at all, you can just get the ComfyUI Manager extension, and that thing pretty much does 95% of the work for you. You may need to google a node/model every now and then, but it's mostly automated (minus hitting a couple buttons and running some searches for models, all within the manager).

@Lazman I have tried that and it keeps saying Comfy is up to date. I am using Google Colab; do you think that has anything to do with it? I might just try to do a fresh install, ty

@prophetofthesingularity5 A reinstall is always a safe bet if nothing else works, but rule out all possible logical obstacles first. Cloud services might be an issue, idk, cuz I don't and will never use them. And, not that I ever suggest using cloud services, cuz unless you're just doing a couple one-off's, you'll save yourself big money in the long run with a solid local setup. but if I had any suggestion.. I would suggest using a cloud service from anyone other than google, M$, Nvidia, or any multi-trillion dollar corp. Unless you're big on supporting the huge megacorps that are actively working to destroy our society.

I'm not big on the concept of people becoming overly dependent on cloud services in general, but at the very least, go with a 'lesser of the two evils' solution.

Apart from that, if it has nothing to do with the cloud stuff, you could forget about manager, and install the node packs manually from github, manager even tends to have the Github links for the node packs when you look them up. Though, this may be significantly harder if you're on Windows. As windows is a very locked down OS, and really doesn't allow you to do much of anything without a lot of extra work..

But if you're on linux, then installing anything from github is as simple as typing a command into terminal emulator (like cmd/terminal in windows), hitting enter, and maybe inputting your system password (depending on your setup).

I installed windows on my laptop (cuz I'm planning on selling it), and installed comfyui on that, and it is such a pain just to do a basic github clone (download)..

@Lazman Ty! Yeah I am going local hopefully soon I need to upgrade my system badly.

@prophetofthesingularity5 Well, in case you weren't aware: VRAM!! That is the biggest decider of what you can do. Also, Nvidia: they're more expensive than ATI, but that's because they're gatekeeping the AI tech. ATI can technically do AI, but slower, and not as well at training cuz of the lack of optimizations for it. Depending on your budget, a 16gb card would be best, or if you've got a bit more money to toss around, like 3k+ on a card, the 5090 will make you wet your pants, but it's also one of the most expensive consumer cards. I think it actually comes in closer to 4k..

If you can get your hands on 2 3090's, that's still big bank unless you got a hookup, but they were the last consumer cards to still have the NVLink, which helped greatly in large scale AI processes. Guessing that's why Nvidia got rid of it.. They didn't want people getting big into AI unless they were millionaires.. in any sense, the timing of them getting rid of it, after it had existed for 20ish years, is sketchy AF to say the least..

I got it working!

Love it!

Finishing up several videos now.

I can make 14 sec videos and configure everything, including the resolution. I have always hated using the apps.

Cannot upload a video in reply but this is going to be my first one done if this link works.

Posted it here on Civit

Welcome to the Secret Club | Civitai

Hi guys, I am new to Hunyuan and just got a question.

After I generate > upscale 1 > upscale 2, how do I take the generated vid from upscale 2 and use it as the base for the next generation to further refine?

One of the LoRAs I'm using for refinement states to use the same seed. How do I get the seed for the generated video?

Thanks

An image always generates with your video, so you can drag the image into ComfyUI as you would with any other generated image or workflow.json file, and the seed will be there. Why not, instead of 2 upscales, just do one upscale, then try VID2VID? I am pretty new to video generation myself, but in my experience with image generation, you can often get exponentially better results by just repeatedly pointing your AI at the most recently generated iteration of an image, and telling it to improve it (<---gross oversimplification). Hit me up if you want more details on that process. But I'm hungry now and need food.

Been playing with this for a few days and I'm still sorting it out somewhat, but if you want a more consistent character, lower the denoise to around 2, and raise the CFG a bit to compensate. What this does: denoise enables more diverse manipulation of the original image by introducing noise (random empty latent space) into the image. It's almost like having a low alpha mask over your entire image.

So, lowering denoise is like lowering the alpha and bringing more of the original image to the foreground. The downside to that is, you get less movement and less potential improvement of the original image. This is where CFG comes into play. Lowering this keeps closer to the prompt and raises realism (great for if you're using img2img to make realistic people from an image of an anime character, for instance). Raising CFG however, enables more creativity, which is why it works to offset the lowered denoise function, cuz it's just working to be more creative with your image, instead of removing details, and mostly just using it as a concept.

I also found that I can increase the efficiency of this workflow by looping in the RIFE VFI node from this pack https://github.com/Fannovel16/ComfyUI-Frame-Interpolation

Using this I can either double the length of the video, or cut the produced frames in half and have it finish much quicker with pretty much the same result.

On my 4060TI 16gb card, I run the basic setup from this with 24 frames, 24 framerate, 20 steps at 320x480 in about 20 seconds, give or take. IMG2VID, that is. I have not yet tested the node with txt2vid, but I will do that as well.
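The trade-off described above is simple arithmetic. This is a rough sketch, assuming an interpolation multiplier of 2 gives about twice as many frames (the exact count a given interpolation node returns may differ by a frame or so). The function names are just for illustration.

```python
import math


def clip_seconds(frames: int, fps: float) -> float:
    """Playback length of a clip in seconds."""
    return frames / fps


def interpolated_frames(frames: int, multiplier: int) -> int:
    """Approximate frame count after interpolation (assumed: frames * multiplier)."""
    return frames * multiplier


def frames_needed(target_frames: int, multiplier: int) -> int:
    """Frames the sampler must generate so interpolation reaches target_frames."""
    return math.ceil(target_frames / multiplier)


base_frames, fps = 24, 24  # the settings quoted in the comment above

print(clip_seconds(base_frames, fps))                          # 1.0 second as generated
print(clip_seconds(interpolated_frames(base_frames, 2), fps))  # 2.0 seconds after 2x interpolation
print(frames_needed(base_frames, 2))                           # 12 frames for the same 1-second clip
```

So with 2x interpolation you either get a clip twice as long for the same sampling work, or generate half the frames and let interpolation fill in the rest.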

Either way, it's worth looking into, cuz it was a part of the LTX workflow I downloaded, and although HY does way better results, that component could even improve the HY workflow, making it more efficient overall.

Honestly, even with my card, being the only 16gb video card under a grand in cost(anything higher than 16 is over 3k, thx to Nvidia's gatekeeping bullshit), I struggle to produce higher resolution without OOM..

Oddly, I actually get OOM more often while upscaling than I do while generating the actual video. So you may want to consider adding a manual trigger for the upscale.

IIRC, there's a node/nodepack out there somewhere that allows you to hit a button to continue the workflow at specified points, this way people could take repeat shots at upscale if OOM. Also would be good if you want to upscale, but don't want to upscale every output, only the ones that turn out good, then you only hit the upscale if the video is worth upscaling.

Also, I noticed that certain settings, like the CFG, are coded in this workflow to only move in 0.5 increments. For those who want more fine-grained control over CFG while running the basic workflow, you can hit the settings button (little gear) on the console node in the 'control room', and adjust the third setting from the bottom from 0.5 to 0.2 or 0.1.
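To picture what that step setting changes: the sketch below assumes the slider simply rounds whatever you enter to the nearest multiple of its step. That rounding rule is my assumption about the behavior, not something taken from the node's code.

```python
def snap_to_step(value: float, step: float) -> float:
    """Round value to the nearest multiple of step (assumed slider behavior)."""
    # The outer round() trims floating-point noise such as 6.300000000000001.
    return round(round(value / step) * step, 10)


print(snap_to_step(6.3, 0.5))  # 6.5
print(snap_to_step(6.3, 0.1))  # 6.3
```

With a 0.5 step a CFG of 6.3 is not reachable, while a 0.1 step allows it.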

Forgot to ask a couple questions in my other post,

A: Is it possible in IMG2VID to get the characters to move, or do anything other than stand there and look.. wobbly?

B: Why is it that I am somehow able to load the 25gb model on my 16gb card, yet, when I use the 13.2gb fp8 model, I still can't boost the res any more without OOM(640x480 is pretty much max)?

👺 Merci, thank you for all your WORKFLOWS,

they're really well done, congratulations 👺

For T2V, the GGUF module for low VRAM usage refuses to work. Nothing I have done (updating, downgrading) has fixed it. ComfyUI still tells me it can't be found. Could someone please help me with this?

What can't be found: the GGUF node or the checkpoint?

@sikasolutionsworldwide709 The node itself. I'm in the middle of something, but once I have a minute I'll get the exact name of the node.

@sikasolutionsworldwide709 The node that can't be found is: UnetLoaderGGUFDisTorchMultiGPU

@KingTater Check in your custom_nodes folder or through ComfyUI Manager whether this repo is installed: https://github.com/pollockjj/ComfyUI-MultiGPU

@sikasolutionsworldwide709 It is. I had someone message me and they sent me the same link and instructions on how to install. It installed, I shut down ComfyUI entirely, and started it up again. But that node still comes up as missing.

I did that. Someone messaged me with that same link and instructions on how to install it. I installed it, shut down ComfyUI entirely, started it back up, and the node still comes up as missing.

@KingTater OK, then strangely enough I ran into the same problem as you 20 mins ago. My solution: uninstall the node, update Comfy (update all) through Manager, and then install the MultiGPU node again, choosing the nightly option.

@sikasolutionsworldwide709 Ok, I'll give that a shot. Appreciate you helping me with this.

@sikasolutionsworldwide709 So... I did as you said, and now ComfyUI won't even start up. lol It loads up the webpage, but I just get an eternally spinning wheel. I tried updating through the files in the folder, and still nothing. I have no idea why this app is having such a hard time. lol It seems so simple for literally everyone else.

@KingTater It's far from simple... Are you using the portable one or the regular repo?

@sikasolutionsworldwide709 I'm using the Regular version

@KingTater OK good, me too. Now you should navigate to the ComfyUI folder and run:

pip install -r requirements.txt

That will ensure that all dependencies, including the frontend version, are up to date.

@sikasolutionsworldwide709 "ERROR: Could not open requirements file: [Errno 2] No such file or directory: 'requirements.txt'"

It did update PIP, but even after that, I still got the above message.

@KingTater This error means you're in the wrong directory. In your terminal, the end of the path must look similar to this: "C:\Users\Intersurf\Documents\ComfyUI"

@sikasolutionsworldwide709 That's where I installed it. "E:\AI\ComfyUI"

@sikasolutionsworldwide709 It was the next "ComfyUI" folder. So "E:\AI\ComfyUI\ComfyUI".
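The nested "ComfyUI\ComfyUI" folder is an easy thing to trip over. As a rough sketch, a small search like the one below can show which folder actually holds requirements.txt before running pip. The helper name and the depth limit are my own choices, and the demo builds a throwaway folder layout instead of touching a real install.

```python
import tempfile
from pathlib import Path


def find_requirements(root, max_depth: int = 1) -> list[Path]:
    """Return folders at or below root (up to max_depth levels down) that contain requirements.txt."""
    hits: list[Path] = []

    def walk(folder: Path, depth: int) -> None:
        if (folder / "requirements.txt").is_file():
            hits.append(folder)
        if depth < max_depth:
            for child in sorted(folder.iterdir()):
                if child.is_dir():
                    walk(child, depth + 1)

    walk(Path(root), 0)
    return hits


# Demo on a temporary layout mimicking <outer>/ComfyUI/ComfyUI/requirements.txt.
with tempfile.TemporaryDirectory() as tmp:
    outer = Path(tmp) / "ComfyUI"
    inner = outer / "ComfyUI"
    inner.mkdir(parents=True)
    (inner / "requirements.txt").write_text("# placeholder\n")

    found_rel = [p.relative_to(outer).as_posix() for p in find_requirements(outer)]

print(found_rel)  # ['ComfyUI']
```

The depth limit keeps the search from listing every requirements.txt that individual custom node packs ship deeper in the tree.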

@sikasolutionsworldwide709 Now it started checking, but I got this error:

error: subprocess-exited-with-error

× Getting requirements to build wheel did not run successfully.

│ exit code: 1

╰─> [5 lines of output]

C:\Users\chris\AppData\Local\Temp\pip-build-env-q4kexiat\overlay\Lib\site-packages\setuptools\_distutils\dist.py:289: UserWarning: Unknown distribution option: 'tests_require'

warnings.warn(msg)

error in will setup command: 'install_requires' must be a string or iterable of strings containing valid project/version requirement specifiers; Expected end or semicolon (after version specifier)

slackclient>=1.2.1<1.3.0

~~~~~~~^

[end of output]

note: This error originates from a subprocess, and is likely not a problem with pip.

error: subprocess-exited-with-error

× Getting requirements to build wheel did not run successfully.

│ exit code: 1

╰─> See above for output.

note: This error originates from a subprocess, and is likely not a problem with pip.
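Reading the log above, the line pip is pointing at is the specifier slackclient>=1.2.1<1.3.0, where the two version clauses are not separated by a comma. Here is a small sketch of that rule using a deliberately simplified regex. It is not a full requirement parser (no extras, markers or URLs), and the function name is made up.

```python
import re

# One version clause: an operator followed by a version string.
_CLAUSE = r"(?:===|~=|==|!=|<=|>=|<|>)\s*[A-Za-z0-9.*+!_-]+"

# A package name followed by zero or more comma-separated clauses.
_REQUIREMENT = re.compile(
    rf"^\s*[A-Za-z0-9][A-Za-z0-9._-]*\s*(?:{_CLAUSE}(?:\s*,\s*{_CLAUSE})*)?\s*$"
)


def looks_valid(requirement: str) -> bool:
    """Simplified check that version clauses are separated by commas."""
    return _REQUIREMENT.match(requirement) is not None


print(looks_valid("slackclient>=1.2.1<1.3.0"))   # False: comma missing between clauses
print(looks_valid("slackclient>=1.2.1,<1.3.0"))  # True
```

In other words, the build fails on metadata inside the package being installed, which is why pip notes that the error "is likely not a problem with pip".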

@KingTater To me it seems your Python installation has some issues. Try to fix it via the repair option; otherwise, uninstall and install Python again, and keep an eye on the environment variables being set correctly. Maybe it's better to follow this guide and install the portable version: https://comfyui-wiki.com/en/install/install-comfyui/install-comfyui-on-windows

@KingTater is everything working now?

@sikasolutionsworldwide709 Nah, I realized I was on the portable version, and decided to just do a fresh install. I also noticed my version of Python was old. I haven't gotten around to setting it all up again, but once I do I will give the workflow a shot and see how it goes. Hopefully it goes smoother this time.

@sikasolutionsworldwide709 OK, so I'm still having issues. The MultiGPU node is installed, I did the requirements.txt thing and it worked this time, but in ComfyUI itself, that node just will not work. I've updated it, gone back a version. It's still not showing up correctly.

I also noticed something called "The-AI-Doctors-Clinical-Tools" that has always failed to update. But it doesn't show up on my list of nodes.

@KingTater "The-AI-Doctors-Clinical-Tools" has nothing to do with the MultiGPU nodes. I recommend first uninstalling MultiGPU again through Manager, then updating Comfy through Manager, and last but not least installing MultiGPU again, choosing the nightly option.

@sikasolutionsworldwide709 OK, I figured it out. There was something called GGUF that needed to be installed, and that also let me install MultiGPU properly. I can run the workflow now, but it is telling me that the node "Sageattention" doesn't exist. The node is there and part of the workflow, plus I can choose options from the dropdown. So I am not sure what's wrong there.

@KingTater Nothing is wrong. You can choose "disable"; SageAttention is only there to speed up the generation process. You might search for "sageattention 1 click install" later.

@sikasolutionsworldwide709 OK, I disabled that. Now the Basic Scheduler is saying that its pink "model" wire needs to be attached to something, and the VAE Decode (Tiled) node has a thin red line around it.

The MultiFloatNodeAID nodes are missing and I can't find them anywhere.

Also can't install: https://github.com/chengzeyi/Comfy-WaveSpeed

as I get this error:

"FETCH DATA from: https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/custom-node-list.json [DONE]

Node 'wavespeed@nightly' not found in [default, cache]"

Another error:

SamplerCustomAdvanced

SingleStreamBlock.forward() got an unexpected keyword argument 'modulation_dims_img'

Hmm, I give up. This workflow's not good.

It's not the workflow, because a workflow just wires together nodes from node repos. The errors come from one or more of the following: a problem with the Python installation (for non-portable Comfy), an outdated Manager or Comfy repo (including the frontend), or conflicts with other nodes. Please check.

It's a pity this amazing workflow is broken after the latest Comfy update. If you use this workflow, don't update ComfyUI for now, at least until mxToolkit gets updated to fix the issue. The problem is the sliders from mxToolkit, which are completely broken and unusable on the latest Comfy version, and a lot of workflows like this one rely on that node.

:(

EDIT: If someone else is facing this problem, try updating everything again as suggested here (remember to roll back TeaCache to 1.3.2 afterwards for this workflow to work). For me, it fixed it. If you need a newer version of TeaCache, you will have to edit the workflow and replace the TeaCacheForVidGen node with the TeaCache one.

Sigh... Another workflow completely bricked my Comfy, had to do a fresh install as I'm not that well versed in fixing it.

Now, due to updates, this workflow doesn't work. Tried rolling back TeaCache, some others... No cookie.

Sliders don't work, clip input is not recognised... No idea how to fix it at this point. And that's just the basic one.

Any chance of updating this one?

Same issue here, I hope they fix it.

ty dr erdogan

dont work

Advice: back up your VENV and CUSTOM_NODES directories so next time you don't have to re-install. Just delete these directories and throw the backups back in. Good to go.
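The backup step can be scripted. Below is a minimal sketch using only the standard library, assuming the folders are named "venv" and "custom_nodes" and sit directly under the ComfyUI folder (names on your install may differ). The demo runs against a temporary folder, not a real install.

```python
import shutil
import tempfile
from pathlib import Path


def backup_dirs(comfy_root, backup_root, names=("venv", "custom_nodes")) -> list[Path]:
    """Zip each named folder under comfy_root into backup_root and return the archive paths."""
    comfy_root, backup_root = Path(comfy_root), Path(backup_root)
    backup_root.mkdir(parents=True, exist_ok=True)
    archives = []
    for name in names:
        source = comfy_root / name
        if source.is_dir():  # skip folders that do not exist
            archive = shutil.make_archive(str(backup_root / name), "zip", root_dir=source)
            archives.append(Path(archive))
    return archives


# Demo on a throwaway layout; point comfy_root at your own install to use it for real.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "ComfyUI"
    for folder in ("venv", "custom_nodes"):
        (root / folder).mkdir(parents=True)
        (root / folder / "marker.txt").write_text("demo\n")

    archive_names = [p.name for p in backup_dirs(root, Path(tmp) / "backups")]

print(archive_names)  # ['venv.zip', 'custom_nodes.zip']
```

To restore, delete the broken folders and unpack the archives back into the same locations. A venv generally expects to live at the path it was created in, so restoring it somewhere else may not work.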