**Don't forget to Like 👍 the model. ;)**

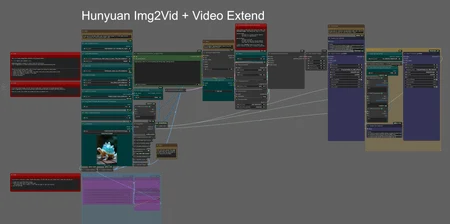

This is a straightforward Image-to-Video workflow using the official Tencent ImageToVideo model, packaged by Kijai. No LoRA is needed anymore, and it is compatible with the Fast LoRA, which generates videos in 8 steps instead of the usual 20~30 steps!

VRAM usage depends heavily on the length and dimensions of the video you want to generate, but 16GB of VRAM is enough to get results (maybe even less).

As always, instructions and links are included in the workflow. Don’t forget to update Comfy and HunyuanVideoWrapper nodes!

Description

Workflow using the native ComfyUI KSampler node to process video (not Kijai's process).

Comments (12)

The FastVideo Lora doesn't work with Kijai's nodes, unfortunately.

Hmmm. It does. You just need to use it in the lora node (before the model loader). My videos were made using it! Use 8 steps in the Sampler.

It's working great, except it changes the character's face quite a bit. Is there a way to improve that?

I wish... But this is part of how Hunyuan works. If you really like Hunyuan, the Skyreels model is a Hunyuan variant that is a little bit better at keeping consistency.

Thanks for the info

@auroch22934 and @Sam_A, there is a FIXED version of the native I2V Hunyuan model out. I think it came out a few days after the initial one. I too noticed that the initial version of the I2V model changed the faces a lot; the FIXED version is improved in this respect. In the HunyuanImageToVideo node you will need to change guidance_type from V1 to V2 when you use the FIXED model, or it appears to ignore the input image. Good luck.
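If you run workflows through an exported API-format JSON instead of clicking in the UI, the guidance_type switch described above could be sketched roughly like this. It assumes the usual API-format layout (node id mapped to `class_type` and `inputs`); the helper name `set_guidance_type` is made up for illustration, and the option strings `"v1 (concat)"` and `"v2 (replace)"` are assumptions, so check the dropdown in your own HunyuanImageToVideo node for the exact spelling.

```python
import copy


def set_guidance_type(workflow, value, node_class="HunyuanImageToVideo"):
    """Return a patched copy of an API-format workflow plus the ids of changed nodes.

    Hypothetical helper: it only edits a plain dict and does not call ComfyUI.
    """
    patched = copy.deepcopy(workflow)  # leave the caller's workflow untouched
    changed = []
    for node_id, node in patched.items():
        if isinstance(node, dict) and node.get("class_type") == node_class:
            node.setdefault("inputs", {})["guidance_type"] = value
            changed.append(node_id)
    return patched, changed


# Tiny made-up workflow, only for demonstration (option strings are assumptions).
example = {
    "1": {"class_type": "HunyuanImageToVideo",
          "inputs": {"guidance_type": "v1 (concat)"}},
    "2": {"class_type": "KSampler", "inputs": {"steps": 20}},
}

patched, changed = set_guidance_type(example, "v2 (replace)")
print(changed, patched["1"]["inputs"]["guidance_type"])
```

Only nodes whose `class_type` matches are touched, so the sampler and any other nodes keep their settings.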

@yajukun Oh! Thanks for the info! I didn't know about it. To be honest, I'm using Wan2.1. I think it keeps more details from the original image. I'll test this FIXED version and see how it does!

@Sam_A Yeah, I was watching this video and it looks like Wan is still the best for I2V, even with the Fixed version of Hunyuan. https://www.youtube.com/watch?v=SAC8kZAHXAc

@yajukun I wish it could use less memory, but it seems like it understands the video in 3D. Also, it keeps the original image details so well that it makes me want to use only Wan for now.

yep, switched to Wan and working great, just slow as hell :D

@auroch22934 Yeah. A little bit slower, but the quality is worth it IMO. With Hunyuan you need to regenerate so many times until you get a good I2V result. For T2V I like Hunyuan a lot, because it's faster.

Could you add a Hunyuan workflow that says which files to download and where to put them, like you did for Wan?