I'd like to share Fooocus-MRE (MoonRide Edition), my variant of the original Fooocus (developed by lllyasviel), new UI for SDXL models.

We all know SD web UI and ComfyUI - those are great tools for people who want to make a deep dive into details, customize workflows, use advanced extensions, and so on. But we were missing simple UI that would be easy to use for casual users, that are making first steps into generative art - that's why Fooocus was created. I played with it, and I really liked the idea - it's really simple and easy to use, even by kids.

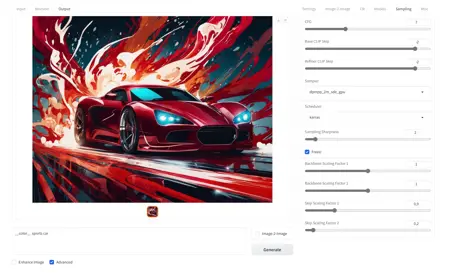

But I also missed some basic features in it, which lllyasviel didn't want to be included in vanilla Fooocus - settings like steps, samplers, scheduler, and so on. That's why I decided to create Fooocus-MRE, and implement those essential features I've missed in the vanilla version. I want to stick to the same philosophy and keep it as simple as possible, just with few more options for a bit more advanced users, who know what they're doing.

For comfortable usage it's highly recommended to have at least 20 GB of free RAM, and GPU with at least 8 GB of VRAM.

You can find additional information about stuff like Control-LoRAs or included styles in Fooocus-MRE wiki.

List of features added into Fooocus-MRE, that are not available in original Fooocus:

Support for Image-2-Image mode.

Support for Control-LoRA: Canny Edge (guiding diffusion using edge detection on input, see Canny Edge description from SAI).

Support for Control-LoRA: Depth (guiding diffusion using depth information from input, see Depth description from SAI).

Support for Control-LoRA: Revision (prompting with images, see Revision description from SAI).

Adjustable text prompt strengths (useful in Revision mode).

Support for embeddings (use "embedding:embedding_name" syntax, ComfyUI style).

Customizable sampling parameters (sampler, scheduler, steps, base / refiner switch point, CFG, CLIP Skip).

Displaying full metadata for generated images in the UI.

Support for JPEG format.

Ability to save full metadata for generated images (as JSON or embedded in image, disabled by default).

Ability to load prompt information from JSON and image files (if saved with metadata).

Ability to change default values of UI settings (loaded from settings.json file - use settings-example.json as a template).

Ability to retain input files names (when using Image-2-Image mode).

Ability to generate multiple images using same seed (useful in Image-2-Image mode).

Ability to generate images forever (ported from SD web UI - right-click on Generate button to start or stop this mode).

Official list of SDXL resolutions (as defined in SDXL paper).

Compact resolution and style selection (thx to runew0lf for hints).

Support for custom resolutions list (loaded from resolutions.json - use resolutions-example.json as a template).

Support for custom resolutions - you can just type it now in Resolution field, like "1280x640".

Support for upscaling via Image-2-Image (see example in Wiki).

Support for custom styles (loaded from sdxl_styles folder on start).

Support for playing audio when generation is finished (ported from SD web UI - use notification.ogg or notification.mp3).

Starting generation via Ctrl-ENTER hotkey (ported from SD web UI).

Support for loading models from subfolders (ported from RuinedFooocus).

Support for authentication in --share mode (credentials loaded from auth.json - use auth-example.json as a template).

Support for wildcards (ported from RuinedFooocus - put them in wildcards folder, then try prompts like

__color__ sports carwith different seeds).Support for FreeU.

Limited support for non-SDXL models (no refiner, Control-LoRAs, Revision, inpainting, outpainting).

Style Iterator (iterates over selected style(s) combined with remaining styles - S1, S1 + S2, S1 + S3, S1 + S4, and so on; for comparing styles pick no initial style, and use same seed for all images).

If you find my work useful / helpful, please consider supporting it - even $1 would be nice :).

Description

Changes since v2.0.19:

Merged major new feature from vanilla Fooocus: Inpainting & Outpainting,

Added support for FreeU,

Added information about total execution time,

Fixed error related to playing audio notification,

Updated Comfy.

FAQ

Comments (17)

I wanted to ask you about the custom option for image sizes. That is, being able to create images larger than the list of sizes that come in fooocus. Being able to generate 1152 x1152 images for example, it doesn't matter if it is a little slower. Allow user to choose size

Just type "1152x1152" - if you have GPU with enough VRAM, you can use a bit higher resolutions. But in general it's best to create higher resolution images in 2 passes: 1) generate image with native resolution given model was trained on (which is around 1024x1024 total pixels for SDXL), 2) use img2img to generate upscaled version with more details. You can see the example here: Upscaling using Image‐2‐Image.

@MoonRide Thank you very much, this has been useful for me. Congratulations on your work. I really like Fooocus and I know it will get better and better

I wonder if there's a proper way to input lora weight greater than 2 (or less than -2) in Fooocus?

The <lora> tag works fine in Negative Prompt. However, in Positive Prompt, it got duplicated multiple times by both Styles and Prompt Expansion, messing the lora's effect up.

In the meantime, I could set off-limits lora values in file settings.json and it works fine as long as I leave the lora weight UI untouched.

Or is it possible for the author to add lora weight limit variables in settings.json? Thanks a lot.

@cloudreadypc From my observations (tests in ComfyUI) values above around 1.3 lead to deformed output. Could you provide use case for that - like example of LoRA model which would benefit from that kind of increased strength range?

@MoonRide Detail Tweaker XL

May I suggest another solution? If the (+-2) auto correction of Lora Weight's text inboxes is disable, users can type in whatever values they need. It's ok to leave the sliders unchanged and capped at +-2. Thanks a lot.

Will there be a Mac version in the near future?

@Fluffies I don't have Mac to test it, but Fooocus uses ComfyUI as a backend - so if there are some ways to make ComfyUI work on Mac it can work for Fooocus, too.

For example in ComfyUI issue 1140 I noticed someone mentioned launching ComfyUI via "python3 main.py --force-fp16", so you can try something like "python3 launch.py --force-fp16". If you don't have PyTorch and other necessary Python packages installed you should start with "pip3 install torch torchvision torchaudio", then "pip3 install -r requirements_versions.txt" - that's the usual way of setting up initial Python dependencies.

@MoonRide I've got all those and I run ComfyUI as well as Invoke and Automatic1111. I'll give it a shot. Thanks!

Install went great but got some errors during runtime:

>> [code]File "/Users/fluffies/anaconda3/lib/python3.11/site-packages/torch/amp/autocast_mode.py", line 201, in init

>> raise RuntimeError('User specified autocast device_type must be \'cuda\' or \'cpu\'')

>> RuntimeError: User specified autocast device_type must be 'cuda' or 'cpu'

So I started with command flag --cpu, then got this error

>> RuntimeError: "addmm_impl_cpu_" not implemented for 'Half'

So I started without the --force-fp16 and it's running fine but it's running on the CPU so it's extremely slow, around thirty seconds per step. Ideally it would take advantage of the 32-core GPU …

Running it without --CPU or any other command flags returns error

>> Device mps:0 does not support the torch.fft functions used in the FreeU node, switching to CPU. >> /Users/fluffies/Documents/Fooocus-MRE/modules/anisotropic.py:132: UserWarning: The operator 'aten::std_mean.correction' is not currently supported on the MPS backend and will fall back to run on the CPU. This may have performance implications. (Triggered internally at /Users/runner/work/pytorch/pytorch/pytorch/aten/src/ATen/mps/MPSFallback.mm:11.)

>> s, m = torch.std_mean(g, dim=(1, 2, 3), keepdim=True)

>> /Users/fluffies/anaconda3/lib/python3.11/site-packages/torchsde/_brownian/brownian_interval.py:594: UserWarning: Should have tb<=t1 but got tb=7.754780292510986 and t1=7.75478.

>> warnings.warn(f"Should have {tb_name}<=t1 but got {tb_name}={tb} and t1={self._end}.")

... which says it's falling back to CPU. However , it's running a lot faster, instead of 30 seconds per step, it's now running around 5s per step, so maybe it's harnessing the GPU after all? iStats shows my GPU running at 99% so that would seem to be the case. Revision is still slow at 52s/step but standard T2I and I2I inference time is greatly improved.

@Fluffies It looks like limitations within PyTorch itself (some operations not supported on MPS device) - but I am glad at least basic functions started to work. What combination of ComfyUI flags seems to work best on your hardware, in either Fooocus or pure ComfyUI?

@MoonRide so far with ComfyUI just the standard "python3 main.py --force-fp16 --use-split-cross-attention" and with Fooocus just “python3 launch.py,” with no command line flags, which is fast despite falling back to CPU on some functions. I'm no real expert, yet, though, I've only dabbled in ComfyUI; most experience is with Automatic1111.

@MoonRide — Does MRE work with prompt alternative options and weights in the same fashion as Automatic111, such as:

{incandescent glow, dimly-lit, night, (dark studio:1.3), midnight, candlelit | golden hour, sunset silhouette, rim lighting, warm translucent glow}

... I presume so, as it's based on the ComfyUI backend, which does the same?

Thanks!

@Fluffies In most aspects syntax parsing is the same as in bare ComfyUI, but with 2 small changes:

1. Encoding token weights is based on A1111 method.

2. Support for wildcards is included (__color__ -> picked random value (taking seed into account) from wildcards/color.txt).

It would be really useful if it recognized folders for the models, as everything I have is organized into folders. This works well with A1111, and allows for keeping images and info such as tags, prompts, in an organized manner, when necessary.

@Polygon I am not not sure what are you asking for. Model folders structure in Fooocus is inherited from ComfyUI - it is organized, just in a bit different way. If you want to customize it to use models from different folders, you can do by specifying different folders in paths.json (or user_path_config.txt, vanilla way).

@MoonRide I mean if I place models in separate folders, it won't recognize them. In A1111 I keep all models organized in folders for XL, 1.5, then inside those I have sub folders for each of the models with images and whatever tags/info are needed. It doesn't recognize anything inside folders, the checkpoints, lora, esrgan must all be placed together at the root folder for each of those . I hope that makes more sense now.