Workflow description:

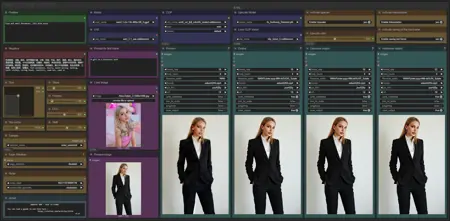

The aim of this workflow is to generate a video that reproduces the face from an existing photo, all from a single window.

Resources you need:

📂 Files:

WAN2.1

Recommended quant by VRAM:

24 GB VRAM: Q8_0

16 GB VRAM: Q5_K_S

<12 GB VRAM: Q4_K_S
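The VRAM table above can be expressed as a small lookup. This is a minimal sketch, not part of the workflow itself: the function name `recommended_wan_quant` is hypothetical, and treating cards between 12 and 16 GB as Q4_K_S is an assumption, because the table does not cover that range. The optional auto-detection uses PyTorch, which is already present in a ComfyUI environment.

```python
def recommended_wan_quant(vram_gb: float) -> str:
    """Map available VRAM (GB) to a WAN 2.1 I2V GGUF quant.

    Follows the table: 24 GB -> Q8_0, 16 GB -> Q5_K_S, <12 GB -> Q4_K_S.
    ASSUMPTION: anything below 16 GB (including 12-16 GB) falls back to Q4_K_S.
    """
    if vram_gb >= 24:
        return "Q8_0"
    if vram_gb >= 16:
        return "Q5_K_S"
    return "Q4_K_S"


if __name__ == "__main__":
    try:
        import torch  # installed alongside ComfyUI

        props = torch.cuda.get_device_properties(0)
        # Decimal GB, so a card sold as "24 GB" reports slightly above 24.
        vram = props.total_memory / 1e9
        print(f"{props.name}: {vram:.1f} GB -> {recommended_wan_quant(vram)}")
    except Exception as exc:  # no torch, no CUDA build, or no GPU
        print("Could not read VRAM automatically:", exc)
```

If detection fails, call `recommended_wan_quant(16)` (or your card's size) directly.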

I2V Quant Model: Wan2.1-I2V-14B-480P-gguf or Wan2.1-I2V-14B-720P-gguf

in models/diffusion_models

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

CLIP-VISION: clip_vision_h.safetensors

in models/clip_vision

VAE: wan_2.1_vae.safetensors

in models/vae

FLUX

GGUF_Model: FLUX.1-dev-gguf

"flux1-dev-Q8_0.gguf" in ComfyUI\models\unet

GGUF_clip: t5-v1_1-xxl-encoder-gguf

"t5-v1_1-xxl-encoder-Q8_0.gguf" in ComfyUI\models\clip

Text encoder: ViT-L-14-TEXT-detail-improved-hiT-GmP-TE-only-HF.safetensors

"ViT-L-14-GmP-ft-TE-only-HF-format.safetensors" in ComfyUI\models\clip

VAE: ae.safetensors

"ae.safetensors" in ComfyUI\models\vae

FLUX PuLID: pulid_flux_v0.9.0.safetensors

"pulid_flux_v0.9.0.safetensors" in ComfyUI\models\pulid

Any upscale model (deprecated):

Realistic: RealESRGAN_x4plus.pth

Anime: RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
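Before loading the workflow, it can help to confirm that each file sits in the folder listed above. The script below is a hypothetical helper, not something shipped with the workflow: `EXPECTED` and `missing_files` are illustrative names, the WAN model is matched by wildcard because its file name depends on the quant and resolution you chose, and the text encoder and the (deprecated) upscale models are left out for brevity.

```python
from pathlib import Path

# Hypothetical mapping of ComfyUI/models subfolders to the files listed above.
# The WAN model is matched with a wildcard because its file name depends on
# the quant (Q8_0, Q5_K_S, Q4_K_S) and resolution (480P or 720P) you pick.
EXPECTED = {
    "diffusion_models": ["*.gguf"],
    "clip": ["umt5_xxl_fp8_e4m3fn_scaled.safetensors", "t5-v1_1-xxl-encoder-Q8_0.gguf"],
    "clip_vision": ["clip_vision_h.safetensors"],
    "vae": ["wan_2.1_vae.safetensors", "ae.safetensors"],
    "unet": ["flux1-dev-Q8_0.gguf"],
    "pulid": ["pulid_flux_v0.9.0.safetensors"],
}


def missing_files(comfy_root):
    """Return 'folder/pattern' entries with no matching file under comfy_root/models."""
    models = Path(comfy_root) / "models"
    missing = []
    for folder, patterns in EXPECTED.items():
        folder_path = models / folder
        for pattern in patterns:
            # A missing folder counts as a missing file.
            if not (folder_path.is_dir() and any(folder_path.glob(pattern))):
                missing.append(f"{folder}/{pattern}")
    return missing


if __name__ == "__main__":
    import sys

    root = sys.argv[1] if len(sys.argv) > 1 else "ComfyUI"
    for entry in missing_files(root):
        print("missing:", entry)
```

Run it with the path to your ComfyUI folder as the only argument; no output means every checked file was found.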

📦 Custom Nodes:

PuLID requires Insightface to be installed in your Python environment:

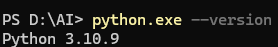

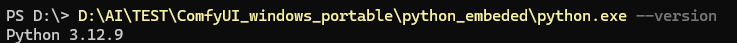

Check your Python version:

For the Windows portable version (the path depends on where you unzipped ComfyUI):


Download the Insightface wheel (.whl) that matches your Python version here: Assets/Insightface


(Here, my local Python is 3.10 and the portable version is 3.12.)
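The wheel has to carry the same CPython tag as the interpreter ComfyUI runs on (cp310 for Python 3.10, cp312 for 3.12). A minimal sketch that prints the tag and the matching file name; it assumes the wheels in Assets/Insightface follow the same naming as the one in the install command below (`insightface-0.7.3-cp310-cp310-win_amd64.whl`), and `insightface_wheel_name` is a hypothetical helper.

```python
import sys


def insightface_wheel_name(major: int, minor: int, version: str = "0.7.3") -> str:
    """Build the expected Windows wheel name for a given CPython version.

    ASSUMPTION: wheels follow the 'insightface-<ver>-cpXY-cpXY-win_amd64.whl'
    pattern used in the install command.
    """
    tag = f"cp{major}{minor}"
    return f"insightface-{version}-{tag}-{tag}-win_amd64.whl"


if __name__ == "__main__":
    # Run this with the same python.exe that ComfyUI uses
    # (for the portable version, the embedded one).
    print("Python", sys.version.split()[0])
    print("Wheel to download:", insightface_wheel_name(*sys.version_info[:2]))
```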

Then install all the prerequisites and Insightface (the wheel name below is for Python 3.10; use the file you downloaded):

python.exe -m pip install --use-pep517 facexlib

python.exe -m pip install git+https://github.com/rodjjo/filterpy.git

python.exe -m pip install onnxruntime==1.19.2 onnxruntime-gpu==1.15.1 insightface-0.7.3-cp310-cp310-win_amd64.whl
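To confirm the packages landed in the interpreter ComfyUI actually uses, you can query their installed versions with the standard-library `importlib.metadata`. A minimal sketch: the package list simply mirrors the commands above, and `installed_versions` is a hypothetical helper name.

```python
from importlib import metadata

# Distribution names taken from the pip commands above.
PACKAGES = ["insightface", "onnxruntime", "onnxruntime-gpu", "facexlib", "filterpy"]


def installed_versions(packages):
    """Return {package: version or None} for the current interpreter."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this interpreter
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(PACKAGES).items():
        print(f"{name:16} {version or 'MISSING'}")
```

Run it with the same python.exe used for the install; a "MISSING" line usually means pip was run from a different Python.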

Description

Base version

Comments

First off, thanks for your work. Impressive stuff.

I'm not even sure why I got this workflow. I have all the files, but what exactly is its purpose?

This workflow lets you reproduce a face from a photo in a video.

Is this a Flux PuLID workflow that feeds into a WAN I2V workflow?

Yes

OK, I have this working now. However, when I input a Caucasian woman, the workflow generates an Asian woman. I thought the idea was to keep the face the same?

Are you using version 1.1? As you can see in my example, I use a Caucasian girl and get a Caucasian woman in the video.

Is the preview image also an Asian girl?

@UmeAiRT OK, yes, I had the old version. I updated to 1.1 and now it's working nicely. Thanks for the effort on this. Yes, the resemblance is pretty good, and I will definitely be using the workflow. It isn't perfect, but it is pretty good overall.

@trashkollector175 You can tweak the settings in the PuLID node a little to get a better resemblance.

Not working properly...

Great workflow! Any plans to add WAN LoRA support?

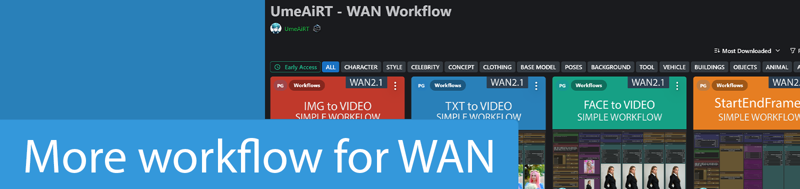

You can easily add a LoRA by following the example in my two other WAN workflows.

I have been using this for a few days now. This workflow is great; kudos to the author.

Thanks!

Thank you for this, and thank you even more for your scripts. I was struggling to install the missing nodes in Comfy, but your script resolved it for me and the guide was easy to follow. I'll upload some videos after playing around with it for a bit.

Thanks for your feedback.

@UmeAiRT I like how you have these workflows set up, but for some reason, after I resize the image I can never set it back to 720; I always have to use 512x768. Is it possible to keep what you have but also add an option to type the exact size if needed?

Sometimes I get blurry teeth as well. I'm going to try changing the upscaler model to see if that helps, but it might end up needing some kind of FaceDetailer node.

If a FaceDetailer node turns out to be needed, is it worth adding one for the hands as well?

@synalon973 To enter an exact size, simply double-click the number and an editor will open.

@UmeAiRT When I double-click, it doesn't give me an editor, but it's a minor issue anyway.

RuntimeError: Can't access transformer_options, this requires ComfyUI nightly version from Mar 14, 2025 or later