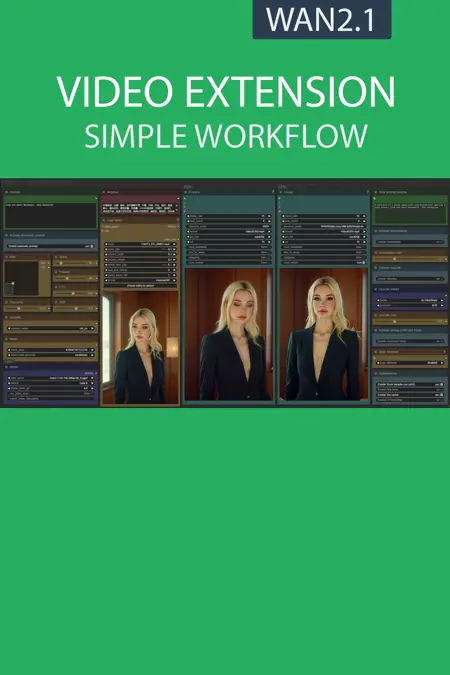

This workflow takes the last 15 frames of a video, uses them to generate a new sequence that follows on logically from them, and then merges that new sequence with your original video.
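The extension step described above can be sketched as follows. This is a minimal, hypothetical illustration, not the workflow's actual node graph. It assumes the common VACE extension pattern: the kept context frames are passed alongside gray placeholder frames, and a mask marks which frames the model should generate. The names `build_extension_inputs` and `merge`, the 81-frame clip length, and the choice to drop the repeated context frames when merging are all assumptions.

```python
from typing import List, Sequence, Tuple, TypeVar

Frame = TypeVar("Frame")

CONTEXT_FRAMES = 15  # "last 15 frames", as described above
TOTAL_FRAMES = 81    # assumption: a typical Wan 2.1 clip length (4n + 1 frames)


def build_extension_inputs(
    video: Sequence[Frame], gray: Frame,
    context: int = CONTEXT_FRAMES, total: int = TOTAL_FRAMES,
) -> Tuple[List[Frame], List[int]]:
    """Hypothetical VACE-style inputs for extending `video`.

    Returns (control, mask): control holds the last `context` real frames
    followed by gray placeholders; mask is 0 for frames to keep and 1 for
    frames the model should generate.
    """
    if len(video) < context:
        raise ValueError("video is shorter than the context window")
    if total <= context:
        raise ValueError("total must be larger than context")
    new = total - context
    control = list(video[-context:]) + [gray] * new
    mask = [0] * context + [1] * new
    return control, mask


def merge(original: Sequence[Frame], generated: Sequence[Frame],
          context: int = CONTEXT_FRAMES) -> List[Frame]:
    """Append the generated clip, skipping its first `context` frames
    because they repeat the end of `original` (an assumption)."""
    return list(original) + list(generated[context:])


if __name__ == "__main__":
    video = list(range(33))           # stand-in frames: 33 integers
    control, mask = build_extension_inputs(video, gray=-1)
    generated = control               # stand-in for the model's output
    print(len(control), sum(mask), len(merge(video, generated)))  # 81 66 99
```

Integers stand in for frames here so the bookkeeping is easy to follow; in a real pipeline each entry would be an image tensor.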

📂Files:

Recommended model by VRAM:

>24 GB VRAM: base or Q8_0

16 GB VRAM: Q5_K_S

<12 GB VRAM: Q4_K_S
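The VRAM recommendation above can be read as a simple lookup. This sketch assumes ">24" means "24 GB or more"; the list gives no separate tier for 12 to 16 GB or 16 to 24 GB, so those ranges fall back to the next smaller option here. `recommended_model` is a hypothetical helper, not part of the workflow.

```python
def recommended_model(vram_gb: float) -> str:
    """Return the model variant recommended above for a given VRAM size.

    Assumption: ">24 GB" is read as 24 GB or more, and sizes between the
    listed tiers fall back to the next smaller option.
    """
    if vram_gb >= 24:
        return "base or Q8_0"
    if vram_gb >= 16:
        return "Q5_K_S"
    return "Q4_K_S"


for vram in (8, 12, 16, 24):
    print(f"{vram} GB -> {recommended_model(vram)}")
```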

For the base version:

VACE Model: wan2.1_vace_14B_fp8_e4m3fn.safetensors or wan2.1_vace_1.3B_fp16.safetensors

in models/diffusion_models

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

For the GGUF version:

VACE Quant Model: Wan2.1-VACE-14B-QX_0.gguf

in models/diffusion_models

Quant CLIP: umt5-xxl-encoder-QX.gguf

in models/clip

VAE: wan_2.1_vae.safetensors

in models/vae

Any upscale model (deprecated):

Realistic: RealESRGAN_x4plus.pth

Anime: RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
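To double-check the file placement listed above, a small script can look for each file under the ComfyUI `models` folders. This is a hypothetical helper: `COMFYUI_ROOT` is an assumed install path, the GGUF filenames are matched with wildcards because the quantization suffix depends on your choice, and the upscale model is left out because it is marked as deprecated.

```python
from pathlib import Path
from typing import List, Tuple

# Assumed ComfyUI install location; adjust to your setup.
COMFYUI_ROOT = Path("ComfyUI")

# Each entry: (subfolder of models/, patterns where any single match is enough).
BASE_FILES: List[Tuple[str, List[str]]] = [
    ("diffusion_models", ["wan2.1_vace_14B_fp8_e4m3fn.safetensors",
                          "wan2.1_vace_1.3B_fp16.safetensors"]),
    ("clip", ["umt5_xxl_fp8_e4m3fn_scaled.safetensors"]),
    ("vae", ["wan_2.1_vae.safetensors"]),
]

GGUF_FILES: List[Tuple[str, List[str]]] = [
    ("diffusion_models", ["Wan2.1-VACE-14B-*.gguf"]),  # any quantization
    ("clip", ["umt5-xxl-encoder-*.gguf"]),             # any quantization
    ("vae", ["wan_2.1_vae.safetensors"]),
]


def missing_files(root: Path, required: List[Tuple[str, List[str]]]) -> List[str]:
    """List every required entry with no matching file under root/models."""
    models = root / "models"
    missing = []
    for folder, patterns in required:
        directory = models / folder
        # glob() on a missing directory simply yields nothing.
        if not any(any(directory.glob(p)) for p in patterns):
            missing.append(f"{folder}: one of {', '.join(patterns)}")
    return missing


if __name__ == "__main__":
    for line in missing_files(COMFYUI_ROOT, GGUF_FILES) or ["all files found"]:
        print(line)
```

Swap `GGUF_FILES` for `BASE_FILES` in the last lines if you use the base version.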

📦Custom Nodes:

Description

What's new?

New interface

New upscaler

New model optimisation

New LoRA loader

FAQ

Comments (6)

I import a 32 FPS vid that was interpolated from 16 FPS, and I always end up with 64 FPS: the 'new' half of the video plays at twice the speed. Any help adjusting this? I tried force_rate as well as various interpolation setups, but it's always the same.

Why don't you use the base 16 FPS video and interpolate afterwards?

@UmeAiRT Oh, so I should leave all "parts" of the video uninterpolated and then interpolate everything on the last extension attempt?

Edit: I mean, if I want a short vid made of four 2-second parts, I should turn on interpolation only on the last extension, so the whole thing is interpolated?

@terrosaurx Yes ^^ The interpolation is applied to the new merged video, regardless of how many merges were made before.

@UmeAiRT Now that makes a lot of sense; initially I thought only the added part was being interpolated. Thanks for the help! Your workflows are incredible <3
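The frame-rate arithmetic behind this thread can be sketched as a speed multiplier: frames that capture motion at a native rate, multiplied by an interpolation factor, play in real time only when the playback rate equals native rate × factor. The segment values below are one interpretation of the problem in the first comment (an assumption, not a diagnosis of the workflow), and `playback_speed` and `segment_speeds` are hypothetical helpers.

```python
from typing import Iterable, List, Tuple


def playback_speed(native_fps: float, interpolation_factor: int,
                   playback_fps: float) -> float:
    """Speed multiplier (1.0 = real time) for frames sampled at native_fps,
    multiplied by interpolation_factor, then played at playback_fps."""
    return playback_fps / (native_fps * interpolation_factor)


def segment_speeds(segments: Iterable[Tuple[float, int]],
                   playback_fps: float) -> List[float]:
    """Apply playback_speed to each (native_fps, total_interpolation) segment."""
    return [playback_speed(fps, factor, playback_fps) for fps, factor in segments]


# One reading of the first comment (assumption): the original part was 16 fps
# interpolated x2 before import, then the merged video is interpolated x2 again
# and played at 64 fps, while the new part only gets the second x2 pass.
mixed = segment_speeds([(16, 2 * 2), (16, 2)], playback_fps=64)
print(mixed)  # [1.0, 2.0]

# The suggested approach: keep every part at 16 fps and interpolate x2 once,
# on the last extension, so every part plays in real time at 32 fps.
single_pass = segment_speeds([(16, 2), (16, 2)], playback_fps=32)
print(single_pass)  # [1.0, 1.0]
```

Under this reading, a multiplier of 2.0 matches the "twice the speed" symptom, and interpolating only once at the end keeps every part at 1.0.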

Thanks for all these resources

The OG result includes the full video, but IN and UP only include the new portion. Can you change it so that IN and UP include the full video? Otherwise, each extra processing step run outside a single workflow seems to darken the video and cause a mismatch.