March 17, 2023 edit: a quick note on how to use a negative embedding. Download the file and put it into your embeddings folder. Since I use the A1111 WebUI, that is stable-diffusion-webui/embeddings. If you use a different program, check its documentation for where to put embeddings.

After you have put the file in the appropriate folder, use the embedding by entering its name into the "negative prompt" box instead of the normal prompt box. The name, by default, will be either bad-picture-chill-75v, bad-picture-chill-32v, or bad-picture-chill-1v, depending on which one you downloaded. If you rename the file, the activation word changes to the new file name. So if you renamed "bad-picture-chill-75v.pt" to "pizza-is-great.pt", the activation phrase changes to "pizza-is-great".
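As a rough illustration of the renaming rule above: the activation phrase is simply the file name without its extension. The helper name `activation_phrase` is made up for this sketch and is not part of A1111.

```python
from pathlib import Path


def activation_phrase(embedding_file: str) -> str:
    """Return the phrase to type into the negative prompt box.

    Hypothetical helper: it only mirrors the rule described above,
    i.e. the file name with the extension dropped.
    """
    return Path(embedding_file).stem


# Default name, as downloaded
print(activation_phrase("stable-diffusion-webui/embeddings/bad-picture-chill-75v.pt"))
# After renaming the file
print(activation_phrase("stable-diffusion-webui/embeddings/pizza-is-great.pt"))
```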

End of edit.

This is a "bad prompt" style embedding: it is trained to be used in the negative prompt. It is made specifically for ChilloutMix (version chilloutmix_NiPrunedFp32Fix.safetensors [fc2511737a]), so it may or may not work well on a non-ChilloutMix model. The prompts used for the pictures were mostly taken from the comment section on the ChilloutMix page.

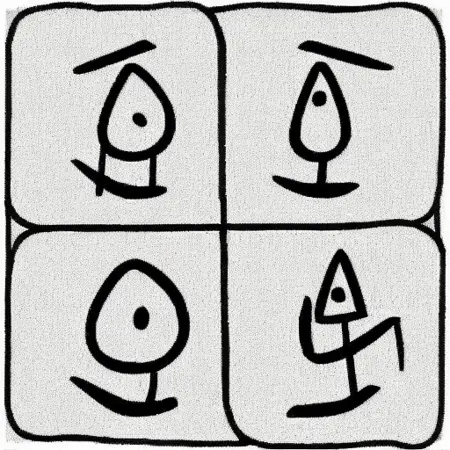

It comes in 1 vector, 32 vector, and 75 vector versions. The higher you go, the more detail you will be able to squeeze out, but the closer you will get to deep-frying your image. Check out the comparison images that I uploaded as part of the 75 vector version. You'll probably have to right-click and "open image in new tab" to properly zoom in.

The "Training Images" download for each version is a .zip that has all the saved .pt versions that were generated during the training. Look there if you want a version that has more or fewer steps. If you want the actual training images, click on the "Actual Training Images" version, which should be below the 1V version of the embedding.

Preview images: all preview images were generated at 512x512 with highres enabled @ a factor of 2 and 0.75 denoising, resulting in a final image of 1024x1024. Model: chilloutmix_NiPrunedFp32Fix.safetensors [fc2511737a]

Sometimes the original prompt or original negative prompt will include a LoRA or embedding. Here are links to them. Note: these are not used in the single image previews, only in the original prompts that I borrowed and put into the stacked comparison images.

Japanese Dolllikeness lora (robot lady image)

easynegative embed (armored lady) (impossibly tight black bodysuit lady)

Description

This contains all 5000 training images that I generated and used to train all versions of the embedding. Download this if you want to train the embedding using your own training parameters. The preview images contain the image generation parameters. Pictures were selected to give an idea of what the entire image set contains.


Comments (31)

Very useful concept. In Chilloutmix and its derivatives I've almost always seen necks that are too thin and elongated on the female figures, so I hope this embedding will fix that problem.

With this negative, photos come out A LOT more realistic (like the best from all models).

However, SD also has a harder time following my prompts oO

What kind of prompts are you having difficulty with?

@SeekerOfTheThicc Like it ignores clothes and poses much more easily...

Wow, true, I hate limited poses in realism models so use mixed/half-anime ones, and was looking for something to make them look more realistic. This does it!

Any chance of solving the problem of the thin and elongated neck that's present in Chillout and its derivatives?

Tried (long neck) in the negative prompt? In Automatic1111, save the seed and re-render the same pic while changing the trait's weight. For example, start with (long neck:1.1) and then go to 1.2, 1.3, 1.4. I noticed 1.5 usually forces whatever you want, but in a cursed way.
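The sweep described above can be written out as a list of negative prompt entries to try one at a time on a fixed seed. This is only a sketch of the string format; `weight_sweep` is a made-up helper name, and the rendering itself still happens in the WebUI.

```python
def weight_sweep(term: str, weights: list[float]) -> list[str]:
    """Build A1111-style weighted prompt entries such as "(long neck:1.1)"."""
    return [f"({term}:{weight:.1f})" for weight in weights]


# Paste each entry into the negative prompt in turn, keeping the seed fixed.
for entry in weight_sweep("long neck", [1.1, 1.2, 1.3, 1.4, 1.5]):
    print(entry)
```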

Thanks. But I was counting on a new release of Chillout without this problem or at least a good dedicated embedding.

I'm not sure if an embedding can solve the problem. If there is any prompt combination that results in fixed necks, that can be turned into an embedding. I think the problem has to be finetuned out of the model, possibly with a negative finetune. However, finetuning isn't something I've been able to get good at yet, so hopefully someone else with an interest can look into it.

@SeekerOfTheThicc I agree, the best solution may come from a new release. We'll see.

Can you guys give me example pictures of the long neck problem, and some example pictures where there is no long neck problem? From any model, doesn't matter. Pictures where it might not be obvious at a glance if the neck is too long or not.

How do you use this, folks? Where do I put it and how do I trigger it?

how to use it?

You need to put the file you download into your embeddings folder, and then use the trigger word (the file name without the .pt) in the negative prompt.

@SeekerOfTheThicc ok,thank you

Put it in the sd-webui-aki\sd-webui-aki-v4.1\embeddings folder, then type the file's name into the negative prompt in SD and you're done!

this one works better than easynegative, bad_prompt_version2, and ng_deepnegative_v1_75t

which version

I'm always thankful 😀😀

The training data you uploaded is not training data, but model step outputs.

The main post already points this out and informs people that the actual training images are under the version labeled "Actual Training Images".

It seems to have less of a tendency than other negatives to bias towards Asian facial features

even if majority of results here are asian skull

will this one affect hands, or picture quality only?

Hey, I'm wondering why in your examples 75v + negative prompt is closer to 32v than to 75v.

Could it be that you're overloading it with tokens and getting diminishing returns from the TI?

Can you think of a way to test this?

Where are the captions for the images? Also why are there so many images at all? I thought embeddings were supposed to be only a few images

I apologize for taking so long to reply to your comment. I had assumed that CivitAI would inform me any time a comment was made on one of my creations, but that may not be the case.

There are no captions for the images, as the caption used during training was from a template. I cannot recall what was in the template, but it was either "a picture of" or a list that was based off of the ones used in the original SD TI paper.

As for why I used 5000 images: I wanted the embedding to "average out" from the training images. If I put in too few images, then the embedding would take on the characteristics of a random sample and would be characteristic of that sample instead of the "true" characteristics it should have. An extreme example would be if you used only 1 or 2 images: the training program would have a difficult time learning what you are trying to teach. The more images you present, the more certain you can be that the final product is representative of the whole rather than of the few. This thinking does have a limitation: I do not actually know what is too few or too many. I chose 5000 images because early TI tutorials would suggest training an embedding to 5000 steps. I figured that if I had a unique image for every step, it would provide an optimal outcome. It could be that the ideal is 500 images or 50000 or some other number. Methodically testing such things is difficult due to the amount of subjectivity involved.
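The "average out" intuition can be illustrated with a toy simulation. This is only an analogy, not a model of how textual inversion training behaves: it draws random numbers instead of images and measures how much the average of a sample wobbles from one draw to the next. The function name and the Gaussian stand-in data are assumptions made for the sketch.

```python
import random
import statistics


def spread_of_sample_means(sample_size: int, trials: int = 50, seed: int = 0) -> float:
    """Standard deviation of the sample mean across repeated random draws.

    Each "image" is stood in for by one number drawn from a standard normal
    distribution. A smaller result means the average is more stable.
    """
    rng = random.Random(seed)
    means = [
        statistics.fmean(rng.gauss(0.0, 1.0) for _ in range(sample_size))
        for _ in range(trials)
    ]
    return statistics.stdev(means)


# Compare a tiny set, a typical small set, and a 5000-item set.
for n in (2, 50, 5000):
    print(n, spread_of_sample_means(n))
```

In this toy setting the spread shrinks as the sample grows, which matches the reasoning above, but it says nothing about where the practical sweet spot for real training images lies.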

The reason why using significantly fewer images is a good idea when training a normal embedding, and other things such as LoRAs, is, I think, the amount of extraneous concepts that end up getting included the more pictures you add. The more concepts you introduce, the more "good" pictures you need to include in order to properly teach each concept as well, so that it can be adequately differentiated from the other concepts. What is also majorly problematic is that we (people) tend to not realize how many new concepts we might be introducing with the images that we are using, and don't tag the images accordingly or discard problematic images. This information is severely underrepresented in tutorials. The furthest the author will usually go is to say what I have said already: you need "good" images and "good" tagging.

Anyways, I trained the embedding the way I did as a way to sidestep needing to both find and tag images. Sometimes it works, sometimes it doesn't.

Same as my approach. I use Topaz Photo AI to denoise, but I use 512x768.

side note, but can someone tell me where I can find and download <lora:ER4ZQV5:1>

How can I feed it into the diffuser API like https://huggingface.co/docs/diffusers/v0.17.1/en/api/pipelines/stable_diffusion/text2img?
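A minimal sketch of one way this might be done with diffusers, assuming a version that provides `load_textual_inversion` on `StableDiffusionPipeline` and a copy of the model in diffusers format (the .safetensors checkpoint would need converting first). The function names, `model_path`, and `embedding_path` are placeholders for this sketch, and it has not been checked against this particular embedding.

```python
def build_negative_prompt(token: str, extra_terms: list[str]) -> str:
    """Join the embedding's token with any other negative prompt terms."""
    return ", ".join([token, *extra_terms])


def generate(model_path: str, embedding_path: str, prompt: str,
             token: str = "bad-picture-chill-75v"):
    """Load a pipeline, register the embedding, and render one image.

    Assumptions: model_path points to a diffusers-format model, and
    embedding_path points to the downloaded .pt file.
    """
    # Imported here so the helper above works without diffusers installed.
    from diffusers import StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(model_path)
    # Register the embedding under the token used in the negative prompt.
    pipe.load_textual_inversion(embedding_path, token=token)
    result = pipe(prompt=prompt,
                  negative_prompt=build_negative_prompt(token, []))
    return result.images[0]


print(build_negative_prompt("bad-picture-chill-75v", ["long neck"]))
```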

Thank you for taking the time to learn and create content like this for everyone. You're a great creator and I love your work. Thank you so much!

What are the Actual Training Images for?

I trained the negative embedding using the actual training images. They are there in case someone wants to use them to train a negative embedding using their own settings.