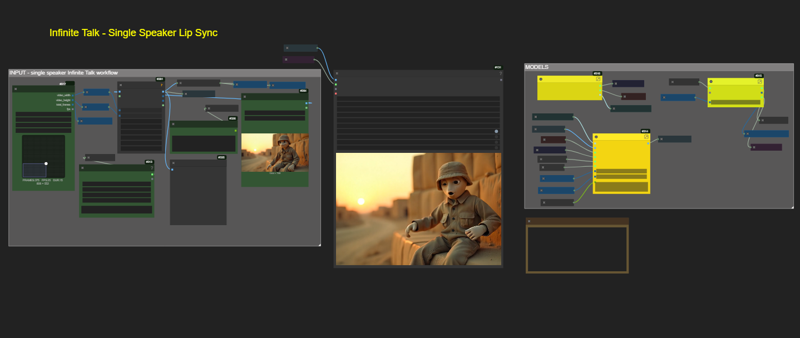

Switched over to InfiniteTalk model

Please note: this version uses subgraphs, so update your UI if necessary.

No spaghetti nightmare, unlike some workflows.

Description

Two Person Talking

Comments (9)

I have an RTX 3090 and 64 GB of RAM. I set block swap to 40 and still get OOM. I am not sure whether this is normal or something is wrong with the workflow.

No, that's the exact same setup I have, except I am using 10 for block swap. What resolution are you trying?

@trashkollector175 The resolution is 480x832

@trashkollector175 How long should it take to generate 4 seconds from a 480x832 image in your case? For me, it ran for about an hour before I got OOM. I re-installed WanVideoWrapper with git as you said and also used the Wan GGUF model instead of fp8, but still no luck.

I tried it again with the Wan GGUF model. This time it didn't OOM, but it took about an hour for each step. I think it has something to do with block swap, as it swaps 14 GB of my VRAM every time, no matter what number I set for "blocks_to_swap". Here is the screenshot: https://ibb.co/wZ6s6NbT

It took 8 minutes for me to do a 14-second video at 768x384.

I used 20 for my block swap in this case.

It seems like it's taking quite a long time for you, but I don't understand why.

Block swap memory summary:

Transformer blocks on cpu: 8856.39MB

Transformer blocks on cuda:0: 8856.39MB

Total memory used by transformer blocks: 17712.77MB

Non-blocking memory transfer: True

Sampling 81 frames at 768x384 with 5 steps

Max allocated memory: max_memory=14.384 GB

Max reserved memory: max_reserved=16.500 GB

0%| | 0/5 [08:08<?, ?it/s]

Prompt executed in 496.43 seconds
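For anyone comparing their own numbers, here is a rough sketch of how the CPU/GPU split in the log above relates to the block swap setting. It assumes the transformer has 40 equally sized blocks, which the log does not state; it is only consistent with the even split seen at a block swap of 20. `estimate_block_split` is a made-up helper name, not part of WanVideoWrapper.

```python
# Total taken from the "Block swap memory summary" log above.
TOTAL_BLOCK_MEMORY_MB = 17712.77

# Assumption: 40 transformer blocks of equal size (not stated in the log).
ASSUMED_NUM_BLOCKS = 40


def estimate_block_split(blocks_to_swap,
                         total_mb=TOTAL_BLOCK_MEMORY_MB,
                         num_blocks=ASSUMED_NUM_BLOCKS):
    """Return (cpu_mb, gpu_mb) estimated for a given number of swapped blocks."""
    if not 0 <= blocks_to_swap <= num_blocks:
        raise ValueError(f"blocks_to_swap must be between 0 and {num_blocks}")
    per_block_mb = total_mb / num_blocks
    cpu_mb = per_block_mb * blocks_to_swap  # blocks offloaded to system RAM
    gpu_mb = total_mb - cpu_mb              # blocks that stay in VRAM
    return cpu_mb, gpu_mb


if __name__ == "__main__":
    for n in (10, 20, 40):
        cpu_mb, gpu_mb = estimate_block_split(n)
        print(f"blocks_to_swap={n}: ~{cpu_mb:.2f} MB on cpu, ~{gpu_mb:.2f} MB on cuda:0")
```

This only covers the transformer blocks. Activations, the text encoder, and the VAE also need VRAM, so treat the GPU figure as a lower bound rather than a prediction of peak usage.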

I forgot to ask, are you using sageattn?

@trashkollector175 I'm back to report: I MADE IT! I removed all the flags from run_nvidia_gpu.bat (--highvram, --disable-smart-memory, --fast) and ran it again, and it worked. It took around 5 minutes to generate 81 frames at 768x448 with 6 steps. Maybe the --disable-smart-memory flag was causing the problem.

@eakkawat Oh wow, nice job figuring that out.
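If you want to try the same fix without editing the file by hand, here is a minimal sketch that strips those flags from a launch command. The example command line is an assumption about what a run_nvidia_gpu.bat might contain, not a copy of anyone's actual file, and `strip_flags` is a hypothetical helper. The flag set includes both the hyphenated and unhyphenated spelling of the high-VRAM flag, and the sketch assumes each flag is passed bare, with no value after it.

```python
# Flags reported as removed in the thread above; both spellings of the
# high-VRAM flag are listed so either one is caught.
FLAGS_TO_REMOVE = {"--highvram", "--high-vram", "--disable-smart-memory", "--fast"}


def strip_flags(command_line, flags=FLAGS_TO_REMOVE):
    """Return the command line with the given bare flags removed."""
    tokens = command_line.split()
    kept = [token for token in tokens if token not in flags]
    return " ".join(kept)


if __name__ == "__main__":
    # Hypothetical launch line, for illustration only.
    example = (r".\python_embeded\python.exe -s ComfyUI\main.py "
               r"--windows-standalone-build --highvram --disable-smart-memory --fast")
    print(strip_flags(example))
```

Keep a backup of the original .bat file so you can add the flags back one at a time and find out which one actually causes the slowdown.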