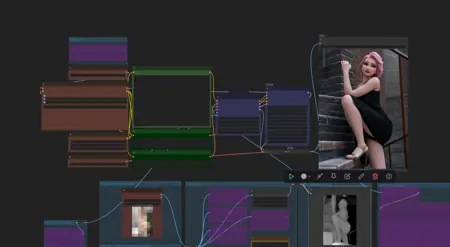

This workflow lets you use ControlNet with Wan2.1 for text-to-image (T2I), just like we used to back in the SD1.5 and SDXL days. It's easy to install and use.

Sure, you could use reference images and videos for reference-to-video (R2V) generation, but that's a real pain for me to run, so it's not my focus.

If you haven't heard of or tried Wan2.1 VACE yet, now's the time to jump on it and give this workflow a shot.

It's based on a workflow template from ComfyUI (so sweet of them!), which I tweaked to fit my preferences and make it easier to use day to day.

Checkpoint (goes in /models/diffusion_models):

https://huggingface.co/QuantStack/Wan2.1_14B_VACE-GGUF/tree/main

StepDistill LoRA:

https://huggingface.co/lightx2v/Wan2.1-I2V-14B-480P-StepDistill-CfgDistill-Lightx2v/tree/main/loras
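Each file has to land in the right ComfyUI models subfolder. Here is a minimal sketch, assuming a standard install rooted at a folder named ComfyUI. It turns a file you picked from one of the repos above into a direct-download URL and a destination path. The URL uses Hugging Face's /resolve/main/ pattern; the /tree/main links above are browse pages. The loras folder for the LoRA is my assumption based on ComfyUI's default layout, and the file names in the usage lines are hypothetical placeholders. Pick the actual quant and LoRA file from each repo's file list.

```python
from pathlib import PurePosixPath

HF_BASE = "https://huggingface.co"

# kind -> (Hugging Face repo id, target folder under ComfyUI/models).
# "diffusion_models" comes from the post; "loras" is assumed from ComfyUI's default layout.
DOWNLOADS = {
    "checkpoint": ("QuantStack/Wan2.1_14B_VACE-GGUF", "diffusion_models"),
    "stepdistill_lora": (
        "lightx2v/Wan2.1-I2V-14B-480P-StepDistill-CfgDistill-Lightx2v",
        "loras",
    ),
}


def resolve_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Direct-download URL for one file (the /tree/ links are browse pages)."""
    return f"{HF_BASE}/{repo_id}/resolve/{revision}/{filename}"


def plan_download(kind: str, filename: str, comfy_root: str = "ComfyUI") -> tuple[str, str]:
    """Return (url, local destination) for a file of the given kind."""
    repo_id, folder = DOWNLOADS[kind]
    url = resolve_url(repo_id, filename)
    # Save under the bare file name, even if it sits in a repo subfolder like loras/.
    dest = PurePosixPath(comfy_root, "models", folder, PurePosixPath(filename).name)
    return url, str(dest)


if __name__ == "__main__":
    # Hypothetical file names: replace them with the files you chose in each repo.
    for kind, name in [("checkpoint", "example-quant.gguf"),
                       ("stepdistill_lora", "loras/example-lora.safetensors")]:
        url, dest = plan_download(kind, name)
        print(f"{url}\n  -> {dest}")
```

You can paste the printed URL into a browser or any downloader and save the file to the printed path.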

Comments

We should all learn to write our prompts in Chinese.

I guess writing the prompt in English and then using an LLM to rewrite it in native Chinese would work too.

AI doesn't think in any one language (that ain't how it works), and Chinese labs train hardcore on equal amounts of English and the main Chinese languages!

Yeah man, I tried the same prompt in English and in Chinese, and the Chinese one got about 2x better prompt understanding.

Wow, this is amazing! One thing: if I want to train a T2I LoRA for Wan 2.1 VACE, mostly anime or even a style if possible, how can I do that? If you know, please write a post about it.

The KSamplerWithNAG node is utterly broken when it comes to memory management; it causes the worst memory problems I've ever seen in a workflow.

Use a normal KSampler, and if you still want NAG, use the WanVideoNAG node instead.

🎬 Tutorial: Using KSamplerWithNAG for WanVaceToVideo in ComfyUI

KSamplerWithNAG is a powerful sampler that improves temporal coherence in video generation. It's especially useful with WanVaceToVideo for making smooth, flicker-free animations.

🔧 Key Parameters

Nag_scale

Controls how strongly NAG pushes for temporal consistency.

Higher = smoother video, but can reduce detail or creativity.

Nag_tau

Adjusts how far in time the sampler "looks" for consistency.

Lower = sharper motion, higher = smoother blending across frames.

Nag_alpha

Balances spatial vs. temporal attention.

Lower = crisper images; higher = more motion smoothing (but may cause ghosting).

✅ Recommended Settings by Goal

1. General Purpose (Balanced)

Nag_scale: 5.0

Nag_tau: 2.5

Nag_alpha: 0.25

2. Sharper Video (Less Blur, More Detail)

Nag_scale: 5.5

Nag_tau: 2.2

Nag_alpha: 0.20

3. Dreamy & Smooth (Cinematic or Fantasy Look)

Nag_scale: 6.0

Nag_tau: 3.2

Nag_alpha: 0.35

4. Tight Facial or Lip Sync Coherence

Nag_scale: 6.5

Nag_tau: 3.8

Nag_alpha: 0.30
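All four presets adjust the same three values, so they fit naturally in a small lookup table. Here is a minimal Python sketch. The field names assume the node's inputs are called nag_scale, nag_tau and nag_alpha, as the labels above suggest. The goal keys are made up for illustration.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class NagPreset:
    nag_scale: float
    nag_tau: float
    nag_alpha: float


# Values from the four presets above; the dictionary keys are hypothetical labels.
NAG_PRESETS = {
    "general": NagPreset(5.0, 2.5, 0.25),
    "sharper": NagPreset(5.5, 2.2, 0.20),
    "dreamy": NagPreset(6.0, 3.2, 0.35),
    "face_lip_sync": NagPreset(6.5, 3.8, 0.30),
}


def nag_inputs(goal: str) -> dict[str, float]:
    """NAG values for a goal, falling back to the balanced preset for unknown goals."""
    return asdict(NAG_PRESETS.get(goal, NAG_PRESETS["general"]))
```

For example, `nag_inputs("dreamy")` returns the Dreamy & Smooth values, ready to copy into the sampler node.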

🧠 How It Works in Practice

Higher scale smooths out flickers between frames, but can limit variety.

Lower tau makes motion pop more clearly, ideal for action shots.

Alpha tuning helps you find the balance between detail and motion flow.
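For reference, the NAG (Normalized Attention Guidance) paper defines these three parameters on attention features, not on frames. nag_scale extrapolates the positive-prompt attention output away from the negative-prompt one. nag_tau caps how much larger the result's norm may grow relative to the positive output. nag_alpha blends the result back with the positive output. The sketch below is my reading of the reference code, using plain lists for a single token vector. It assumes ComfyUI-NAG follows that reference code and is illustrative only, not the node's actual implementation.

```python
def l1_norm(v: list[float]) -> float:
    """Sum of absolute values, the norm used here to compare feature sizes."""
    return sum(abs(x) for x in v)


def nag_update(z_pos: list[float], z_neg: list[float],
               nag_scale: float = 5.0, nag_tau: float = 2.5,
               nag_alpha: float = 0.25) -> list[float]:
    """Sketch of one NAG step on a single token's attention output."""
    # 1. Extrapolate away from the negative-prompt features (nag_scale = 1 is a no-op).
    guided = [nag_scale * p - (nag_scale - 1.0) * n for p, n in zip(z_pos, z_neg)]
    # 2. Normalize: if the guided vector grew more than nag_tau times larger
    #    than the positive one, scale it back down to exactly nag_tau times.
    pos_norm = l1_norm(z_pos)
    if pos_norm > 0.0:
        ratio = l1_norm(guided) / pos_norm
        if ratio > nag_tau:
            guided = [g * nag_tau / ratio for g in guided]
    # 3. Blend: nag_alpha of the guided result, the rest from the positive features.
    return [nag_alpha * g + (1.0 - nag_alpha) * p for g, p in zip(guided, z_pos)]


if __name__ == "__main__":
    # The defaults match the "General Purpose" preset.
    print(nag_update([1.0, 0.0], [0.0, 1.0]))
```

When the positive and negative features are identical, the update returns the positive features unchanged. That is why NAG only changes the output where the negative prompt actually differs.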

🛠 Tips for Best Results

Use it with UnetLoaderGGUFDisTorchMultiGPU or another NAG-compatible UNet loader.

Keep resolution moderate (e.g., 512×896 or 480×832).

Use PromptSchedule or KeyframePrompt to evolve prompts over time.

Consider batch testing multiple values to see what fits your prompt/style best.
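For that kind of batch test, a small grid around one preset is usually enough. The sketch below steps each parameter one notch down and one notch up from the General Purpose values. The step sizes are hypothetical and only illustrate the idea. Keep the seed and prompt fixed across runs so the NAG values are the only thing that changes.

```python
from itertools import product


def nag_grid(base_scale: float = 5.0, base_tau: float = 2.5, base_alpha: float = 0.25,
             scale_step: float = 0.5, tau_step: float = 0.3,
             alpha_step: float = 0.05) -> list[tuple[float, float, float]]:
    """All (nag_scale, nag_tau, nag_alpha) combos one step below, at, and above the base."""
    offsets = (-1, 0, 1)
    return [
        (round(base_scale + ds * scale_step, 2),
         round(base_tau + dt * tau_step, 2),
         round(base_alpha + da * alpha_step, 2))
        for ds, dt, da in product(offsets, repeat=3)
    ]


if __name__ == "__main__":
    for scale, tau, alpha in nag_grid():
        print(f"nag_scale={scale} nag_tau={tau} nag_alpha={alpha}")
```

This produces 27 combinations, which is a lot of video renders. Trying them on a single frame or a short clip first keeps the test manageable.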

-

Sampler_Name: Uni_PC, or Uni_PC_BH2.

Scheduler: Normal.

CFG: 1.1

Shift: 1.50, or 5.0 if it is a long video.

Steps: 7.
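The same settings can be collected in one place. Here is a minimal sketch that groups them by the node they belong to. Three parts of it are assumptions. First, shift is assumed to be set on a ModelSamplingSD3 node, as in ComfyUI's Wan templates. Second, the 81-frame cutoff for a "long video" is a hypothetical threshold, since the comment doesn't define one. Third, uni_pc and uni_pc_bh2 are assumed to be ComfyUI's internal names for the two samplers.

```python
# Hypothetical cutoff: clips longer than 81 frames (a common Wan clip length) count as "long".
LONG_VIDEO_FRAMES = 81
ALLOWED_SAMPLERS = ("uni_pc", "uni_pc_bh2")


def sampler_settings(num_frames: int, sampler_name: str = "uni_pc") -> dict:
    """Widget values from the settings above, grouped by the node they belong to."""
    if sampler_name not in ALLOWED_SAMPLERS:
        raise ValueError(f"expected one of {ALLOWED_SAMPLERS}, got {sampler_name!r}")
    shift = 5.0 if num_frames > LONG_VIDEO_FRAMES else 1.5
    return {
        "KSampler": {
            "sampler_name": sampler_name,
            "scheduler": "normal",
            "cfg": 1.1,
            "steps": 7,
        },
        # Assumed to be set on a ModelSamplingSD3 node, as in ComfyUI's Wan templates.
        "ModelSamplingSD3": {"shift": shift},
    }
```

For a single T2I frame, `sampler_settings(1)` keeps shift at 1.5. A longer clip such as `sampler_settings(161)` switches it to 5.0.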

Best preprocessors (AIO Aux Preprocessor):

Depth Anything V2 - Relative.

CannyEdgePreprocessor.