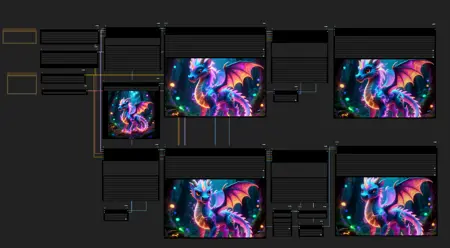

Packed up some nodes to generate longer videos with Wan 2.2 in 81-frame batches. Low-VRAM friendly and overheating friendly lol.

To get the most out of this, change the seed and prompt at each subgraph. Treat it a bit like seed traveling.
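
A rough sketch of the "new seed and prompt per subgraph" idea in plain Python, outside ComfyUI. `Segment`, `plan_segments`, and `total_frames` are hypothetical names, not nodes from this workflow, and the single shared frame between consecutive segments is an assumption about how the clips are chained.

```python
from dataclasses import dataclass

FRAMES_PER_SEGMENT = 81  # batch size mentioned in the post


@dataclass
class Segment:
    index: int
    seed: int
    prompt: str
    frames: int = FRAMES_PER_SEGMENT


def plan_segments(prompts, base_seed=0, seed_step=1):
    """Give every segment its own prompt and its own seed (hypothetical helper)."""
    return [
        Segment(index=i, seed=base_seed + i * seed_step, prompt=p)
        for i, p in enumerate(prompts)
    ]


def total_frames(segments, shared_frames=1):
    """Frame count of the joined video.

    Assumption: each later segment starts on the previous segment's last
    frame, so `shared_frames` frames per join are duplicates.
    """
    if not segments:
        return 0
    return sum(s.frames for s in segments) - shared_frames * (len(segments) - 1)


plan = plan_segments(
    ["she walks to the door", "she opens the door", "she steps outside"],
    base_seed=100,
    seed_step=7,
)
for seg in plan:
    print(seg.index, seg.seed, seg.prompt)
print("frames in final video:", total_frames(plan))
```

Stepping the seed by a fixed amount is only one way to vary it; picking a fresh random seed per segment works just as well for this purpose.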

Description

MMAudio added.

Comments (25)

Broke it when I renamed ports, one moment.

Fixed

Thanks for this! Works well. Could you possibly make a version that can also do T2V with LoRA (no input image)? Thanks! This is very cool.

Yeah, I can do a T2V version. It's going to be a minute while I finish this one, adding MMAudio etc. lol.

@TheGeekyGhost Thanks!

For people concerned with speed: adding Sage Attention and FP16 Accumulation nodes between the checkpoint loader and the subgraphs speeds up this workflow by 25%.

Tried adding my own LoRA stack to load additional character and motion LoRAs, but failed to get coherent results. The 1st video is good, but videos 2 through 6 get progressively blurrier and everything just drifts apart. Any tips on fixing that would be appreciated.

Additional LoRAs in the mix will require tweaking. Once I finish I can take a look at adding some additional LoRAs.

I'll see if I can reduce or fix the degradation by having it restore the start image for each subgraph before running it.

New version should fix quality issues, but due to MMAudio I had to cut some nodes.

@TheGeekyGhost This guy figured it out, at the end of the video. Seamless transitions, no degradation. The solution is super confusing, but the workflow is free. If only we could extract the solution into a much simpler workflow without the loops. We're soooooo close to solving it.

@mmrabati2154 Thanks for this link. From what I understand, it re-uses the last 5 frames from the previous video to maintain consistency, among other things (using VACE + InP + Pusa LoRAs). I downloaded that guy's workflow and managed to fix the missing nodes. Downloaded the required LoRAs. Now downloading the huge-ass .gguf models, they're 18.7 GB each (and the non-GGUFs are like double that, so I'll pass on those). I hope it runs on my 12 GB. As it is, there is no node for loading a character LoRA; I'll have to address this later.
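
To make the "re-use the last 5 frames" idea concrete, here is a small sketch in plain Python. It is not taken from that workflow: `tail_context` and `stitch` are hypothetical helpers, frames are stand-in values rather than images, and it assumes each new segment begins with the carried-over frames so they have to be dropped when joining.

```python
def tail_context(frames, overlap=5):
    """Return the last `overlap` frames, to seed the next segment."""
    return list(frames[-overlap:]) if overlap > 0 else []


def stitch(segments, overlap=5):
    """Join segments, skipping the carried-over frames at the start of
    every segment after the first so nothing is shown twice."""
    out = []
    for i, seg in enumerate(segments):
        out.extend(seg if i == 0 else seg[overlap:])
    return out


# Stand-in "frames": plain integers instead of images.
first = list(range(10))
# Pretend the generator was conditioned on the previous tail and then
# produced six new frames after it.
second = tail_context(first) + list(range(10, 16))

video = stitch([first, second])
print(len(video), video)
```

The overlap length is a trade-off: more carried-over frames give the next segment more motion context, but fewer new frames per batch.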

@randomchatter1234776 If you get node validation errors disable the autoCastPatch in ComfyUI_ezXY

@randomchatter1234776 How's it going?

The first long I2V workflow that worked out of the box for me, simple and effective! I don't recommend going beyond 4 iterations, as consistency falls off dramatically.

Try the new one, I added color match, some quality preservation, and MMAudio.

How easy is it to alter to use GGUF models?

Shouldn't be hard at all, just swap out some loaders and the models. They're in the same subgraphs, so if you change things in the model subgraphs it will already be set for all the other subgraphs. No need to reconnect everything, just swap them out inside the subgraph.

I stopped doing long clip generations because the quality degrades very fast and the color shift is incredibly bad. If this gets fixed in Wan, it will be great.

I've added color correction and some other things to prevent this in the new version with MMAudio.
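
For anyone curious what a color match step can look like, here is a minimal sketch in plain Python. It is an assumption, not the method the node in this workflow uses: it shifts each RGB channel of a drifted frame so its mean and standard deviation match a reference frame (for example the original start image). `channel_stats` and `match_color` are hypothetical names, and pixels are (r, g, b) tuples in the 0..1 range.

```python
from statistics import fmean, pstdev


def channel_stats(pixels):
    """Per-channel (mean, std) for a list of (r, g, b) pixels."""
    return [(fmean(c), pstdev(c)) for c in zip(*pixels)]


def match_color(pixels, reference):
    """Rescale each channel of `pixels` to the mean/std of `reference`."""
    src = channel_stats(pixels)
    ref = channel_stats(reference)
    out = []
    for px in pixels:
        new_px = []
        for value, (s_mean, s_std), (r_mean, r_std) in zip(px, src, ref):
            # A flat channel has no spread to rescale, so only shift it.
            scale = r_std / s_std if s_std > 0 else 1.0
            corrected = (value - s_mean) * scale + r_mean
            new_px.append(min(1.0, max(0.0, corrected)))  # clamp to 0..1
        out.append(tuple(new_px))
    return out


reference = [(0.2, 0.4, 0.6), (0.4, 0.6, 0.8)]  # e.g. the start image
drifted = [(0.3, 0.3, 0.3), (0.7, 0.7, 0.7)]    # a washed-out later frame
print(match_color(drifted, reference))
```

Matching every later frame to the same reference, rather than to the previous segment, keeps small shifts from piling up across segments.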

I think they fix some of these issues in 2.5. Can't wait till it comes out.

@randomchatter1234776 I think new Wan models since 2.5 onward will not fit in consumer GPU VRAM for hobby local generation unless using very low quants, but we will see.

hi Geeky Ghost,

Thanks. This is simple and fully functional.

SDKtertiaire2

My low-spec machine (Ryzen 5 2600, RTX 3060 Ti 8 GB, 16 GB RAM) handles this workflow quite well. The image-to-video process for a 7-second 480p clip takes around 700+ seconds, which isn't too bad. I use it for making fun little videos. However, the final group of nodes connected to the audio is not working. I'm not sure what the cause is. Overall, it's a good workflow.

This works great. I replaced the FP8 model with the WAN 2.2 Enhanced NSFW | Camera Prompt Adherence (Lightning Edition) I2V Q6 model and removed the LoRAs. The quality is excellent for continuous generation, and it runs very fast on my 4070 Ti with Sage Attention. The workflow is easy to understand, compact, and aesthetically pleasing.

The MMAudio part doesn't start for me though; the generation stops at the third subgraph. Tried to fix it, but I ended up using another workflow for MMAudio instead.

Thanks, man.

Works and runs really fast for me! But there seems to be a major flaw, for me at least: after the first 5-10 seconds of the video, it becomes extremely CGI/animated and looks nothing like the realistic initial image I load.

Not sure why?? I am using the Wan 2.2 14B FP8 model. None of the other long-video workflows do this.