v1.3:

Added a prompt enhancer that uses the input image as a reference (LLM-based, using an abliterated Gemma 3 model).

It works for both SFW and NSFW content.

Your own prompt can be very basic and short.

You can feed its output back in and describe what you want changed, for example to include trigger words.

If needed, its custom instruction prompt can be adjusted in each "Enhancer" node.

If you don't want to use it, you need to bypass its group manually in each part.

Global LoRAs (SVI, lightx2v) only need to be loaded once.

The aspect ratio of the input image is automatically applied to the video.

You only need to adjust the desired resolution tier in the CustomResolution node.
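The CustomResolution node's exact formula isn't documented here, so the following is only a minimal Python sketch of how carrying over the input image's aspect ratio could work. It assumes, hypothetically, that a resolution tier means the target length of the short side in pixels (the real meaning of tiers such as "k20" may differ), and it rounds both sides to multiples of 8, the same rounding the node applies to manual inputs. `round_to_multiple` and `output_size` are illustrative names, not the node's API.

```python
from typing import Tuple


def round_to_multiple(value: float, multiple: int = 8) -> int:
    """Round to the nearest multiple of `multiple` (never below `multiple`)."""
    return max(multiple, int(round(value / multiple)) * multiple)


def output_size(img_width: int, img_height: int, tier: int) -> Tuple[int, int]:
    """Scale the input image size to a resolution tier, keeping its aspect ratio.

    Hypothetical assumption: `tier` is the target short-side length in pixels.
    Portrait (9:16) inputs stay portrait and landscape (16:9) stay landscape,
    because both sides are scaled by the same factor.
    """
    if img_width <= 0 or img_height <= 0 or tier <= 0:
        raise ValueError("image dimensions and tier must be positive")
    scale = tier / min(img_width, img_height)
    return round_to_multiple(img_width * scale), round_to_multiple(img_height * scale)


if __name__ == "__main__":
    print(output_size(1080, 1920, 480))  # portrait input
    print(output_size(1920, 1080, 480))  # landscape input
```

Because the ratio comes from the input image, only the tier has to be chosen by hand, which mirrors how the workflow asks you to adjust just the resolution tier.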

Troubleshooting for the prompt enhancer if you get errors such as "LTXVGemmaClipModelLoader No package metadata was found for bitsandbytes":

Update ComfyUI

Update all custom nodes

Open cmd in your ComfyUI portable folder and run:

python_embeded\python.exe -m pip install -U bitsandbytes

Or try: python_embeded\python.exe -m pip install -U bitsandbytes accelerate
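The quoted error text matches the message of Python's `importlib.metadata.PackageNotFoundError`, which suggests that bitsandbytes is missing from the interpreter ComfyUI actually runs. As a quick check, you could save the small script below (the filename `check_packages.py` is only an example) and run it with the same embedded `python.exe` used in the pip commands. It only reports installed versions; it does not install anything.

```python
import importlib.metadata

# Packages the prompt enhancer troubleshooting above refers to.
PACKAGES = ("bitsandbytes", "accelerate")


def installed_version(name):
    """Return the installed version string, or None if the package is missing."""
    try:
        return importlib.metadata.version(name)
    except importlib.metadata.PackageNotFoundError:
        # Same exception behind "No package metadata was found for ..."
        return None


def report(packages=PACKAGES):
    """Map each package name to its installed version (or None)."""
    return {name: installed_version(name) for name in packages}


if __name__ == "__main__":
    for name, version in report().items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```

If a package shows as NOT INSTALLED here but pip reports it as installed, pip was most likely run with a different Python than the embedded one.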

Troubleshooting/best practice tips:

The SVI-LoRA is mandatory in each part for the workflow to function (link in the workflow).

The SVI node does not seem to update via ComfyUI-Manager, so you'll need to install it manually via Git (link in the workflow).

For Sage Attention, this guide on YouTube helped me a lot: "How to Install Sage Attention 2.2 on Latest ComfyUI Portable And Deskop Version".

Reduce the output bitrate to about 10 if you're having trouble uploading to CivitAI.

Start with parts 2x, 3x, and 4x turned off and at a lower resolution (e.g. k20), then work up from there.

v1.2:

Workflow reworked to support SVI 2.0 Pro, which uses the starting image and latent video from the previous clip to:

Improve transitions

Enable cross-clip memory for faces and other attributes

Resolution now only needs to be set once, in part 1; it is carried over to all subsequent clips.

Added switches to quickly toggle between GGUF and diffusion/.safetensors models (e.g., SmoothMix). Deactivate LightX2v when using SmoothMix, as it is already embedded.

Note: SmoothMix changes faces and appearances quite a bit with this workflow compared to the GGUFs of base WAN.

Sage Attention and patched Torch are now part of the model inputs and can be bypassed globally.

Output folder structure updated: partial videos are now saved in the same folder as last-frame images.

Separate switches for the last-frame and all-frames image previews.

v1.1:

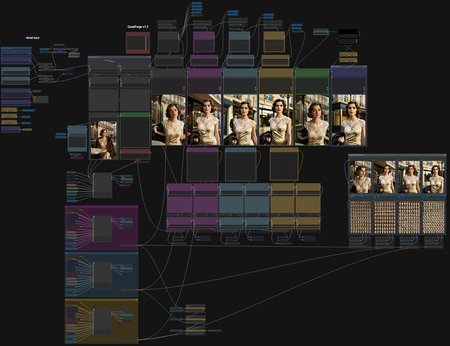

Major declutter: Removed most crossing lines using set/get, subgraphs, and anything-everywhere nodes.

Single model input propagated everywhere.

Color-coded sections + cleaner layout.

CustomResolution Node Update:

9:16 & 16:9 support with shared resolution tiers.

24 & 30 FPS support.

Auto-rounding to multiples of 8 for manual inputs.

https://github.com/AugustusLXIII/ComfyUI_CustomResolution_I2V/tree/main

v1.01:

Changes:

Added the missing save-last-frame node for scene 1.

Switches for image preview (reduces generation time) and interpolation added.

Update: My CustomResolutionI2V node is now available via ComfyUI-Manager. You might need to update ComfyUI-Manager if you can't find it.

QuadForge WAN 2.2 – ComfyUI Workflow

A powerful and flexible img2vid chaining workflow optimized for WAN 2.2 GGUF with the lightx2v-LoRA (6 steps).

Start with a single input reference image and generate up to four consecutive video segments. Each segment automatically uses the last frame of the previous clip as its starting image for perfect continuity. All segments are stitched together seamlessly, with final video interpolation (RIFE) applied as the last step for a smooth, high-quality output.
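The chaining described above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the workflow itself: `generate_segment` stands in for a whole WAN sampling pass and `fake_generate` is a hypothetical stub that returns placeholder strings instead of frames. The sketch covers only the last-frame hand-off described here; the SVI 2.0 Pro setup from v1.2, which also passes the previous clip's latent video, and the final RIFE interpolation are not modeled.

```python
from typing import Callable, List

Frame = str  # hypothetical placeholder for an image/frame tensor
SegmentGenerator = Callable[[Frame, str], List[Frame]]

MAX_SEGMENTS = 4  # the workflow supports 1 to 4 parts


def chain_segments(start_image: Frame, prompts: List[str],
                   generate_segment: SegmentGenerator) -> List[Frame]:
    """Generate one segment per prompt, seeding each with the previous last frame."""
    if not 1 <= len(prompts) <= MAX_SEGMENTS:
        raise ValueError(f"expected 1-{MAX_SEGMENTS} prompts, got {len(prompts)}")
    stitched: List[Frame] = []
    current_start = start_image
    for prompt in prompts:
        segment = generate_segment(current_start, prompt)
        if not segment:
            raise ValueError("generator returned an empty segment")
        stitched.extend(segment)     # naive stitching: plain concatenation
        current_start = segment[-1]  # last frame becomes the next start image
    return stitched


def fake_generate(start: Frame, prompt: str, n_frames: int = 3) -> List[Frame]:
    """Hypothetical stub: the first frame reproduces the start image."""
    return [start] + [f"{prompt}:{i}" for i in range(1, n_frames)]


if __name__ == "__main__":
    print(chain_segments("img0", ["walk", "turn"], fake_generate))
```

With plain concatenation, the boundary frame appears twice (once as the end of one part and once as the start of the next). Whether the workflow trims it during stitching isn't stated in this description.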

Key features:

Adjustable number of generations (1–4) via simple switches

Tip: Start with 2x, 3x, and 4x turned off and add them one at a time once you like the result of the previous part. For this to work, you have to keep the seeds, LoRAs, and prompts fixed. To save time, bypass the interpolation video node until you are happy with the final result.

Independent prompt and LoRA control for each segment

SageAttention enabled for approx. 40% speedup (bypass the two nodes in each step if not installed yet or if not supported by your GPU)

Automatic last-frame extraction and saving after every generation

Quick resolution and clip length adjustments using my own CustomResolution node (available via ComfyUI Manager: https://github.com/AugustusLXIII/ComfyUI_CustomResolution_I2V)

Fully automated stitching

RAM cleanup and model unload nodes for better stability

Ideal for creating long, consistent tracking shots, progressive scene reveals, or any multi-stage animated sequence from one starting image.

Notes:

Lots of spaghetti, but it works (will be polished in future updates)

Framerate output in the CustomResolution node is currently fixed to 16 fps (I will make that adjustable as well)

How-to manual: TBD


Comments

Quite the evolution of your original quadforge. Now to add Ollama goonsai prompt autogeneration!

Perfect workflow! In terms of stability, ease of use, and generation quality, this is by far the best one I've ever used.

(By the way, I spent an entire afternoon troubleshooting to get the right sageattention version for my setup, but it was totally worth it!)

Getting "group node missing", anyone else?

Node 1091: UUID 77440340-3a38-4bdf-85bd-e86771cc43a9

Node 1371: UUID f4ff6954-7d48-46cb-8a12-4f1be0e49862

Node 1414: UUID 2f3c6f77-e06d-4c0e-9bf1-6d6b0e4b67fc

One of the best workflows, but it's missing a prompt enhancer and perhaps MMAudio to make it complete.

Good idea to load the Lightning LoRAs with the Power Lora Loader (rgthree), but there is no need to load them again and again if you inject them at the beginning. Also, since it has a toggle, the switch is not needed. Maybe you could use it once without a switch, so you can also load the SVI LoRA only once at the beginning. Overall, the memory management of your workflow is still good. It took some time to figure out that the SVI LoRA is mandatory for this workflow, as I only got grey, rushed, foggy videos without it :)

Guys, what if the first frame of my video has to show my character from behind, turning to face the camera? How can I set his face before he turns?

Never saw V1, but I can tell how much work went into getting this fine product done. GJ! I noticed that each segment changes colors slightly. In my case, reds start turning magenta toward the end. Does this flow have a "Color Matching" node?

I don't know why, but I'm only getting a fully black output.

I didn't change anything, and I'm using all the right models.

Any idea?

Thank you for your great work!

Have you guys noticed that videos generated from SVI Pro have one pretty obvious flaw: the further you go toward the later clips, the video quality drops noticeably? The details of objects get way blurrier compared to the first clip, and in some more complex videos, the character's facial features even start getting messed up.

Does anyone know if there's any method to reduce or fix this issue? Please help me out!

I keep getting:

[GetNode] ✗ Variable 'Last Frame P2' not found! Available: (none) Tip: Make sure SetNode runs BEFORE GetNode in the graph.

Can someone help me fix it and explain to me like I'm 10? 😭