This is a QWEN Image Edit workflow designed to run large AIO QWEN checkpoints (≈28GB) while still generating high-resolution outputs on 12GB VRAM GPUs.

The focus here is:

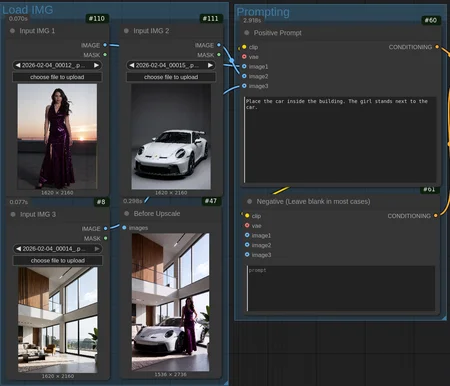

Image editing / guided edits

Very low step counts

Stable results at low CFG

Aggressive memory management

Clean upscale + post polish

If you’ve struggled getting QWEN AIO models to behave on smaller cards, this setup is built specifically to solve that.

Key Features

Runs QWEN AIO (GGUF or Safetensors) models on 12GB GPUs

Uses Phr00t's AIO: https://huggingface.co/Phr00t/Qwen-Image-Edit-Rapid-AIO (both NSFW and SFW versions available)

Tested at high sampling resolutions

Works with 1–3 input images for guided edits

Extremely low step counts (4–6 steps)

Stable at CFG = 1

Includes upscaling, resizing, CAS sharpening, and desaturation

Cleans GPU memory automatically after runs

LoRA-compatible (QWEN-trained LoRAs supported)

This workflow prioritizes practical generation, not theory — fast previews, predictable edits, and minimal VRAM spikes.

Required Nodes / Extensions

Make sure you have all of these installed:

Core

ComfyUI (recent version)

ComfyUI-GGUF

QWEN Image nodes

TextEncodeQwenImageEditPlus, ClipLoaderGGUF, UnetLoaderGGUF, VaeGGUF

Upscaling / Image

was-node-suite

KJNodes

ComfyUI Essentials

4x_foolhardy_Remacri.pth (upscale model)

Utility / Memory

easy-use

easy clearCacheAll, easy cleanGpuUsed

If something errors: double-check GGUF + QWEN nodes first — most issues come from mismatched versions.
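If you want a quick way to confirm the node packs are in place before loading the workflow, here is a minimal Python sketch. The folder names are assumptions based on the usual repository names for these packs and may differ on your install, and `missing_custom_nodes` is a hypothetical helper, not part of ComfyUI.

```python
from pathlib import Path

# Assumed folder names (the usual repository names); adjust to your install.
REQUIRED_NODE_DIRS = [
    "ComfyUI-GGUF",
    "was-node-suite-comfyui",
    "ComfyUI-KJNodes",
    "ComfyUI_essentials",
    "ComfyUI-Easy-Use",
]


def missing_custom_nodes(custom_nodes_dir, required=REQUIRED_NODE_DIRS):
    """Return the required folder names not found under custom_nodes_dir."""
    root = Path(custom_nodes_dir)
    if root.is_dir():
        # Compare case-insensitively, since folder casing varies between installs.
        installed = {p.name.lower() for p in root.iterdir() if p.is_dir()}
    else:
        installed = set()
    return [name for name in required if name.lower() not in installed]
```

Point it at your `custom_nodes` folder, for example `missing_custom_nodes("ComfyUI/custom_nodes")`. An empty list means every pack was found. It only checks that the folders exist, so it will not catch version mismatches.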

Recommended Sampler Settings

These are intentional — higher values usually make QWEN worse, not better.

Sampler: euler_ancestral

Scheduler: beta

Steps: 4–6

CFG: 1.0

Denoise: 1.0

Seed: random or fixed

If you’re coming from SDXL: do not raise CFG. QWEN responds very differently.
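For anyone driving ComfyUI through its API-format JSON, the settings above can be sketched as the inputs of a standard KSampler node. This is only an illustration: `ksampler_inputs` is a hypothetical helper, and the `model`, `positive`, `negative` and `latent_image` links are left out because they depend on your graph.

```python
import random

ALLOWED_STEPS = range(4, 7)  # 4 to 6 steps, the range recommended above


def ksampler_inputs(steps=4, seed=None):
    """Build the sampler-related inputs for a KSampler node (links omitted)."""
    if steps not in ALLOWED_STEPS:
        raise ValueError("this workflow is tuned for 4 to 6 steps")
    if seed is None:
        # Random seed when none is given; pass a fixed value to reproduce a result.
        seed = random.randint(0, 2**32 - 1)
    return {
        "seed": seed,
        "steps": steps,
        "cfg": 1.0,  # keep at 1.0, do not raise it as you would for SDXL
        "sampler_name": "euler_ancestral",
        "scheduler": "beta",
        "denoise": 1.0,
    }
```

Calling `ksampler_inputs(steps=6, seed=1234)` gives a fixed-seed configuration you can merge into the KSampler node's `inputs` alongside your own links.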

LoRA Notes

QWEN-trained style LoRAs do work

Load them via a model-only LoRA loader

Suggested strength range:

0.85 → 1.0

Avoid stacking multiple LoRAs unless you know what you’re doing (VRAM spikes fast)
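As a rough sketch of the notes above in API-format JSON, a single model-only LoRA node might look like this. It assumes ComfyUI's stock `LoraLoaderModelOnly` node; `lora_node` is a hypothetical helper, and the file name and node id in the usage note are placeholders.

```python
SUGGESTED_MIN, SUGGESTED_MAX = 0.85, 1.0  # suggested strength range from the notes above


def lora_node(model_link, lora_name, strength=1.0):
    """Build one LoraLoaderModelOnly node, rejecting strengths outside the suggested range."""
    if not SUGGESTED_MIN <= strength <= SUGGESTED_MAX:
        raise ValueError(
            f"strength {strength} is outside the suggested range "
            f"{SUGGESTED_MIN} to {SUGGESTED_MAX}"
        )
    return {
        "class_type": "LoraLoaderModelOnly",
        "inputs": {
            "model": model_link,  # [source_node_id, output_index] of the model loader
            "lora_name": lora_name,
            "strength_model": strength,
        },
    }
```

For example, `lora_node(["1", 0], "my_qwen_style.safetensors", 0.9)` wires the LoRA to output 0 of node "1". The range check is a guard for this workflow's suggestion, not a ComfyUI limit.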

VRAM & Stability Notes

Designed to keep peak VRAM under ~12GB

GGUF models strongly recommended for smaller GPUs

Cache clearing nodes are intentional — don’t remove them unless you have >24GB VRAM

If you OOM:

Reduce output resolution slightly

Close other GPU apps

Avoid second diffusion passes (QWEN doesn’t like them)
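"Reduce output resolution slightly" can be sketched as a small helper that shrinks both sides by about 10% and snaps them down to a multiple of 16. Both the 0.9 factor and the multiple of 16 are assumptions for illustration, not values taken from the workflow, and `reduce_resolution` is a hypothetical name.

```python
def reduce_resolution(width, height, factor=0.9, multiple=16):
    """Scale width and height down by factor, rounding down to a multiple."""

    def snap(value):
        scaled = int(value * factor)
        # Round down to the nearest multiple, but never below one multiple.
        return max(multiple, scaled // multiple * multiple)

    return snap(width), snap(height)


print(reduce_resolution(1024, 1024))  # (912, 912)
print(reduce_resolution(1536, 1024))  # (1376, 912)
```

If the run still fails after one reduction, apply it again to the new size rather than dropping straight to a much smaller size.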

Description

Base 1.0 workflow